Data center operators are accustomed to shifting hardware strategies, but few have seen a project vanish, and then potentially reemerge, as abruptly as NVIDIA’s Rubin CPX.

The Rubin CPX was designed to bridge the gap between training and inference: a single chip optimized for both. It promised lower latency, higher throughput, and better power efficiency than existing solutions. However, NVIDIA later quietly removed it from its roadmap, replacing it with Groq LPUs for pure inference workloads.

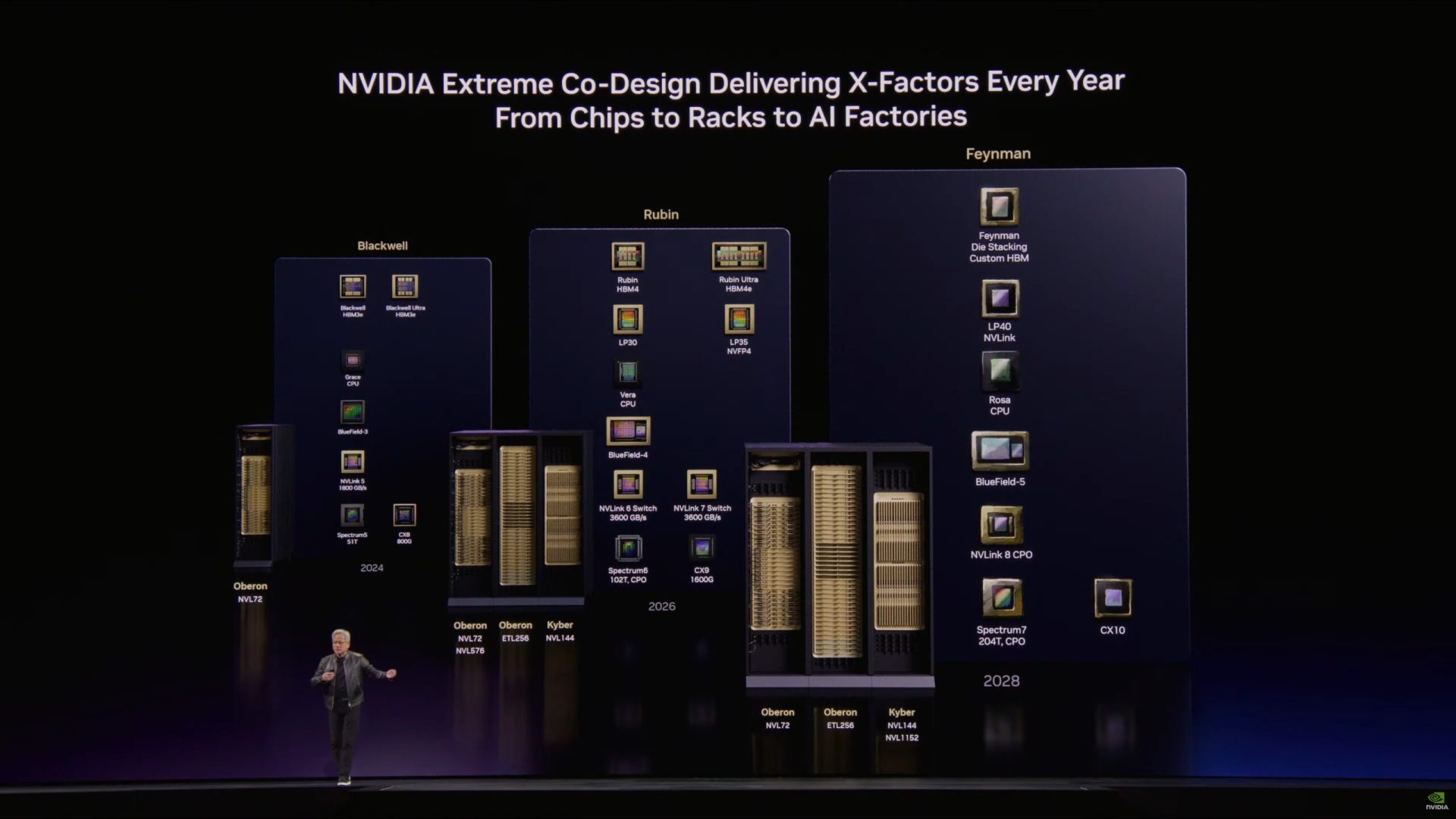

Now, industry insiders suggest that Rubin CPX may not be gone forever. Instead, it could return under a new name, Feynman, with a revised architecture aimed at 2028. If that happens, it would mark a significant pivot in NVIDIA’s strategy for AI infrastructure.

What Was the Rubin CPX?

The Rubin CPX was built around two core principles: efficiency and specialization. It targeted inference workloads with a unique architecture that avoided traditional GPU bottlenecks. Early benchmarks suggested it could deliver up to 20% better performance per watt than NVIDIA’s then-current H100 GPUs for certain AI tasks.

- Core Design: A hybrid accelerator combining tensor cores, CUDA cores, and a custom inference engine.

- Memory: 128 GB GDDR7, which NVIDIA described as a cost-efficient alternative to HBM.

- Power: Targeted at 300W TDP, lower than many competing solutions.

Why Did It Disappear?

The Rubin CPX vanished from NVIDIA’s roadmap for several reasons. First, Groq LPUs offered a more immediate solution for inference-only workloads, with their own claims of superior efficiency and lower latency. Second, NVIDIA’s focus shifted toward scaling up existing GPU architectures, such as the H100 and later the B100, to handle both training and inference.

There was also a strategic tradeoff: building an entirely new accelerator meant higher risk. If Rubin CPX couldn’t match the performance or efficiency of Groq LPUs, NVIDIA would lose ground in a critical market segment: AI inference. The company likely decided to hedge its bets by partnering with Groq instead.

What’s Next for Feynman?

If Rubin CPX is reborn as Feynman, it won’t be the same chip. Reports suggest NVIDIA will rethink its architecture, possibly integrating lessons learned from both the H100 and Groq LPU designs. The goal would be a chip that is more than just a faster GPU, one that redefines how data centers handle AI workloads.

But there are unknowns. Will Feynman focus solely on inference, or will it try to unify training and inference again? How will its performance compare to Groq LPUs or NVIDIA’s next-generation GPUs? And most importantly, when will we see it?

- What We Know: Rubin CPX was removed from the roadmap. Groq LPUs are now NVIDIA’s primary inference solution.

- What’s Uncertain: Whether Rubin CPX will return as Feynman, what its architecture would be, and when it might launch.

The AI infrastructure landscape is evolving rapidly. NVIDIA’s moves, whether with Groq LPUs or a future Feynman chip, will shape how data centers operate in the coming years. For now, the focus remains on efficiency, heat management, and performance. But if history repeats itself, Rubin CPX’s story may not be over yet.