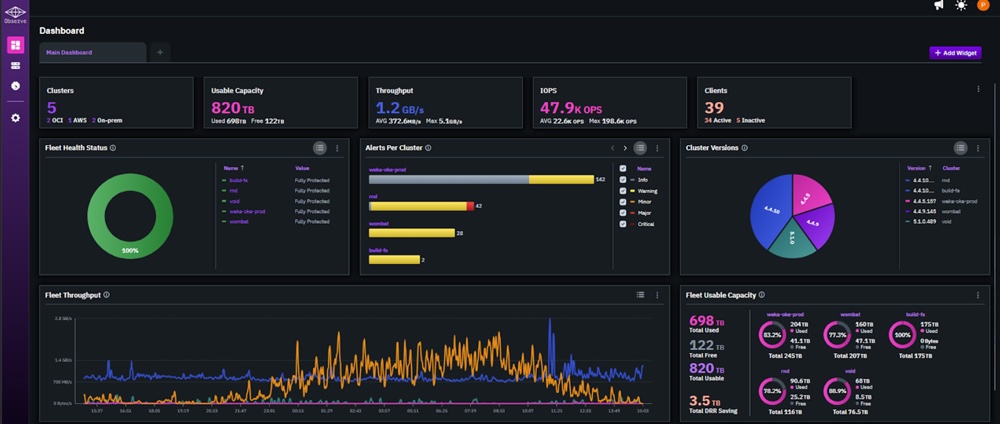

In the race to accelerate AI training across massive datasets, Weka has introduced NeuralMesh, a framework designed to streamline workflows that once required custom engineering. The promise is compelling: users can now deploy models on distributed systems without rewriting code for each hardware tier—whether CPU, GPU, or FPGA. Yet beneath this abstraction lies a critical tradeoff: the convenience of a single API comes with increased storage demands and potential performance penalties when data doesn’t fit in memory.

This shift reflects broader tensions in AI infrastructure. On one hand, the industry is pushing toward more unified software stacks to reduce operational complexity. On the other, hardware diversity—from NVIDIA’s GPU dominance to emerging FPGA accelerators—means that abstraction layers must balance flexibility with efficiency. NeuralMesh aims to sit at this intersection, but its success hinges on whether it can mitigate the storage overhead without sacrificing too much performance.

NeuralMesh is built around a mesh-based data placement system that dynamically distributes datasets across available hardware. It claims to eliminate the need for manual sharding or partitioning by automatically handling data locality. For users accustomed to frameworks like PyTorch or TensorFlow, this represents a significant simplification: no longer must they engineer solutions for memory constraints or hardware-specific optimizations. Instead, NeuralMesh abstracts these concerns away, presenting a unified interface regardless of whether the workload runs on a single GPU node or spans thousands of CPUs.

However, the framework does not come without caveats. Weka states that NeuralMesh introduces a 20% to 30% storage overhead due to its mesh-based data representation. This is a non-trivial cost in environments where storage capacity is already stretched thin—especially for enterprises dealing with petabyte-scale datasets. Additionally, while the framework supports distributed execution across CPUs, GPUs, and FPGAs (including Intel’s Arria 10 and Stratix 10), its performance characteristics remain untested under real-world conditions. Benchmarks published by Weka show speedups of up to 2x for certain workloads when compared to traditional distributed training setups, but these results are preliminary and lack third-party validation.

The storage overhead is the most immediate concern. Unlike traditional in-memory frameworks that assume data fits within available RAM, NeuralMesh’s mesh model appears to treat data as a persistent, distributed resource. This could lead to slower iteration times if the framework must repeatedly fetch or reconstruct data from disk—a potential bottleneck for iterative training loops common in deep learning. Weka has not yet disclosed whether future optimizations will address this, leaving users to wonder how much of the performance gain is offset by these hidden costs.

For now, NeuralMesh positions itself as a tool for organizations that prioritize flexibility over raw performance. It targets industries like healthcare and finance, where datasets are fragmented across regulatory boundaries or stored in heterogeneous formats. By abstracting away hardware differences, it allows teams to focus on model development rather than infrastructure tweaks. Yet the storage tradeoff suggests this is not a free lunch: users must weigh the convenience of a unified API against the long-term cost of managing larger data footprints.

The broader AI ecosystem may benefit from such frameworks, but they also risk becoming another layer in an already complex stack. As more vendors attempt to unify disparate hardware through software abstractions, the challenge will be ensuring that these layers do not become performance black boxes. NeuralMesh’s general availability marks a step toward that future, but its lasting impact remains uncertain—particularly if storage costs grow alongside its adoption.