AI inference workloads are hitting a memory wall. Demand for faster processing has outpaced memory capacity, leaving organizations scrambling for solutions that don’t require a complete infrastructure overhaul. Weka’s latest move, NeuralMesh paired with NVIDIA STX, promises to bridge that gap by optimizing how AI models consume and release memory during inference. But whether this is a game-changer or just another stopgap remains an open question.

At its core, the problem is straightforward: AI models are growing in size and complexity, but traditional systems struggle to keep up without significant hardware upgrades. NeuralMesh claims to address this by dynamically managing memory allocation, allowing larger models to run on existing infrastructure without proportional increases in cost or power consumption. That’s the upside—here’s the catch.

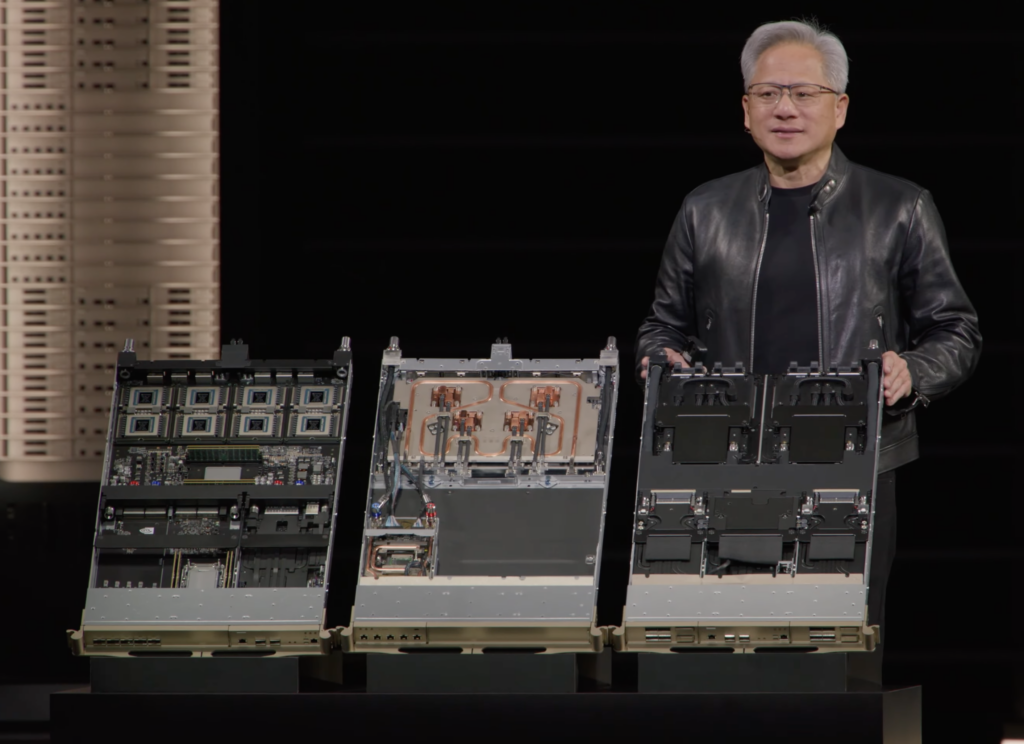

The integration works by leveraging NVIDIA STX for acceleration while Weka’s software layer handles the memory orchestration. Benchmark results suggest a 30% reduction in latency for certain workloads, along with up to 40% more efficient memory utilization compared to baseline configurations. However, these figures come with caveats. The improvements are model-dependent; some architectures benefit more than others. Additionally, the solution is not plug-and-play—it requires careful tuning and may not fit seamlessly into every AI pipeline.
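Because the reported gains are model-dependent, teams will likely want to check them against their own latency measurements rather than take the headline numbers at face value. The sketch below is one minimal way to do that in Python, assuming you have already collected per-request latencies (in milliseconds) for a baseline setup and a candidate setup. The function names `percent_reduction` and `compare_latency` are hypothetical helpers written for this illustration; they are not part of any Weka or NVIDIA API.

```python
import statistics


def percent_reduction(baseline: float, candidate: float) -> float:
    """Return how much smaller `candidate` is than `baseline`, in percent."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    # Multiply before dividing to limit floating-point rounding error.
    return (baseline - candidate) * 100.0 / baseline


def compare_latency(baseline_ms: list[float], candidate_ms: list[float]) -> dict[str, float]:
    """Compare median and 95th-percentile latency between two runs.

    Both inputs are lists of per-request latencies in milliseconds,
    gathered by whatever measurement harness you already use (assumption).
    """
    if len(baseline_ms) < 2 or len(candidate_ms) < 2:
        raise ValueError("need at least two samples per run")

    def p95(samples: list[float]) -> float:
        # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
        return statistics.quantiles(samples, n=20)[18]

    return {
        "median_reduction_pct": percent_reduction(
            statistics.median(baseline_ms), statistics.median(candidate_ms)
        ),
        "p95_reduction_pct": percent_reduction(p95(baseline_ms), p95(candidate_ms)),
    }


if __name__ == "__main__":
    # Illustrative numbers only, not measurements from any real system.
    baseline = [100.0, 104.0, 98.0, 120.0, 101.0, 99.0]
    candidate = [72.0, 70.0, 69.0, 95.0, 71.0, 73.0]
    print(compare_latency(baseline, candidate))
```

Reporting the 95th percentile alongside the median matters here: a configuration can improve typical latency while leaving tail latency unchanged, which is exactly the kind of model- and workload-dependent behavior the caveats above describe.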

For organizations evaluating upgrade paths, this raises a critical question: Is NeuralMesh a viable alternative to costly hardware expansions, or does it merely delay the inevitable? Early adopters report reduced operational costs in controlled environments, but scalability remains unproven. Open-source alternatives, including Hugging Face’s libraries and frameworks such as TensorFlow, have been refining their own memory-optimization techniques for years, with a transparency that Weka’s closed ecosystem lacks.

Looking ahead, the real test will be how NeuralMesh performs under mixed workloads—where inference tasks compete for resources in unpredictable ways. If it can maintain its efficiency without becoming a bottleneck itself, it could shift the balance in favor of software-driven optimizations over hardware upgrades. But if the gains prove too narrow or the implementation too rigid, it may end up as just another tool in an already crowded market.