The RAM crunch triggered by AI's exponential growth isn't just an immediate concern—it signals a fundamental rethinking of how computing infrastructure is designed. The traditional model of memory allocation, where RAM acted as a temporary data buffer, no longer suffices when models require terabytes of working space to operate efficiently.

This shift has sent shockwaves through the semiconductor industry. Chipmakers are scrambling to develop next-generation memory technologies that can handle AI's unique requirements, while data center operators face difficult choices about how to balance performance with cost. The result is a market where high-capacity RAM modules have become both scarce and prohibitively expensive for many applications.

At a glance

- AI workloads are consuming RAM at unprecedented rates, outstripping current production capacity

- Micron warns of sustained supply shortages affecting AI development timelines

- High-bandwidth memory (HBM, including variants such as HBM2e) offers temporary relief but faces its own scalability challenges

- Price volatility is expected to continue through 2025, with demand in some market segments growing 3-4x faster than supply

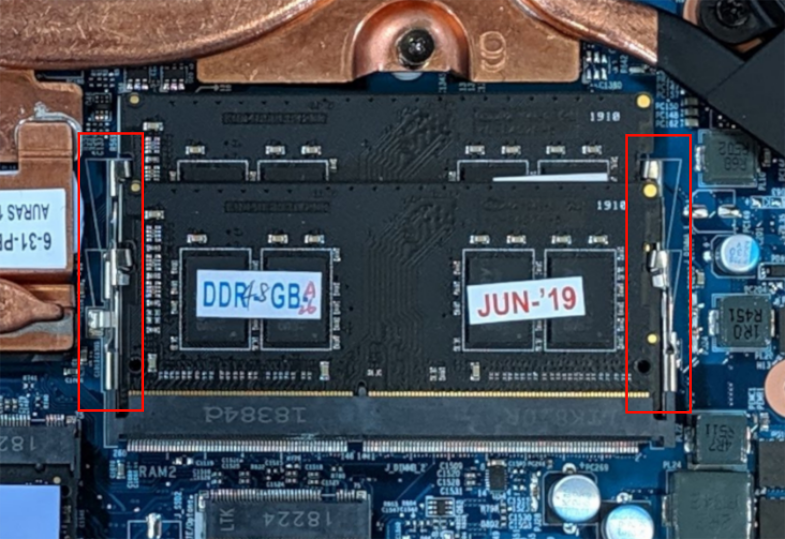

The situation is particularly acute in high-performance computing environments, where AI models need rapid access to massive datasets. Current DDR5 modules, while an improvement over previous generations, don't provide the bandwidth or capacity needed to train large language models or other complex neural networks on a single system. This has forced some developers to rely on workarounds such as model sharding across multiple systems, which adds complexity and reduces efficiency.
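To give a rough sense of why sharding becomes necessary, here is a back-of-the-envelope sketch in Python. It rests on assumptions that are not from the reporting above: a common rule of thumb of about 16 bytes of training state per parameter (mixed-precision training with an Adam-style optimizer, activations excluded), a hypothetical 70-billion-parameter model, and hypothetical devices with 80 GB of memory each, of which 80% is treated as usable. The function names are illustrative only.

```python
import math

GB = 10**9

# Assumption: ~16 bytes of training state per parameter
# (half-precision weights and gradients plus full-precision optimizer state).
# Activation memory is ignored, so real requirements are higher.
BYTES_PER_PARAM_TRAINING = 16


def training_state_bytes(num_params: int,
                         bytes_per_param: int = BYTES_PER_PARAM_TRAINING) -> int:
    """Estimate memory for weights, gradients, and optimizer state."""
    return num_params * bytes_per_param


def min_shards(total_bytes: int, device_bytes: int,
               usable_fraction: float = 0.8) -> int:
    """Smallest number of devices whose usable memory covers total_bytes."""
    usable = device_bytes * usable_fraction
    return math.ceil(total_bytes / usable)


# Hypothetical example: a 70B-parameter model on 80 GB devices.
params = 70 * 10**9
total = training_state_bytes(params)
print(f"Estimated training state: {total / GB:.0f} GB")
print(f"Minimum devices needed:  {min_shards(total, 80 * GB)}")
```

Under these assumptions the training state alone exceeds a terabyte, so the model cannot fit on any single device and must be split across many. The estimate is deliberately crude, and actual figures depend on precision, optimizer, and batch size.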

Impact

The economic ripple effects are already being felt throughout the industry. Cloud providers are passing on higher memory costs to enterprise customers, while hardware manufacturers face production bottlenecks that threaten to delay AI chip releases. Micron's latest capacity planning documents suggest this trend will continue unabated through at least 2025, with some market segments seeing demand grow three to four times faster than supply.

For end users, the implications are twofold: slower innovation cycles and increased operational costs. The AI arms race depends on having sufficient memory bandwidth to train models effectively, yet the current infrastructure can't keep pace. This creates a catch-22: growth in model size raises memory demand, and the resulting memory shortfall in turn limits training efficiency.

Wrap

The RAM crunch represents more than just a supply chain issue—it's a test of whether the semiconductor industry can evolve as quickly as AI itself. Success will require breakthroughs in memory technology that go beyond incremental improvements, potentially involving new materials or architectural approaches. The coming year will reveal whether chipmakers can rise to this challenge or if AI's growth will be constrained by hardware limitations rather than computational ambition.