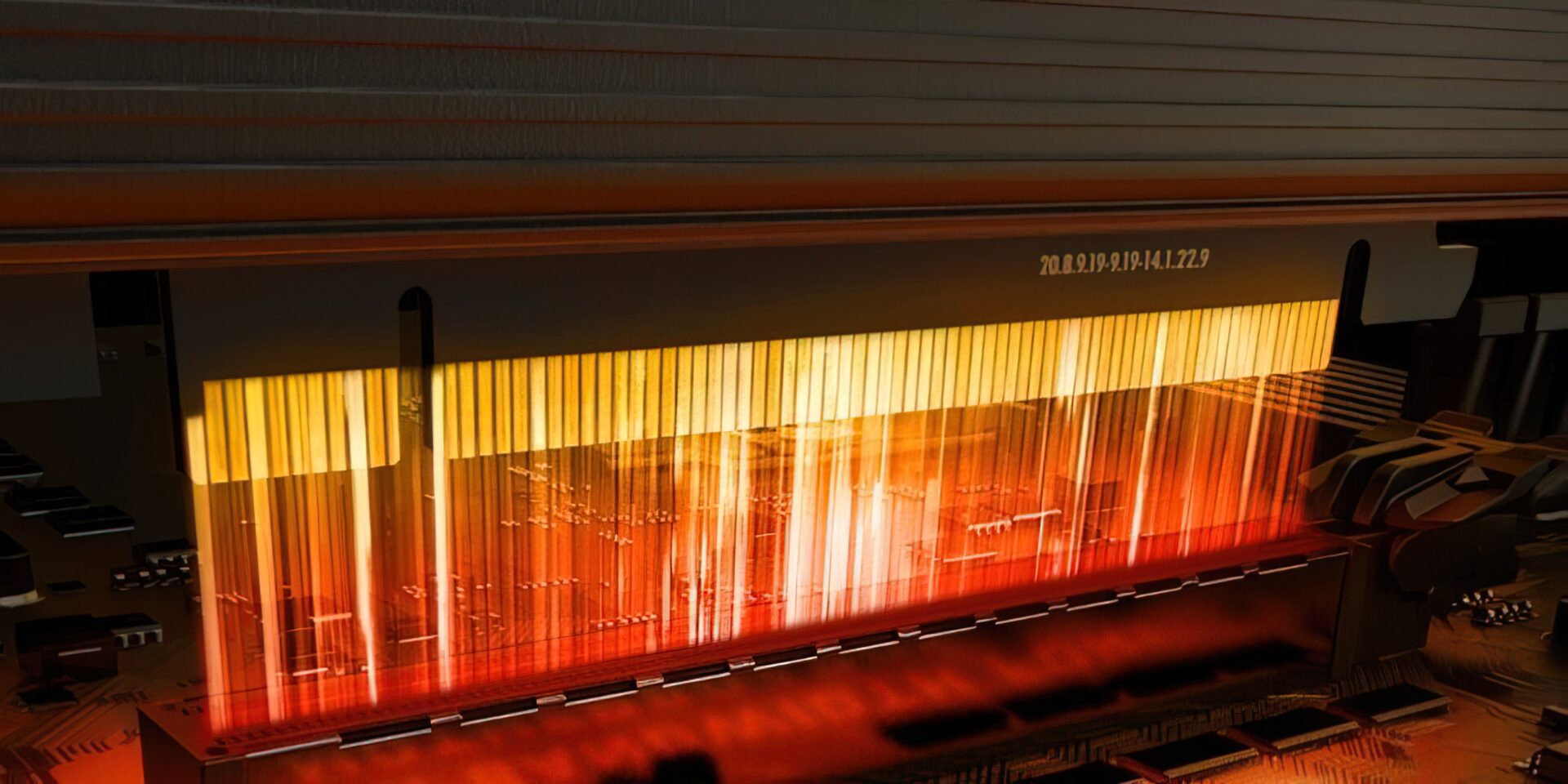

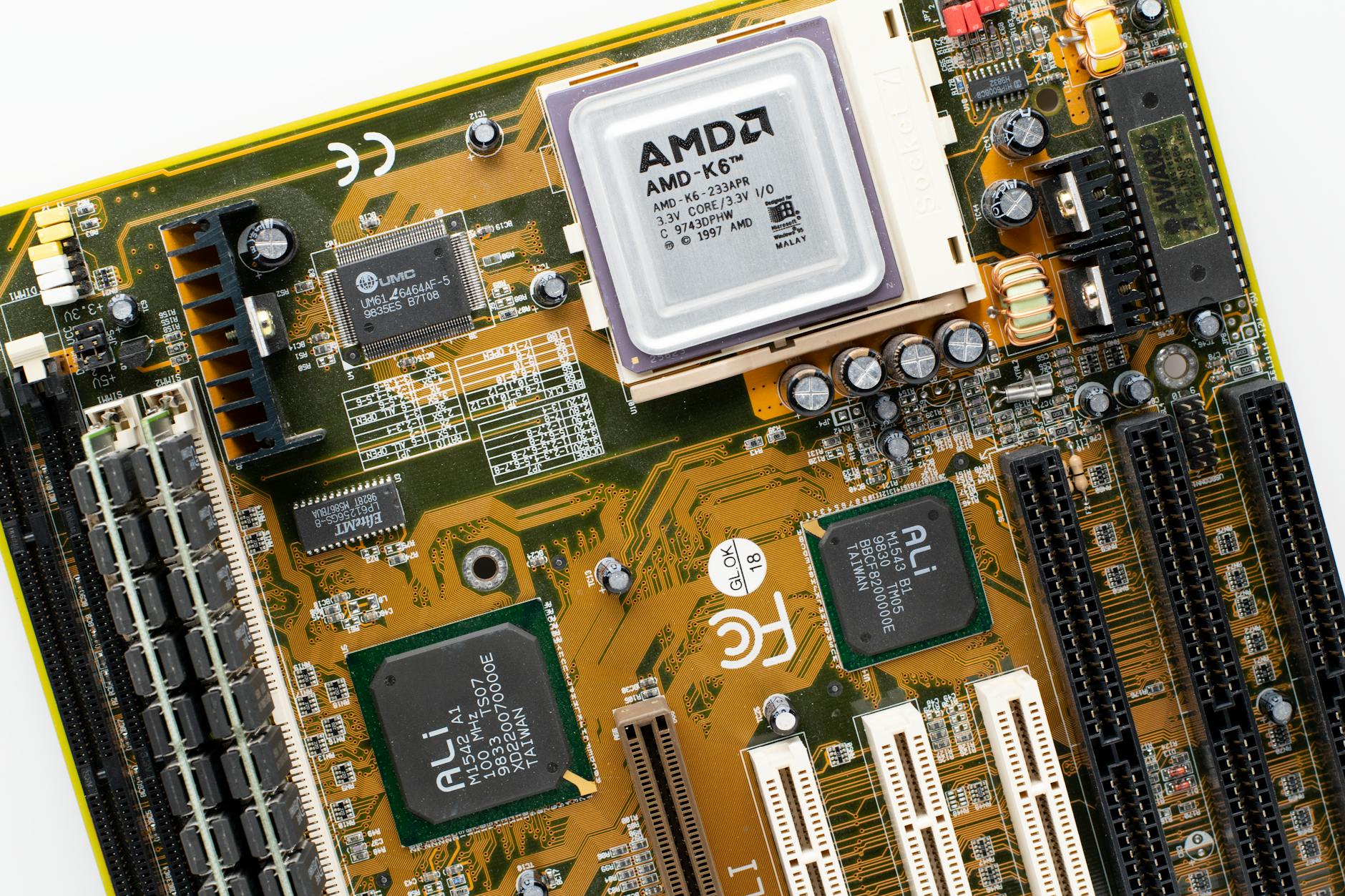

PCI Express 7.0 is on track for a major leap forward, but the industry’s appetite for faster data transfer, especially in AI, is already outpacing what current PCIe links can deliver. Rambus is stepping in with time division multiplexing (TDM) to bridge that gap, promising to double effective bandwidth without requiring new cabling or physical layer changes.

Traditional PCIe stripes data across a fixed set of serial lanes. TDM, as Rambus describes it, dynamically allocates bandwidth across those lanes, allowing for more efficient data movement. This shift could be critical as AI workloads demand sustained throughput well beyond what PCIe 5.0 can deliver at 32 GT/s per lane. Rambus claims the new architecture will support the 128 GT/s per-lane rate that PCIe 7.0 specifies while maintaining compatibility with existing infrastructure.

What Changes in PCIe 7.0?

The upcoming standard will introduce several key advancements, but TDM is the standout innovation for AI and high-performance computing. Key specifications include:

- Up to 128 GT/s signaling per lane (double PCIe 6.0’s 64 GT/s).

- Support for up to 512 GB/s of raw bidirectional bandwidth in an x16 slot.

- Time division multiplexing for dynamic lane allocation, optimizing data flow in real time.
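As a rough check on the 512 GB/s figure, raw link bandwidth can be worked out from the per-lane transfer rate. The sketch below assumes one bit per transfer per lane and an x16 slot, counts both directions, and ignores encoding and protocol overhead. The function name and the table are illustrative; the rates are the per-lane figures PCI-SIG has published for each generation.

```python
def raw_bandwidth_gbytes(rate_gt_s: float, lanes: int = 16,
                         bidirectional: bool = True) -> float:
    """Raw link bandwidth in GB/s, ignoring encoding and protocol overhead."""
    # Assumption: each transfer carries one bit per lane; 8 bits per byte.
    per_direction = rate_gt_s * lanes / 8
    return per_direction * 2 if bidirectional else per_direction


# Per-lane signaling rates in GT/s for recent PCIe generations.
RATES_GT_S = {"PCIe 4.0": 16, "PCIe 5.0": 32, "PCIe 6.0": 64, "PCIe 7.0": 128}

for generation, rate in RATES_GT_S.items():
    bandwidth = raw_bandwidth_gbytes(rate)
    print(f"{generation}: {rate} GT/s per lane -> {bandwidth:.0f} GB/s (x16, both directions)")
```

Under these assumptions, 128 GT/s per lane is the rate that yields 512 GB/s for an x16 slot, or 256 GB/s in each direction.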

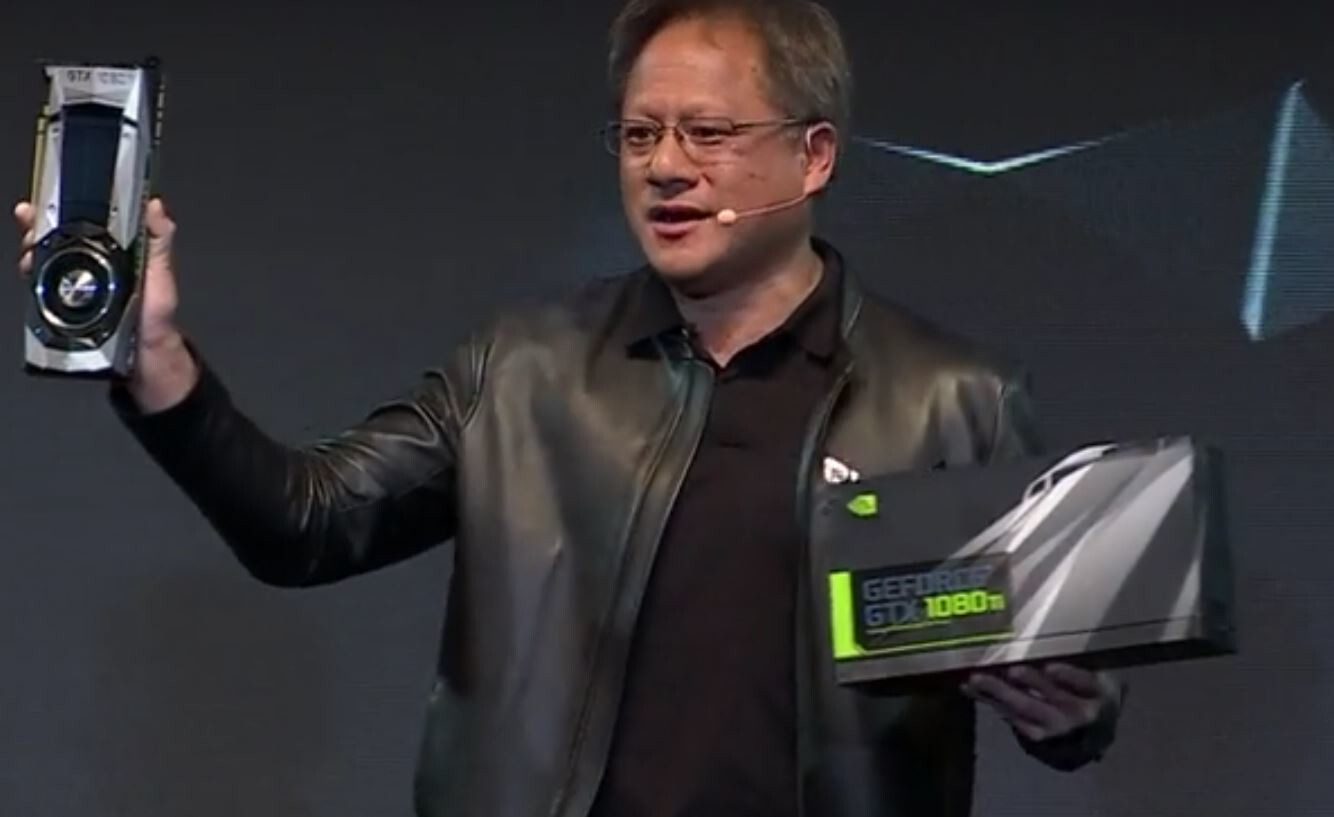

This isn’t just about raw speed; it’s about rethinking how data moves through systems. TDM allows for adaptive bandwidth distribution, which matters when AI workloads produce bursty, unpredictable data access patterns. Rambus argues this will make a significant difference in data centers, where GPUs often sit idle waiting for data to arrive.
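To make the idea of adaptive time-slot sharing concrete, here is a toy sketch of demand-driven TDM: a single link is divided into fixed time slots, and each slot goes to the next source that has data waiting, so idle sources do not consume slots. This is a generic illustration of the technique, not Rambus’s design; `schedule_frame`, the source names, and the payload labels are all hypothetical.

```python
from collections import deque


def schedule_frame(queues: dict[str, deque], slots: int) -> list:
    """Fill one frame of time slots, round-robin over sources with pending data.

    Returns a list of (source, payload) tuples, with None marking an idle slot.
    """
    order = list(queues)
    frame = []
    start = 0  # index of the source that gets first chance at the next slot
    for _ in range(slots):
        for step in range(len(order)):
            name = order[(start + step) % len(order)]
            if queues[name]:
                frame.append((name, queues[name].popleft()))
                # The next slot starts searching after the source just served.
                start = (start + step + 1) % len(order)
                break
        else:
            # No source had data waiting: the slot goes unused.
            frame.append(None)
    return frame


# Hypothetical bursty demand: one busy source, one light source, one idle.
pending = {
    "gpu0": deque(["a0", "a1", "a2"]),
    "gpu1": deque(["b0"]),
    "nic": deque(),
}
for slot, entry in enumerate(schedule_frame(pending, slots=5)):
    print(slot, entry)
```

With a fixed assignment, the idle source would still hold every third slot. Here the busy source picks up the slots the others leave empty, which is the kind of behavior the adaptive distribution described above is meant to deliver.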

Industry Reaction and Adoption

The proposal has sparked interest, particularly among AI hardware developers who see it as a way to avoid costly redesigns. Early feedback suggests that TDM could be adopted incrementally, starting with high-end servers before trickling down to consumer GPUs. However, widespread adoption hinges on whether the industry can standardize the approach without creating fragmentation.

Looking Ahead

PCIe 7.0’s timeline remains fluid, but Rambus’ push for TDM signals a shift toward more flexible, adaptive architectures. If successful, this could set a new benchmark for data transfer standards, particularly in AI where latency and bandwidth are no longer just nice-to-haves—they’re requirements. The challenge now is proving that TDM can deliver on its promises without complicating the ecosystem further.