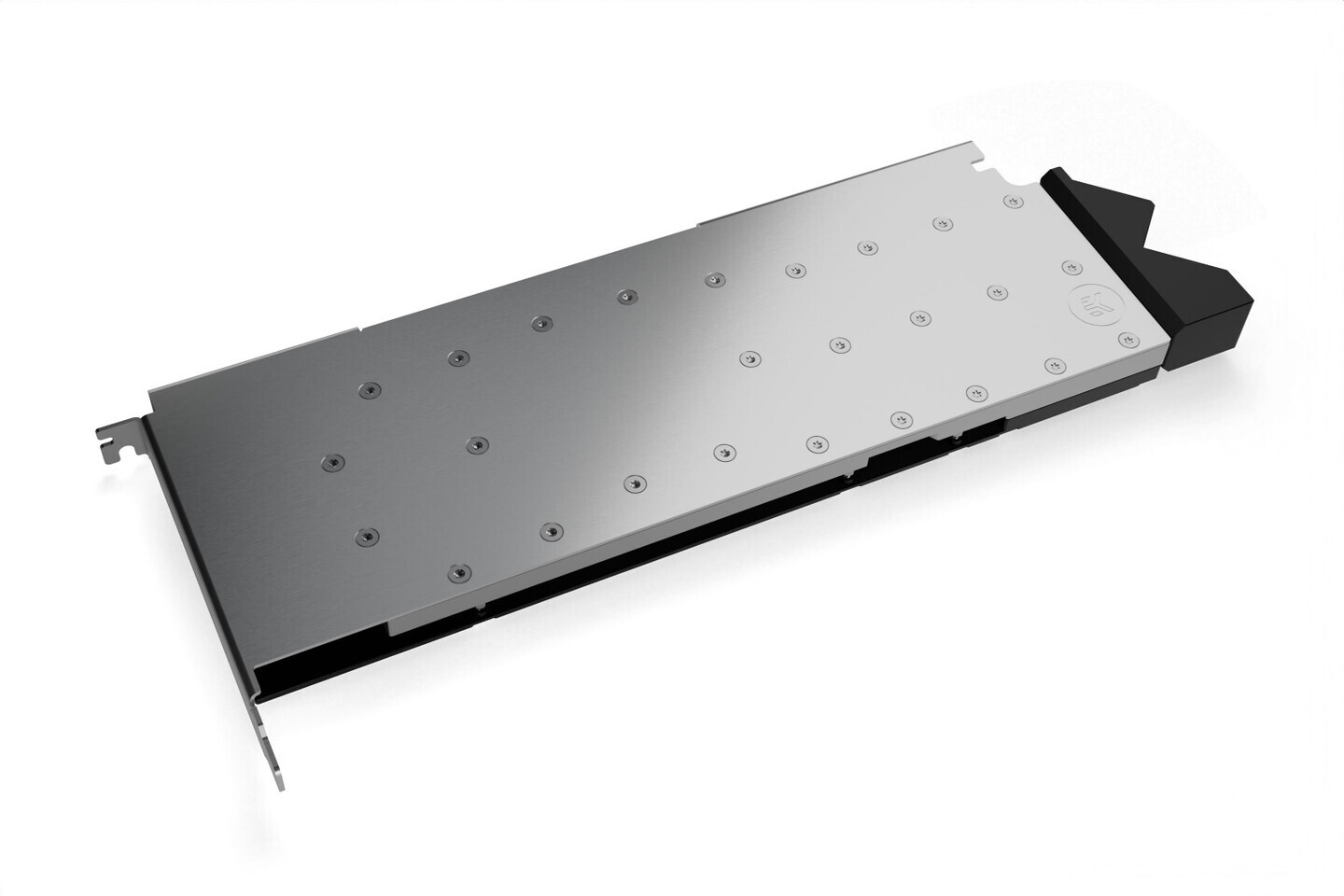

Data center efficiency depends on more than the hardware itself; it also depends on how that hardware is cooled. NVIDIA’s H200 NVL Tensor Core GPU now has a water block built with that in mind: the EK-Pro GPU WB H200 NVL, designed to save space without compromising thermal performance.

The block replaces traditional dual-slot air coolers with a single-slot design, freeing up precious PCIe slots for additional GPUs. This isn’t just about fitting more hardware; it’s about ensuring those GPUs remain stable under sustained AI workloads. The cooling system covers the entire PCB, including HBM memory and VRM, providing consistent thermal management across all components.
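To make the density argument concrete, here is a minimal sketch of the slot arithmetic. The chassis size (`SLOT_POSITIONS = 8`) and the function name `cards_per_chassis` are hypothetical, chosen only for illustration; real deployments are also limited by power, PCIe lanes, and loop capacity, which this ignores.

```python
def cards_per_chassis(slot_positions: int, slots_per_card: int) -> int:
    """Return how many cards fit when each card occupies
    `slots_per_card` adjacent expansion-slot positions."""
    if slot_positions < 0 or slots_per_card < 1:
        raise ValueError("slot_positions must be >= 0 and slots_per_card >= 1")
    return slot_positions // slots_per_card


# Hypothetical chassis with 8 usable slot positions (an assumption, not a spec).
SLOT_POSITIONS = 8

air_cooled = cards_per_chassis(SLOT_POSITIONS, slots_per_card=2)    # dual-slot air cooler
water_cooled = cards_per_chassis(SLOT_POSITIONS, slots_per_card=1)  # single-slot water block

print(f"Dual-slot cards:   {air_cooled}")
print(f"Single-slot cards: {water_cooled}")
```

Under these assumptions, halving the card width doubles the number of cards that physically fit in the same slot space.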

Key specifications:

- Single-slot form factor (replaces dual-slot air coolers)

- Dual-pass microfin stacks for uniform heat dissipation

- Nickel-plated copper base with stainless steel top

- 2 × G1/4 ports for scalable liquid-cooling setups

- Backplate retention system for structural rigidity

- Operating temperature: 5–60 °C; maximum pressure: 75,000 Pa (0.75 bar) in a standard loop
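The operating limits in the last item can be turned into a simple monitoring check. The sketch below is illustrative only: it assumes the 5–60 °C range is applied to coolant temperature and that pressure is read as gauge pressure in pascals. The function names are hypothetical and not part of any vendor tooling.

```python
PA_PER_BAR = 100_000.0
PA_PER_PSI = 6_894.757

# Limits taken from the specification list above.
MIN_TEMP_C = 5.0
MAX_TEMP_C = 60.0
MAX_PRESSURE_PA = 75_000.0


def pa_to_bar(pressure_pa: float) -> float:
    """Convert pascals to bar."""
    return pressure_pa / PA_PER_BAR


def pa_to_psi(pressure_pa: float) -> float:
    """Convert pascals to pounds per square inch."""
    return pressure_pa / PA_PER_PSI


def within_limits(coolant_temp_c: float, loop_pressure_pa: float) -> bool:
    """Return True if both readings fall inside the published limits."""
    temp_ok = MIN_TEMP_C <= coolant_temp_c <= MAX_TEMP_C
    pressure_ok = 0.0 <= loop_pressure_pa <= MAX_PRESSURE_PA
    return temp_ok and pressure_ok


print(f"Max pressure: {pa_to_bar(MAX_PRESSURE_PA):.2f} bar")
print(within_limits(coolant_temp_c=30.0, loop_pressure_pa=50_000.0))
print(within_limits(coolant_temp_c=65.0, loop_pressure_pa=50_000.0))
```

A check like this would typically sit in whatever telemetry pipeline already reads the loop sensors; the thresholds are the only part drawn from the specification.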

The dual-pass cooling engine is the core of the design. Coolant flows through two microfin stacks in sequence, which is intended to give even heat removal regardless of flow direction. This limits temperature variation across the card and keeps performance consistent under heavy loads, a critical requirement for AI training clusters.
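For a rough sense of what a loop has to carry away, the steady-state coolant temperature rise across a block follows from the energy balance ΔT = P / (ṁ · c_p). The sketch below assumes a pure-water coolant and uses a placeholder heat load and flow rate; neither value comes from this article, and real loops with glycol mixes will differ.

```python
WATER_DENSITY_KG_PER_L = 0.997       # approximate, near room temperature
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0


def coolant_temp_rise_k(heat_load_w: float, flow_l_per_min: float) -> float:
    """Steady-state coolant temperature rise (kelvin) across one block,
    assuming all heat goes into the coolant and the coolant is water."""
    if flow_l_per_min <= 0:
        raise ValueError("flow_l_per_min must be positive")
    mass_flow_kg_per_s = flow_l_per_min * WATER_DENSITY_KG_PER_L / 60.0
    return heat_load_w / (mass_flow_kg_per_s * WATER_SPECIFIC_HEAT_J_PER_KG_K)


# Placeholder inputs for illustration only (not figures from the article).
HEAT_LOAD_W = 600.0
FLOW_L_PER_MIN = 1.0

rise = coolant_temp_rise_k(HEAT_LOAD_W, FLOW_L_PER_MIN)
print(f"Coolant temperature rise: {rise:.1f} K")
```

Because the rise scales inversely with flow, doubling the flow rate halves it. That matters when several blocks share one loop, since each block receives coolant already warmed by the ones upstream.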

For data center operators, the single-slot I/O bracket is a practical advantage. It replaces the stock shield, reducing the GPU’s physical footprint while maintaining full NVLink compatibility. The included aluminum backplate adds structural rigidity and passively cools the VRM area, which matters for long-term reliability in 24/7 operation.

This upgrade isn’t limited to high-end workstations; it’s tailored for high-density server deployments where PCIe slot space is at a premium. The block’s low-profile terminal and pre-installed brass standoffs simplify installation, even in tightly packed racks, ensuring seamless integration without sacrificing performance.

Platform compatibility is the other practical benefit. Data centers can integrate the block into existing liquid-cooling infrastructure without redesigns. As a drop-in replacement for the stock cooler, it lets operators increase GPU density while maintaining stability and efficiency.