In the fast-evolving landscape of artificial intelligence and high-performance computing, NVIDIA has raised the memory bandwidth of its 'Vera Rubin' superchip, setting the stage for a direct challenge to AMD's upcoming Instinct MI400 accelerators. The change comes as both companies prepare to push the limits of compute performance, memory bandwidth, and scalability in data center environments.

The 'Vera Rubin' project, which initially targeted 13 TB/s of memory bandwidth, has been upgraded more than once since its announcement in March. The figure later rose to 20.5 TB/s, and the latest confirmation at CES 2026 places it at 22 TB/s. That is a notable lead over AMD's Instinct MI455X accelerator, which offers 19.6 TB/s of bandwidth, a gap of roughly 12%. The upgrade reflects NVIDIA's strategy to enhance its competitive edge by leveraging faster DRAM and more efficient interconnects between CPUs, GPUs, and system components.
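The bandwidth revisions above are easier to compare as relative changes. The following is a minimal sketch that uses only the TB/s figures quoted in this article; the constant and function names are illustrative, not taken from any vendor material.

```python
# Memory bandwidth figures quoted in the article, in TB/s.
RUBIN_INITIAL_TBPS = 13.0   # initial target
RUBIN_INTERIM_TBPS = 20.5   # intermediate revision
RUBIN_CES_TBPS = 22.0       # figure confirmed at CES 2026
MI455X_TBPS = 19.6          # AMD Instinct MI455X


def percent_change(old: float, new: float) -> float:
    """Relative change from `old` to `new`, as a percentage."""
    return (new - old) / old * 100.0


total_gain = percent_change(RUBIN_INITIAL_TBPS, RUBIN_CES_TBPS)
last_step = percent_change(RUBIN_INTERIM_TBPS, RUBIN_CES_TBPS)
lead_over_mi455x = percent_change(MI455X_TBPS, RUBIN_CES_TBPS)

print(f"Initial target to CES 2026: +{total_gain:.1f}%")
print(f"Interim figure to CES 2026: +{last_step:.1f}%")
print(f"Lead over MI455X:           +{lead_over_mi455x:.1f}%")
```

On these numbers, the CES 2026 figure is about 69% above the initial target, about 7% above the interim revision, and about 12% above the MI455X.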

AMD's response to this competition is equally aggressive. The Instinct MI400 lineup, which includes the MI455X for large-scale AI training and inference and the MI430X for HPC and AI applications, promises significant advances in compute performance and memory capacity. AMD claims that its new GPUs will deliver up to 40 PFLOPS of FP4 and 20 PFLOPS of FP8 compute, roughly doubling the compute performance of the current MI350 series. The transition also marks a shift from HBM3e to HBM4 memory, increasing capacity from 288 GB to 432 GB and raising total bandwidth from 8 TB/s to 19.6 TB/s per GPU. Each GPU also provides 300 GB/s of scale-out bandwidth, and the lineup expands support for AI data formats and AI pipelines.
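To put the generational jump in perspective, the sketch below computes the capacity and bandwidth ratios from the figures above. It also derives one illustrative metric that is not from AMD: the idealized time to read the entire memory once at peak bandwidth. Decimal units (1 TB = 1000 GB) are an assumption, and real workloads will not sustain peak bandwidth.

```python
# Per-GPU figures quoted in the article for AMD's current and upcoming parts.
MI350_CAPACITY_GB = 288        # HBM3e
MI350_BANDWIDTH_TBPS = 8.0
MI400_CAPACITY_GB = 432        # HBM4
MI400_BANDWIDTH_TBPS = 19.6

GB_PER_TB = 1000  # assumption: decimal units


def full_sweep_ms(capacity_gb: float, bandwidth_tbps: float) -> float:
    """Idealized time to read the whole memory once, in milliseconds."""
    seconds = capacity_gb / (bandwidth_tbps * GB_PER_TB)
    return seconds * 1000.0


capacity_ratio = MI400_CAPACITY_GB / MI350_CAPACITY_GB
bandwidth_ratio = MI400_BANDWIDTH_TBPS / MI350_BANDWIDTH_TBPS
mi350_sweep_ms = full_sweep_ms(MI350_CAPACITY_GB, MI350_BANDWIDTH_TBPS)
mi400_sweep_ms = full_sweep_ms(MI400_CAPACITY_GB, MI400_BANDWIDTH_TBPS)

print(f"Capacity:  {capacity_ratio:.2f}x")
print(f"Bandwidth: {bandwidth_ratio:.2f}x")
print(f"Full-memory sweep: {mi350_sweep_ms:.1f} ms -> {mi400_sweep_ms:.1f} ms")
```

Bandwidth grows faster than capacity here (2.45x against 1.5x), so the idealized sweep time falls even though there is more memory to read.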

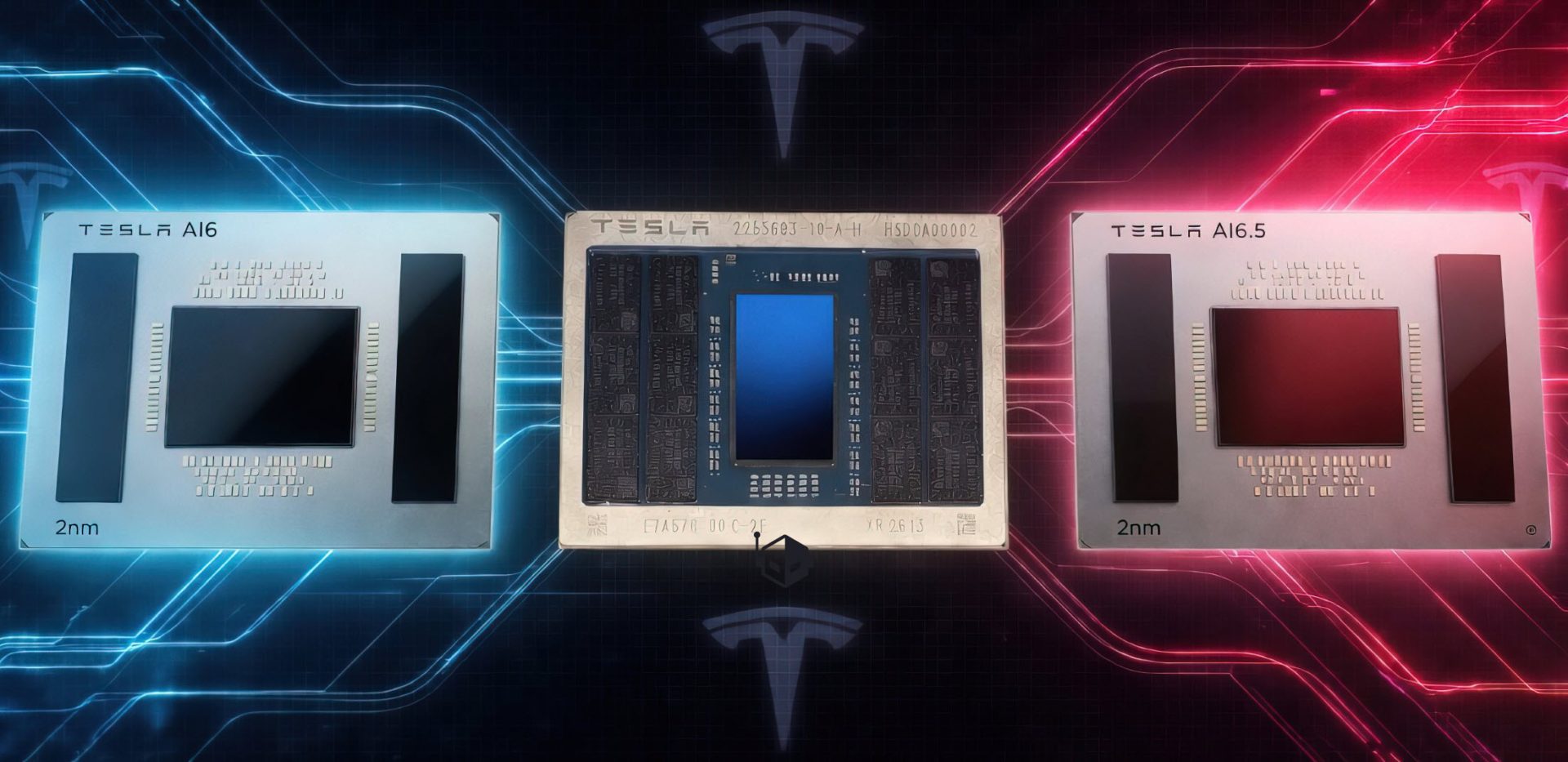

NVIDIA's 'Vera Rubin' superchip is designed to match this level of performance with its own set of innovations. Each Rubin GPU integrates two reticle-sized compute chiplets paired with eight HBM4 stacks, providing approximately 288 GB of HBM4 per GPU and about 576 GB for the full Superchip. Performance targets are set at around 50 PFLOPS of FP4 compute per Rubin GPU, resulting in roughly 100 PFLOPS of FP4 for the two-GPU Superchip. This configuration positions NVIDIA to compete with AMD's offerings, though the capacity figures are not like-for-like: 288 GB per Rubin GPU compares with up to 432 GB per MI400 GPU, while the 576 GB figure covers the two-GPU Superchip.
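The per-GPU and per-Superchip figures are easy to conflate, so the sketch below keeps them separate. It uses the approximate targets quoted in this article; the `GpuSpec` and `Package` classes are hypothetical helpers written for this illustration, not part of any vendor toolkit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSpec:
    """Per-GPU figures as quoted in the article (approximate targets)."""
    name: str
    hbm4_gb: int
    fp4_pflops: int


@dataclass(frozen=True)
class Package:
    """A multi-GPU package, such as the two-GPU Superchip."""
    gpu: GpuSpec
    gpu_count: int

    @property
    def total_hbm4_gb(self) -> int:
        return self.gpu.hbm4_gb * self.gpu_count

    @property
    def total_fp4_pflops(self) -> int:
        return self.gpu.fp4_pflops * self.gpu_count


rubin_gpu = GpuSpec("Rubin GPU", hbm4_gb=288, fp4_pflops=50)
superchip = Package(gpu=rubin_gpu, gpu_count=2)
# "Up to" figures for the MI400 lineup, treated here as per-GPU values.
mi400_gpu = GpuSpec("Instinct MI400 (up to)", hbm4_gb=432, fp4_pflops=40)

print(f"Superchip HBM4: {superchip.total_hbm4_gb} GB")
print(f"Superchip FP4:  {superchip.total_fp4_pflops} PFLOPS")
print(f"Per-GPU FP4, Rubin vs MI400:  {rubin_gpu.fp4_pflops / mi400_gpu.fp4_pflops:.2f}x")
print(f"Per-GPU HBM4, Rubin vs MI400: {rubin_gpu.hbm4_gb / mi400_gpu.hbm4_gb:.2f}x")
```

Compared per GPU, Rubin's FP4 target is 1.25x the MI400 figure, while its HBM4 capacity is about two thirds of it.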

The implications of these advancements are significant for hyperscale data centers and AI workloads. Both companies are vying to provide solutions that balance performance, scalability, and efficiency, catering to the growing demands of large-scale AI training and inference tasks. As these technologies move from development to production, it will be interesting to observe how they perform in real-world scenarios and which solution emerges as the preferred choice for hyperscalers.

Key specs for both NVIDIA's 'Vera Rubin' and AMD's Instinct MI400 series highlight the technological advancements being made:

- Memory Bandwidth:
  - NVIDIA 'Vera Rubin': 22 TB/s
  - AMD Instinct MI455X: 19.6 TB/s
- Compute Performance:
  - NVIDIA 'Vera Rubin': Around 50 PFLOPS of FP4 per Rubin GPU, roughly 100 PFLOPS of FP4 for the two-GPU Superchip
  - AMD Instinct MI400 lineup: Up to 40 PFLOPS of FP4 and 20 PFLOPS of FP8, roughly twice the compute performance of the current MI350 series
- Memory Capacity:
  - NVIDIA 'Vera Rubin': Approximately 288 GB of HBM4 per GPU, about 576 GB for the full Superchip
  - AMD Instinct MI400 lineup: Up to 432 GB of HBM4 memory per GPU
- Scale-out Bandwidth:
  - NVIDIA 'Vera Rubin': Not specified, but designed to compete with AMD's 300 GB/s per GPU
  - AMD Instinct MI455X: 300 GB/s per GPU
- AI Data Format Support:
  - Both NVIDIA and AMD are expanding AI data format support and AI pipelines.

These specifications underscore the technological advancements being made in memory bandwidth, compute performance, and memory capacity. For hyperscalers and AI developers, these improvements translate to faster processing times, greater efficiency, and the ability to handle more complex workloads. However, the choice between NVIDIA and AMD solutions will likely depend on specific use cases, cost considerations, and integration requirements.

As both companies prepare to launch their new products, the competitive landscape in AI and HPC is poised for significant change. The focus on memory bandwidth and compute performance reflects the increasing demands of AI workloads, where efficiency and scalability are paramount. NVIDIA's upgrade to its 'Vera Rubin' superchip and AMD's introduction of the Instinct MI400 series represent a new chapter in this ongoing competition, one that will shape the future of high-performance computing for years to come.