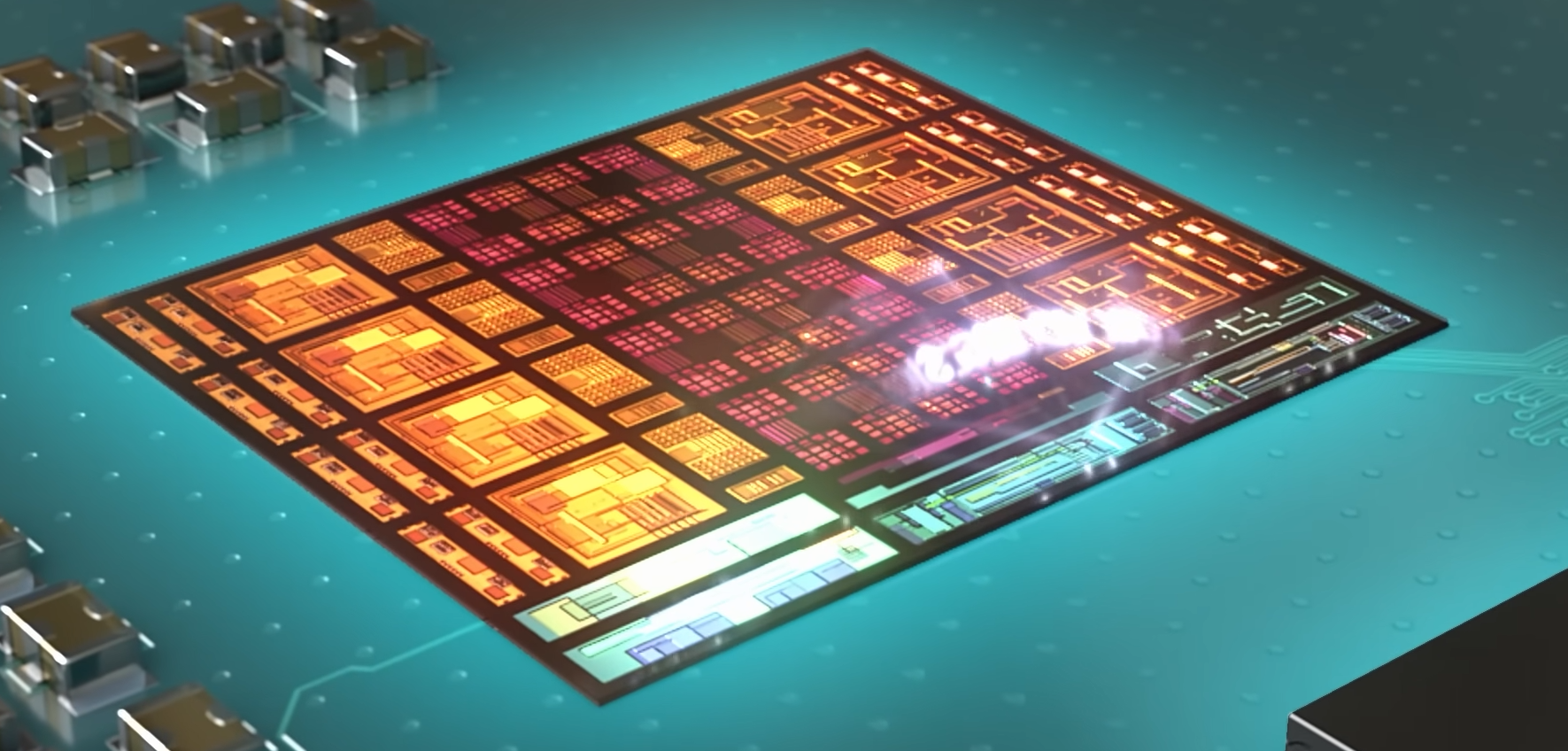

Microsoft’s new Maia 200 chip isn’t a GPU at all. It’s a custom accelerator and a full-stack redesign for AI inference, one that challenges the idea that general-purpose hardware can efficiently handle the diverse demands of modern AI workloads.

From interactive copilots to batch processing, AI inference has become a fragmented landscape. Latency-sensitive tasks like real-time assistants require near-instant responses, while batch operations prioritize throughput and cost efficiency. Microsoft’s answer? A custom silicon platform built from the ground up to address these tradeoffs.

With claims of **30% better performance per dollar** compared to existing Azure hardware, the Maia 200 signals a shift toward specialized inference accelerators—one that could reshape how cloud providers deploy AI workloads at scale.

What People Might Assume

Many would expect Microsoft to simply optimize existing GPU architectures for inference. After all, NVIDIA’s dominance in AI training has made GPUs the default choice for most workloads. But the Maia 200 takes a different approach:

- Not a GPU replacement: Unlike general-purpose GPUs, Maia is designed specifically for inference, not training.

- Precision over brute force: Instead of relying on high-precision floating-point operations, it bets on **FP4**—a narrow-precision format that Microsoft claims preserves accuracy while cutting compute and memory costs.

- Memory as a bottleneck solver: With **272MB of on-die SRAM** and **216GB of HBM3e**, Maia prioritizes data locality to reduce off-chip traffic, a common bottleneck in AI workloads.

- Scale-up networking: An integrated **2.8TB/s Ethernet fabric** allows clustering up to **6,144 accelerators**, optimizing for low-latency, high-bandwidth inference clusters.

The result? A chip that Microsoft says delivers **30% better performance per dollar** than its current fleet, without sacrificing flexibility.

What’s Actually Changing

The Maia 200 isn’t just an incremental upgrade. It’s a **full-stack rethinking** of how AI inference is processed, from silicon to software:

1. A Microarchitecture Built for Inference

Maia’s design centers on **tiles and clusters**, a hierarchical structure that balances compute and memory efficiency:

- Tile Tensor Unit (TTU): Handles matrix operations and convolutions, optimized for **FP4, FP6, and FP8** precision.

- Tile Vector Processor (TVP): A programmable SIMD engine supporting **FP8, BF16, FP16, and FP32** for mixed-precision workloads.

- Multi-level SRAM: **272MB of on-die SRAM** (split between tile and cluster levels) reduces HBM bandwidth demand by keeping working sets local.

- Hierarchical DMA: A multi-tiered data movement system ensures compute pipelines aren’t stalled by memory transfers.

This structure allows Maia to **minimize off-chip data movement**, a critical bottleneck in AI inference where memory bandwidth often limits performance.
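To make the data-locality argument concrete, here is a minimal sketch that checks whether a tensor would fit in the 272MB of on-die SRAM at different precisions. The capacity figure comes from the article; treating MB as decimal megabytes, the function names, and the example matrix shape are assumptions made for illustration. Real placement also depends on how SRAM is split between tile and cluster levels, which this ignores.

```python
# Assumption: 272MB is read as 272 * 10^6 bytes, and the whole SRAM pool
# is treated as one flat buffer (the tile/cluster split is ignored).
SRAM_BYTES = 272e6


def tensor_bytes(num_elements, bits_per_element):
    """Storage needed for a tensor at a given element width."""
    return num_elements * bits_per_element / 8


def fits_on_die(num_elements, bits_per_element):
    """True if the tensor alone would fit in on-die SRAM."""
    return tensor_bytes(num_elements, bits_per_element) <= SRAM_BYTES


if __name__ == "__main__":
    # Hypothetical 16384 x 16384 weight matrix.
    elements = 16384 * 16384
    for name, bits in [("FP4", 4), ("FP8", 8), ("BF16", 16)]:
        size_mb = tensor_bytes(elements, bits) / 1e6
        print(f"{name}: {size_mb:.1f} MB, fits on die: {fits_on_die(elements, bits)}")
```

Under these assumptions the same matrix fits on die at FP4 and FP8 but not at BF16, which is the link between narrow precision and reduced HBM traffic.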

2. Narrow Precision as the Efficiency Lever

Microsoft is doubling down on **FP4**, a 4-bit floating-point format, as the sweet spot for inference efficiency:

- FP4 throughput: Microsoft claims FP4 delivers **2x the throughput of FP8** and **8x that of BF16**, enabling higher tokens per second at lower power.

- Mixed-precision support: The TTU handles **FP8 activations with FP4 weights**, while the TVP supports broader precision for operators needing higher accuracy.

- Line-rate reshaping: An integrated unit up-converts low-precision formats without bottlenecks, ensuring smooth compute pipelines.

The bet here is that **FP4 can match FP16 accuracy** in many inference tasks while slashing compute and memory costs—a claim that, if proven, could redefine AI hardware economics.
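For readers unfamiliar with 4-bit floating point, the following sketch shows what rounding weights to FP4 can look like. It assumes the E2M1 layout (one sign bit, two exponent bits, one mantissa bit) with a single shared scale per block; the article does not say which FP4 encoding or scaling scheme Maia uses, so treat the value table and function names as illustrative rather than as a description of the hardware.

```python
# Magnitudes representable in the E2M1 FP4 layout. This layout is an
# assumption; the article does not specify Maia's FP4 encoding.
E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)


def quantize_block(values):
    """Round a block of floats to FP4 values using one shared scale.

    The scale maps the largest magnitude in the block onto 6.0, the
    largest E2M1 magnitude. Returns (scale, list of FP4 values).
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 6.0 if max_abs > 0 else 1.0
    quantized = []
    for v in values:
        target = abs(v) / scale
        # Pick the nearest representable magnitude.
        mag = min(E2M1_MAGNITUDES, key=lambda m: abs(m - target))
        quantized.append(-mag if v < 0 else mag)
    return scale, quantized


def dequantize_block(scale, quantized):
    """Recover approximate float values from FP4 values and the scale."""
    return [scale * q for q in quantized]


if __name__ == "__main__":
    weights = [0.1, -0.75, 3.0, 1.2]
    scale, q = quantize_block(weights)
    print(scale, q, dequantize_block(scale, q))
```

With only eight magnitudes available, small values collapse to zero and mid-range values shift noticeably, which is why the accuracy claim depends on the model and on how scales are chosen.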

3. A Networking Fabric for Scale

Maia’s **on-die NIC and Ethernet-based interconnect** (with **2.8TB/s bandwidth**) enables clustering without external switches, reducing latency and improving efficiency:

- Fully Connected Quad (FCQ): Groups four accelerators for local tensor-parallel traffic, keeping high-intensity operations off the network.

- AI Transport Layer (ATL): Runs over standard Ethernet but adds features like **packet spraying, multipath routing, and congestion control** for inference workloads.

- Hierarchical collectives: Optimized for inference synchronization patterns (unlike training workloads, which often use all-to-all communication).

This design allows Maia to **scale to 6,144 accelerators** while maintaining low-latency, high-bandwidth communication—critical for large inference clusters.
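The idea behind quads and hierarchical collectives can be sketched as a two-stage reduction: combine values inside each group of four first, then combine the per-quad results across the wider fabric. The quad size and the 6,144-accelerator limit come from the article; the contiguous ID-to-quad mapping and the function names are hypothetical, and the sketch simulates the arithmetic only, not the ATL protocol or any real collective library.

```python
QUAD_SIZE = 4            # Fully Connected Quad size, from the article
MAX_ACCELERATORS = 6144  # cluster limit, from the article


def quad_of(accelerator_id):
    """Hypothetical mapping: consecutive IDs share a quad."""
    return accelerator_id // QUAD_SIZE


def hierarchical_sum(values):
    """Two-stage sum over one value per accelerator.

    Stage 1 sums within each quad (local links in the real system).
    Stage 2 sums the per-quad partials (the Ethernet fabric in the
    real system). Returns (per-quad partial sums, global sum).
    """
    quad_sums = [
        sum(values[i:i + QUAD_SIZE])
        for i in range(0, len(values), QUAD_SIZE)
    ]
    return quad_sums, sum(quad_sums)


if __name__ == "__main__":
    print("quads in a full cluster:", MAX_ACCELERATORS // QUAD_SIZE)
    partials, total = hierarchical_sum(list(range(8)))
    print(partials, total)
```

The design point this illustrates is that only one partial result per quad needs to cross the network in the second stage, rather than one value per accelerator.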

4. Azure-Native Integration

Maia isn’t just a chip; it’s a **systems-level play** designed to integrate seamlessly with Azure:

- Rack and power compatibility: Aligns with Azure’s mechanical and thermal standards, enabling mixed fleets of GPUs and accelerators.

- Liquid and air cooling: Supports air cooling as well as **second-gen liquid cooling** for high-density deployments.

- Azure control plane integration: Managed via the same tooling as GPU fleets, with support for **Microsoft Foundry and Copilot workloads**.

- Heterogeneous scheduling: Workloads can be partitioned for **performance per dollar, latency, or capacity** without reworking orchestration.

This ensures Maia fits into Microsoft’s broader AI strategy—**not as a replacement for GPUs, but as a specialized option for inference-heavy workloads**.

What It Means Now

The Maia 200 isn’t just another chip in Microsoft’s arsenal. It’s a **statement on the future of AI infrastructure**—one where **general-purpose hardware may no longer be the most efficient choice** for inference.

For cloud providers, this could mean **lower costs per token** while maintaining performance. For developers, it opens new possibilities for **optimizing workloads at the hardware level**—whether through Microsoft’s **Maia SDK, Triton compiler, or Nested Parallel Language (NPL)** for fine-grained control over data movement and SRAM placement.

But the bigger question is whether **FP4 and narrow precision** will live up to Microsoft’s claims. If they do, Maia could set a new standard for AI efficiency—one that other hyperscalers may feel compelled to follow.

One thing is clear: **Microsoft is no longer just renting GPU cycles.** It’s building its own path to AI dominance.

Key Specs

- Process: TSMC N3

- Peak Performance: 10.1 PetaOPS (FP4)

- Precision Support: FP4, FP6, FP8 (TTU); FP8, BF16, FP16, FP32 (TVP)

- Memory: 272MB on-die SRAM + 216GB HBM3e (7TB/s bandwidth)

- Networking: On-die NIC, 2.8TB/s Ethernet fabric, supports up to 6,144 accelerators

- Cooling: Air and liquid-cooled options

- Azure Integration: Rack-compatible, managed via Azure control plane

- Performance Claim: 30% better performance per dollar vs. current Azure fleet

- Target Workloads: Interactive copilots, batch summarization, advanced reasoning with long context windows
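A few back-of-the-envelope figures follow from the spec list above. The sketch below derives the roofline ridge point (operations per HBM byte needed before compute becomes the limit), the number of parameters that would fit in HBM at a given precision, and a bandwidth-only upper bound on single-stream decode speed. The peak, bandwidth, and capacity values are the article's; decimal units, the 70-billion-parameter example model, and the simplifications (no KV cache, no scale factors, no SRAM reuse, no batching) are assumptions, so the outputs are rough bounds and not measured performance.

```python
PEAK_FP4_OPS = 10.1e15  # 10.1 peta-ops per second at FP4, from the spec list
HBM_BANDWIDTH = 7e12    # 7 TB/s, decimal terabytes assumed
HBM_CAPACITY = 216e9    # 216 GB, decimal gigabytes assumed


def ridge_point():
    """Ops per HBM byte above which compute, not memory, is the limit."""
    return PEAK_FP4_OPS / HBM_BANDWIDTH


def max_params_in_hbm(bits_per_param):
    """Parameters that fit in HBM if weights were the only contents."""
    return HBM_CAPACITY * 8 / bits_per_param


def decode_tokens_per_second_bound(num_params, bits_per_param):
    """Bandwidth-only bound: every weight read from HBM once per token."""
    bytes_per_token = num_params * bits_per_param / 8
    return HBM_BANDWIDTH / bytes_per_token


if __name__ == "__main__":
    print(f"ridge point: {ridge_point():.0f} ops/byte")
    print(f"FP4 params in HBM: {max_params_in_hbm(4) / 1e9:.0f}B")
    # Hypothetical 70B-parameter model with FP4 weights.
    print(f"decode bound: {decode_tokens_per_second_bound(70e9, 4):.0f} tokens/s")
```

The high ridge point is one way to see why the design leans on SRAM locality and batching: a workload that performs few operations per byte fetched from HBM will be limited by the 7TB/s figure long before it reaches the FP4 peak.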

The Maia 200 is **not a drop-in replacement for GPUs**. It’s a **specialized inference engine**—one that Microsoft believes will be critical as AI workloads grow more diverse and demanding.

For enterprises running AI at scale, this could mean **more cost-effective inference**—but only if the software ecosystem (and FP4’s accuracy tradeoffs) prove viable at production scale.

Availability and pricing have not been confirmed.