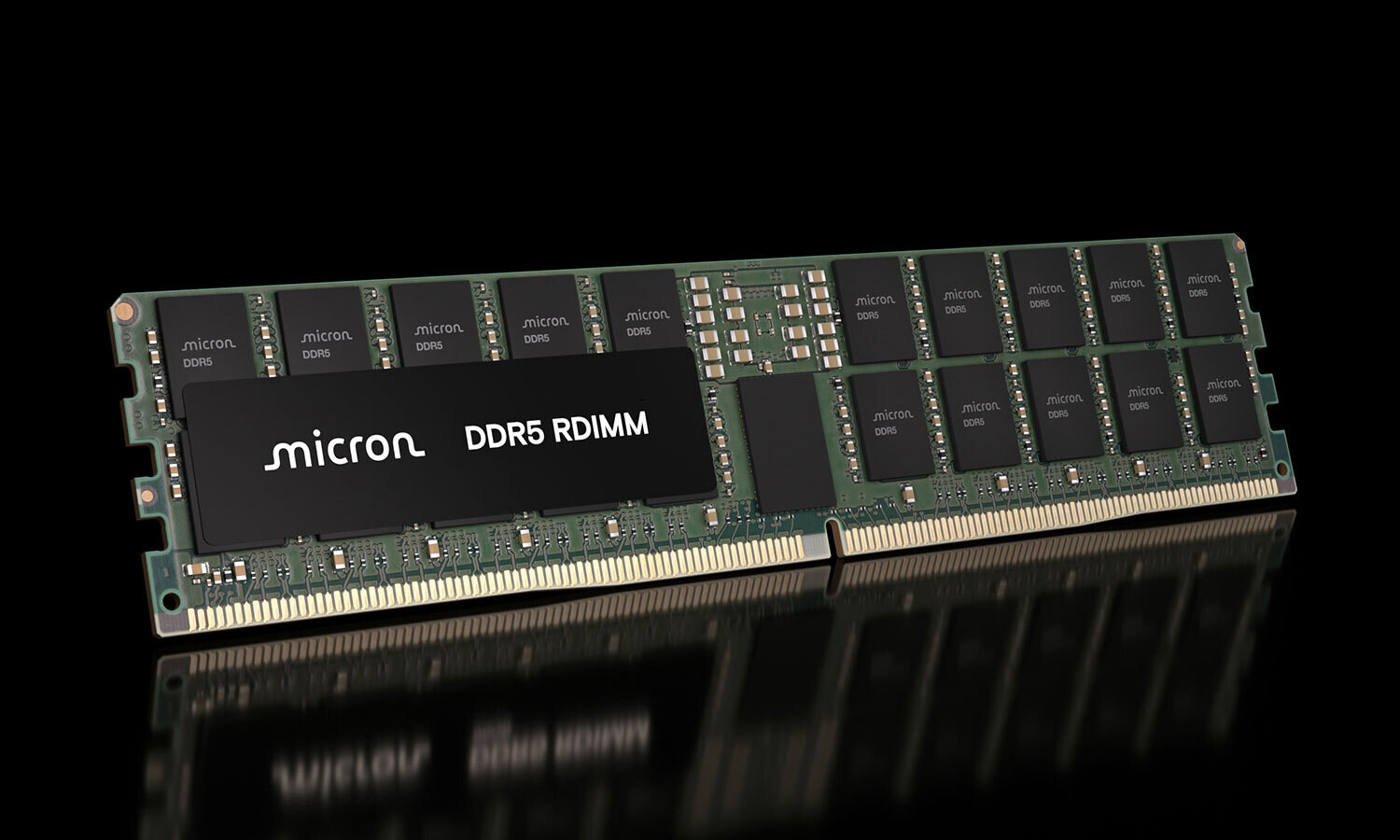

Micron has begun sampling its 256GB DDR5 RDIMM modules, rated at 9,200MT/s, to key ecosystem partners for validation. The modules are built on the company's 1-gamma DRAM technology. Although deployment is still at an early stage, they represent a significant step up in memory capacity and efficiency, one that could reshape how data centers are designed and operated.

The 256GB modules rely on advanced packaging: 3D stacking (3DS) places multiple memory dies in one package, and through-silicon vias (TSVs) connect them. The stacking raises capacity per module, while the modules are rated for speeds up to 9,200MT/s, more than 40% faster than what is currently available in volume production. A single 256GB module can also draw over 40% less operating power than two 128GB modules providing the same capacity, which makes it an attractive option for AI workloads where thermal and power constraints are critical.
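To put the 9,200MT/s figure in context, the short Python sketch below converts a transfer rate into theoretical peak bandwidth and computes a relative speedup. It assumes the standard 64-bit DDR5 data bus per module (two 32-bit subchannels, ECC bits excluded) and, purely for illustration, a 6,400MT/s baseline for volume-production modules. The article does not name the baseline, and the function names are hypothetical.

```python
def peak_bandwidth_gbs(transfer_rate_mts: float, bus_width_bits: int = 64) -> float:
    """Theoretical peak bandwidth of one module in GB/s (decimal gigabytes).

    MT/s * bytes per transfer gives MB/s; dividing by 1000 gives GB/s.
    """
    bytes_per_transfer = bus_width_bits / 8
    return transfer_rate_mts * bytes_per_transfer / 1000


def percent_faster(new_mts: float, baseline_mts: float) -> float:
    """Relative increase in transfer rate, expressed as a percentage."""
    return (new_mts / baseline_mts - 1) * 100


NEW_MTS = 9200       # rated speed quoted in the article
BASELINE_MTS = 6400  # ASSUMPTION: illustrative volume-production speed, not from the article

new_bw = peak_bandwidth_gbs(NEW_MTS)
base_bw = peak_bandwidth_gbs(BASELINE_MTS)
speedup = percent_faster(NEW_MTS, BASELINE_MTS)

print(f"Peak bandwidth at {NEW_MTS} MT/s: {new_bw:.1f} GB/s")
print(f"Peak bandwidth at {BASELINE_MTS} MT/s: {base_bw:.1f} GB/s")
print(f"Speedup over assumed baseline: {speedup:.2f}%")
```

Under these assumptions, one module peaks at 73.6 GB/s, and the speedup over a 6,400MT/s part works out to 43.75%, which is consistent with the "more than 40%" claim. These are theoretical ceilings; sustained bandwidth in a real server will be lower.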

For data center operators and server architects, the introduction of these high-capacity modules addresses a pressing need. The proliferation of large language models (LLMs), agentic AI, and real-time inference has created a demand for greater memory capacity per socket while maintaining efficiency. Micron's solution aims to meet that demand without compromising on performance or power consumption.

However, the path from sampling to widespread availability has its challenges. Micron is collaborating with ecosystem partners to ensure compatibility across current and next-generation server platforms, but the actual deployment timeline remains uncertain. The company has also hinted at a broader shift, including a potential exit from consumer markets in favor of high-capacity enterprise solutions, which could further influence supply dynamics.

Looking ahead, the 256GB DDR5 RDIMMs could play a pivotal role in scaling AI infrastructure more efficiently. If validated successfully, they may set a new benchmark for memory capacity and power efficiency in data centers. For now, the focus remains on ecosystem validation and ensuring that the technology can deliver on its promises without introducing new bottlenecks.