PC builders and data center operators are facing a critical inflection point: the explosive growth of AI-driven memory demand. Dell’s latest insights suggest that by 2028, memory requirements will skyrocket to levels previously considered unimaginable. This isn’t just about raw capacity—it’s about bandwidth, latency, and how systems scale with next-generation workloads.

Today, the market is still adapting to HBM (High Bandwidth Memory) as a standard for AI accelerators. But Dell warns that the real crunch will come when memory becomes the bottleneck, not just in training runs but in inference and real-time processing. The question isn’t whether this will happen—it’s how quickly buyers can pivot before they’re locked into legacy platforms.
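One way to see why memory, rather than compute, can cap inference is a back-of-the-envelope bandwidth bound. The sketch below assumes a simplified model in which generating each token requires reading every model weight from memory once; it ignores batching, caching, and compute time. The function name and all figures are hypothetical illustrations, not Dell's numbers or any vendor's specification.

```python
def max_decode_tokens_per_second(param_count, bytes_per_param, mem_bandwidth_bytes_per_s):
    """Upper bound on single-stream decode throughput.

    Assumption: each generated token streams all weights from memory once,
    so throughput cannot exceed bandwidth divided by model size in bytes.
    """
    model_bytes = param_count * bytes_per_param
    return mem_bandwidth_bytes_per_s / model_bytes


# Hypothetical example: 70 billion parameters at 2 bytes each (16-bit weights),
# served from memory that delivers 3 TB/s.
estimate = max_decode_tokens_per_second(70e9, 2, 3e12)
print(f"{estimate:.1f} tokens/s upper bound")
```

Under these assumptions, doubling memory bandwidth doubles the ceiling, while adding compute alone leaves it unchanged, which is the sense in which memory becomes the bottleneck.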

What Changes for Buyers?

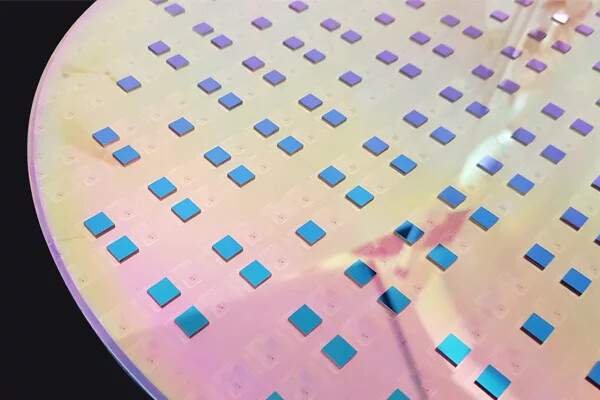

The immediate impact is twofold: cost and compatibility. In AI accelerators, socketed memory modules that fit standard DIMM slots are giving way to specialized HBM stacks packaged directly alongside the GPU or processor die, where they cannot be swapped or upgraded after purchase. This shift forces a hard choice: build systems around existing infrastructure or migrate to new architectures that support high-bandwidth memory natively.

For PC builders, this means rethinking cooling, power delivery, and even case design. Traditional motherboards with DIMM slots may become obsolete, leaving enthusiasts and enterprises with a stark decision: upgrade entire platforms or risk performance degradation. The trade-off is clear—performance gains come at the cost of flexibility.

Platform Lock-In Looms

Performance is the upside; here’s the catch. High-bandwidth memory isn’t just about raw speed; it’s about ecosystem lock-in. Vendors like AMD and NVIDIA are already integrating HBM into their latest GPUs. HBM itself is a JEDEC standard, but because the stacks are packaged with the accelerator at manufacture, memory capacity is fixed by the vendor and switching between brands becomes increasingly difficult. For enterprises running large-scale AI workloads, this could translate to vendor dependency: a scenario where memory upgrades are dictated by hardware manufacturers rather than open-market availability.

Dell’s projection of 'unimaginable' demand isn’t hyperbole; it’s a direct response to the scaling needs of generative AI models. Current HBM-equipped accelerators, linked by interconnects such as AMD’s Infinity Fabric or NVIDIA’s NVLink, are already pushing the limits, but 2028 could see memory requirements leapfrog even these benchmarks. The implication? Buyers who wait too long may find themselves stuck with underpowered systems, unable to scale without a complete overhaul.
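To make the scaling pressure concrete, here is a rough capacity estimate: how many accelerators are needed just to hold a model's weights in on-package memory. It is a minimal sketch under stated assumptions; the function name, the 25% overhead for activations and caches, and the model and device sizes are all hypothetical, chosen only to illustrate the arithmetic.

```python
import math


def accelerators_needed(param_count, bytes_per_param, hbm_bytes_per_device,
                        overhead_fraction=0.25):
    """Minimum device count whose combined memory holds the model.

    overhead_fraction is an assumed allowance for activations and caches
    on top of the raw weights; real overheads vary widely by workload.
    """
    weight_bytes = param_count * bytes_per_param
    total_bytes = weight_bytes * (1 + overhead_fraction)
    return math.ceil(total_bytes / hbm_bytes_per_device)


# Hypothetical example: 400 billion parameters at 2 bytes each,
# on devices with 80 GB of on-package memory apiece.
devices = accelerators_needed(400e9, 2, 80e9)
print(devices)
```

Because on-package memory cannot be expanded after purchase, growth in model size under this kind of estimate translates directly into buying more devices rather than adding modules.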

Looking Ahead

The roadmap for AI-ready memory is already being drawn, but the timeline remains uncertain. What’s certain is that the shift from DDR to HBM isn’t just a technical upgrade—it’s a strategic one. For PC builders, this means preparing now for a future where memory isn’t just an afterthought; it’s the linchpin of system performance. The alternative? Risking obsolescence in a market where bandwidth dictates everything.