Data centers are increasingly under pressure to handle larger datasets and more complex computations, particularly in AI development. A new GPU model aims to meet this demand by combining high bandwidth with specialized compute units, potentially reshaping how organizations approach large-scale data processing.

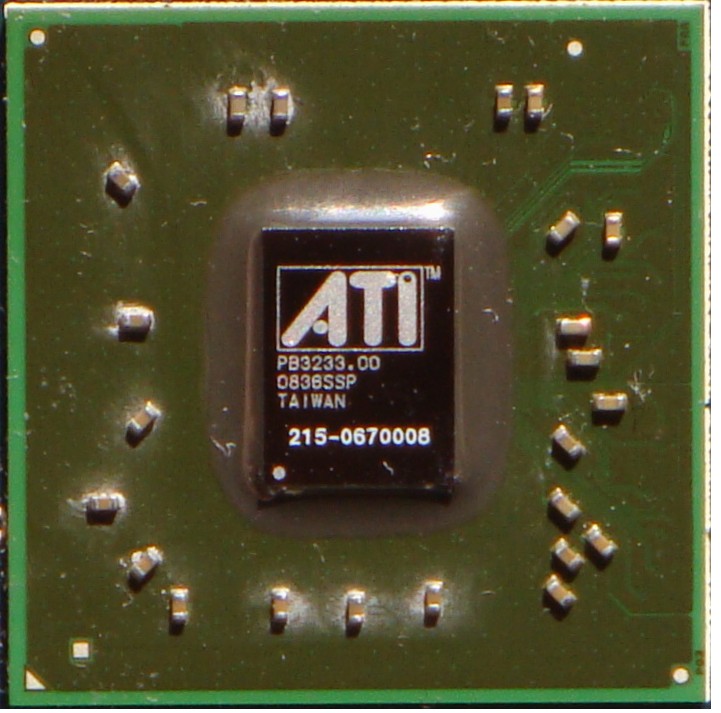

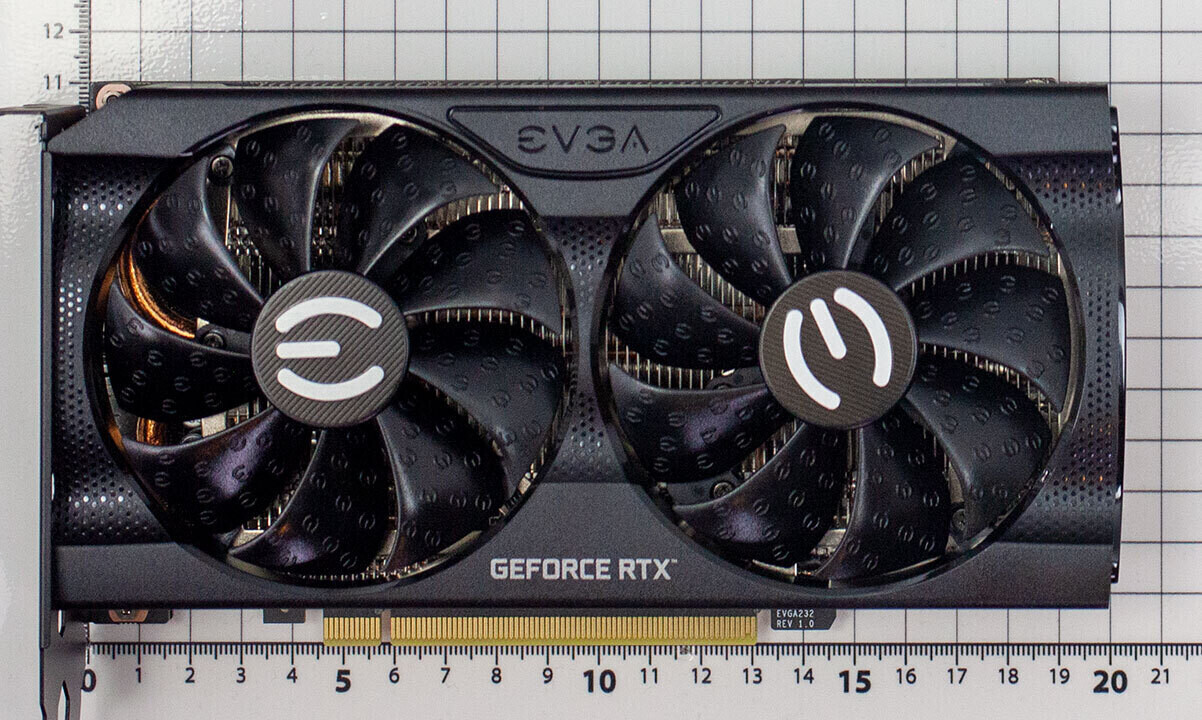

AMD’s latest GPU pairs 24 GB of GDDR6 memory with a 192-bit memory bus, delivering up to 384 GB/s of bandwidth. It has 5,120 stream processors, which AMD claims deliver approximately 17 TFLOPS of single-precision (FP32) performance. The design prioritizes efficiency for matrix operations and other parallel computations, which are central to AI model training.
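The quoted figures can be sanity-checked with simple arithmetic. The sketch below works backwards from the published numbers and rests on two assumptions that are not confirmed in the announcement: that peak bandwidth equals bus width times per-pin data rate, and that each core completes one fused multiply-add (counted as 2 FLOPs) per clock. The function names are illustrative only.

```python
# Back-of-the-envelope check of the quoted specifications.
# Assumptions (not vendor-confirmed):
#   - peak bandwidth = (bus width in bytes) x (per-pin data rate)
#   - each core completes one fused multiply-add (2 FLOPs) per clock

BUS_WIDTH_BITS = 192
PEAK_BANDWIDTH_GBPS = 384.0   # GB/s, as quoted
CORES = 5120
PEAK_FP32_TFLOPS = 17.0       # as quoted (approximate)
FLOPS_PER_CORE_PER_CLOCK = 2  # assumption: one FMA per core per cycle


def implied_data_rate_gbps(bandwidth_gbps: float, bus_width_bits: int) -> float:
    """Per-pin data rate (Gbit/s) needed to reach the quoted bandwidth."""
    bytes_per_transfer = bus_width_bits / 8  # 192 bits -> 24 bytes per transfer
    return bandwidth_gbps / bytes_per_transfer


def implied_clock_ghz(tflops: float, cores: int, flops_per_clock: int) -> float:
    """Core clock (GHz) needed to reach the quoted single-precision peak."""
    return tflops * 1e12 / (cores * flops_per_clock) / 1e9


if __name__ == "__main__":
    rate = implied_data_rate_gbps(PEAK_BANDWIDTH_GBPS, BUS_WIDTH_BITS)
    clock = implied_clock_ghz(PEAK_FP32_TFLOPS, CORES, FLOPS_PER_CORE_PER_CLOCK)
    print(f"Implied GDDR6 data rate: {rate:.1f} Gbps per pin")
    print(f"Implied core clock:      {clock:.2f} GHz")
```

Under these assumptions the numbers imply a 16 Gbps per-pin memory data rate and a core clock of about 1.66 GHz, both plausible for a GDDR6-based GPU, so the quoted bandwidth and TFLOPS figures are at least internally consistent.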

- Improvements to the memory subsystem aim to reduce latency, a common bottleneck in data-intensive workloads.

- Third-generation tensor cores (dedicated matrix-multiply units) accelerate AI-specific processing, improving performance for machine learning applications.
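Whether a workload benefits more from the compute units or is held back by memory can be estimated with a roofline model. The sketch below uses only the quoted peaks (17 TFLOPS FP32, 384 GB/s); the two example kernels and their traffic estimates are illustrative assumptions, and the matrix-multiply case assumes ideal on-chip reuse so that each matrix crosses the memory bus exactly once.

```python
# Minimal roofline sketch built from the quoted peak figures.
PEAK_FLOPS = 17e12        # FLOP/s, quoted FP32 peak
PEAK_BYTES_PER_S = 384e9  # B/s, quoted memory bandwidth
FP32_BYTES = 4


def attainable_flops(intensity: float) -> float:
    """Roofline bound: min(compute peak, intensity * bandwidth); intensity in FLOP/byte."""
    return min(PEAK_FLOPS, intensity * PEAK_BYTES_PER_S)


def matmul_intensity(n: int) -> float:
    """n x n FP32 matmul C = A @ B: 2n^3 FLOPs over the minimum DRAM traffic
    (read A and B once, write C once), assuming ideal on-chip reuse."""
    flops = 2 * n**3
    bytes_moved = 3 * n**2 * FP32_BYTES
    return flops / bytes_moved


def vector_add_intensity() -> float:
    """c[i] = a[i] + b[i]: 1 FLOP per two FP32 reads and one FP32 write (12 bytes)."""
    return 1 / (3 * FP32_BYTES)


if __name__ == "__main__":
    ridge = PEAK_FLOPS / PEAK_BYTES_PER_S  # intensity where the two limits meet
    print(f"Ridge point: {ridge:.1f} FLOP/byte")
    kernels = [("vector add", vector_add_intensity()),
               ("4096^2 matmul", matmul_intensity(4096))]
    for name, ai in kernels:
        bound = "memory" if ai < ridge else "compute"
        tflops = attainable_flops(ai) / 1e12
        print(f"{name:>14}: {ai:8.2f} FLOP/byte -> {tflops:6.2f} TFLOP/s ({bound}-bound)")
```

The ridge point of roughly 44 FLOP/byte is the arithmetic intensity a kernel needs before memory stops being the limit. A large matrix multiply clears it easily, while an element-wise vector add is capped at about 32 GFLOP/s (0.083 FLOP/byte × 384 GB/s), under 0.2% of peak. This is why memory latency and bandwidth matter so much for data-intensive workloads even on a GPU with high compute throughput.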

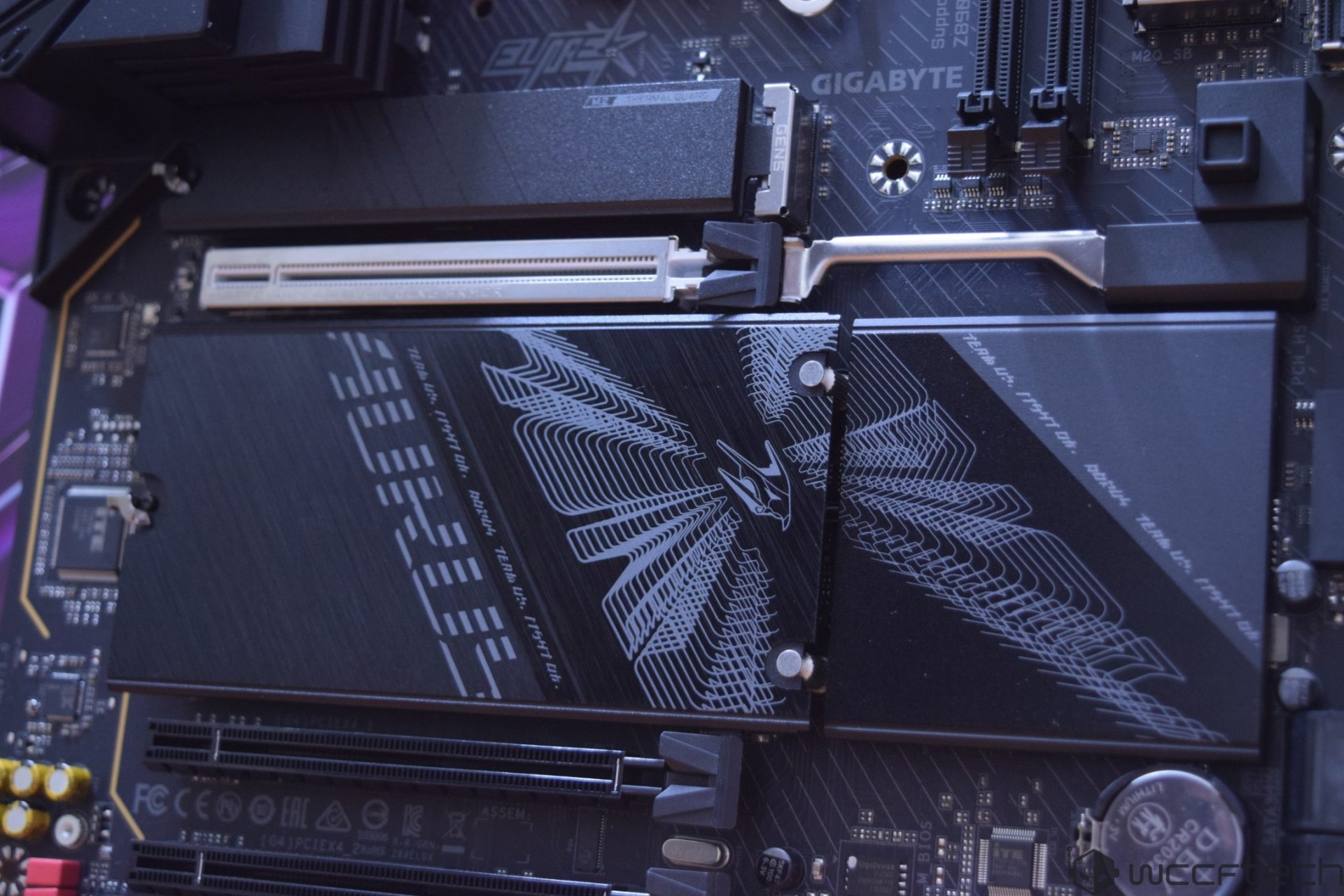

The GPU’s focus on data workloads raises questions about compatibility with existing software stacks. Developers may need to evaluate whether the performance gains outweigh potential integration challenges, especially if their current workflows rely on older architectures. Balancing raw performance with adaptability will be crucial in determining its market adoption.

Availability is expected within the next quarter at an estimated price of $1,299. Its introduction could prompt a reassessment of data center strategies for organizations heavily invested in AI workloads. Whether this model becomes a standard choice or loses ground to established alternatives remains to be seen.