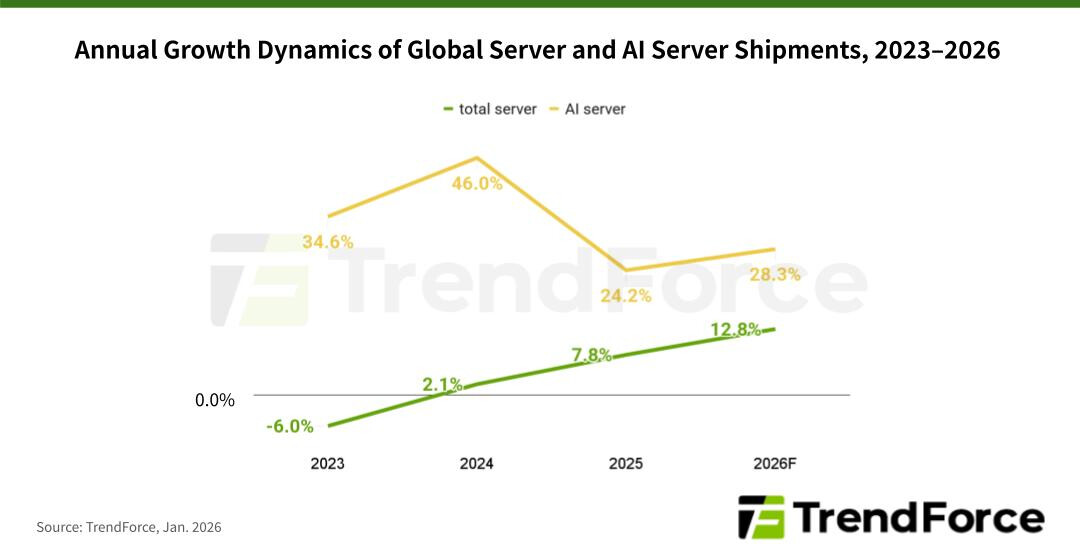

The AI server market is poised for unprecedented growth in 2026, with global shipments expected to rise more than 28% year-over-year. This surge reflects a fundamental shift in the industry: from the high-stakes race to train ever-larger language models to a broader focus on the inference workloads that power AI agents, Copilot services, and other real-time applications.

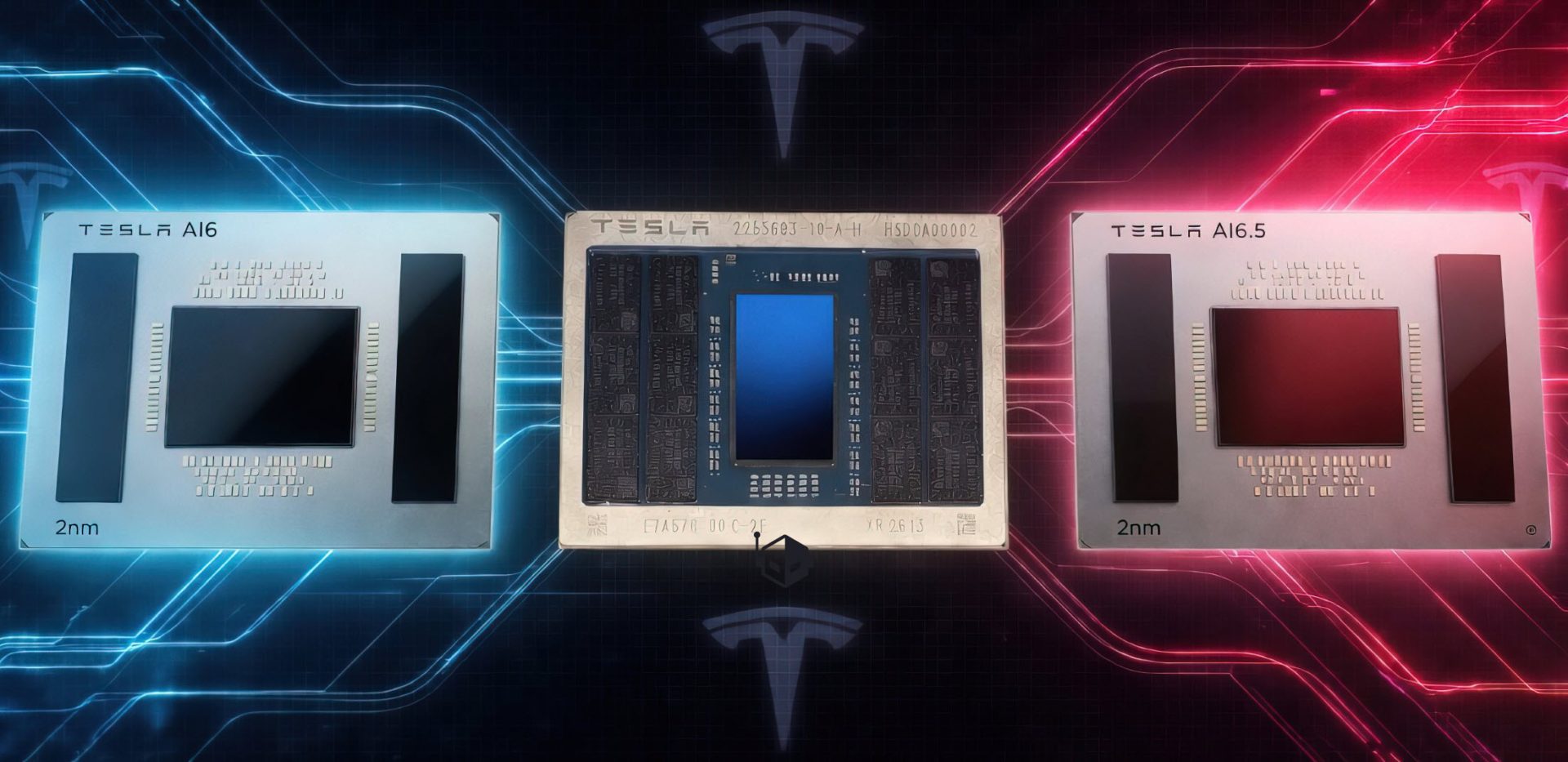

Unlike the GPU-heavy server deployments of 2024–2025, which prioritized parallel processing for training, infrastructure in 2026 is diversifying. General-purpose servers now play a critical role, handling pre- and post-inference tasks, while ASIC-based systems carve out a growing share. By the end of 2026, ASIC-based AI servers are projected to account for nearly 28% of shipments, their highest share since 2023. That figure reflects both the maturity of in-house designs from players like Google and Meta and the increasing efficiency of specialized hardware for edge deployments.

North American cloud service providers (CSPs) remain the driving force behind this growth. The combined capital expenditures of the top five—Google, AWS, Meta, Microsoft, and Oracle—are expected to jump 40% year-over-year in 2026. A significant portion of this spending will go toward replacing aging general-purpose servers acquired during the cloud investment boom of 2019–2021, but the larger trend is expansion: supporting the massive daily inference traffic generated by services like Copilot and Gemini.

GPU-based systems will still dominate the market, making up nearly 70% of AI server shipments. NVIDIA’s GB300-based platforms are likely to lead early in the year, while VR200-based systems gain traction later. ASIC-based systems are more than a niche, however: their shipments are growing faster than those of GPU-based alternatives. Google, in particular, is doubling down on its TPU technology, which now serves external customers like Anthropic and is increasingly integrated into Google Cloud Platform. This positions the company to become a dominant player in ASIC development and to claim a leading share of the ASIC market.

Beyond North America, government sovereign cloud projects and edge AI deployments are adding momentum. The result? A server market that’s not just growing in volume but diversifying in architecture, balancing raw performance with specialized efficiency as AI moves from data centers to the edge.