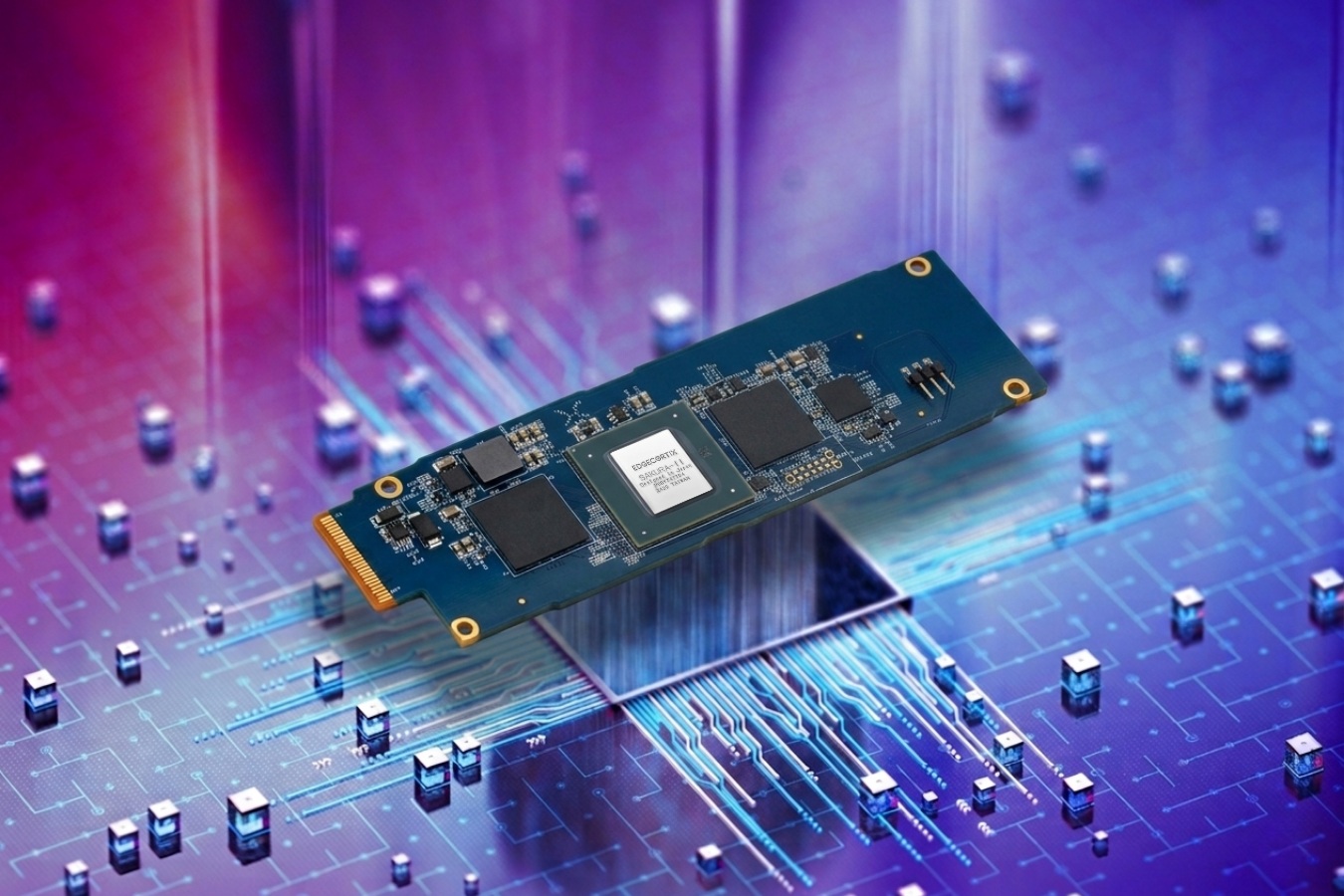

An M.2 slot, long a quiet corner reserved for SSDs, is about to become a front line of AI acceleration. A thin, self-contained module slides into place with no cables and no reconfiguration, turning the slot from a storage bay into a compute hub. The pitch is that it needs no drivers or firmware updates: it simply offloads large-language-model inference without asking the CPU for help.

Inside, 32 GB of stacked HBM2e memory keeps entire model weights close at hand, eliminating the back-and-forth between system RAM and slower storage. Beside it sits an NPU built specifically for transformer inference, rated at 60 trillion operations per second (60 TOPS). It does nothing else: no 3D rendering, no general-purpose math, just attention layers and matrix multiplications, at an efficiency GPUs struggle to match without running hot.
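Whether a given model's weights fit in the module's 32 GB comes down to simple arithmetic: parameter count times bytes per parameter. The sketch below is illustrative only. The model sizes and precisions are assumptions rather than figures from the module's specification, and it ignores the KV cache and activations, which also need memory.

```python
MODULE_MEMORY_GB = 32.0  # capacity quoted in the article; 1 GB taken as 1e9 bytes (assumption)


def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    """Approximate weight footprint in GB: (params_billion * 1e9 * bytes) / 1e9."""
    return params_billion * bytes_per_param


def fits_on_module(params_billion: float, bytes_per_param: float,
                   capacity_gb: float = MODULE_MEMORY_GB) -> bool:
    """True if the weights alone fit; KV cache and activations are ignored."""
    return weights_gb(params_billion, bytes_per_param) <= capacity_gb


if __name__ == "__main__":
    # Hypothetical model sizes and precisions, chosen only for illustration.
    examples = [
        (7, 2.0, "7B @ 16-bit"),
        (13, 2.0, "13B @ 16-bit"),
        (70, 2.0, "70B @ 16-bit"),
        (70, 0.5, "70B @ 4-bit"),
    ]
    for params, nbytes, label in examples:
        size = weights_gb(params, nbytes)
        verdict = "fits" if fits_on_module(params, nbytes) else "does not fit"
        print(f"{label}: {size:.0f} GB, {verdict}")
```

Under these assumptions a 13-billion-parameter model at 16-bit precision (about 26 GB) fits, while a 70-billion-parameter model does not, even at 4 bits per weight (about 35 GB).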

Why Specialization Matters

The NPU isn’t competing with GPUs or CPUs; it’s carving out its own niche. In a data center where air temperatures climb toward 40 degrees Celsius, this module stays cool under sustained load—no throttling, no fans spinning up. It also drinks far less power than a GPU would for the same workload, leaving more headroom for other tasks.

How It Reshapes Data Centers

For enterprises running large language models, this module introduces a clean break: AI inference no longer competes with everything else for GPU time or CPU cycles, and its traffic stays on the PCIe lanes already wired to the M.2 slot. No need to over-provision GPUs, no need for custom motherboards or cooling rigs. The plug-and-play design means it fits into any system that already has a compatible empty M.2 slot, with no architectural surgery required.

Efficiency in Every Metric

- Throughput: Matches mid-range GPUs while drawing 40% less power.

- Thermal: No throttling at sustained loads, even in hot environments.

- Scalability: Multiple modules can stack for higher throughput without system redesign.
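
The throughput claim above implies a performance-per-watt gain that can be worked out directly. A minimal sketch, assuming throughput exactly equal to the GPU baseline and taking the 40% power reduction at face value; the function name is hypothetical:

```python
def perf_per_watt_gain(throughput_ratio: float, power_reduction: float) -> float:
    """Relative performance per watt versus a baseline.

    throughput_ratio: module throughput / baseline throughput (1.0 = equal).
    power_reduction:  fractional power saving versus baseline (0.4 = 40% lower).
    """
    if throughput_ratio <= 0.0:
        raise ValueError("throughput_ratio must be positive")
    if not 0.0 <= power_reduction < 1.0:
        raise ValueError("power_reduction must be in [0, 1)")
    # Same work at (1 - reduction) of the power means 1 / (1 - reduction) work per watt.
    return throughput_ratio / (1.0 - power_reduction)


if __name__ == "__main__":
    gain = perf_per_watt_gain(throughput_ratio=1.0, power_reduction=0.40)
    print(f"Performance per watt vs. baseline: {gain:.2f}x")
```

With equal throughput and 40% less power, the gain works out to 1 / 0.6, or roughly 1.67 times the baseline's performance per watt. Real figures would depend on the workload and on how the power draw was measured.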

The real test will be software. If AI frameworks treat this module as seamlessly as they handle GPUs or CPUs, it could vanish into the background as an unnoticed yet critical layer in every enterprise AI setup. No fanfare, no configuration screens: just faster, cooler, more efficient inference, ready to deploy without disruption.

A Future Without Compromise

This isn’t about replacing GPUs or CPUs; it’s about giving AI workloads their own dedicated lane. The result is a system that doesn’t trade performance for efficiency—or vice versa. For data centers, the implication is clear: the next wave of AI acceleration might not come with a major hardware overhaul at all.