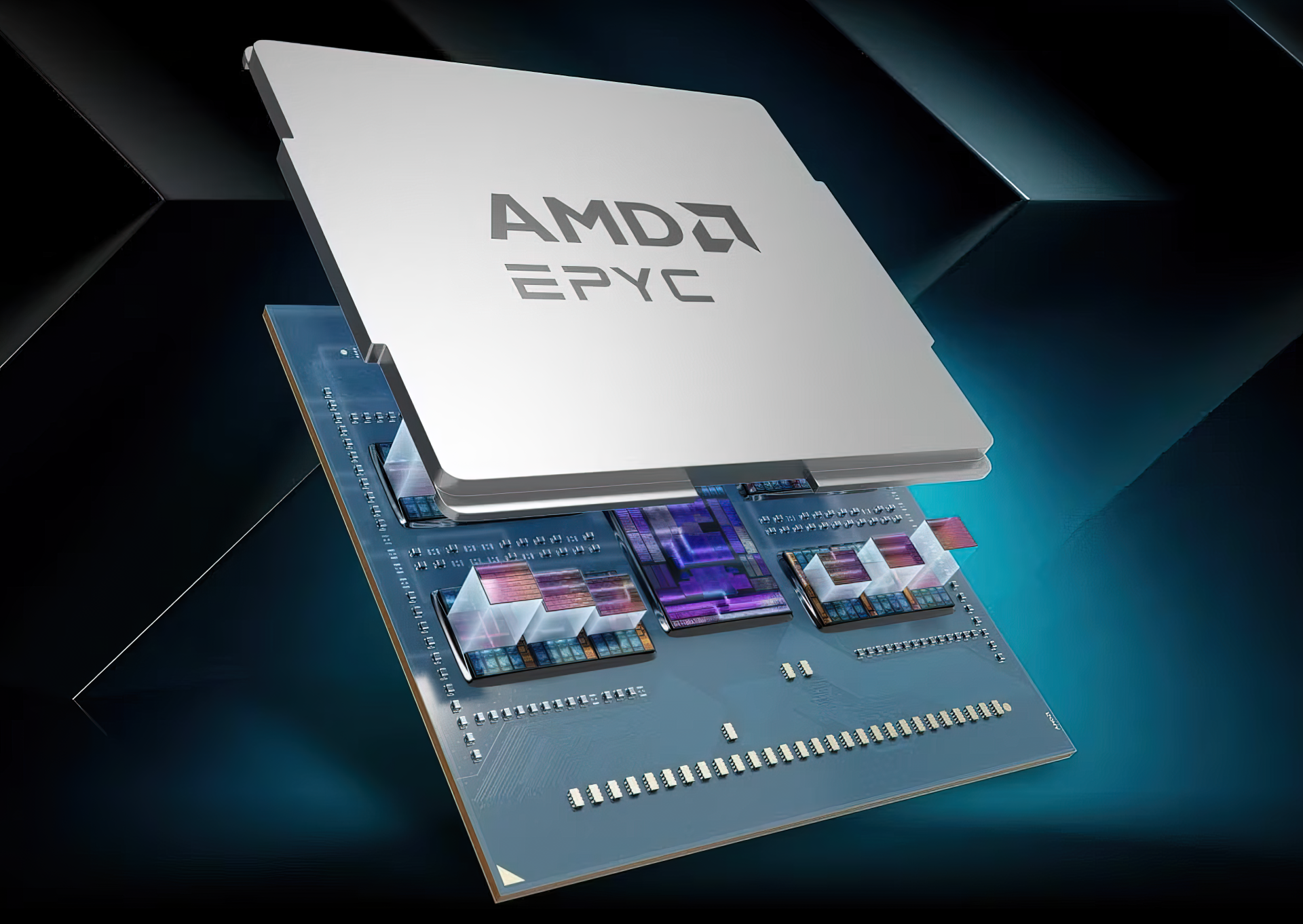

Agentic AI Pushes CPUs to Pack 400 GB of Memory, 4x More Than Today, as DRAM Shortage Spirals Toward 2027

Hassan Mujtaba

Whether it runs on CPUs or GPUs, Agentic AI requires lots of memory, and this demand is spiraling to unseen levels as DRAM constraints persist.

CPUs Running Agentic AI Will Be Equipped With Up to 400 GB of Memory, Further Crushing The DRAM Supply Chain

Memory makers are earning big profits but are unable to meet demand. We have seen reports on how major manufacturers are rapidly expanding their production facilities, but these are yet to become operational, and Samsung itself has stated that 2027 will be worse for the DRAM industry than 2026. The situation looks hopeless: DRAM will remain in short supply for as long as the AI boom continues.

Troubles For The Customers, Profits For Makers

With Agentic AI accelerating its pace, the main component being eyed is the CPU. While GPUs are still required and very much in demand, the ratio of GPUs to CPUs in datacenters has fallen from 8:1 to 4:1, and is likely to approach 1:1 in the near future as Agentic AI models require higher processing capabilities.

At the same time, Agentic AI still requires lots of memory, and although algorithmic compression techniques help address KV cache concerns, demand keeps rising. As such, the CPU segment will witness massive growth in memory capacities. Citing industry sources, SE Daily reports that CPU makers are now planning to equip their AI CPUs with 300-400 GB of memory. This is a huge increase versus the existing 96-256 GB of DRAM offered per chip.
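To put the reported ranges side by side, here is a minimal sketch that computes the increase factor for each pairing of the planned 300-400 GB range against the existing 96-256 GB range. The helper name `increase_factor` is hypothetical and used only for illustration; the capacities are the ones quoted above. The headline's "4x" figure appears to correspond to the top of the planned range (400 GB) measured against the bottom of today's range (96 GB), which works out to roughly 4.2x, while 400 GB against 256 GB is closer to 1.6x.

```python
def increase_factor(new_gb: float, old_gb: float) -> float:
    """Return how many times larger new_gb is than old_gb (hypothetical helper)."""
    return new_gb / old_gb


# Capacities in GB, taken from the figures quoted in the article.
planned_gb = (300, 400)   # planned per-CPU memory, per SE Daily
current_gb = (96, 256)    # existing DRAM offered per chip

for new in planned_gb:
    for old in current_gb:
        factor = increase_factor(new, old)
        print(f"{new} GB vs {old} GB: {factor:.2f}x")
```

The size of the jump depends heavily on which baseline is chosen, so the 4x figure is best read as an upper-bound comparison.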
The report doesn't clarify what type of memory is being discussed. Current CPU platforms can support 4 to 8 TB of memory, but that figure covers the entire platform using DIMMs. The upcoming MRDIMM technology will boost memory capacity and bandwidth, but it is still physically separate DRAM.

"The competition for memory capacity is expanding beyond GPUs to CPUs, showing signs of snowballing. Nvidia's next-generation AI chip, 'Vera Rubin,' features 288GB via eight HBM chips, while AMD's next-generation GPU, the MI400, boasts an even larger mammoth capacity of 432GB. Google's recently unveiled custom chip, the 8th-generation Tensor Processing Unit (TPU) TPU 8i, is also expected to feature 288GB of HBM capacity. Furthermore, as Intel's AI CPU 'Xeon' and AMD's 'Epyc' begin using high-capacity DDR5 of up to 400GB, the memory shortage is expected to persist."

Machine Translated (via SE Daily)

One possibility is that certain CPU SKUs will be packaged with HBM, or with the new HBF/ZAM memory standards currently in development. AMD did release an EPYC SKU with HBM memory a while back, so it has a history of building such chips.

CPUs With More Memory Than GPUs

The simpler route is for memory capacities per DIMM to increase substantially. A single 400 GB DIMM would hold more DRAM than current GPUs such as NVIDIA's GB300 and AMD's MI350X, which carry 288 GB of HBM3E. Upcoming solutions will scale up DRAM with newer HBM4 standards and increased capacities.

The increase in capacities can lead to further supply shortages, and as denser DRAM ICs become the focus, we can expect memory makers to back out of lower-end products. Samsung has already done this with LPDDR4 memory, ceasing production and moving to the more profitable LPDDR5 solutions. But like LPDDR5, DDR5 comes in various shapes and sizes, and each has its own use.
As DRAM demand for AI requires more of the high-end chips to be produced, fewer production lines would be allocated to lower-end products, leading to worse shortages and even higher prices for segments besides AI.
