Razer Introduces AIKit: A New Approach to Local LLM Development

The technology landscape is evolving rapidly as large language models (LLMs) grow in prominence. Accessing these tools has traditionally meant relying on cloud-based services, which raises concerns about cost, data privacy, and latency. Razer has responded with AIKit, an open-source platform that aims to democratize LLM development by bringing high-performance capabilities directly to users' own hardware.

AIKit aims to simplify the lifecycle of developing and deploying LLMs by automating tasks that typically require significant technical expertise: automatic GPU configuration, cluster formation for scaling work across multiple GPUs, and tuning of settings for local inference and fine-tuning. Razer's stated goal is cloud-grade performance with lower latency, while data and models remain entirely under local control.

Key Features and Functionality

At its heart, AIKit is built to provide an accessible and efficient workflow for developers. Several key features contribute to this goal:

- Simplified Setup: The platform's out-of-the-box configuration reduces initial setup time, letting users start experimenting with LLMs quickly without extensive configuration work.

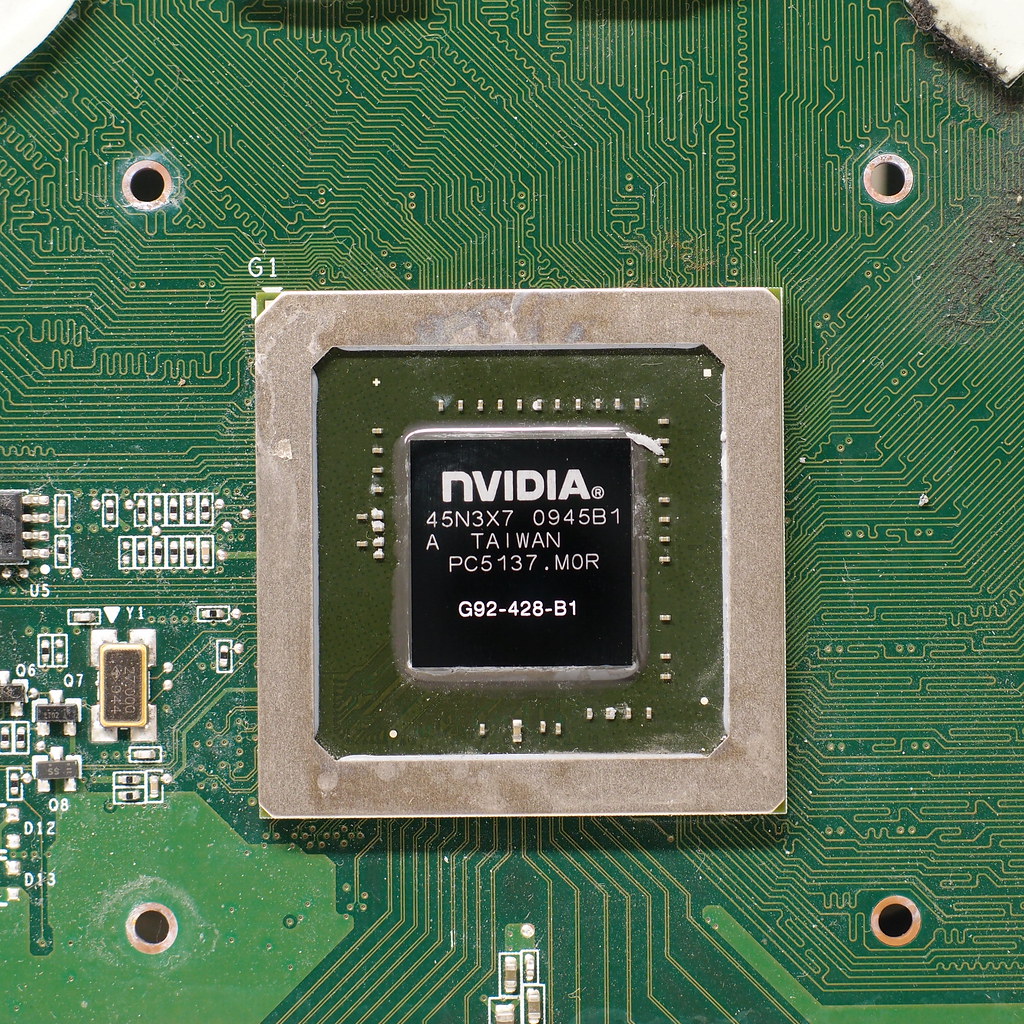

- GPU Optimization: AIKit is designed to make full use of the GPUs in Razer's hardware, accelerating inference and fine-tuning tasks. This optimization is key to approaching the performance levels typically associated with cloud deployments.

- Cluster Support: For demanding workloads or larger models, AIKit provides the capability to form clusters of GPUs, effectively scaling up computational resources as needed.

- Framework Compatibility: The platform supports popular LLM frameworks, enabling developers to utilize their preferred tools and methodologies for model development and training.

- Local Control & Privacy: A significant advantage is the complete local control offered by AIKit. Users retain full ownership of their data and models, mitigating privacy concerns often associated with cloud-based solutions.
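Whether a model fits on one GPU or needs a cluster is largely a question of memory. As a rough illustration, and not AIKit's actual sizing logic (which is not publicly documented), the sketch below estimates weight memory as parameter count times bytes per parameter, then divides by per-GPU memory. The `overhead` factor of 1.2 for KV cache and activations, the 24 GiB card size, and the function names are hypothetical assumptions chosen for the example.

```python
import math


def weight_memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory needed for model weights alone, in GiB."""
    return num_params * bytes_per_param / 2**30


def gpus_needed(num_params: float, bytes_per_param: float,
                gpu_mem_gib: float, overhead: float = 1.2) -> int:
    """Rough count of equal-sized GPUs needed to hold a model.

    `overhead` is a hypothetical multiplier covering KV cache and
    activations; real requirements depend on batch size and context length.
    """
    required = weight_memory_gib(num_params, bytes_per_param) * overhead
    return math.ceil(required / gpu_mem_gib)


if __name__ == "__main__":
    # An 8B-parameter model in FP16 (2 bytes/param) on 24 GiB GPUs.
    print(gpus_needed(8e9, 2, 24))
    # A 70B-parameter model in FP16 needs several such GPUs.
    print(gpus_needed(70e9, 2, 24))
    # The same 70B model quantized to 4 bits (0.5 bytes/param).
    print(gpus_needed(70e9, 0.5, 24))
```

Under these assumptions, an 8B FP16 model fits on a single 24 GiB GPU, while a 70B FP16 model needs a multi-GPU cluster; quantization shrinks that requirement considerably.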

Targeting Researchers and Developers

AIKit is aimed at AI researchers and developers who want a cost-effective, secure environment for exploring and experimenting with LLMs. Its emphasis on local control makes it particularly attractive to those concerned about data security or regulatory compliance, or who simply want to avoid ongoing cloud service fees. According to Razer, the platform can run more than 280,000 LLMs locally with familiar frameworks, a substantial step forward in accessibility for the AI community.

Hardware Integration and Performance

AIKit builds on Razer's existing hardware ecosystem, particularly its GPU-equipped systems, as a foundation for high-performance local LLM development. The platform is designed to integrate with Razer's GPU-equipped devices and tune them for AI workloads. Specific details of the underlying architecture and optimization techniques have not been publicly disclosed, but the goal is to deliver performance comparable to cloud-based solutions while retaining the benefits of local control.

Future Implications

The launch of AIKit signals a growing trend toward decentralized AI development. By providing an accessible and powerful open-source platform, Razer is contributing to a more democratized landscape where researchers and developers can freely explore and innovate with LLMs without being constrained by the limitations or costs of traditional cloud services. The potential applications span numerous fields, including natural language processing, content generation, data analysis, and more.