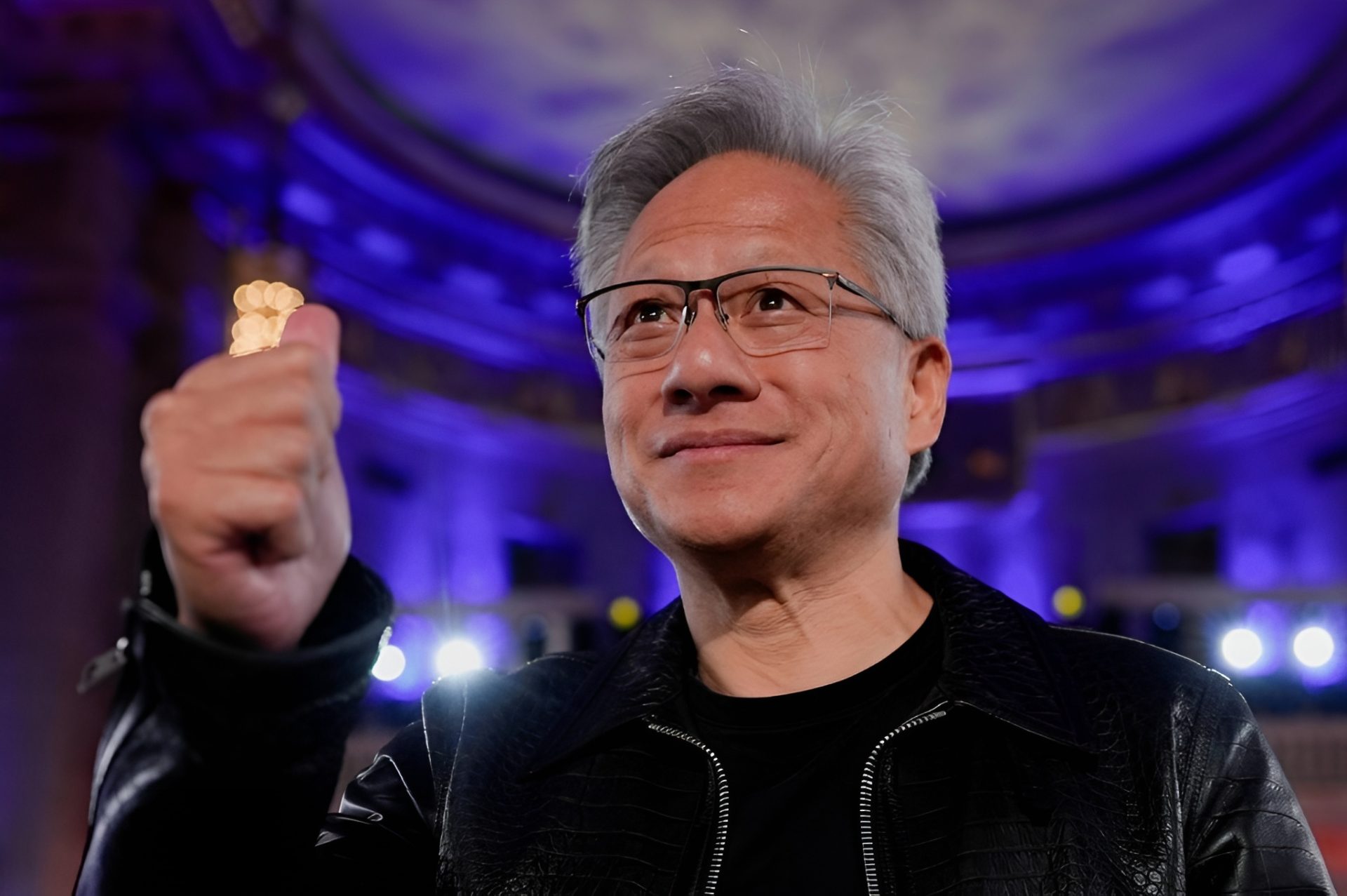

NVIDIA has quietly built one of the most powerful infrastructures in the tech industry, but its true strength lies not only in hardware or software. It lies in the web of alliances the company has woven over the years, a network that extends beyond traditional partnerships to shape entire markets.

The company's recent focus on infrastructure has given it influence over critical layers of computing, from data centers to edge devices. Yet the details of how this infrastructure translates into market dominance are often overlooked. This is where NVIDIA's strategy becomes particularly compelling: it integrates hardware, software, and ecosystem in ways that lock in users and leave competitors little room to enter.

At a glance

- NVIDIA's infrastructure spans data centers, edge devices, and AI workloads, giving it unmatched control over computing pipelines.

- The company's alliances extend beyond traditional partnerships to include cloud providers, automakers, and even governments, creating a sticky ecosystem.

- Platform lock-in is a key driver of NVIDIA's dominance, with its software and tools making it difficult for competitors to break in.

- Recent advancements in AI acceleration and data center efficiency have further solidified its position, but challenges remain in balancing performance and power consumption.

The foundation of NVIDIA's infrastructure is built on two core pillars. First, its dominance in AI acceleration, through products like the A100 and H100 GPUs, has made it indispensable for data center operators. These chips are not just faster; they have become the default hardware on which large AI models are trained and deployed. Second, NVIDIA's software stack, particularly CUDA and its ecosystem of development tools, ensures that once a user adopts its hardware, switching to another platform becomes a significant hurdle, because code written against CUDA and its libraries has to be ported before it will run on another vendor's hardware. This is the essence of platform lock-in.

But NVIDIA doesn't stop at hardware and software. Its alliances with cloud providers like AWS and Microsoft Azure, along with automakers like Tesla and BMW, create a network effect that further cements its position. These partnerships aren't just about selling more GPUs; they're about integrating NVIDIA's technology into the very fabric of how these companies operate. Tesla is a telling example: it replaced NVIDIA's Drive hardware in its cars with an in-house chip in 2019, yet it still trains its driving models on NVIDIA GPUs. Similarly, cloud providers build their AI services on NVIDIA's GPUs, so competitors like AMD or Intel cannot dislodge NVIDIA unless those providers and their customers rework large parts of their software stacks.

However, this dominance isn't without its tradeoffs. The complexity of NVIDIA's ecosystem can be daunting, and its solutions often come with higher power consumption and cost than alternatives. For instance, the H100 GPU delivers extraordinary performance for AI workloads but requires significant power infrastructure, which can be a limiting factor in deployments where power or cooling capacity is constrained. Balancing these tradeoffs will be crucial as NVIDIA continues to push the boundaries of what's possible in computing.
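To put the power tradeoff in rough numbers, here is a minimal back-of-the-envelope sketch. Every figure in it is an assumption for illustration, not a measurement: 700 W per GPU (the maximum NVIDIA lists for the H100 SXM variant), 8 GPUs per server, 4 servers per rack, and a hypothetical overhead factor of 1.5 to cover CPUs, networking, and cooling. The function name `rack_power_kw` is made up for this example.

```python
def rack_power_kw(gpus_per_server: int,
                  servers_per_rack: int,
                  gpu_watts: float,
                  overhead_factor: float = 1.5) -> float:
    """Estimate total rack power draw in kilowatts.

    overhead_factor is an assumed multiplier for everything that is
    not a GPU (CPUs, memory, networking, cooling); it is illustrative,
    not a vendor figure.
    """
    gpu_total_watts = gpus_per_server * servers_per_rack * gpu_watts
    return gpu_total_watts * overhead_factor / 1000.0


if __name__ == "__main__":
    # Assumed configuration: 4 servers of 8 GPUs each at 700 W per GPU.
    estimate = rack_power_kw(gpus_per_server=8,
                             servers_per_rack=4,
                             gpu_watts=700.0)
    print(f"Estimated rack draw: {estimate:.1f} kW")
```

Under these assumptions a single rack lands in the tens of kilowatts, which is why power and cooling capacity, rather than GPU supply alone, can decide where such hardware gets deployed.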

Looking ahead, NVIDIA's focus on infrastructure and alliances will likely shape the next decade of tech innovation. Its ability to integrate hardware, software, and ecosystem in a cohesive manner gives it an edge that competitors struggle to match. For power users and enterprises, this means a landscape where NVIDIA's solutions are not just preferred but often necessary. The challenge for other players will be to break that lock-in, which is no small feat given the depth of NVIDIA's ecosystem.