A data center operator managing a multi-region AI training cluster has to balance performance, cost, and physical constraints. Traditionally, scaling GPU workloads across sites meant juggling network latency, thermal output, and power draw, and each additional node added complexity without guaranteeing linear gains.

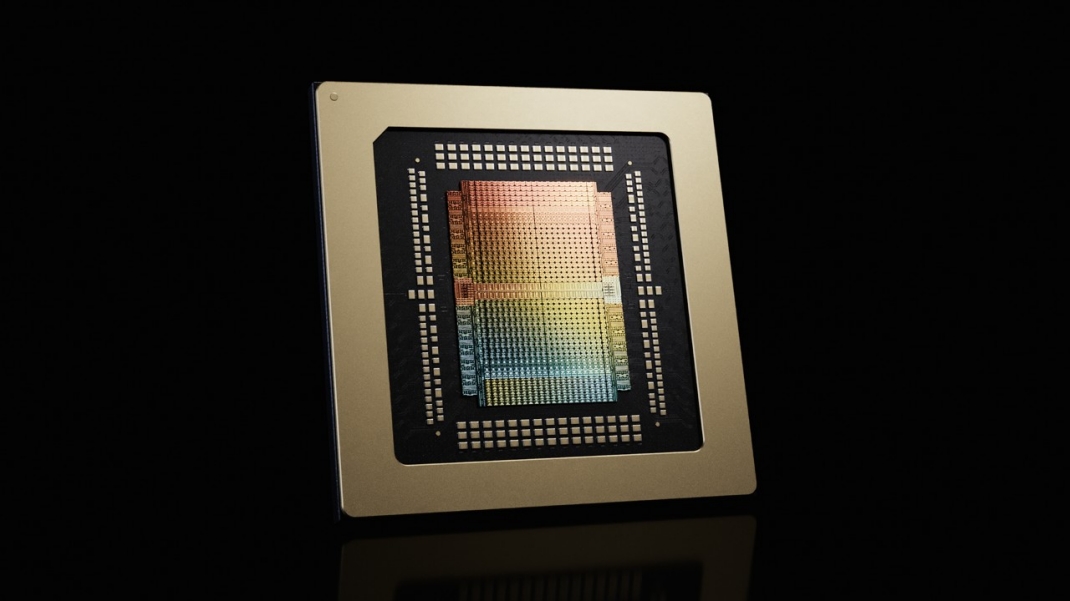

NVIDIA’s Rubin framework changes that equation. By integrating with Google’s virtual machine environment, Rubin stretches a single logical cluster to nearly one million GPUs spread across multiple physical locations. The result is a step forward in distributed AI infrastructure, but with trade-offs that demand closer examination.

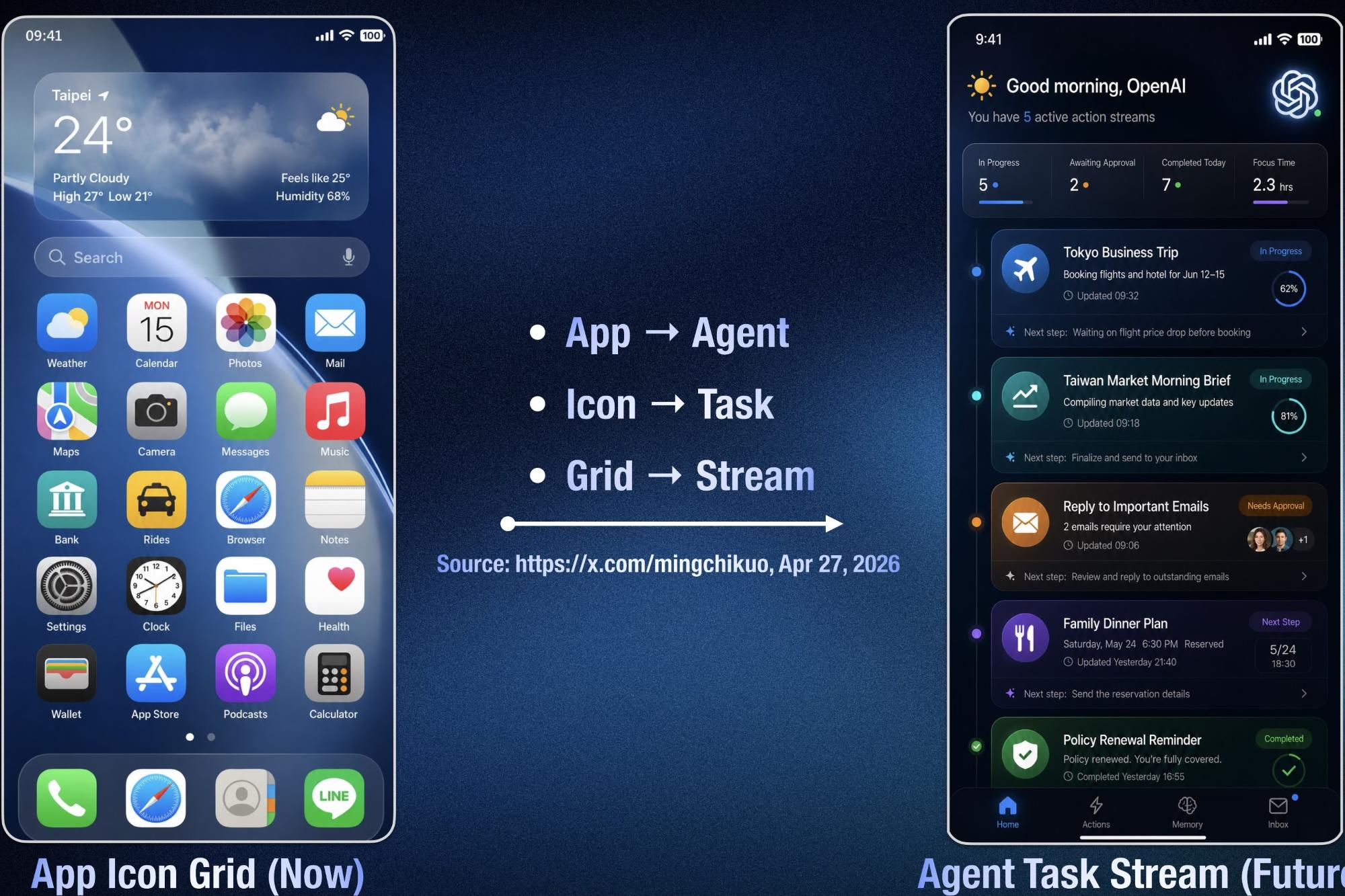

The core of the advancement lies in two layers: a lightweight virtual GPU abstraction and an optimized scheduling system. The virtual GPU layer hides hardware details, allowing Rubin to treat GPUs as if they were local, even when they are thousands of miles away. This reduces network overhead by minimizing data movement between sites.
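To make the two layers concrete, here is a minimal Python sketch of a virtual GPU mapping paired with a placement policy that tries to keep a job on as few sites as possible. It is an illustration under stated assumptions, not Rubin's actual API: `VirtualGPU`, `place_job`, and `sites_spanned` are hypothetical names, and the largest-pool-first policy is one simple way to limit cross-site traffic, not necessarily the one the framework uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VirtualGPU:
    """Hypothetical handle mapping a logical GPU index to a physical site and slot."""
    logical_id: int
    site: str
    local_index: int


def place_job(free_gpus: dict[str, int], gpus_needed: int) -> list[VirtualGPU]:
    """Assign a job to as few sites as possible (assumed policy, for illustration)."""
    if gpus_needed < 0:
        raise ValueError("gpus_needed must be non-negative")
    if gpus_needed > sum(free_gpus.values()):
        raise ValueError("not enough free GPUs across all sites")

    placement: list[VirtualGPU] = []
    # Filling the largest free pools first minimizes the number of sites spanned,
    # which in turn limits how much traffic has to cross site boundaries.
    for site, free in sorted(free_gpus.items(), key=lambda kv: kv[1], reverse=True):
        take = min(free, gpus_needed - len(placement))
        for local_index in range(take):
            placement.append(VirtualGPU(len(placement), site, local_index))
        if len(placement) == gpus_needed:
            break
    return placement


def sites_spanned(placement: list[VirtualGPU]) -> set[str]:
    """Return the set of physical sites a placement touches."""
    return {gpu.site for gpu in placement}


if __name__ == "__main__":
    # Site names and capacities are made-up example values.
    job = place_job({"site-a": 4, "site-b": 8, "site-c": 2}, gpus_needed=10)
    print(sorted(sites_spanned(job)))
```

The caller only ever sees contiguous `logical_id` values; the site and slot behind each one stay inside the mapping, which is the sense in which remote GPUs look local.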

Under the hood, Rubin uses a combination of NVIDIA’s NVLink interconnect and Google’s custom networking stack to maintain low-latency communication. Benchmarks show that for certain AI workloads, the framework achieves near-linear scaling efficiency up to 10,000 GPUs per site. Beyond that, performance plateaus due to thermal and power constraints, a reality check that mirrors challenges in physical GPU clusters.
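For readers unfamiliar with the term, scaling efficiency is usually the measured speedup divided by the ideal (linear) speedup. The short Python sketch below shows that calculation. The throughput figures in it are invented for illustration and are not benchmark results from NVIDIA or Google; `scaling_efficiency` is a hypothetical helper name.

```python
def scaling_efficiency(
    base_gpus: int,
    base_throughput: float,
    gpus: int,
    throughput: float,
) -> float:
    """Measured speedup over a baseline divided by the ideal linear speedup."""
    if base_gpus <= 0 or gpus <= 0 or base_throughput <= 0:
        raise ValueError("GPU counts and baseline throughput must be positive")
    speedup = throughput / base_throughput  # how much faster the larger run is
    ideal = gpus / base_gpus                # how much faster it would be if linear
    return speedup / ideal


if __name__ == "__main__":
    # Illustrative numbers only (samples per second), chosen to show the shape
    # of "near-linear, then plateau". They are NOT measurements.
    baseline = (1_000, 1_000.0)
    for gpus, throughput in [(10_000, 9_500.0), (20_000, 11_000.0)]:
        eff = scaling_efficiency(*baseline, gpus, throughput)
        print(f"{gpus} GPUs: efficiency {eff:.2f}")
```

A value close to 1.0 corresponds to near-linear scaling. A plateau shows up as efficiency falling sharply once added GPUs stop contributing proportional throughput.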

- A single Rubin-managed cluster can span nearly one million GPUs across multiple sites, but practical deployment is limited by thermal and power density.

- The framework’s virtual GPU abstraction reduces network overhead for distributed AI training, though exact performance gains depend on workload type.

- The long-term roadmap suggests further optimizations in energy efficiency, but whether Rubin can break the 10,000-GPU-per-site barrier remains an open question.

The implications for small businesses are mixed. Rubin’s scale is designed for hyperscale environments, but its underlying principles, efficient virtualization and distributed scheduling, could trickle down to smaller deployments over time. For now, the technology reinforces NVIDIA’s position in large-scale AI infrastructure; its real-world impact on smaller operations will depend on how the thermal and power constraints are addressed in future iterations.