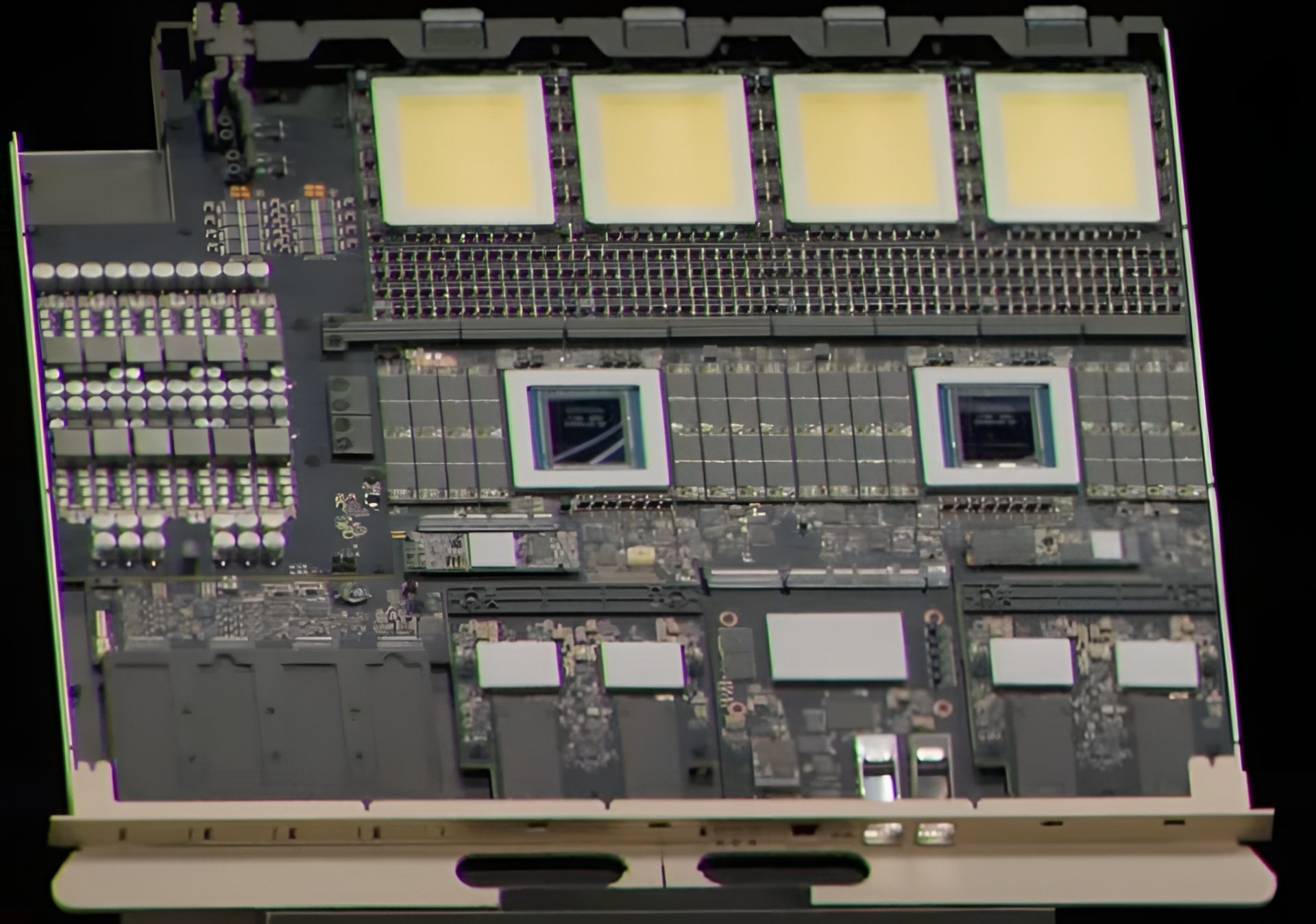

NVIDIA's Rubin Ultra GPU is emerging as a case study in adaptability, with reports suggesting a strategic retreat from its original four-die architecture to a more feasible dual-die configuration. This pivot isn't just about scaling back ambition; it’s about navigating the realities of modern semiconductor production without compromising the core performance metrics that matter most to power users.

The Rubin Ultra was always meant to push boundaries, with a multi-chip approach designed to maximize memory bandwidth and compute density for AI training and data center workloads. But in an industry where supply chain disruptions have become the norm rather than the exception, NVIDIA appears to be prioritizing practicality over theoretical peak performance. The dual-die design is reported to be a compromise that keeps 24GB of GDDR6 memory at 17 Gbps per pin while reducing manufacturing complexity.
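To put the 17 Gbps figure in context, peak memory bandwidth follows from the per-pin data rate multiplied by the memory bus width. The reports do not state a bus width, so the 256-bit and 384-bit values below are purely hypothetical, and the function name is illustrative. A minimal sketch of the arithmetic:

```python
def peak_bandwidth_gb_s(pin_rate_gbps: float, bus_width_bits: int) -> float:
    """Theoretical peak memory bandwidth in GB/s.

    pin_rate_gbps: per-pin data rate in gigabits per second.
    bus_width_bits: total memory bus width in bits (number of data pins).
    """
    # Total gigabits per second across the bus, divided by 8 bits per byte.
    return pin_rate_gbps * bus_width_bits / 8


# Hypothetical bus widths; the reported spec gives only the 17 Gbps pin rate.
for bus_width in (256, 384):
    bandwidth = peak_bandwidth_gb_s(17, bus_width)
    print(f"{bus_width}-bit bus at 17 Gbps: {bandwidth:.0f} GB/s")
```

Under these assumptions the theoretical peak would be 544 GB/s on a 256-bit bus or 816 GB/s on a 384-bit bus. Sustained bandwidth in practice is lower than the theoretical peak.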

What Power Users Gain

- Accessibility: A dual-die architecture may improve yield rates and reduce production bottlenecks, making high-end GPUs more consistently available in the market.

- Memory and Bandwidth: 24GB GDDR6 at 17 Gbps remains a critical specification for large-scale AI models and real-time rendering tasks.

- Software Ecosystem: Compatibility with software written for earlier architectures such as Ada Lovelace should ease integration with CUDA, TensorRT, and other frameworks that power modern workloads.
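The yield argument in the first point can be illustrated with a deliberately simple model: if every die placed in a package must pass an independent integration step, package yield falls geometrically with die count. The 95% per-die success rate below is a made-up figure for illustration, not a reported NVIDIA number, and real packaging yields depend on many factors this sketch ignores.

```python
def package_yield(num_dies: int, per_die_success: float) -> float:
    """Probability that every die in a package integrates successfully.

    Assumes each die's integration step succeeds independently with the
    same probability, which is a simplification of real packaging flows.
    """
    if not 0.0 <= per_die_success <= 1.0:
        raise ValueError("per_die_success must be between 0 and 1")
    return per_die_success ** num_dies


# Hypothetical 95% per-die success rate, chosen only for illustration.
for dies in (2, 4):
    print(f"{dies} dies: {package_yield(dies, 0.95):.1%} of packages good")
```

With that assumed rate, roughly 90% of two-die packages would be good versus about 81% of four-die packages, which is the kind of gap that could make a dual-die part easier to supply in volume.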

Key Advanced Details

The shift to dual-die doesn’t mean abandoning innovation. Under the hood, the Rubin Ultra is expected to retain NVIDIA’s latest architectural advancements, including improved ray tracing units and AI acceleration engines. For users deeply involved in scientific computing or high-fidelity rendering, this could mean maintaining performance parity with single-chip designs while potentially offering better thermal efficiency, a critical factor in data center deployments.

Limitations and Trade-offs

The most significant question is whether the dual-die approach will limit peak compute performance. While a four-die design could theoretically offer higher throughput, real-world gains often depend on software optimization and memory subsystem efficiency. For most AI workloads, the Rubin Ultra’s adjusted architecture should deliver strong single-precision and mixed-precision performance, with less exposure to the inter-die latency issues that grow as more dies are added to a package.
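One common way to reason about when extra compute actually helps is the roofline model, in which attainable throughput is capped by either peak compute or memory bandwidth times arithmetic intensity. The sketch below uses entirely hypothetical numbers (50 and 100 TFLOP/s peaks, 0.8 TB/s of bandwidth) and holds bandwidth fixed across both configurations, which is a simplification since a design with more dies might also carry more memory.

```python
def attainable_tflops(peak_tflops: float,
                      bandwidth_tb_s: float,
                      flops_per_byte: float) -> float:
    """Roofline estimate of attainable throughput in TFLOP/s.

    bandwidth_tb_s * flops_per_byte gives the memory-bound ceiling
    (TB/s * FLOP/byte = TFLOP/s); the result is the lower of that
    ceiling and the compute peak.
    """
    memory_bound = bandwidth_tb_s * flops_per_byte
    return min(peak_tflops, memory_bound)


# Hypothetical configurations: same memory bandwidth, different compute peaks.
configs = {"dual-die": 50.0, "four-die": 100.0}
for intensity in (10.0, 200.0):  # FLOPs performed per byte moved
    for name, peak in configs.items():
        result = attainable_tflops(peak, 0.8, intensity)
        print(f"{name}, {intensity:.0f} FLOP/byte: {result:.0f} TFLOP/s")
```

In this toy setup both configurations reach the same 8 TFLOP/s at low arithmetic intensity because memory is the bottleneck, and the higher compute peak only pays off for the compute-heavy case.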

Market Positioning

The Rubin Ultra is poised to sit between NVIDIA’s mid-range RTX 40-series GPUs and its high-end data center offerings. If it lands with a competitive price point, it could become a go-to choice for researchers and enterprises looking for a balance between performance and cost. However, enthusiasts chasing the absolute limits of real-time rendering or scientific simulation may find themselves constrained by this trade-off.

What’s Next

The GPU’s final specifications, pricing, and release timeline will be critical in determining its impact. NVIDIA’s track record suggests it will leverage this design to strengthen its position in AI infrastructure, particularly for inference workloads where memory bandwidth is king. For power users, the Rubin Ultra represents a fascinating experiment: Can practicality coexist with performance without sacrificing innovation? The answer may lie in how well NVIDIA optimizes its software stack to compensate for the hardware compromises.

Advanced Considerations

- Thermal Design: A dual-die layout could improve heat distribution, reducing the need for aggressive cooling solutions in data centers.

- Memory Compression: Lower-precision data formats accelerated by NVIDIA’s Tensor Cores may reduce memory bandwidth requirements, offsetting some of the limitations of a smaller die count.

- Software Optimization: Future CUDA updates could prioritize workload distribution across dual dies, minimizing performance loss compared to single-chip alternatives.