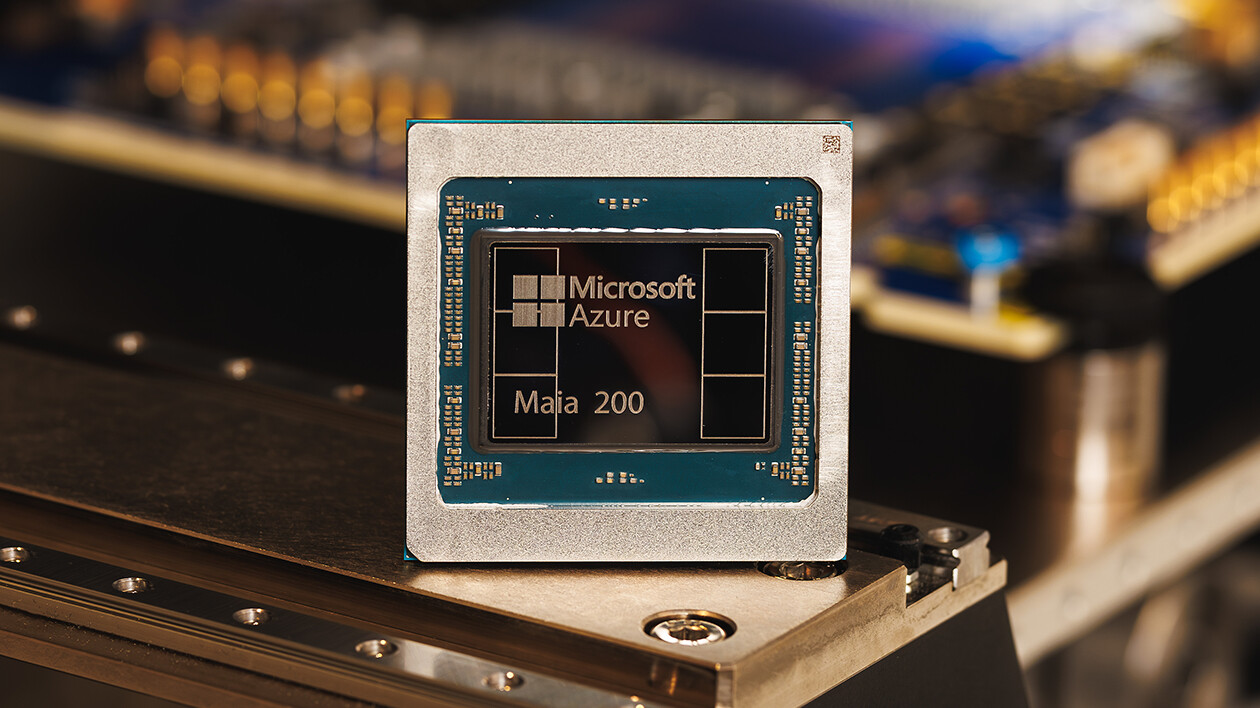

Microsoft has announced the Maia 200, a new AI accelerator designed to redefine inference economics for hyperscale workloads. Built on TSMC’s advanced 3nm process, the chip integrates 216GB of HBM3e memory and specialized tensor cores optimized for FP8 and FP4 precision, positioning it as the company’s most capable in-house silicon for AI tasks.

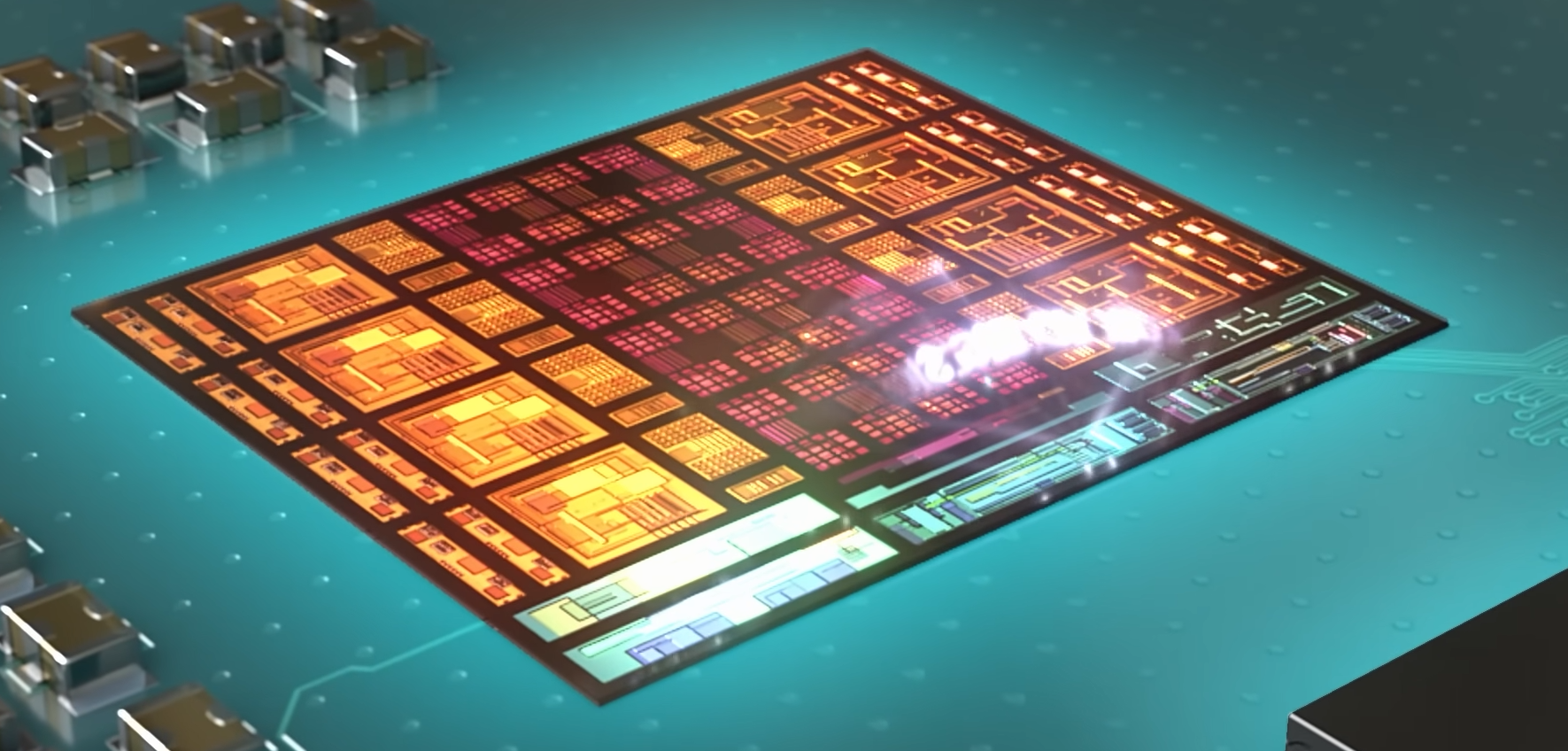

The Maia 200’s performance hinges on a combination of low-precision compute and a revamped memory subsystem. With over 10 petaFLOPS in FP4 and 5 petaFLOPS in FP8, the chip operates within a 750W TDP, balancing raw throughput with efficiency. Its 272MB of on-chip SRAM and a dedicated DMA engine reduce bottlenecks in data movement, a critical factor for large language models (LLMs) where token processing speed often lags behind compute capacity.

Key to its design is a two-tier scale-up network, leveraging standard Ethernet to connect accelerators without proprietary fabrics. Each Maia 200 exposes 2.8TB/s of bidirectional bandwidth, enabling clusters of up to 6,144 accelerators with predictable performance. This architecture simplifies deployment in Azure’s datacenters while reducing power and cost per inference operation.

- Process: TSMC 3nm

- Memory: 216GB HBM3e (7TB/s bandwidth)

- On-chip SRAM: 272MB

- Precision support: FP8/FP4 tensor cores

- Performance: >10 petaFLOPS FP4, >5 petaFLOPS FP8

- TDP: 750W

- Bandwidth: 2.8TB/s bidirectional per accelerator

- Networking: Ethernet-based scale-up fabric

- Cluster scale: Up to 6,144 accelerators

The Maia 200 is already deployed in Microsoft’s US Central datacenter near Des Moines, Iowa, with US West 3 (Phoenix, Arizona) slated for rollout. It will support Azure’s AI infrastructure, including OpenAI’s GPT-5.2 models and Microsoft 365 Copilot, while also accelerating synthetic data generation for reinforcement learning pipelines.

Developers gain access to the Maia SDK, which includes PyTorch integration, a Triton compiler, and optimized kernels. The SDK’s low-level programming language allows fine-grained control for specialized workloads, ensuring compatibility across Microsoft’s heterogeneous AI hardware.

Unlike competitors relying on custom fabrics, the Maia 200’s Ethernet-based networking reduces complexity and cost. Its liquid cooling Heat Exchanger Unit and pre-silicon validation—from chip design to datacenter deployment—cut time-to-production by half compared to prior Microsoft AI projects.

For enterprises and researchers, the Maia 200 represents a leap in inference efficiency, particularly for LLMs where precision trade-offs are critical. Its integration with Azure ensures seamless scaling, while the SDK lowers barriers for custom model optimization. With deployment underway, Microsoft’s push into first-party AI silicon underscores its commitment to reducing reliance on third-party accelerators in the long term.