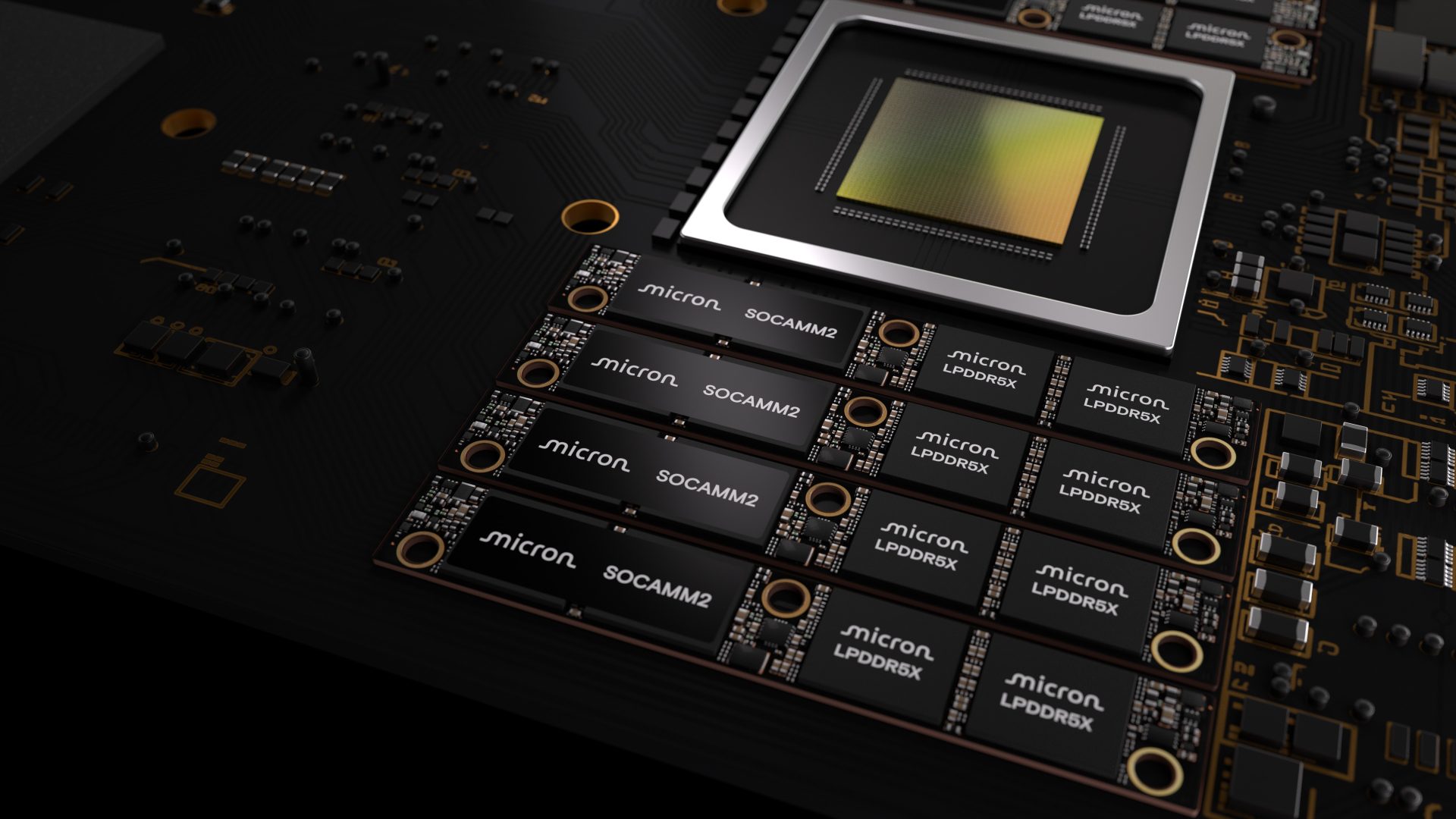

A single 256 GB memory module could soon become standard in next-generation AI servers, according to a new collaboration between Micron and NVIDIA. The partnership introduces the SOCAMM2, designed to address growing memory bottlenecks in large-scale AI deployments by combining increased capacity with optimized power efficiency.

Micron’s latest SOCAMM2 module represents a significant leap in per-module capacity, jumping from 192 GB to 256 GB. This expansion is achieved through a larger monolithic LPDRAM die—now at 32 GB per chip—allowing an 8-channel CPU to access up to 2 TB of memory. The company states that this configuration enables AI servers to handle long-context inference workloads more efficiently, with time-to-first-token (TTFT) improvements reported to be 2.3 times faster for extended contexts.

The SOCAMM2 is not just about raw capacity; it integrates a KV-cache optimization specifically tailored for agentic AI applications, where standalone CPU workloads are becoming increasingly common. While the benefits for AI infrastructure are clear, industry observers note that such high-capacity modules may strain DRAM supply chains, potentially diverting allocations from general-purpose products like GDDR7.

Performance and Competition

The 256 GB SOCAMM2 module is already being sampled to customers, with Micron showcasing it at NVIDIA’s upcoming GTC 2026 event. Early adopters, including NVIDIA’s data center CPU team, have highlighted its potential to complement next-generation AI CPUs by reducing memory bottlenecks—a critical factor as AI models grow in complexity.

However, the practical impact remains tied to demand. While the module promises lower latency and higher throughput for AI workloads, its real-world adoption will depend on how quickly enterprises scale beyond current 192 GB configurations. For now, the SOCAMM2 stands as a milestone in memory technology, but whether it becomes a standard or a niche solution remains an open question.

Key Specifications

- Capacity: 256 GB per module (up from 192 GB)

- Die size: 32 GB LPDRAM monolithic die

- Total memory per CPU: Up to 2 TB in an 8-channel configuration

- Performance: 2.3x improvement in TTFT for long-context inference

- Target workloads: Agentic AI, large-scale context processing

The collaboration between Micron and NVIDIA underscores a broader trend in AI hardware development, where memory efficiency is becoming as critical as compute power. For enterprises building or upgrading AI infrastructure, the SOCAMM2 could offer tangible performance gains—but only if the underlying systems are designed to leverage its capabilities.