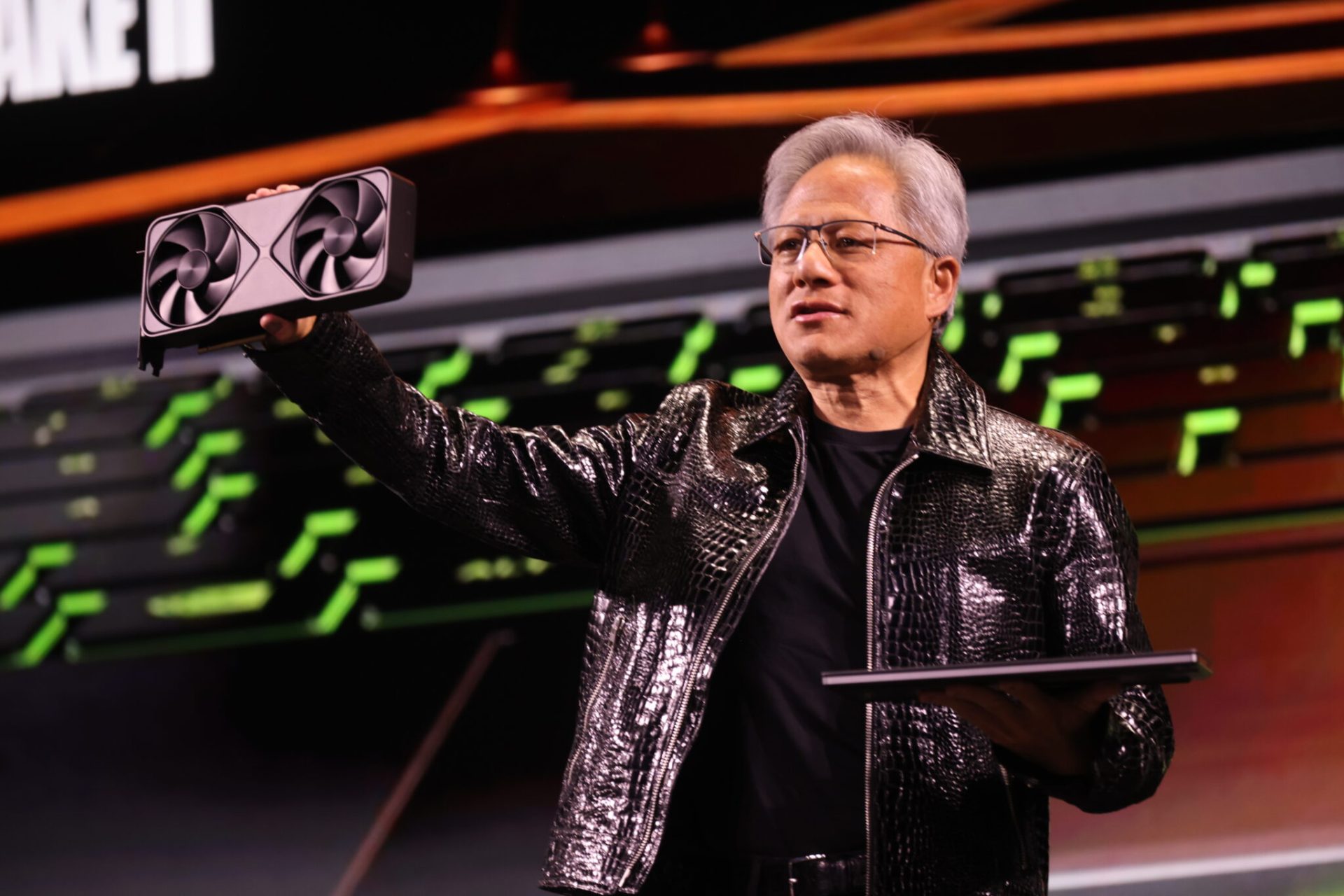

Meta and NVIDIA have announced a partnership on AI infrastructure spanning on-premises data centers, cloud deployments, and next-generation hardware. The collaboration, which includes the deployment of millions of NVIDIA Blackwell and Rubin GPUs alongside Arm-based Grace and Vera CPUs, marks a significant shift in how large-scale AI workloads are managed.

The partnership extends beyond hardware, integrating NVIDIA’s Spectrum-X Ethernet networking platform to enhance data center efficiency and support Meta’s AI-driven personalization and recommendation systems. This move underscores a broader industry trend toward unified, high-performance computing environments that balance speed, scalability, and energy consumption.

The Scale of the Collaboration

At the heart of the partnership is Meta’s ambitious AI roadmap, which demands infrastructure capable of handling both training and inference at very large scale. NVIDIA’s Blackwell and Rubin GPUs will form the backbone of Meta’s data centers, while Grace CPUs, which are already in production, will deliver the performance-per-watt improvements that large-scale AI workloads depend on. NVIDIA’s Vera CPUs, slated for deployment in 2027, will further expand Meta’s energy-efficient compute footprint.

The collaboration also brings in NVIDIA’s GB300-based systems, creating a unified architecture that simplifies operations across Meta’s on-premises and cloud environments. The adoption of Spectrum-X Ethernet networking is meant to deliver low-latency, high-throughput performance while improving both operational efficiency and power usage.

Privacy and Performance in AI

Meta’s adoption of NVIDIA Confidential Computing represents another key innovation within the partnership. This technology enables AI-powered capabilities on WhatsApp while ensuring user data remains encrypted and secure. The collaboration aims to extend these privacy-enhanced AI capabilities across Meta’s broader platform, addressing growing concerns around data confidentiality in large-scale AI deployments.

Why This Matters for the Industry

The partnership between Meta and NVIDIA sets a new standard for AI infrastructure, combining cutting-edge hardware with deep software integration. For Meta, this means access to a full-stack platform optimized for its unique workloads, from recommendation systems to generative AI models. For NVIDIA, it validates its strategy of offering a cohesive ecosystem of CPUs, GPUs, networking, and software that can scale with the demands of enterprise AI.

Beyond technical advancements, this collaboration highlights the growing importance of partnerships in driving AI innovation. By combining NVIDIA’s expertise in accelerated computing with Meta’s scale in AI research, the two companies are positioning themselves at the forefront of AI development. The implications extend to the broader tech industry, where similar alliances may become essential for companies seeking to deploy AI at scale while maintaining performance and efficiency.

The partnership also signals a shift toward more specialized, high-performance computing environments. As AI models grow in complexity, the need for optimized hardware and software stacks becomes increasingly critical. Meta and NVIDIA’s collaboration serves as a blueprint for how enterprises can future-proof their infrastructure against the evolving demands of AI.

With this alliance, Meta and NVIDIA are building more than data centers; they are laying the groundwork for the next era of AI-driven innovation.