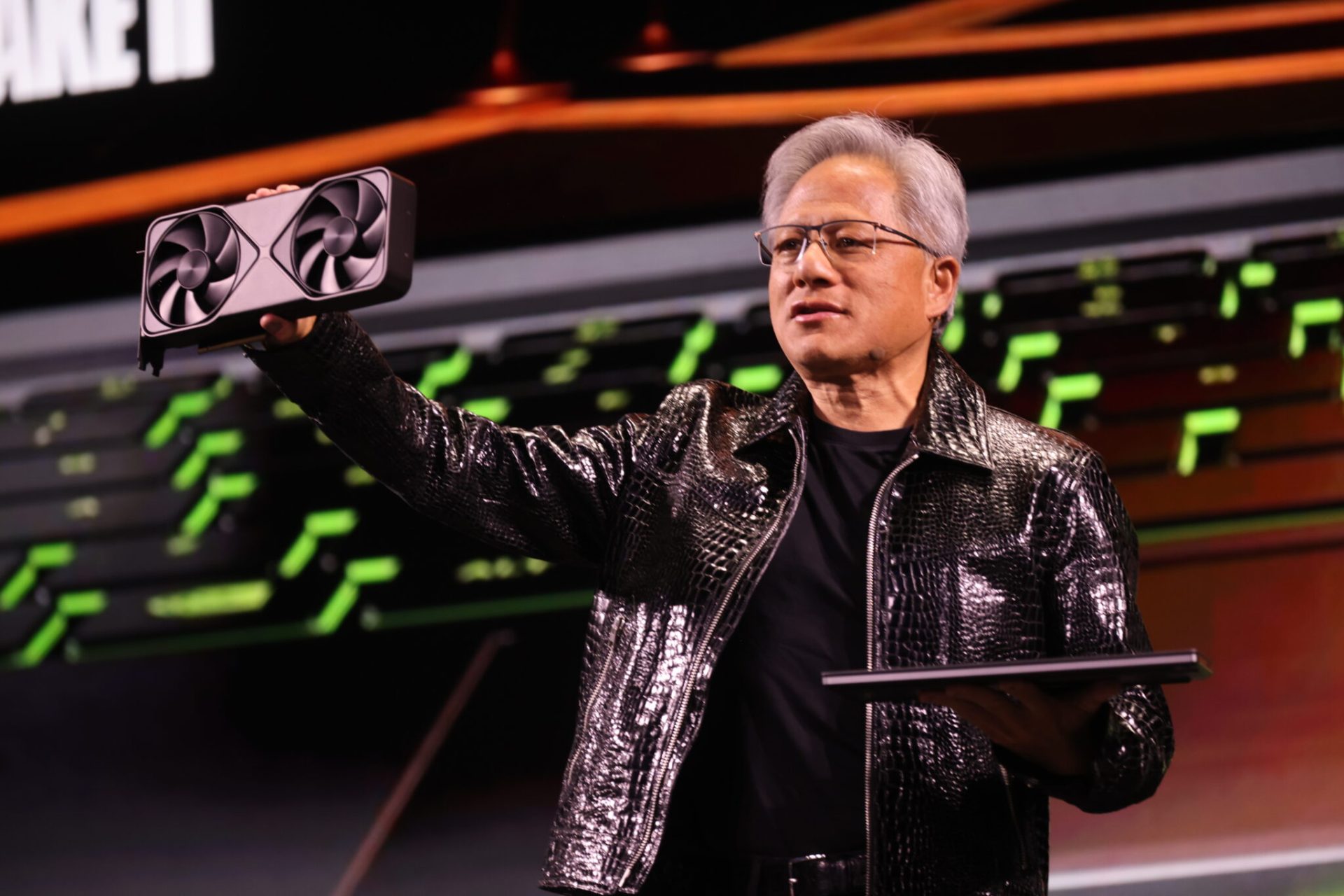

In the evolving landscape of AI hardware, Groq has introduced a new generation of inference chips that challenge the established performance leaders. Groq positions these chips as setting a new efficiency bar for large-scale deployments, claiming significant advantages over current industry leaders, particularly NVIDIA’s Blackwell architecture.

The competition in AI acceleration is no longer just about raw performance. Cost per million tokens has become a critical factor for data center operators. Groq’s latest chips target this metric directly: according to Groq, they deliver twice the throughput at half the cost per million tokens of NVIDIA’s Blackwell-based reference systems. This shift forces a reevaluation of how organizations balance speed and financial sustainability in their AI infrastructure.
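Cost per million tokens follows directly from a system’s hourly operating cost and its sustained throughput. The minimal Python sketch below assumes a fixed hourly cost per system; the function name `cost_per_million_tokens` and every dollar and throughput figure are hypothetical illustrations, not Groq or NVIDIA data. It shows one way the two claims relate: doubling throughput at an unchanged hourly cost halves the cost per million tokens.

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Dollar cost to generate one million tokens at a steady throughput.

    Assumes the system runs at a constant hourly cost (hardware amortization,
    power, hosting) and sustains the given throughput for the whole hour.
    """
    tokens_per_hour = tokens_per_second * 3600
    # Multiply first, then divide, to keep the arithmetic exact for round inputs.
    return hourly_cost_usd * 1_000_000 / tokens_per_hour


# Hypothetical figures for illustration only, not vendor data.
baseline = cost_per_million_tokens(hourly_cost_usd=36.0, tokens_per_second=10_000)
doubled = cost_per_million_tokens(hourly_cost_usd=36.0, tokens_per_second=20_000)

print(f"{baseline:.2f} vs {doubled:.2f} USD per million tokens")
# prints "1.00 vs 0.50 USD per million tokens"
```

In practice the hourly cost of two different systems will rarely be identical, so a real comparison needs each vendor’s actual pricing plugged into the same formula.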

At the core of Groq’s approach is a departure from traditional GPU-centric designs. Where NVIDIA has focused on scaling up single-chip performance, Groq’s architecture prioritizes parallelism at the system level. The intended result is more tokens processed per second without a proportional increase in power draw or thermal output. For high-throughput workloads, such as real-time language model serving or large-scale recommendation engines, the difference should be most visible.

- Performance: Groq reports 5x faster inference than Blackwell on key benchmarks, alongside sustained throughput gains.

- Cost: Roughly half the cost per million tokens, aimed squarely at data center budgets.

- Efficiency: Lower power consumption per token processed, improving thermal management in dense deployments.
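The efficiency claim can be made concrete with simple arithmetic: a watt is one joule per second, so dividing a system’s power draw by its tokens per second gives energy per token. The sketch below uses a hypothetical `SystemProfile` class and made-up wattage and throughput values; it is not based on published Groq or Blackwell measurements, and it ignores cooling and facility overhead.

```python
from dataclasses import dataclass


@dataclass
class SystemProfile:
    """Hypothetical inference system described by throughput and power draw."""

    name: str
    tokens_per_second: float
    power_watts: float

    def joules_per_token(self) -> float:
        # Watts are joules per second; dividing by tokens per second
        # leaves joules per token.
        return self.power_watts / self.tokens_per_second


# Illustrative values only: same power draw, twice the throughput.
baseline = SystemProfile("baseline", tokens_per_second=10_000, power_watts=1_200)
candidate = SystemProfile("candidate", tokens_per_second=20_000, power_watts=1_200)

for system in (baseline, candidate):
    print(f"{system.name}: {system.joules_per_token():.3f} J/token")
# prints:
# baseline: 0.120 J/token
# candidate: 0.060 J/token
```

Lower energy per token also means less heat to remove per token served, which is why the efficiency and thermal points in the list above go together.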

The implications for administrators are substantial. If the reported figures hold, workloads that previously required multiple Blackwell-based nodes could be handled with fewer Groq chips, simplifying cluster design and reducing operational complexity. This is particularly relevant for enterprises moving from prototyping to production, where scalability and cost predictability are paramount.
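A rough capacity-planning calculation shows how per-node throughput drives cluster size. The sketch below is a minimal illustration, assuming a hypothetical `nodes_required` helper, a hypothetical 20% headroom reserve for traffic spikes, and made-up throughput targets; none of these values come from Groq or NVIDIA.

```python
import math


def nodes_required(
    target_tokens_per_second: float,
    per_node_tokens_per_second: float,
    headroom: float = 0.2,
) -> int:
    """Smallest node count that meets a throughput target.

    `headroom` is an assumed fraction of each node's capacity held back
    for load spikes; it must be in the range [0, 1).
    """
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in [0, 1)")
    usable_per_node = per_node_tokens_per_second * (1 - headroom)
    # Round up: a fractional node still means provisioning a whole one.
    return math.ceil(target_tokens_per_second / usable_per_node)


# Hypothetical workload of 100,000 tokens/s on nodes of two throughput levels.
print(nodes_required(100_000, 10_000), nodes_required(100_000, 20_000))
# prints "13 7"
```

Because node counts round up, doubling per-node throughput does not always halve the cluster exactly; here it drops from 13 nodes to 7.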

However, the full picture is still emerging. While Groq has demonstrated strong performance in controlled environments, real-world deployment data, especially for mixed-workload scenarios, remains limited. Organizations evaluating this shift will need to weigh these early results against long-term compatibility with their existing software stacks and the maturity of ecosystem support.

For now, the reported results point to a clear advantage in speed and cost efficiency. The open question is how quickly Groq can solidify its position in the market, particularly as NVIDIA continues to iterate on Blackwell. Availability timelines and pricing details should provide further clarity, but the underlying tradeoff between raw chip performance and system-level optimization is already reshaping the conversation around AI hardware.