Meta and NVIDIA have announced a partnership on large-scale AI infrastructure. The collaboration spans on-premises data centers, cloud deployments, and next-generation hardware, with Meta committing to deploy millions of NVIDIA Blackwell and Rubin GPUs alongside Arm-based Grace and Vera CPUs. The agreement also integrates NVIDIA’s Spectrum-X Ethernet switches into Meta’s Facebook Open Switching System (FBOSS), creating a unified architecture for training and inference workloads.

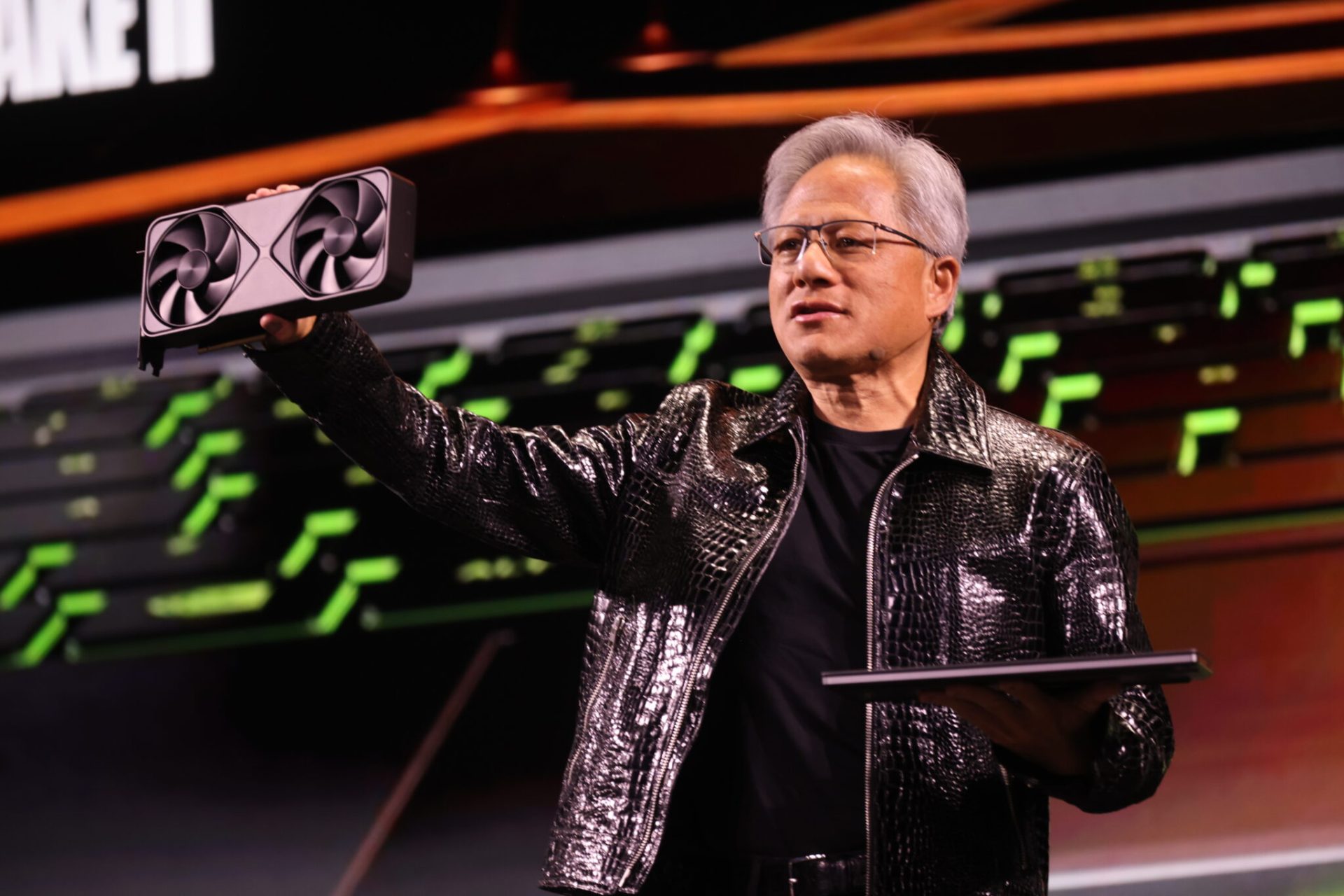

The partnership is framed as a response to Meta’s long-term AI ambitions, particularly in personalization and recommendation systems. NVIDIA CEO Jensen Huang emphasized the scale of the challenge, saying that no one deploys AI at Meta’s scale, combining frontier research with industrial-grade infrastructure to serve billions of users. For Meta CEO Mark Zuckerberg, the alliance represents a step toward personal superintelligence, drawing on NVIDIA’s Vera Rubin platform to optimize clusters for global AI deployment.

What changes for users

- Meta’s infrastructure upgrades will underpin AI-driven features across its platforms, including WhatsApp, where NVIDIA Confidential Computing keeps user data protected while it is being processed. This privacy-focused approach is set to expand beyond WhatsApp to other Meta services.

- The deployment of Blackwell and Rubin GPUs—paired with Grace CPUs—will enable Meta to run more efficient, high-performance AI models. The Grace CPUs, in particular, mark the first large-scale adoption of NVIDIA’s Arm-based architecture, promising significant improvements in performance per watt.

- A unified architecture across on-premises and cloud environments will simplify operations for Meta’s engineering teams, while Spectrum-X networking provides low-latency connectivity and high network utilization for AI-scale workloads.

What changes for admins and engineers

For Meta’s data center administrators, the partnership introduces a co-designed ecosystem. NVIDIA’s GB300-based systems will form the backbone of Meta’s AI infrastructure, with software optimizations tailored for Meta’s workloads. The collaboration extends to NVIDIA Vera CPUs, slated for large-scale deployment in 2027, further enhancing energy efficiency.

Engineering teams will benefit from deep integration between NVIDIA’s full-stack platform and Meta’s production models. This includes optimizations for state-of-the-art AI frameworks, ensuring higher performance and scalability for Meta’s core workloads. The partnership also addresses networking challenges with Spectrum-X, which provides predictable, low-latency performance critical for AI training and inference.

The immediate focus is on deploying Blackwell and Rubin GPUs in Meta’s hyperscale data centers, with Grace CPUs already in production. The Vera CPU rollout in 2027 will mark the next phase, potentially setting new standards for Arm-based AI compute. Meanwhile, NVIDIA’s Confidential Computing for WhatsApp will serve as a blueprint for privacy-enhanced AI across Meta’s portfolio.

This partnership underscores a broader trend: as AI demand surges, infrastructure alliances between hyperscalers and chipmakers are becoming the norm. For Meta, the collaboration is a critical enabler of its AI roadmap—one that could influence how other companies approach large-scale AI deployment.