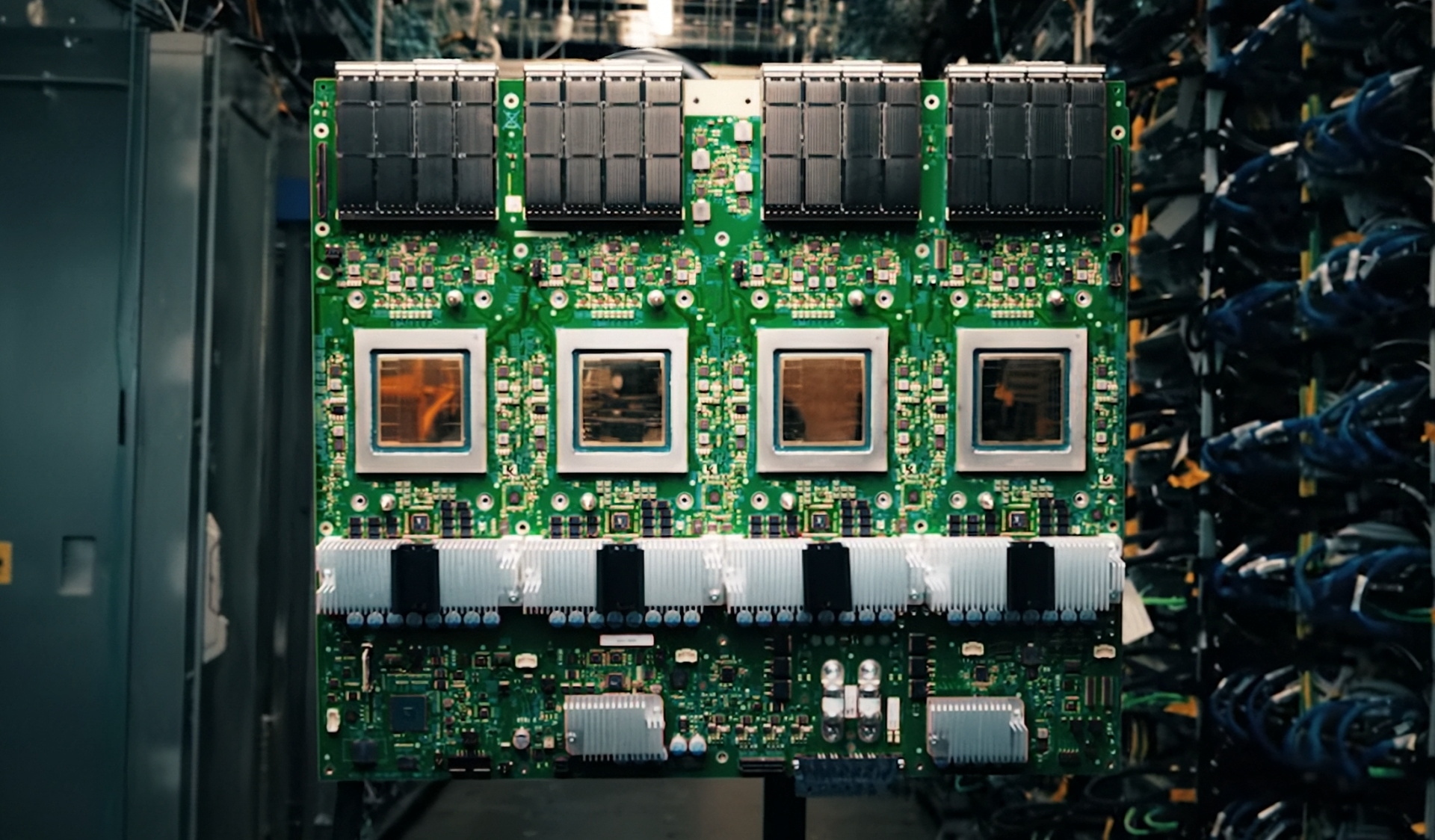

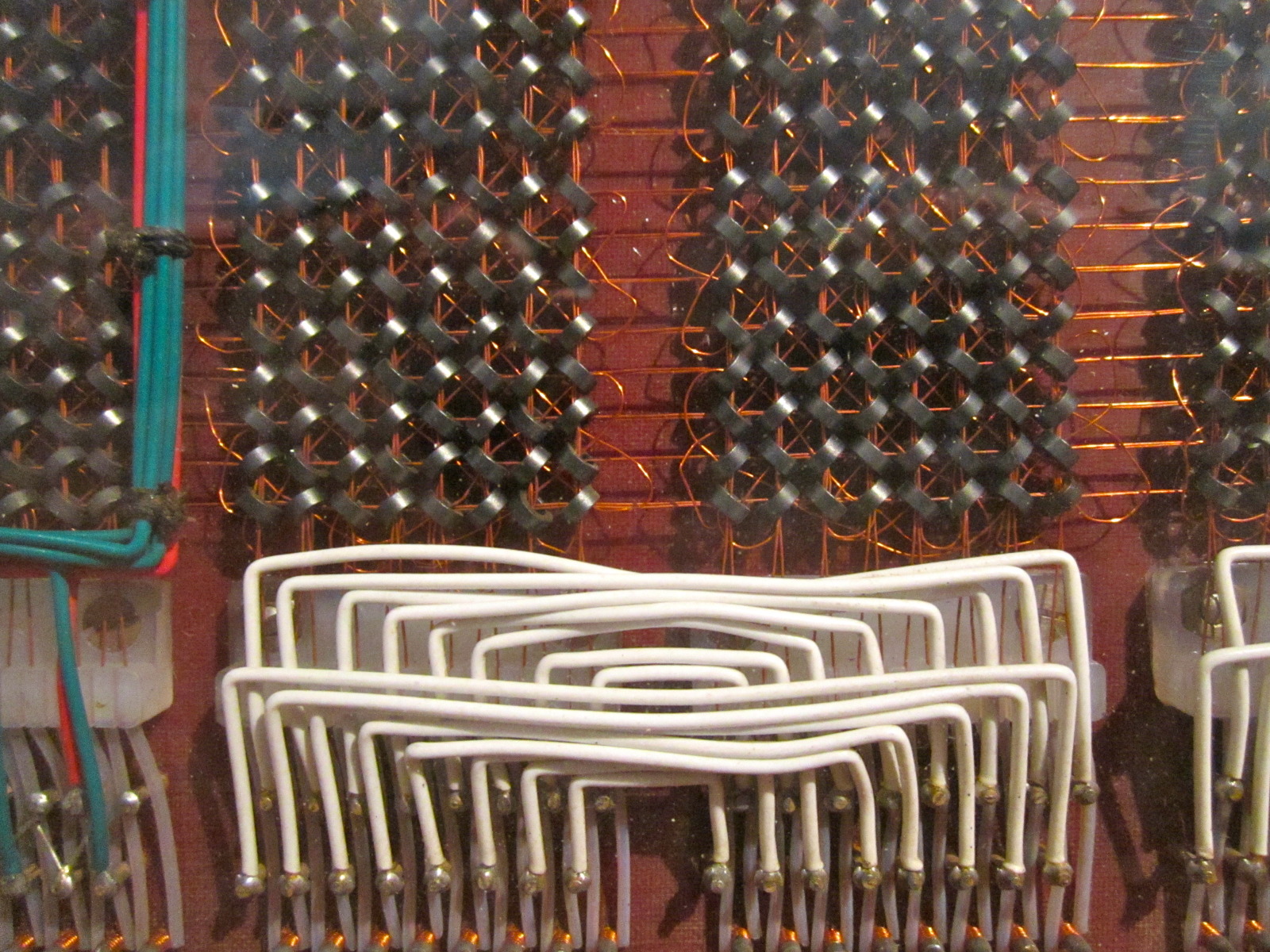

Enterprise AI infrastructure is undergoing a significant transformation, driven by the adoption of liquid cooling. The technology, long associated with high-end gaming rigs and specialized supercomputers, is now becoming a standard feature in mainstream server and workstation designs. Its primary advantage is the ability to hold temperatures stable under sustained, intense computational workloads, particularly those involving AI.

Unlike traditional air-cooled systems, liquid cooling loops can sustain high-performance computing without thermal throttling. In some enterprise-grade configurations, the switch has been shown to reduce power consumption by up to 15% while maintaining computational throughput. The result is longer hardware lifespans and lower energy costs, both of which matter to organizations running large-scale AI training or inference tasks.

Who Benefits from Liquid Cooling?

The primary beneficiaries of this technology are enterprises with high-density server deployments or workstations that rely heavily on GPUs. For these users, liquid cooling is no longer a luxury but a necessity, offering stability in environments where thermal management was previously a bottleneck. However, the premium cost and the need for custom hardware make it less viable for smaller operations and individual users, for whom air cooling remains sufficient.

Assessing the Impact

The question now is whether these efficiency gains will translate into tangible savings at scale. Data centers are already significant energy consumers, and even modest reductions in power consumption could yield substantial long-term benefits. However, adoption hinges on how seamlessly liquid cooling systems integrate into existing infrastructure, a factor that remains untested for many organizations.
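To make the scale argument concrete, here is a rough back-of-the-envelope sketch of how a percentage reduction in power draw turns into annual energy and cost figures. The function name, the 500 kW average load, and the 0.10 per kWh electricity price are all hypothetical values chosen for illustration; only the 15% figure comes from the upper bound cited above, and real deployments will likely see less.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_savings(avg_power_kw, reduction_fraction, price_per_kwh):
    """Return (kWh saved per year, cost saved per year) for a constant average load.

    Simplifying assumption: the load runs at avg_power_kw around the clock,
    and the reduction applies uniformly to that load.
    """
    if not 0.0 <= reduction_fraction <= 1.0:
        raise ValueError("reduction_fraction must be between 0 and 1")
    saved_kwh = avg_power_kw * reduction_fraction * HOURS_PER_YEAR
    return saved_kwh, saved_kwh * price_per_kwh


# Hypothetical inputs: 500 kW average load, the 15% upper bound,
# and an assumed electricity price of 0.10 per kWh.
saved_kwh, saved_cost = annual_energy_savings(500.0, 0.15, 0.10)
print(f"Energy saved: {saved_kwh:,.0f} kWh/year")
print(f"Cost saved:   {saved_cost:,.0f} per year")
```

Under these assumed inputs the estimate comes to roughly 657,000 kWh per year. The sketch leaves out the energy used by the cooling system itself and any integration costs, so it is best read as an upper-bound estimate rather than a forecast.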

A New Priority for Enterprise AI

If liquid cooling becomes a standard rather than an exception, it signals a broader shift in enterprise AI infrastructure: thermal management is no longer an afterthought but a core consideration. The technology has been proven in high-performance computing for years, but its role in mainstream data centers is still unfolding. Whether this transition will accelerate depends less on performance benchmarks and more on real-world reliability over time.

For enterprises evaluating these solutions, the upfront investment must be weighed against potential long-term savings. The balance between cost and efficiency remains uncertain, but one thing is clear: liquid cooling is no longer an experimental feature. It is a defining characteristic of next-generation AI infrastructure.