A new memory architecture is quietly reshaping the AI hardware landscape, and NVIDIA’s early lead may soon face competition. SOCAMM (Small Outline Compression Attached Memory Module), a memory standard tailored for next-generation AI systems, is no longer exclusive to NVIDIA. AMD and Qualcomm are now exploring its integration, signaling a shift toward more flexible, high-capacity memory solutions in data centers and AI clusters.

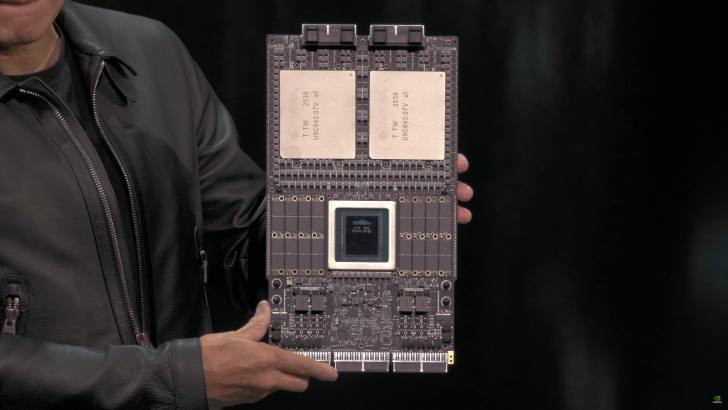

The stakes are high. As AI models grow more complex, memory bottlenecks have become a critical limiting factor. SOCAMM addresses this with a scalable, modular design: LPDDR5X-based modules that sit alongside HBM (High Bandwidth Memory) to balance performance and cost. Unlike conventional LPDDR memory, which is soldered onto the board, SOCAMM modules are designed to be replaced or upgraded independently, reducing hardware obsolescence and lowering operational costs over time.

Why SOCAMM Matters for AI

SOCAMM’s appeal lies in its dual role: it serves as a high-capacity, power-efficient tier for large working sets, and it takes less latency-sensitive data off faster but pricier HBM. For AI applications, this means millions of tokens of context can stay resident in memory without consuming scarce HBM capacity, a necessity for systems handling real-time agentic AI workloads.
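
To give a rough sense of why token counts in the millions strain memory capacity, the sketch below estimates the size of a transformer key-value (KV) cache. The model dimensions in `HYPOTHETICAL_MODEL` are illustrative assumptions, not figures from NVIDIA, AMD, or Qualcomm, and the formula covers only the KV cache, not weights or activations.

```python
def kv_cache_bytes_per_token(num_layers, num_kv_heads, head_dim, bytes_per_element):
    """Bytes of KV cache stored for a single token.

    The factor of 2 accounts for one key vector and one value vector per layer.
    """
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_element


def kv_cache_gib(num_tokens, **model):
    """Total KV cache size in GiB for num_tokens tokens held in memory."""
    return num_tokens * kv_cache_bytes_per_token(**model) / 2**30


# Assumed, illustrative dimensions (not taken from any vendor specification).
HYPOTHETICAL_MODEL = dict(
    num_layers=80,
    num_kv_heads=8,
    head_dim=128,
    bytes_per_element=2,  # 16-bit values
)

for tokens in (128_000, 1_000_000, 4_000_000):
    size = kv_cache_gib(tokens, **HYPOTHETICAL_MODEL)
    print(f"{tokens:>9,} tokens -> {size:,.1f} GiB")
```

Under these assumptions each token costs 320 KiB, so a million tokens already needs roughly 305 GiB, which is the kind of footprint a high-capacity tier is meant to absorb.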

NVIDIA has already committed to SOCAMM 2 for its upcoming Vera Rubin AI clusters, but the technology’s potential extends beyond its original design. AMD and Qualcomm are reportedly adopting a different module layout, a square configuration with dual DRAM rows, aimed at improving power management. Integrating a power management IC (PMIC) directly onto the module would remove the need for complex power circuitry on the motherboard, simplifying manufacturing and potentially boosting efficiency.

A Memory Standard in the Making

The adoption of SOCAMM by major chipmakers suggests a broader industry move toward standardized, upgradable memory solutions. While HBM remains the gold standard for high-speed AI processing, its high cost and limited capacity make it impractical for certain workloads. SOCAMM fills this gap by offering terabytes of addressable memory per CPU, sitting between HBM and persistent storage in the memory hierarchy.

However, SOCAMM isn’t without tradeoffs. Its throughput lags behind HBM, making it better suited to holding bulk data than to feeding high-speed computation. The technology’s true value lies in its flexibility: systems can dynamically allocate memory between SOCAMM and HBM based on workload demands, optimizing both performance and energy use.
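
One way to picture that allocation is a simple greedy policy: keep the most frequently accessed data per gigabyte in HBM and spill the rest to SOCAMM. The sketch below is a toy illustration only. The `Buffer` type, the `place_buffers` function, the access-rate metric, and the capacity and size figures are all hypothetical, and real systems may use very different placement policies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Buffer:
    name: str
    size_gb: float            # must be positive
    accesses_per_sec: float   # hypothetical "hotness" metric


def place_buffers(buffers, hbm_capacity_gb):
    """Greedy two-tier placement.

    Buffers are ranked by access rate per GB. Each one goes to HBM if it
    still fits; otherwise it is assigned to the SOCAMM tier.
    """
    placement = {}
    hbm_free = hbm_capacity_gb
    ranked = sorted(
        buffers,
        key=lambda b: b.accesses_per_sec / b.size_gb,
        reverse=True,
    )
    for buf in ranked:
        if buf.size_gb <= hbm_free:
            placement[buf.name] = "HBM"
            hbm_free -= buf.size_gb
        else:
            placement[buf.name] = "SOCAMM"
    return placement


# Illustrative workload; all sizes and rates are made-up assumptions.
workload = [
    Buffer("model_weights", size_gb=140, accesses_per_sec=1000),
    Buffer("active_kv_cache", size_gb=40, accesses_per_sec=800),
    Buffer("idle_kv_cache", size_gb=300, accesses_per_sec=5),
]
plan = place_buffers(workload, hbm_capacity_gb=192)
print(plan)
```

With these made-up numbers the weights and the active cache fit in the assumed 192 GB of HBM, while the large, rarely touched cache lands in SOCAMM. A production policy would also need to handle migration as access patterns change, which this sketch ignores.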

What’s Next for SOCAMM?

With NVIDIA ramping up SOCAMM deployment, aiming for up to 800,000 modules this year, the pressure is on competitors to catch up. AMD and Qualcomm’s involvement hints at a future where SOCAMM becomes a mainstream choice for AI infrastructure, particularly in edge computing and personal AI supercomputers. If that happens, it could reduce reliance on proprietary memory solutions and foster a more open ecosystem for AI hardware.

For now, SOCAMM remains a niche but rapidly evolving standard. Its adoption by AMD and Qualcomm underscores a critical trend: as AI demands outpace traditional memory architectures, innovation in scalable, modular solutions will define the next generation of computing.