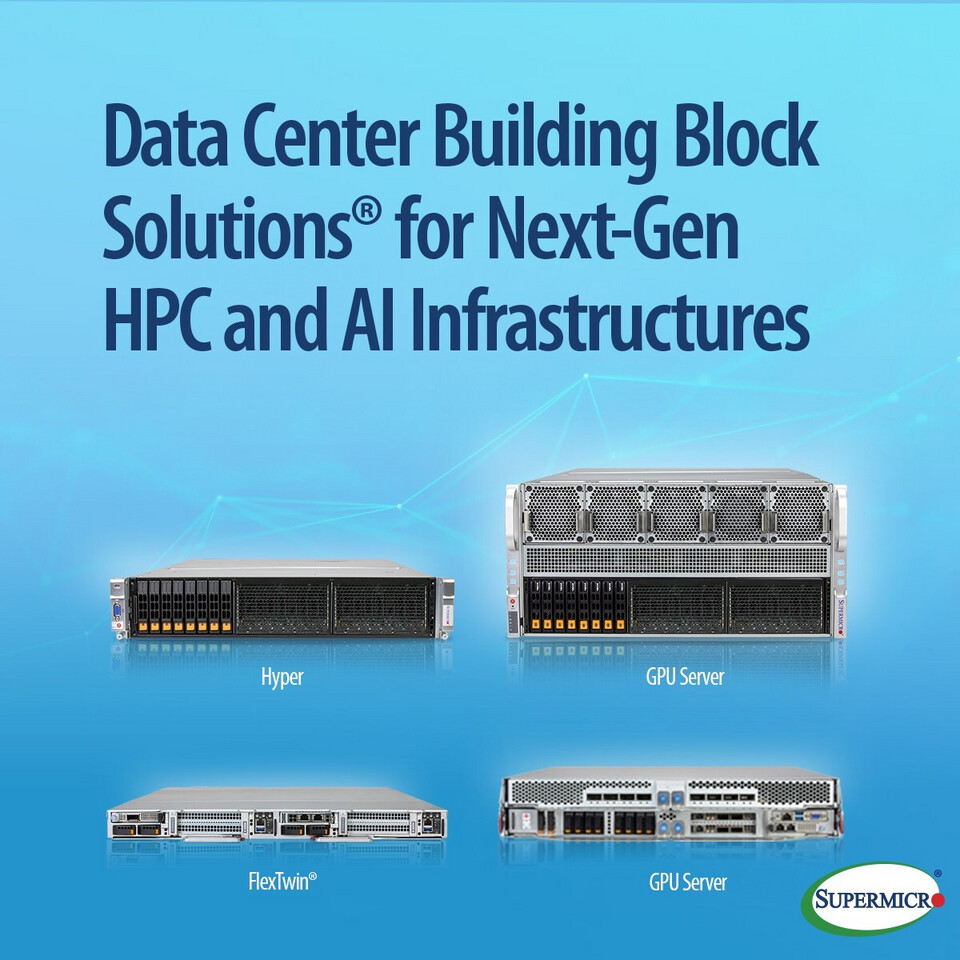

Data center operators now have a modular way to build high-performance AI infrastructure that balances compute density with energy savings. Supermicro has expanded its Data Center Building Block Solutions (DCBBS) portfolio with new Open Compute Project (OCP) ORv3-compliant racks and Arm-based server platforms designed for large-scale AI deployments.

At the core of these systems are two design choices: liquid cooling, which handles thermal demands while keeping form factors compact, and energy-efficient Arm Neoverse architectures, which aim to reduce power consumption without sacrificing performance. That's the upside. Here's the catch: the new platforms require careful integration with existing AI ecosystems, particularly when Arm-based CPUs are paired with NVIDIA GPUs or mixed with x86-based systems.

What It Plugs Into

The OCP ORv3 racks are built for high-density AI workloads and support configurations with dual Intel Xeon 6 6700-series processors and the NVIDIA HGX B300 platform. The 2U GPU system draws power from a 1400 A busbar and supports DC-SCM (the OCP Datacenter-ready Secure Control Module), which moves management and security functions onto a standardized module.
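To put the 1400 A busbar rating in power terms, the ideal DC relation P = V × I is enough for a rough estimate. The sketch below assumes a nominal 48 V busbar voltage, which is typical for ORv3 but is not stated in the announcement, and ignores conversion losses and derating, so treat the result as an upper-bound estimate rather than a rated figure.

```python
# Rough busbar capacity estimate using P = V * I.
# Assumption: 48 V nominal DC busbar voltage (not stated in the announcement).
BUSBAR_CURRENT_A = 1400   # busbar current rating quoted for the 2U GPU system
NOMINAL_VOLTAGE_V = 48.0  # assumed nominal ORv3 busbar voltage


def busbar_capacity_kw(current_a: float, voltage_v: float) -> float:
    """Ideal DC power in kilowatts; ignores losses, derating, and redundancy."""
    return current_a * voltage_v / 1000.0


if __name__ == "__main__":
    capacity = busbar_capacity_kw(BUSBAR_CURRENT_A, NOMINAL_VOLTAGE_V)
    print(f"{capacity:.1f} kW")  # 67.2 kW
```

Under that assumption the busbar tops out at roughly 67 kW; a different nominal voltage scales the result linearly.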

Key Details

- A new 2U GPU system fits into 21-inch OCP ORv3 racks and supports the NVIDIA HGX B300 platform, with up to eight GPUs linked by fifth-generation NVLink for accelerated AI training and inference.

- The FlexTwin system combines two independent servers in a single 1-OU chassis and uses Supermicro's DLC-2 direct liquid cooling to capture up to 90% of the heat the servers generate. Designed for HPC and AI workloads, it supports both Intel and AMD CPUs and is intended to stay compatible with future processor generations.

- Two new Arm-based systems, one in a compact 2U form factor and another in an expanded 5U configuration, feature the recently announced Arm AGI CPU. These platforms offer high core density (64, 128, or 136 Arm Neoverse V3 cores) and support up to 6 TB of DDR5 memory across 24 DIMM slots.
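The memory ceiling on the Arm systems implies a specific DIMM size. The sketch below spreads the quoted 6 TB evenly across all 24 slots, assuming every slot is populated with identical modules and that "TB" follows the binary convention used for memory capacities (1 TB = 1024 GB); neither assumption comes from the announcement.

```python
# Per-DIMM capacity implied by the quoted memory maximum.
# Assumptions: all slots populated with identical DIMMs; 1 TB = 1024 GB.
TOTAL_MEMORY_TB = 6  # maximum DDR5 capacity quoted for the Arm systems
DIMM_SLOTS = 24      # DIMM slot count quoted for the Arm systems
GB_PER_TB = 1024     # binary convention for memory capacities


def per_dimm_capacity_gb(total_tb: int, slots: int) -> int:
    """Capacity each DIMM needs if total_tb is split evenly across all slots."""
    total_gb = total_tb * GB_PER_TB
    if total_gb % slots:
        raise ValueError("capacity does not divide evenly across slots")
    return total_gb // slots


if __name__ == "__main__":
    print(per_dimm_capacity_gb(TOTAL_MEMORY_TB, DIMM_SLOTS), "GB per DIMM")  # 256 GB per DIMM
```

In other words, reaching the 6 TB maximum would likely mean filling every slot with 256 GB DDR5 modules.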

Liquid cooling is a central feature of these systems, addressing the thermal challenges of high-density AI workloads. The FlexTwin, for example, integrates liquid cooling for its CPUs, memory, and VRMs into a single solution, reducing the need for bulky air-cooling setups while keeping performance stable under load.
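Liquid cooling does not reduce the heat a server produces; it moves most of that heat into the coolant loop, leaving a smaller share for room air handling. The sketch below makes that split explicit. The 2,000 W node power is a hypothetical value chosen for illustration, and the 0.90 capture fraction mirrors the "up to 90%" figure quoted for FlexTwin.

```python
def split_heat_load(node_power_w: float, liquid_capture: float) -> tuple[float, float]:
    """Split a node's heat into the share removed by liquid and the share left for air."""
    if not 0.0 <= liquid_capture <= 1.0:
        raise ValueError("liquid_capture must be between 0 and 1")
    to_liquid = node_power_w * liquid_capture
    to_air = node_power_w - to_liquid  # remainder still handled by fans and room cooling
    return to_liquid, to_air


if __name__ == "__main__":
    # Hypothetical 2,000 W node; 0.90 reflects the "up to 90%" capture figure.
    liquid, air = split_heat_load(2000.0, 0.90)
    print(f"liquid: {liquid:.0f} W, air: {air:.0f} W")  # liquid: 1800 W, air: 200 W
```

The total stays at 2,000 W either way; what changes is how much of it the facility's air-cooling plant has to move.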

Who Benefits

The primary advantage lies in scalability and power efficiency. Arm Neoverse-based platforms are designed to deliver strong performance per watt, which makes them attractive to cloud providers and enterprise data centers looking to cut operating costs without slowing AI workloads. Adoption, however, will depend on how well these systems integrate with existing software stacks and GPU accelerators.

Market Impact

Supermicro's move reinforces the trend toward open, modular data center solutions, particularly in the OCP ecosystem. The company now offers more than 20 OCP-inspired systems, giving operators flexibility to mix and match components based on workload requirements. This approach aligns with broader industry shifts toward energy-efficient AI infrastructure, where liquid cooling and heterogeneous architectures are becoming increasingly common.

For gamers and AI enthusiasts, the practical takeaway is that these systems form the backbone of next-generation data centers but won't directly affect consumer hardware. The focus remains on enterprise-grade scalability, with Supermicro positioning itself as a key partner in building the foundation for agentic AI and high-performance computing.

What to watch: Availability timelines for the Arm-based platforms and further integration details with NVIDIA’s GPU ecosystem.