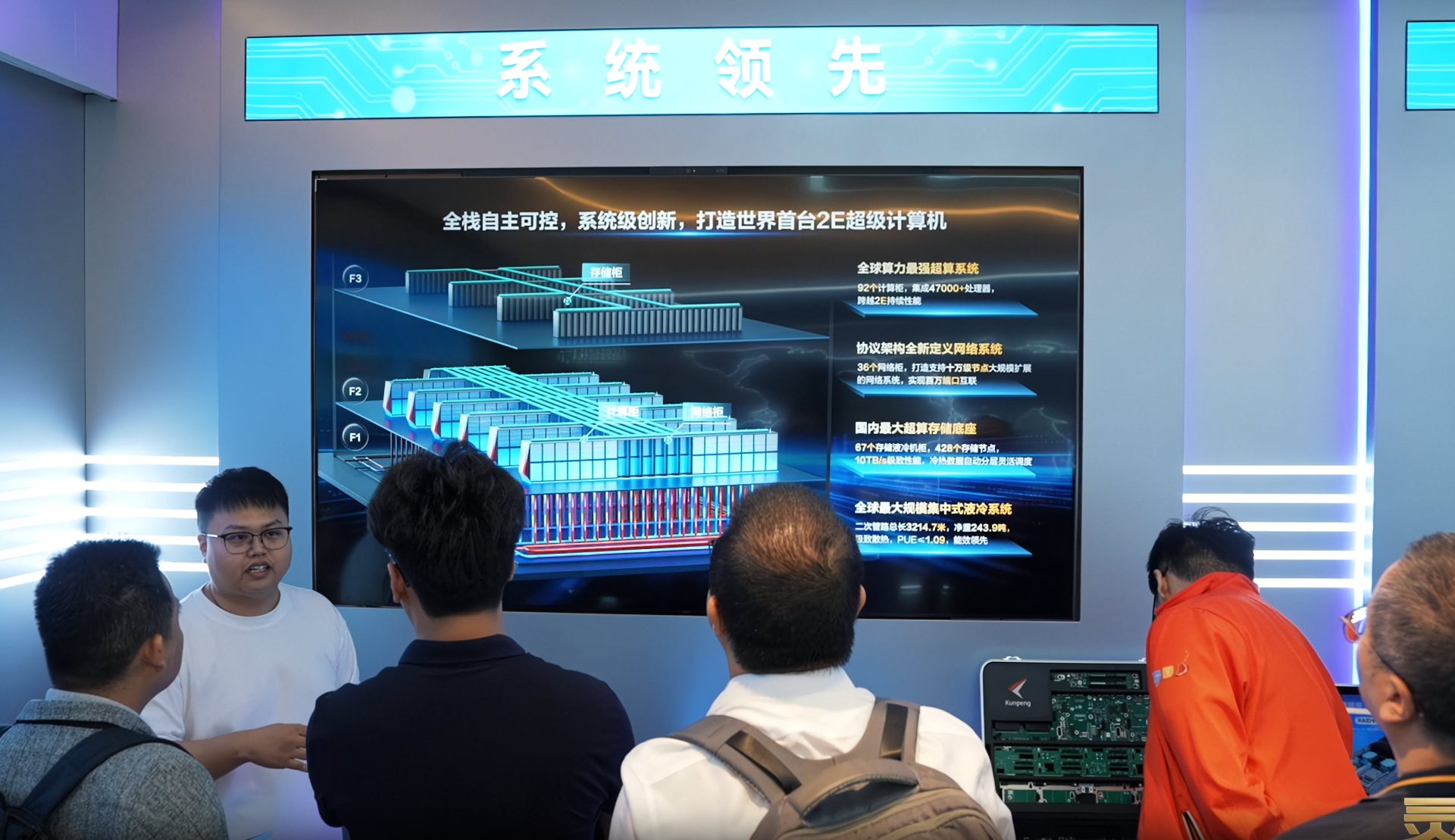

Supermicro has broken ground on what will become its largest U.S. facility—a sprawling 32.8-acre complex in Silicon Valley designed to accelerate the delivery of next-generation AI infrastructure. The new Data Center Building Block Solutions (DCBBS) campus, located near the company’s San Jose headquarters, spans over 714,000 square feet and will centralize advanced system design, manufacturing, testing, and distribution operations. This expansion brings Supermicro’s total Bay Area footprint to nearly four million square feet, positioning it as a key player in domestic AI innovation.

The facility is part of a broader strategy to strengthen U.S.-based production capacity, particularly for the increasingly complex compute workloads driving AI adoption. While details on specific hardware advancements remain under wraps, the move aligns with industry trends toward modular, rack-scale solutions that prioritize energy efficiency and rapid deployment, both of which matter most at large scale.

Key specs of the new campus include:

- Location: San Jose, California (expanding Supermicro’s fourth Bay Area site)

- Size: 714,000 square feet across 32.8 acres

- Focus: AI infrastructure development, domestic manufacturing, and global distribution of DCBBS solutions

- Jobs: Supports hundreds of new roles in engineering, testing, and operations

The expansion is expected to bolster Supermicro’s ability to meet growing demand for high-performance computing (HPC) infrastructure, particularly in AI data centers. The company has emphasized its commitment to domestic innovation, suggesting that the campus will play a pivotal role in reducing dependency on overseas supply chains—a concern echoed by other major server vendors amid tightening global semiconductor availability.

What This Means for AI Data Centers

The new facility is not just an expansion; it’s a strategic pivot toward end-to-end, modular AI infrastructure. Supermicro’s DCBBS approach—ranging from individual GPUs and networking switches to complete rack solutions—aims to streamline deployment while improving energy efficiency. This could translate into faster Time-to-Online (TTO) for data center operators, a critical metric in AI workload scalability.

However, the real-world impact remains tied to how quickly Supermicro can integrate these capabilities into its product roadmap. While the company has not announced new hardware specifications yet, industry observers note that the focus on low-power, high-capacity memory (such as the recently mass-produced 192GB SOCAMM2 modules, which are built on LPDDR5X rather than DDR4) suggests a continued emphasis on performance-per-watt optimization, a cornerstone of AI data center design.

Looking ahead, Supermicro’s expansion signals a shift toward more localized, U.S.-based production for AI infrastructure. Whether this translates into tangible improvements in cost or efficiency for customers remains to be seen, but the move underscores the company’s intent to compete head-on in an increasingly crowded field. For IT teams evaluating upgrade paths, the new campus could mean faster access to validated, rack-scale solutions—though adoption will depend on how aggressively Supermicro doubles down on energy-efficient designs in its next-generation products.