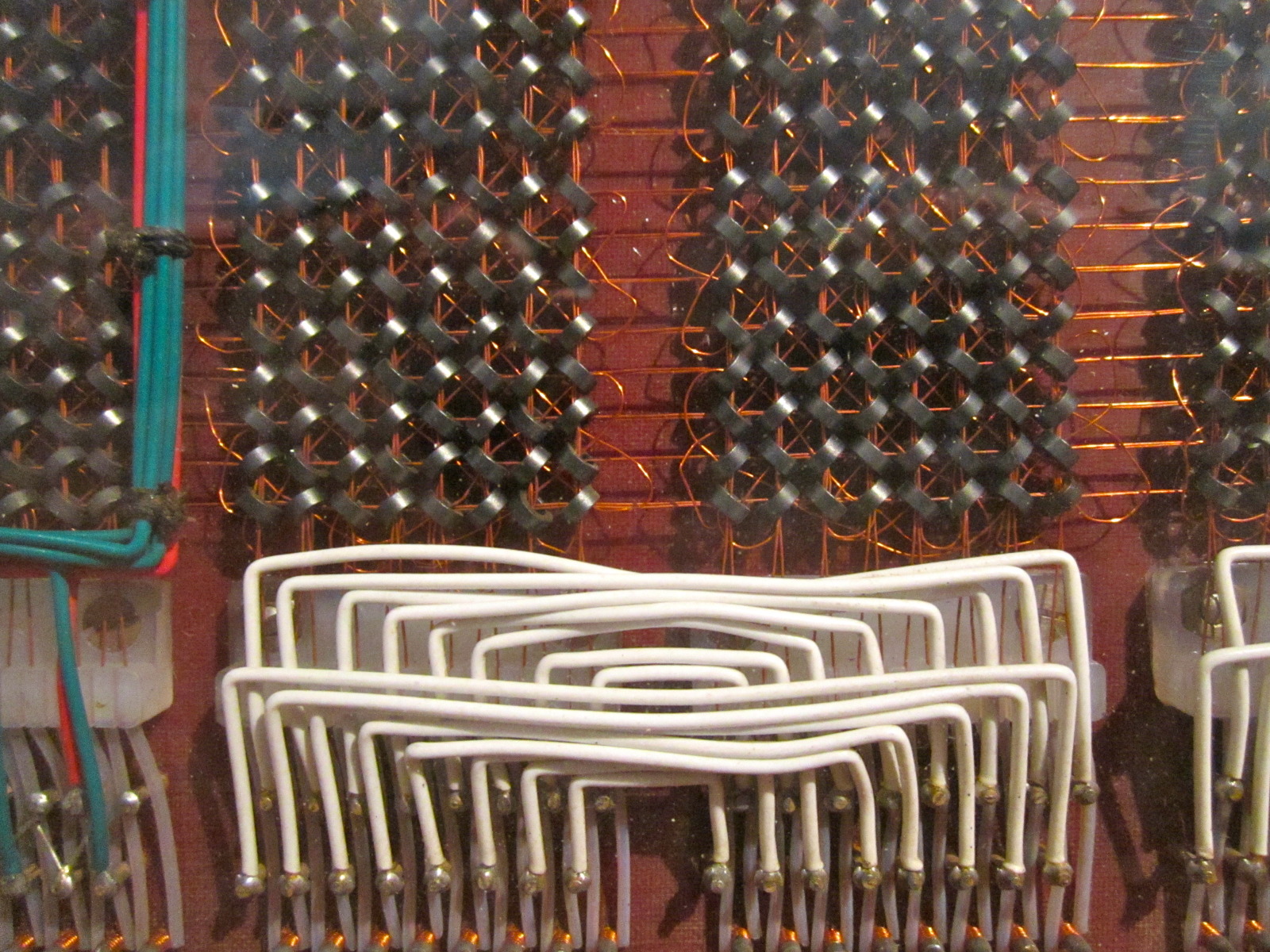

The AI hardware landscape may be about to shift. Qualcomm and AMD are reportedly preparing to integrate SOCAMM2 memory into their AI product lines, following the approach NVIDIA took with its Vera CPU. The move points toward modular, high-bandwidth memory architectures designed for the demands of modern AI workloads.

At the heart of this change is the need for memory that can keep pace with AI accelerators. NVIDIA's implementation, LPDDR5X on SOCAMM modules delivering up to 1.2 TB/s of bandwidth in configurations as large as 1.5 TB, has proven effective at reducing latency because entire models can be held in memory rather than transferred from slower SSDs. Qualcomm and AMD now aim to replicate this efficiency, each with its own variations.
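To put the bandwidth figure in perspective, the short Python sketch below estimates how long it takes to move a working set that fills the 1.5 TB maximum configuration, once at the 1.2 TB/s quoted for NVIDIA's SOCAMM setup and once from a single SSD. The SSD rate of 14 GB/s is an assumption (roughly a fast NVMe drive), not a figure from the reporting, and the estimate counts only sustained bandwidth, ignoring access latency, caching, and interconnect overheads. Treat it as an order-of-magnitude comparison, not a benchmark.

```python
# Back-of-envelope transfer times, assuming peak sustained bandwidth
# and decimal units (1 TB = 1000 GB). SSD_GBPS is a hypothetical value
# for a fast NVMe drive; it does not come from the article.

SOCAMM_GBPS = 1200.0  # NVIDIA Vera with SOCAMM: up to 1.2 TB/s (per the article)
SSD_GBPS = 14.0       # assumption: sequential read rate of one fast NVMe SSD
MODEL_GB = 1500.0     # working set filling the 1.5 TB maximum configuration


def transfer_seconds(size_gb: float, bandwidth_gbps: float) -> float:
    """Return the time in seconds to move size_gb at bandwidth_gbps (GB/s)."""
    if bandwidth_gbps <= 0:
        raise ValueError("bandwidth must be positive")
    return size_gb / bandwidth_gbps


mem_s = transfer_seconds(MODEL_GB, SOCAMM_GBPS)
ssd_s = transfer_seconds(MODEL_GB, SSD_GBPS)
print(f"from memory: {mem_s:.2f} s, from SSD: {ssd_s:.1f} s, ratio ~{ssd_s / mem_s:.0f}x")
```

Under these assumptions the script prints about 1.25 s for memory versus about 107 s for the SSD, a gap of roughly 86x. Real systems will land elsewhere, but the scale of the difference is why keeping whole models resident in memory matters.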

For AMD, the transition could begin with its Instinct MI accelerators paired with EPYC CPUs; the company may either adapt existing systems or develop entirely new architectures to accommodate SOCAMM2. Qualcomm's AI200 and AI250 inference accelerators, which already support up to 768 GB of LPDDR5, could likewise benefit from SOCAMM2's modular expansion. Because the memory would no longer need to be soldered onto a custom PCB, manufacturers could scale capacity simply by adding or removing modules.

A Memory Revolution for AI Systems

The adoption of SOCAMM2 represents a departure from traditional memory integration. Instead of being locked into rigid, soldered configurations, AI systems could be upgraded by adding or removing memory modules as needed, without redesigning the entire platform. This flexibility is particularly valuable in data centers, where AI workloads demand both speed and scalability.

While NVIDIA's Vera CPU surrounds its processor with multiple SOCAMM modules, Qualcomm's and AMD's implementations may differ. AMD's focus on EPYC and Instinct MI suggests a hybrid approach, potentially pairing SOCAMM2 with the HBM stacks already used on its accelerators. Qualcomm, on the other hand, may prioritize inference-focused systems, where modularity aligns with cost efficiency.

Industry Reactions and Long-Term Implications

Early discussions among hardware developers point to a growing view that SOCAMM2 could become a standard for next-generation AI systems. The shift away from soldered memory is not only about performance but also about adaptability: data centers with evolving AI needs may no longer require costly hardware overhauls to scale memory capacity.

Yet challenges remain. The transition to SOCAMM2 will depend on memory manufacturers' ability to produce modules at scale, particularly as demand for high-capacity memory such as DDR6 and LPDDR5X continues to rise. With NVIDIA already dominating the AI accelerator market, Qualcomm's and AMD's adoption of SOCAMM2 could intensify competition, but only if the technology delivers on its promise of flexibility and speed.

The implications extend beyond enterprise AI. If SOCAMM2 proves viable for consumer-grade AI hardware, it could influence everything from workstations to edge devices. For now, however, the focus remains on data centers, where the demand for scalable, high-performance memory is most urgent.

Availability of Qualcomm's and AMD's SOCAMM2-based systems remains unconfirmed, but leaks suggest a push toward modular AI architectures in 2026 and beyond. As the industry races to meet AI's growing demand for memory bandwidth, SOCAMM2 may redefine how data moves through these systems and how they scale.