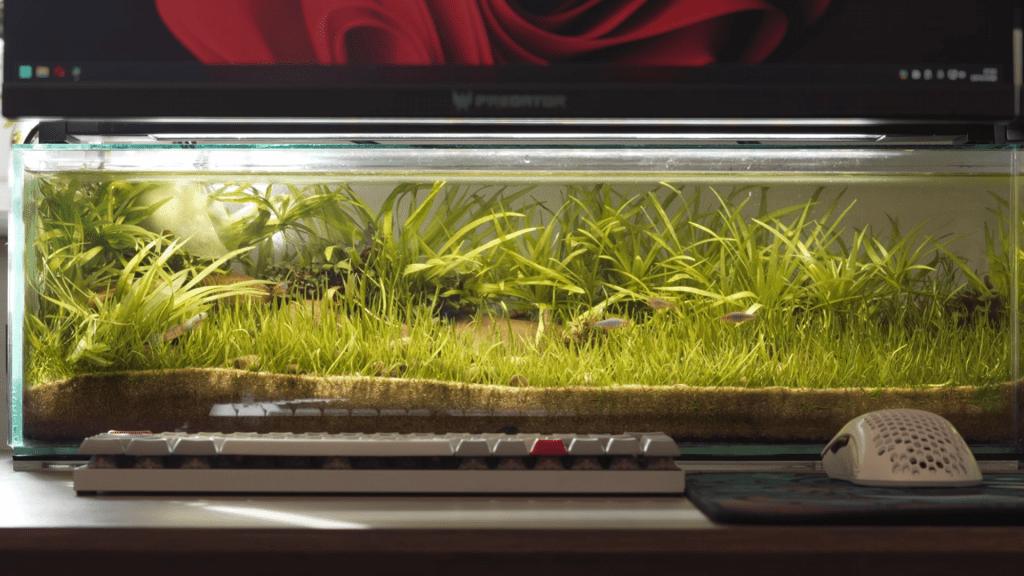

Imagine a system that acts before commands are given: a server processing synthetic data streams with minimal fan noise, running at peak efficiency without strain. This isn't just a benchmark; it's a preview of how future AI tools might function under real-world demands.

The current generation of AI assistants is reactive: it executes tasks when explicitly directed and adjusts schedules on request. The next leap is AI that anticipates needs instead of waiting for direction. These systems monitor context, identify inefficiencies, and intervene automatically. This shift requires not only advanced algorithms but also hardware capable of sustaining high-intensity workloads without degradation.

The Assumption: Effortless Automation

Users might expect proactive AI to integrate seamlessly into workflows, reducing manual intervention to near zero while maintaining flawless accuracy. The promise is of systems that preemptively offload tasks, suggest optimizations, and manage resources without user oversight. Early implementations are encouraging, with some systems reducing idle time by up to 30% compared to reactive counterparts. However, these gains come with trade-offs, particularly in thermal management and long-term stability.

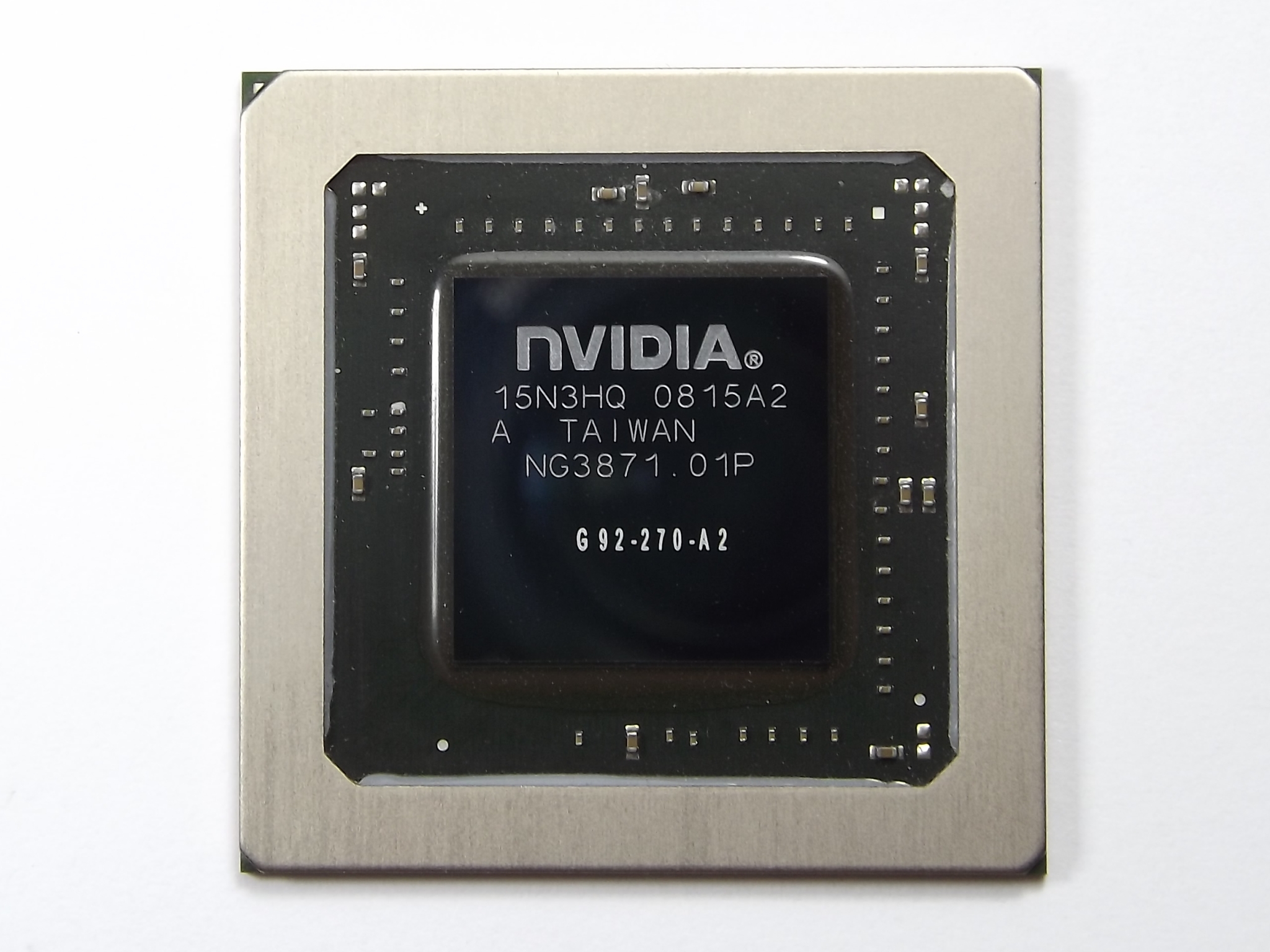

The Challenge: Heat and Durability

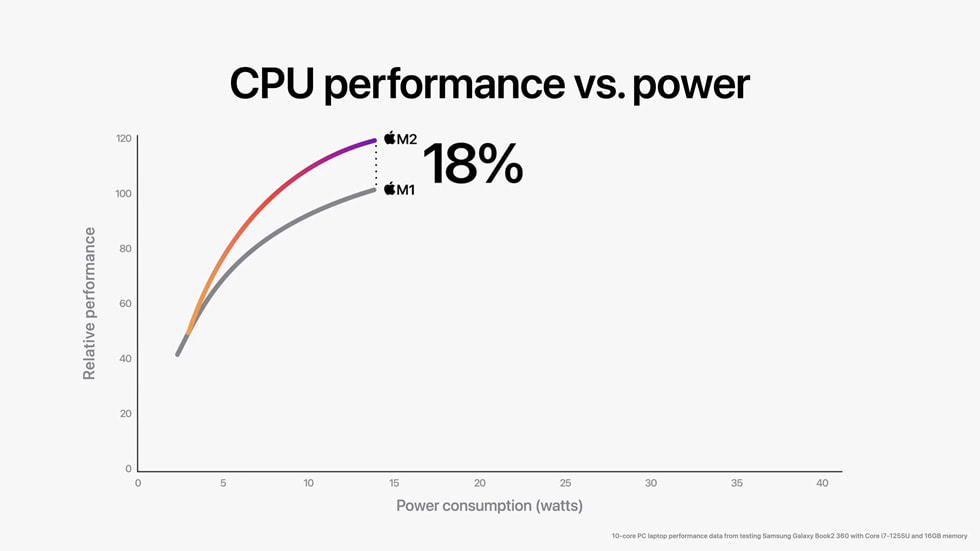

Proactive AI isn't just about smarter software; it demands hardware built for continuous high-intensity workloads. Benchmarks reveal that systems running proactive agents experience temperature increases of 5 to 8°C under sustained load, often pushing configurations to the limits of their thermal envelopes. This creates a delicate balance between performance and heat dissipation, which could limit real-world adoption if not addressed.

There's also the challenge of maintaining accuracy over time. Reactive AI relies on clear user intent; proactive AI must infer intent from ambiguous or partial data. Early tests suggest a trade-off between how often the system acts (aggressiveness) and how accurately it predicts needs (precision). Users who prefer control may find proactive agents intrusive, while those in fast-paced environments could benefit significantly from automation.
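One way to picture the aggressiveness and precision trade-off is a confidence threshold: the agent acts only when its predicted confidence clears the bar. The sketch below is illustrative only. The function name `act_rate_and_precision`, the `(confidence, was_needed)` log format, and the sample values are assumptions made for this example, not measurements or an API from any real system.

```python
from typing import List, Optional, Tuple

# Hypothetical log entry: (agent's confidence, whether the action was actually needed).
Prediction = Tuple[float, bool]


def act_rate_and_precision(
    predictions: List[Prediction], threshold: float
) -> Tuple[float, Optional[float]]:
    """Return (fraction of cases acted on, precision among those actions).

    Precision is None when the agent never acts, since it is undefined there.
    """
    acted = [needed for conf, needed in predictions if conf >= threshold]
    if not acted:
        return 0.0, None
    act_rate = len(acted) / len(predictions)   # aggressiveness
    precision = sum(acted) / len(acted)        # share of actions that were needed
    return act_rate, precision


if __name__ == "__main__":
    # Made-up history for illustration only.
    history = [(0.95, True), (0.9, True), (0.7, True),
               (0.6, False), (0.4, False), (0.3, True)]
    for t in (0.5, 0.8):
        print(t, act_rate_and_precision(history, t))
```

On this made-up history, raising the threshold from 0.5 to 0.8 makes the agent act less often but with fewer unwanted interventions. A user who prefers control would sit toward the high-threshold end; a fast-paced environment might tolerate a lower one.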

Transforming Data Workloads

The most immediate benefits are likely to appear in data-intensive workflows, such as research, development, and large-scale AI training. A proactive agent could automatically balance workloads across multi-node clusters, preemptively cache frequently accessed datasets in faster memory tiers like HBM, or dynamically adjust cooling fan curves to extend runtime in edge deployments. The potential reduction in cognitive load for developers and data scientists is substantial, since much of today's manual resource management could be handed off to the agent.
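To make the workload-balancing idea concrete, here is a minimal sketch of a thermally aware placement policy: each job goes to the least-loaded node that still has capacity and enough thermal headroom. Everything in it is hypothetical, including the `Node` fields, the `pick_node` and `place_jobs` helpers, and the 8°C headroom default (chosen to match the upper end of the temperature rise mentioned earlier). A real scheduler would also model how temperature changes as load is added, which this sketch does not.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Node:
    name: str
    load: float        # current utilization, 0.0 to 1.0
    temp_c: float      # current temperature in Celsius
    throttle_c: float  # temperature at which throttling begins

    def headroom_c(self) -> float:
        """Degrees Celsius left before the node starts to throttle."""
        return self.throttle_c - self.temp_c


def pick_node(nodes: List[Node], job_load: float,
              min_headroom_c: float = 8.0) -> Optional[Node]:
    """Least-loaded node that can fit the job and has enough thermal headroom."""
    candidates = [
        n for n in nodes
        if n.load + job_load <= 1.0 and n.headroom_c() >= min_headroom_c
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda n: n.load)


def place_jobs(nodes: List[Node],
               jobs: Dict[str, float]) -> Dict[str, Optional[str]]:
    """Greedy placement, largest job first. Unplaceable jobs map to None."""
    placement: Dict[str, Optional[str]] = {}
    for job, load in sorted(jobs.items(), key=lambda kv: kv[1], reverse=True):
        node = pick_node(nodes, load)
        if node is None:
            placement[job] = None  # defer instead of risking throttling
        else:
            node.load += load      # note: temperature is not updated here
            placement[job] = node.name
    return placement


if __name__ == "__main__":
    cluster = [
        Node("a", load=0.5, temp_c=70.0, throttle_c=85.0),
        Node("b", load=0.25, temp_c=80.0, throttle_c=85.0),  # only 5°C headroom
        Node("c", load=0.75, temp_c=60.0, throttle_c=85.0),
    ]
    print(place_jobs(cluster, {"train": 0.5, "etl": 0.25}))
```

In the sample cluster, node `b` is the least loaded but is skipped because it sits too close to its throttle point. Deferring a job when no node qualifies is one possible design choice; an aggressive agent might instead place it and accept the throttling risk.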

However, this efficiency comes with a critical requirement: hardware must sustain high throughput without thermal throttling. That balance has not yet been proven at scale, which poses a significant hurdle to widespread adoption. Early adopters may see prototypes within the next 12 to 18 months, but mainstream use will depend on whether software stacks mature enough and whether hardware vendors design systems with sufficient thermal headroom.

The Future of Proactive AI

The future of proactive AI depends less on raw capability than on reliability when the stakes are high. The transition from reactive to proactive systems represents a fundamental shift in how users interact with technology, but its success will be measured by performance under pressure and the ability to adapt without compromising stability.