NVIDIA has introduced a new open multimodal model designed to streamline AI agent workflows by combining vision, audio, and language processing in a single system. Because Nemotron 3 Nano Omni handles all three modalities itself, agents no longer need to chain separate models. NVIDIA says this reduces latency and improves context retention during task execution.

The model builds on NVIDIA's existing Nemotron architecture and adds optimizations aimed specifically at multimodal tasks. It processes vision, audio, and language inputs simultaneously. This could significantly improve the performance of AI agents in real-world applications that involve several input types at once.

Key Specifications

- Model: Nemotron 3 Nano Omni (multimodal variant of Nemotron)

- Input Types: Vision, audio, and language (unified processing)

- Efficiency Claims: Up to 9x more efficient than using separate models on certain tasks, according to NVIDIA

- Use Cases: AI agents requiring multimodal context awareness

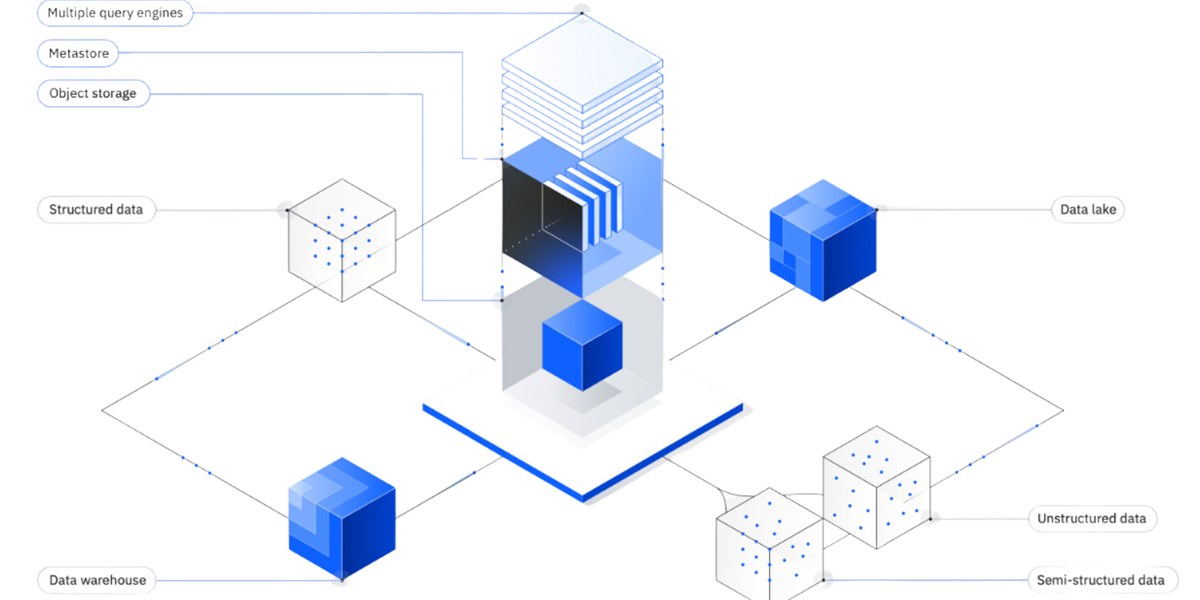

The model's efficiency gains come from handling multiple input types without handing data off between specialized sub-models. This is particularly useful when AI agents must process visual, auditory, and textual inputs simultaneously, as in robotics or smart-environment applications.

Performance Implications

The unified approach could lead to faster decision-making in AI systems, as there's no delay associated with passing data between separate vision, audio, and language models. This is a notable advancement for AI agents operating in complex environments where real-time processing is critical. However, the actual performance benefits will depend on how well the model handles the specific requirements of different applications.

While NVIDIA has highlighted the efficiency improvements, details about deployment requirements, such as hardware specifications or power consumption, have not been released. These factors will be crucial for developers looking to integrate the model into their systems.

The Nemotron 3 Nano Omni represents a significant step toward more cohesive AI agent architectures. By unifying these capabilities, NVIDIA aims to address a longstanding challenge in multimodal AI development: the inefficiency and context loss that come with using separate models. Whether this approach will become the industry standard remains to be seen, but it opens new possibilities for how AI agents can be designed and deployed.