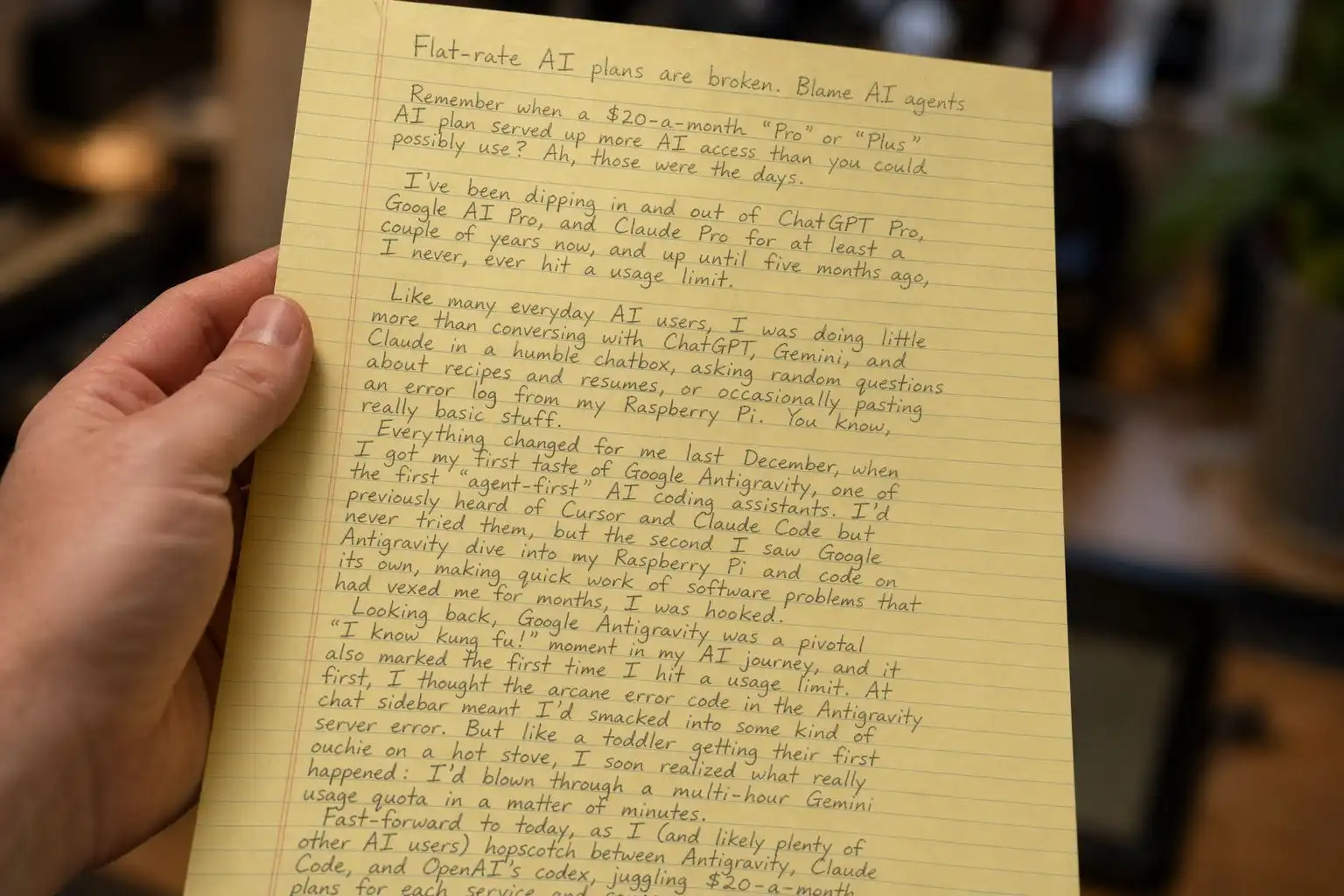

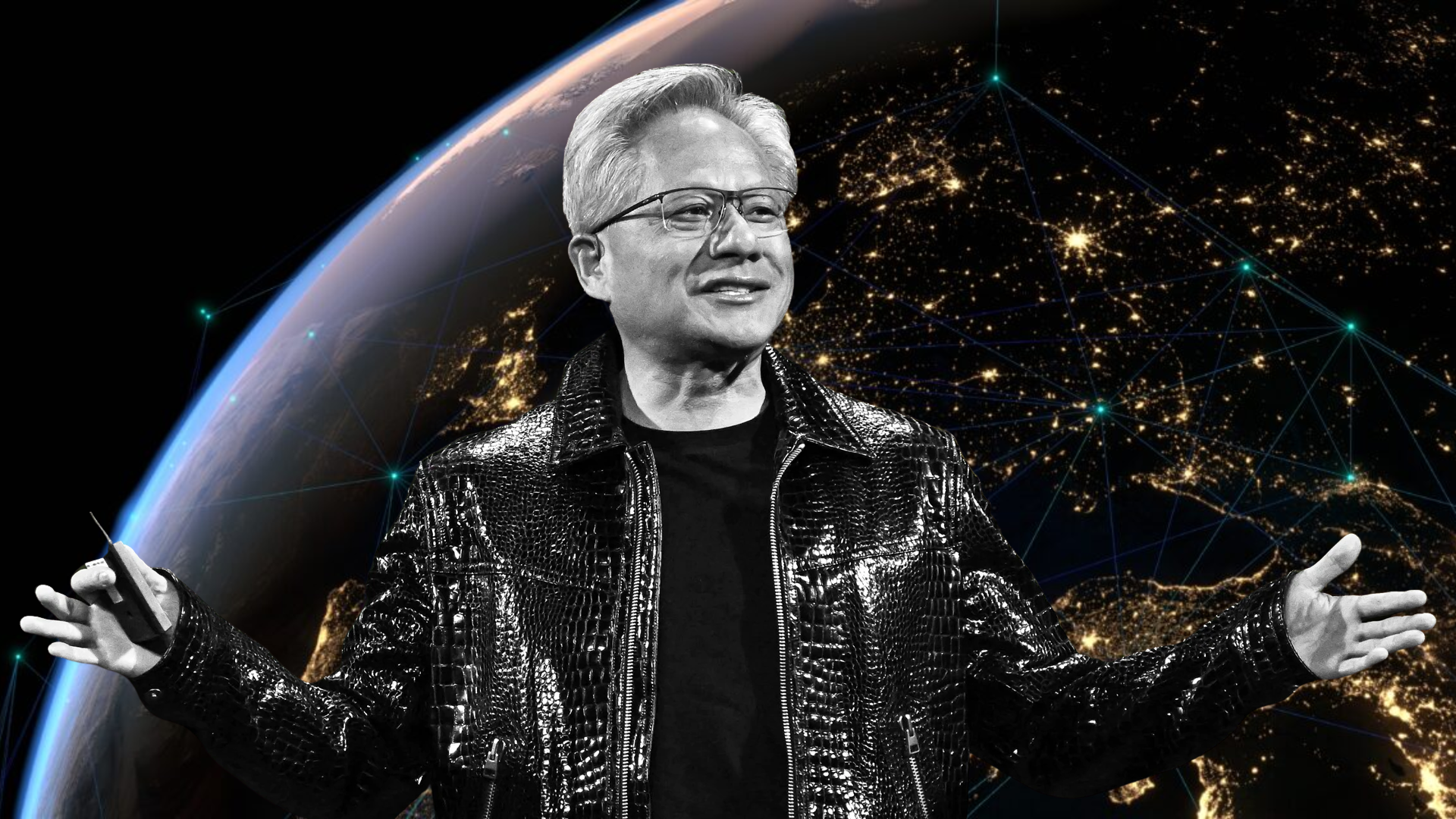

The tech industry is in the midst of an unprecedented AI spending frenzy, with Amazon, Google, Meta, and Microsoft committing a combined $660 billion to AI infrastructure, far exceeding earlier projections. Yet skepticism lingers: is this a calculated bet on the future, or a bubble waiting to burst? NVIDIA CEO Jensen Huang has a clear answer: ‘This is sustainable.’

Speaking recently, Huang framed the wave of capital expenditure as inevitable, driven by AI’s evolution from a niche curiosity into a ‘profoundly useful’ force reshaping industries. NVIDIA’s data center GPUs, built on the same Blackwell architecture as the consumer RTX 50-series, are at the heart of this shift, powering everything from generative AI models to agentic workflows in tools like Vercel and OpenClaw. Huang’s confidence hinges on a broader thesis: software is no longer just a tool, but a system that uses tools. If AI-driven automation delivers on that promise, the economic upside could dwarf even the cloud computing revolution.

But the path isn’t without risks. Critics compare the current AI rush to the dark fiber mania of the dot-com era, where speculative spending outpaced tangible returns. Huang counters that AI’s trajectory differs fundamentally: ‘We’re not just building infrastructure for the sake of it. We’re building the foundation for the next era of software.’ The question remains whether the mass market will adopt these changes quickly enough to justify the billions being poured in.

What’s driving the spending?

- AI’s inflection point: Companies like Anthropic and OpenAI are now generating substantial revenue, evidence of AI’s commercial viability beyond the hype.

- Enterprise adoption: Tools leveraging agentic AI—where systems autonomously chain tasks (e.g., debugging code, optimizing supply chains)—are entering production environments.

- NVIDIA’s dominance: The company’s GPUs are the de facto standard for training and deploying AI models, giving it leverage in negotiations with hyperscalers.

The debate over sustainability hinges on two factors: how quickly AI becomes indispensable and whether the current wave of spending will yield measurable ROI. Huang’s bet is that history won’t repeat the dot-com mistakes—because this time, the software itself is being rewritten.

While Big Tech debates infrastructure, NVIDIA’s consumer GPU lineup faces its own challenges. The RTX 50-series, which competes against AMD’s aggressively priced Radeon cards, has seen a sharp uptick in Steam Hardware Survey adoption, with the RTX 5060 and RTX 5070 gaining traction among budget-conscious gamers. The RTX 5080 and RTX 5090 remain outliers: reports point to street prices that diverge sharply from their $999 and $1,999 MSRPs.

This disparity reflects a fragmented market. The RTX 5060 ($299) and RTX 5070 ($549) are positioned as gaming-focused alternatives to AMD’s Radeon GPUs, while the high-end RTX 5080, the reported RTX 5080 Super (said to be a $100 step up over the original), and the RTX 5090 target creators and AI enthusiasts. The challenge for NVIDIA is balancing supply with demand: if the AI-driven server market continues to absorb most of its manufacturing capacity, consumer prices could remain volatile.

Key specs: RTX 50-series lineup

- GeForce RTX 5060: 8GB GDDR7, 3,840 CUDA cores, 120 Tensor cores, 145W TDP.

- GeForce RTX 5070: 12GB GDDR7, 6,144 CUDA cores, 192 Tensor cores, 250W TDP.

- GeForce RTX 5080: 16GB GDDR7, 10,752 CUDA cores, 336 Tensor cores, 360W TDP.

- GeForce RTX 5080 Super: specifications not officially confirmed by NVIDIA.

- GeForce RTX 5090: 32GB GDDR7, 21,760 CUDA cores, 680 Tensor cores, 575W TDP.

For gamers, the RTX 5060 and 5070 offer DLSS 4 with Multi Frame Generation at accessible price points, while the 5080 Super and 5090 push performance into AI workloads and 4K gaming. The tradeoff? Power draw and heat output escalate with each tier, requiring robust cooling setups. Whether these cards will stabilize in price depends on NVIDIA’s ability to meet demand without sacrificing server GPU allocations.

The broader picture, in Huang’s telling, is that AI is no longer a speculative bet but a multi-trillion-dollar infrastructure play. For NVIDIA, the question is whether its GPUs will remain the linchpin of that future, or whether competitors will force a reckoning in both the data center and the living room.