Enterprises looking to integrate AI into robotic systems now have a clearer path from simulation to production, thanks to NVIDIA's latest models and frameworks. They aim to close the gap between training environments and real-world robotics and, in doing so, reduce deployment risk.

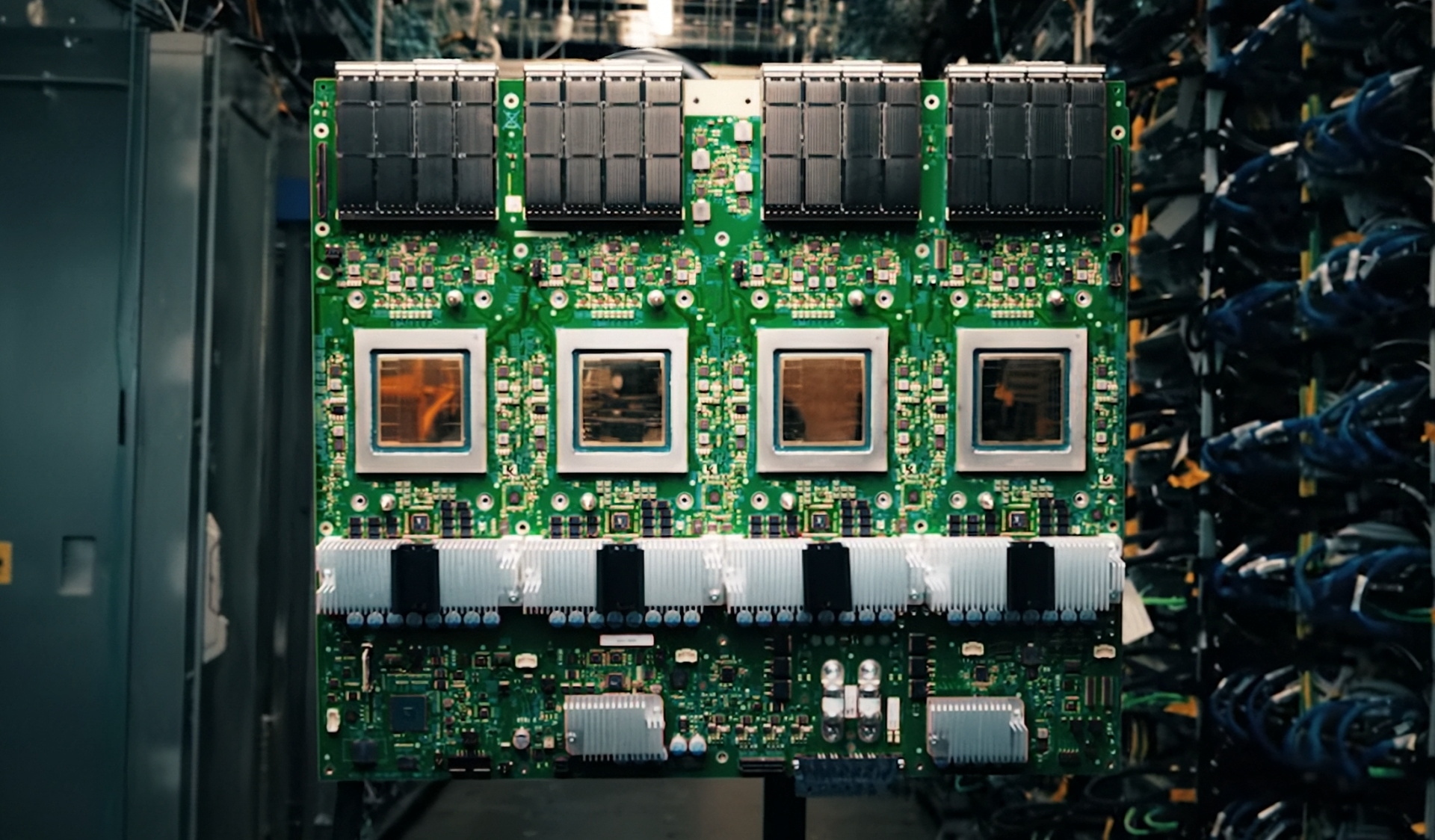

The focus is on embedded compute capabilities that allow robots to process data locally while maintaining performance. This shift addresses a long-standing bottleneck in robotic AI development: the need for high-speed inference without relying solely on cloud connectivity.

Key Advancements

- Simulation-to-Deployment Workflow: NVIDIA's new tools are designed to integrate with existing simulation platforms, allowing developers to test and refine robot behaviors in virtual environments before moving to physical hardware. This reduces the time and cost of real-world testing.

- Embedded AI Processing: The suite includes models optimized for edge devices, so robots can perform complex tasks without a constant cloud connection. This matters for industrial applications where latency is a concern.

- Open Frameworks: The frameworks are designed to be open-source, fostering collaboration and customization across industries. Enterprises can tailor the solutions to their specific robotic use cases.
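
For teams weighing the Embedded AI Processing item above, a latency budget check is one simple way to tell whether on-device inference is fast enough for a given control loop. The sketch below is illustrative only: `measure_latencies_ms`, `percentile`, and `within_budget` are hypothetical helper names, not part of any NVIDIA tooling, and `infer` stands in for whatever local inference call a deployment actually uses.

```python
import math
import time
from typing import Callable, List


def measure_latencies_ms(infer: Callable[[], object],
                         runs: int = 200,
                         warmup: int = 20) -> List[float]:
    """Time repeated calls to `infer` and return per-call latency in milliseconds.

    `infer` is a placeholder for a local (on-device) inference call.
    """
    # Warm-up calls are discarded so one-time setup costs do not skew the samples.
    for _ in range(warmup):
        infer()

    samples: List[float] = []
    for _ in range(runs):
        start = time.perf_counter()
        infer()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def percentile(samples: List[float], pct: float) -> float:
    """Nearest-rank percentile of `samples`, with `pct` in the range (0, 100]."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def within_budget(samples: List[float], budget_ms: float, pct: float = 95.0) -> bool:
    """Return True if the chosen latency percentile fits within the budget."""
    return percentile(samples, pct) <= budget_ms
```

A tail percentile is used rather than the mean because a robot's control loop is usually limited by its slowest responses, not its typical ones. The budget itself is an assumption each team would set from its own application requirements.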

The introduction of these tools marks a significant step toward making AI-driven robotics more accessible for enterprise buyers. By addressing compatibility risks, such as simulated training that fails to carry over to real-world scenarios, NVIDIA is positioning itself as a key enabler in the field.

Decision Guide

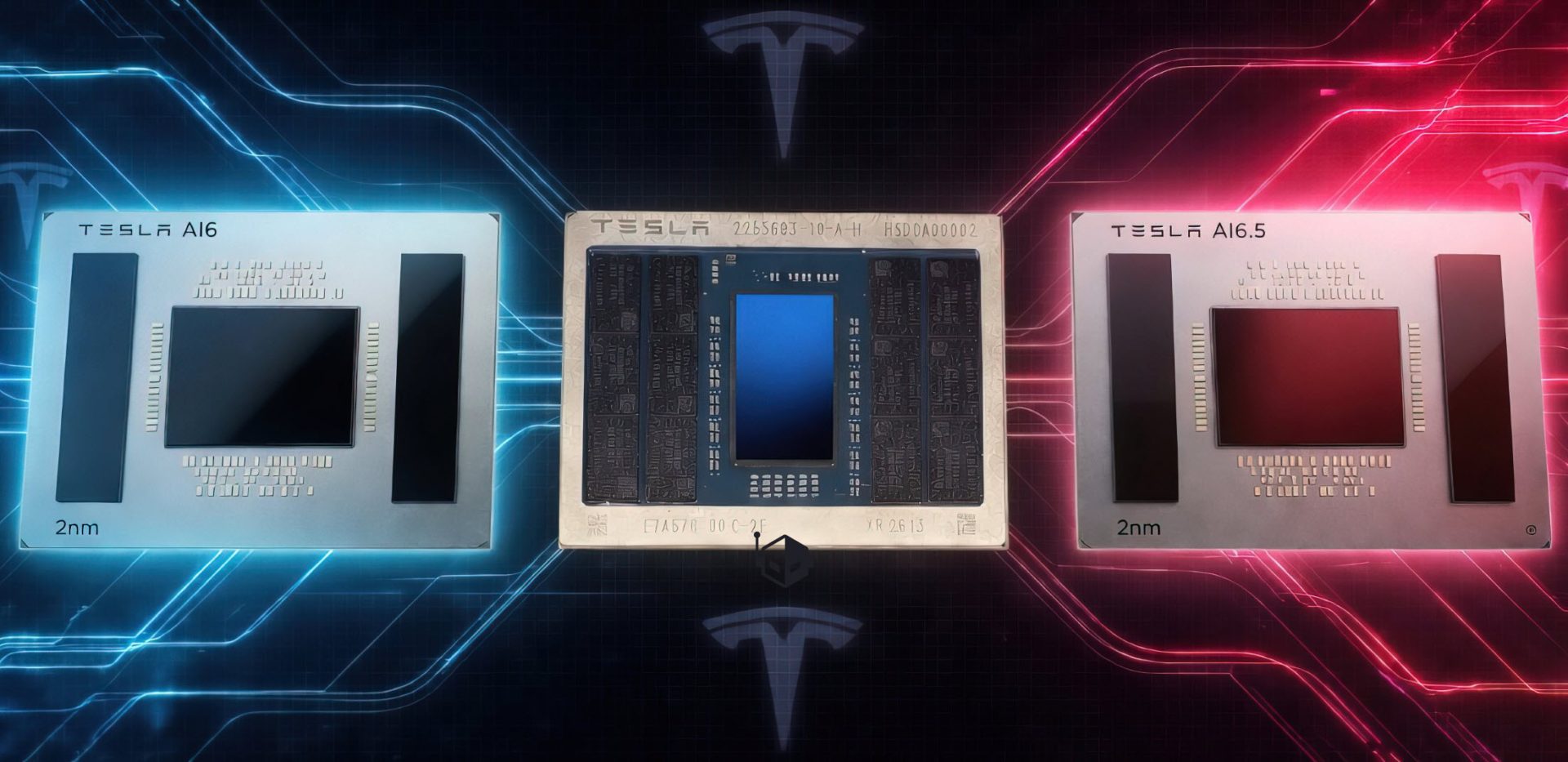

- What's New: A suite of open models and frameworks that bridge simulation and deployment, with embedded compute optimizations for edge devices. The stated focus is on reducing development friction. Pricing details are not yet available.

- What to Consider: Enterprises should evaluate whether their current robotic infrastructure can integrate these tools without significant overhauls. Compatibility with existing simulation platforms will be a critical factor.

- Who It's For: Primarily targeting industries like manufacturing, logistics, and automation where real-time AI processing is essential. Smaller enterprises may need to assess the learning curve for adoption.

- What Remains Unclear: Long-term support structures and how these tools will handle updates or patches in production environments. It is also uncertain how well the tools scale once deployments move beyond the pilot phase.

The suite does not introduce groundbreaking hardware but instead leverages existing NVIDIA platforms, such as GPUs and AI accelerators, to enhance robotic workflows. This approach minimizes risk while providing immediate value for enterprises looking to modernize their robotics pipelines.

Industry Impact

The real innovation lies in the workflow optimization rather than the hardware itself. By streamlining the transition from simulation to production, NVIDIA is addressing a core pain point: ensuring that AI-trained robots behave reliably in unstructured environments. This is particularly important for industries where precision and consistency are non-negotiable.

For buyers, the key question will be whether these tools can integrate smoothly into existing systems without requiring extensive retraining or infrastructure changes. Early adopters may find themselves at an advantage as they refine best practices for deployment.

Closing Considerations

The new models and frameworks are confirmed to work with NVIDIA's existing AI platforms, but third-party compatibility, such as integration with non-NVIDIA hardware, is still under development. Enterprises should prioritize testing these tools in controlled environments before scaling.

While the suite does not solve every challenge in robotic AI, it is a significant step toward reducing deployment friction. The next phase will likely focus on refining these workflows and expanding support for edge-case scenarios in production.