For PC builders and enterprise IT teams, the latest collaboration between NVIDIA and T-Mobile signals a shift in how AI-driven workloads are handled at scale. Running physical AI applications on AI-ready Radio Access Network (AI-RAN) infrastructure means that high-performance computing tasks once confined to data centers can be distributed across the mobile network, closer to where the data is generated.

This partnership represents more than just a technical milestone; it’s a strategic move to future-proof network operations. By leveraging NVIDIA’s AI platforms, T-Mobile is positioning itself to handle the growing demand for low-latency, high-throughput applications—such as autonomous vehicles and smart manufacturing—without overhauling existing infrastructure. The key here isn’t just speed; it’s the ability to process complex AI models in real time while maintaining network stability.

Why This Change Matters Now

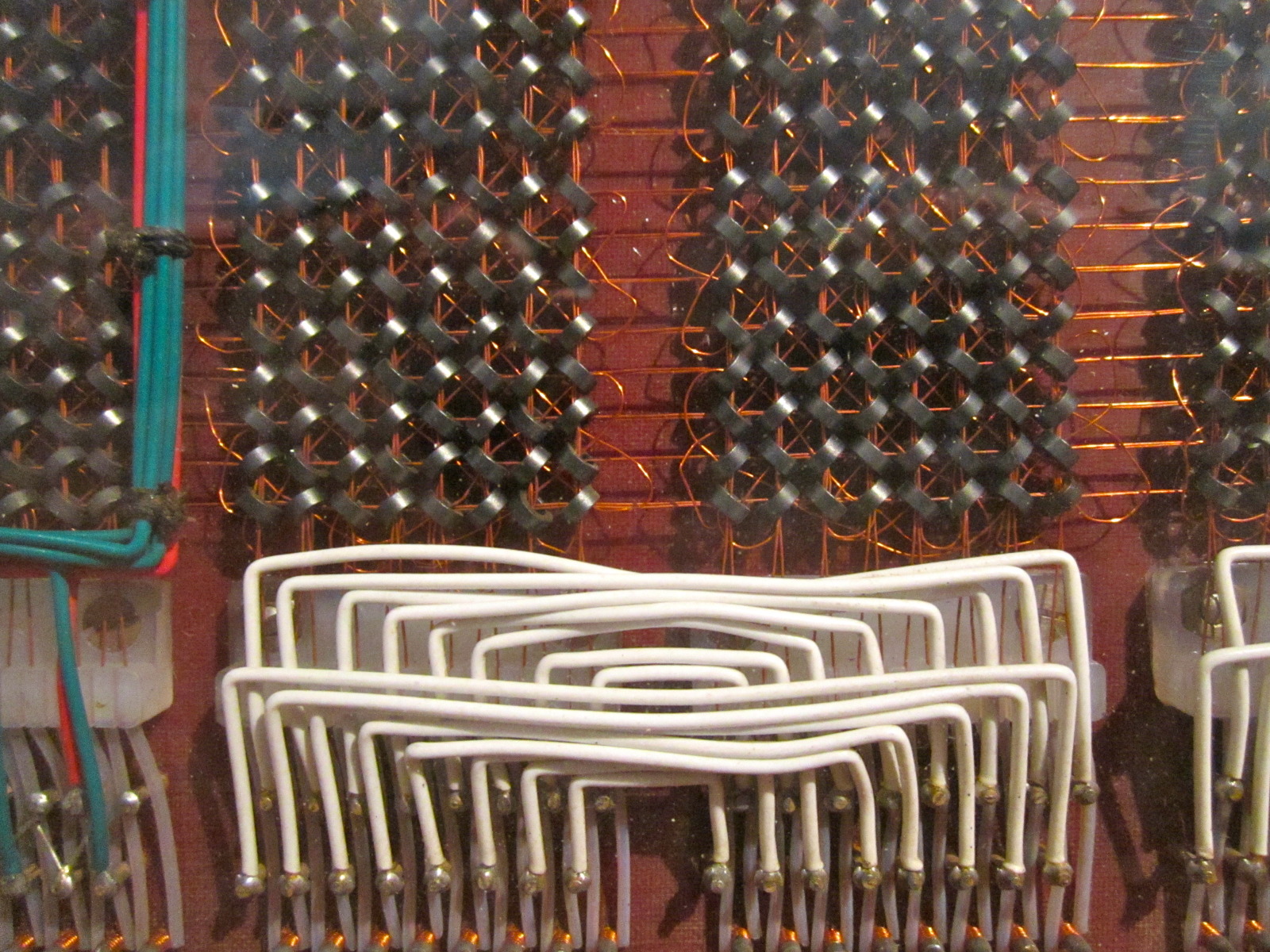

The telecom industry has long relied on centralized cloud processing, but that model is breaking down under the weight of emerging use cases. Autonomous systems, for example, require split-second decision-making that can’t be delayed by round-trip latency to a remote data center. NVIDIA’s AI-RAN approach addresses this by pushing AI workloads closer to the edge—directly into the network hardware itself.
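To make the latency argument concrete, here is a minimal sketch of how far a vehicle travels while it waits for a response. The function names and the two round-trip figures (100 ms for a remote data center, 10 ms for an edge or RAN site) are illustrative assumptions, not measurements published by NVIDIA or T-Mobile.

```python
def distance_travelled_m(speed_kmh: float, latency_ms: float) -> float:
    """Metres a vehicle covers while waiting latency_ms for a response."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * (latency_ms / 1000)


# Assumed round-trip latencies in milliseconds, chosen only for illustration.
SCENARIOS_MS = {"remote data center": 100.0, "edge / RAN site": 10.0}


def latency_report(speed_kmh: float) -> dict:
    """Distance travelled (rounded to cm) for each assumed latency scenario."""
    return {
        name: round(distance_travelled_m(speed_kmh, ms), 2)
        for name, ms in SCENARIOS_MS.items()
    }


if __name__ == "__main__":
    for name, metres in latency_report(100.0).items():
        print(f"{name}: {metres} m travelled before a response arrives")
```

Under these assumptions, a vehicle at 100 km/h covers roughly 2.8 m during a 100 ms round trip and about 0.3 m during a 10 ms one, which is the kind of gap that motivates moving inference closer to the edge.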

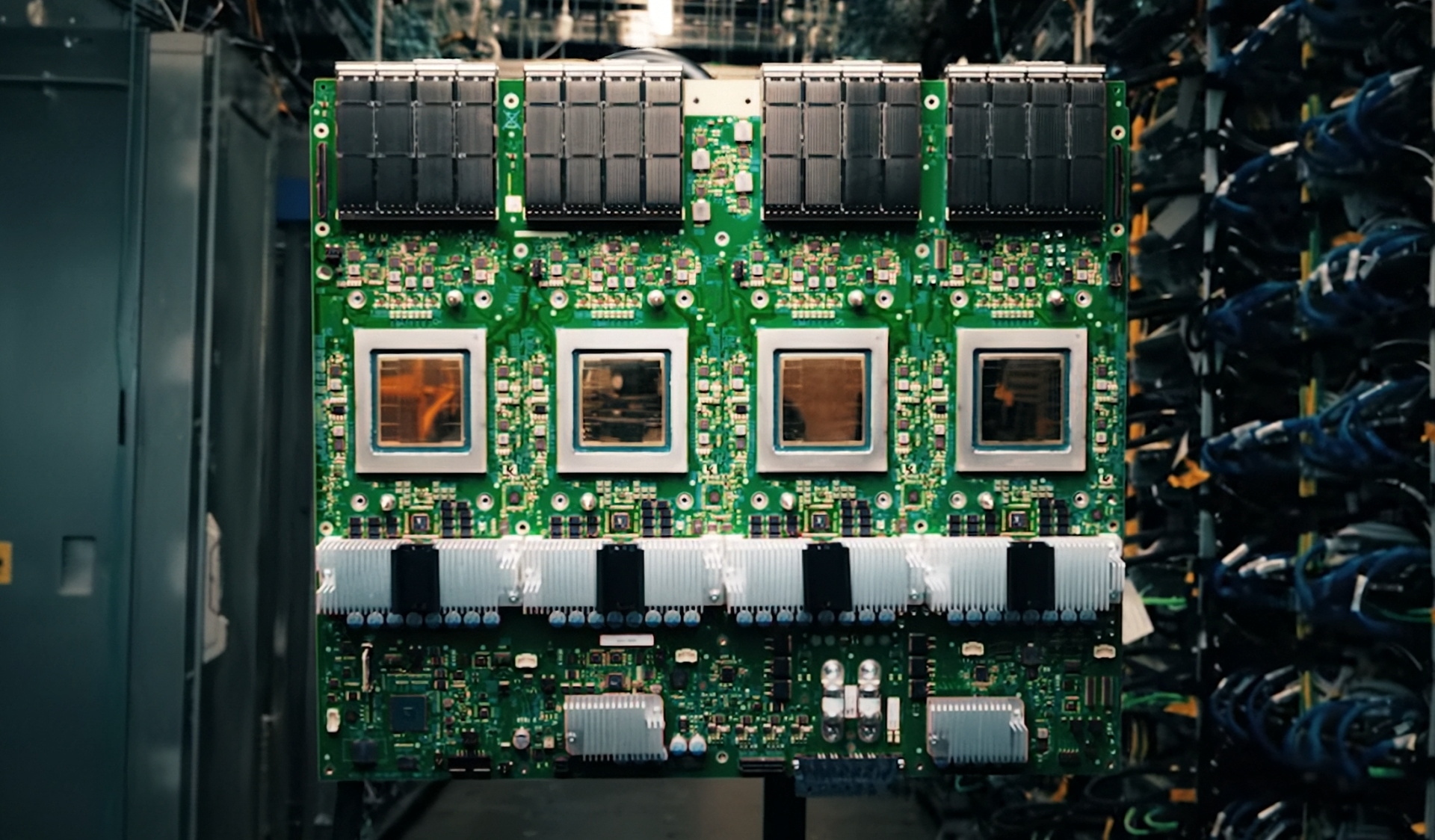

For PC builders and system designers, this means that the components they choose today will need to support distributed AI workloads tomorrow. Data center GPUs such as NVIDIA’s A100 and H100 are used for inference as well as for training models, and the same class of accelerated hardware is becoming the backbone of real-time AI processing in edge servers and network equipment.

Key Technical Breakdown

- AI-RAN Infrastructure: The Radio Access Network (RAN) is being equipped with NVIDIA’s AI accelerators, so AI models can run at or near the RAN site instead of relying solely on cloud resources. This shortens the path between a request and its result, which improves response times for applications like predictive maintenance or real-time analytics.

- Physical AI Applications: These are AI systems that sense and act in the physical world. Unlike traditional cloud-based AI, they run on compute hosted in the network itself, supporting use cases such as autonomous driving (where sensor data has to be processed on or near the vehicle) or smart grid management (where edge devices analyze energy consumption patterns without a round trip to a central system).

- Performance Metrics: Early figures suggest that this infrastructure can handle up to 10 times more AI workloads per second than conventional cloud-only setups, with latency dropping from milliseconds to microseconds in some scenarios. No benchmark source or test conditions are cited for these numbers, so they are best read as indicative.
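One component of the "milliseconds to microseconds" claim can be checked with simple arithmetic: signal propagation delay over fiber. The sketch below assumes light travels through fiber at roughly 200,000 km/s (a common rule of thumb), and the distances are hypothetical. It covers propagation only; radio access, queuing, and model inference time are not modeled and would add to these figures.

```python
# Rule-of-thumb propagation speed in optical fiber, about two thirds of c.
SPEED_IN_FIBER_KM_PER_S = 200_000.0


def round_trip_propagation_us(distance_km: float) -> float:
    """Round-trip propagation delay in microseconds over a fiber path."""
    one_way_s = distance_km / SPEED_IN_FIBER_KM_PER_S
    return 2 * one_way_s * 1e6


if __name__ == "__main__":
    # Hypothetical distances: a far-away data center vs. compute near the cell site.
    for label, km in [("data center 1000 km away", 1000.0), ("compute 1 km away", 1.0)]:
        print(f"{label}: {round_trip_propagation_us(km):.0f} microseconds round trip")
```

With these assumptions, a 1000 km path costs about 10 ms in propagation alone, while a 1 km path costs about 10 microseconds, so the propagation share of latency can plausibly shrink by three orders of magnitude even though end-to-end latency will be higher.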

The partnership also introduces a new layer of interoperability. T-Mobile’s network is now compatible with NVIDIA’s EGX platform, which allows for seamless deployment of AI models across edge servers, RAN sites, and even mobile devices. This flexibility is critical for industries where hardware diversity is the norm—such as manufacturing or logistics—where different environments require tailored AI solutions.

What Builders Need to Consider

For those assembling high-performance systems, the takeaway is clear: future-proofing requires more than raw power. It demands components that can adapt to distributed AI workloads, including GPUs with dedicated AI acceleration hardware, network interfaces that support low-latency processing, and software stacks optimized for edge AI.

However, not all changes are confirmed yet. While NVIDIA and T-Mobile have outlined the vision, the full integration—particularly how this will translate to consumer or enterprise hardware—remains in development. What is certain is that the bar for real-time AI processing has been raised, and systems built today must account for this shift if they’re to remain relevant in the coming years.

The collaboration also hints at a broader trend: telecom networks evolving from mere data pipelines into intelligent platforms capable of running complex computations. For PC builders, this means that the next generation of hardware will need to bridge the gap between traditional computing and AI-driven network processing—a challenge that will define the industry’s roadmap for years to come.