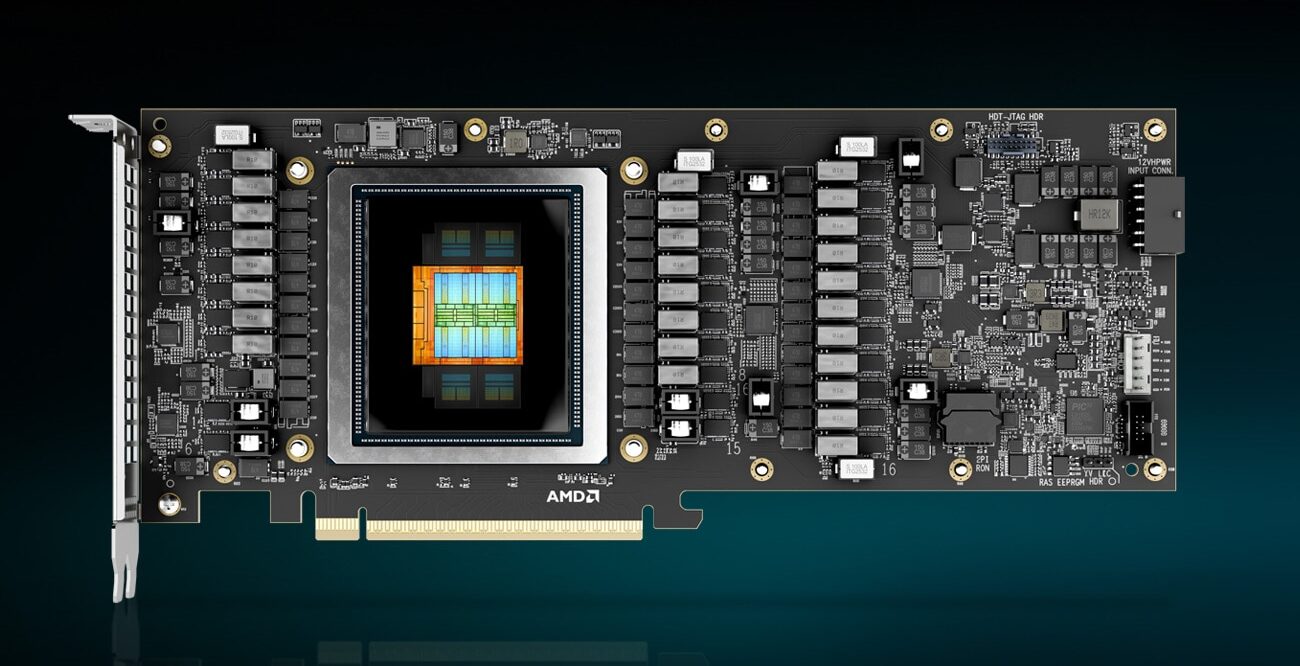

Enterprise AI workloads are shifting from cloud to local, but power efficiency remains a critical bottleneck. A new PCIe accelerator card now offers a potential solution: it can run models with up to 700 billion parameters while consuming only 240 watts—less than half the power of comparable GPU-based cards.

This performance leap is driven by a combination of high-bandwidth memory (HBM) and an optimized AI-focused architecture. The card’s 384 GB of HBM memory, paired with a custom compute design, allows it to handle large-language model inference tasks without the thermal or power constraints seen in traditional GPUs.

Camera-first approach

The card’s memory capacity is particularly notable—384 GB of HBM, which is nearly double what high-end GPU solutions typically offer. This enables local processing of models that would otherwise require distributed systems or cloud connections. The 240-watt TDP further reduces data center cooling demands, a key factor for enterprise deployments.

Core hardware

The underlying architecture is built around AI acceleration, with dedicated tensor cores and memory bandwidth optimized for inference tasks. Unlike general-purpose GPUs, this card focuses on reducing latency and power consumption specifically for large-language models (LLMs).

- Memory: 384 GB HBM2e, 1.6 TB/s bandwidth

- Compute: Custom AI accelerator with tensor cores

- Power: 240W TDP (peak)

- Connectivity: PCIe 5.0 x16 interface

This configuration suggests a card designed for edge and enterprise environments where power efficiency is critical. The 384 GB of memory, for example, allows for local processing of models that would typically require cloud connections or distributed systems. Meanwhile, the 240-watt TDP significantly reduces cooling requirements compared to high-end GPUs.

However, real-world adoption will depend on software support and ecosystem maturity. While the hardware specifications are impressive, the practical benefits for enterprise users hinge on how well these capabilities integrate with existing AI workflows.

Market impact

The card’s power efficiency—less than half that of competitive solutions like the RTX PRO 6000 Blackwell—could accelerate the shift toward local LLM processing. For enterprises, this means lower operational costs and reduced reliance on cloud services, which is particularly valuable in regions with high latency or limited cloud infrastructure.

The next step for adoption will be software optimization and driver support. If developers can leverage these capabilities effectively, the card could redefine how large-language models are deployed at scale.