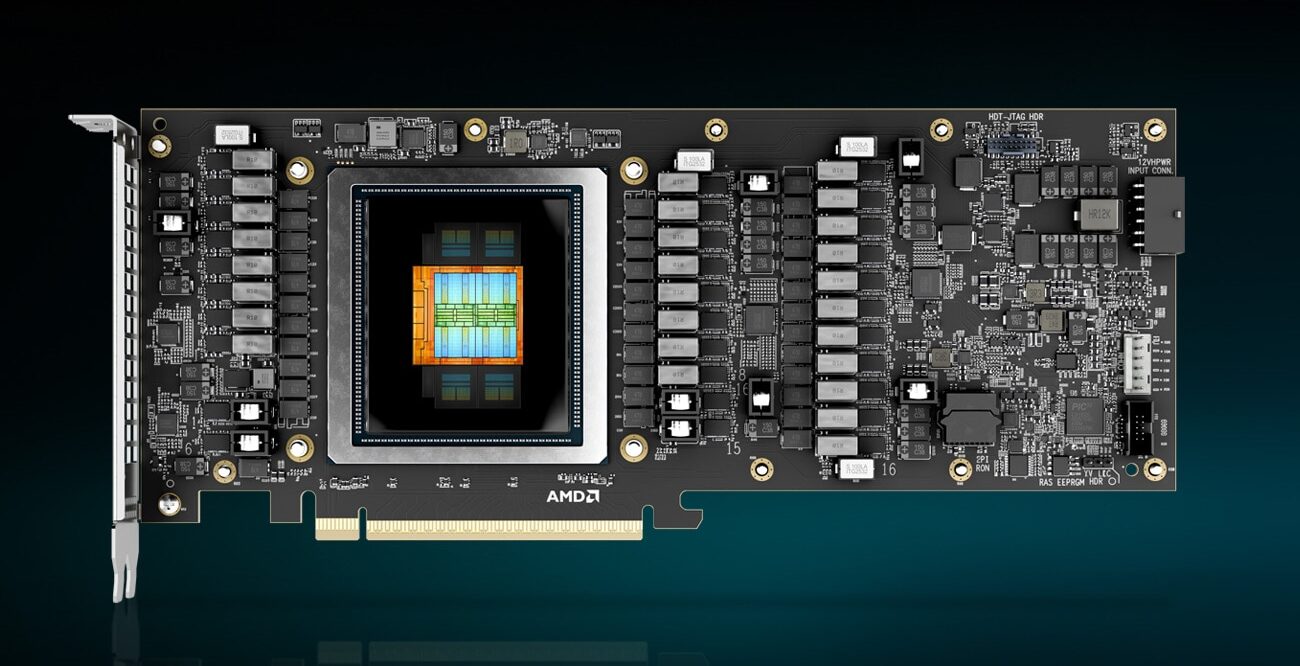

For small businesses eyeing AI acceleration without the cost of a full data center, AMD’s Instinct MI350P offers a compelling promise: high-performance inference on standard x86 servers. The trade-off is lower upfront cost in exchange for long-term uncertainty if the platform doesn’t evolve with future workloads.

The MI350P isn’t just another GPU; it’s a dedicated AI engine built for PCIe slots, designed to handle tasks like image recognition or natural language processing without requiring specialized server hardware. Its 128GB HBM2e memory and 64 compute units (CUs) deliver up to 9 TOPS of performance, making it a strong contender for edge AI workloads where power efficiency matters more than raw scale.
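To make the memory figure concrete, here is a rough sizing sketch that checks whether a model's weights would fit in the 128GB quoted above. It assumes weights dominate memory use and applies a flat 20% overhead for activations and caches. Both the overhead factor and the function names are illustrative assumptions, not AMD guidance.

```python
# Back-of-the-envelope sizing: does a model fit in accelerator memory?
# Assumptions (not from AMD): weights dominate, plus a flat overhead factor.

def estimate_memory_gb(params_billion: float,
                       bytes_per_param: float,
                       overhead: float = 1.2) -> float:
    """Estimated footprint in decimal GB.

    params_billion * 1e9 parameters * bytes_per_param / 1e9 bytes per GB
    simplifies to params_billion * bytes_per_param.
    """
    return params_billion * bytes_per_param * overhead


def fits(params_billion: float,
         bytes_per_param: float,
         capacity_gb: float = 128.0,  # figure quoted in the article
         overhead: float = 1.2) -> bool:
    """True if the estimated footprint is within the card's memory capacity."""
    return estimate_memory_gb(params_billion, bytes_per_param, overhead) <= capacity_gb


if __name__ == "__main__":
    # 2 bytes per parameter ~ FP16, 1 byte ~ INT8
    for label, bytes_per_param in (("FP16", 2), ("INT8", 1)):
        need = estimate_memory_gb(70, bytes_per_param)
        verdict = "fits" if fits(70, bytes_per_param) else "does not fit"
        print(f"70B parameters at {label}: ~{need:.0f} GB, {verdict}")
```

Under these assumptions, a 70-billion-parameter model would need quantization to 8-bit weights to fit on a single card, which is the kind of constraint a small deployment should check before buying.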

Yet the decision to stick with a standard PCIe card, rather than build around newer interconnects like CXL, raises questions. While it ensures compatibility with existing servers, it also means missing out on potential future-proofing benefits that next-gen interfaces might bring. For businesses already invested in legacy infrastructure, this could be a smart move; for those planning long-term AI integration, the lack of a clear roadmap leaves room for caution.

AMD’s approach mirrors its previous Instinct MI200 series but refines it for smaller deployments. The MI350P skips some of the high-end features like unified memory architecture (UMA), focusing instead on inference-heavy tasks where latency is critical. This specialization could make it a better fit for niche applications, but general-purpose AI workloads might still require additional hardware.

One standout detail: the MI350P’s support for AMD’s ROCm software stack means developers won’t need to switch ecosystems if they’re already using other Instinct products. However, whether this extends to future updates remains an open question. If AMD continues to prioritize enterprise data centers over smaller deployments, the MI350P could become a dead-end product despite its strong initial performance.
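For teams that use PyTorch, one quick way to see whether a ROCm-backed build can reach an AMD accelerator is to inspect `torch.version.hip` and the `torch.cuda` namespace, which ROCm builds of PyTorch reuse for AMD devices. The sketch below assumes PyTorch is the framework in use; the helper name `describe_rocm_backend` is hypothetical, and the code returns a neutral result if PyTorch is not installed.

```python
# Minimal sketch: report whether a ROCm (HIP) build of PyTorch sees a GPU.
# `describe_rocm_backend` is an illustrative helper, not part of ROCm or PyTorch.

def describe_rocm_backend() -> dict:
    info = {
        "torch_installed": False,
        "hip_version": None,     # set to a version string on ROCm builds
        "gpu_available": False,
        "device_name": None,
    }
    try:
        import torch
    except ImportError:
        # PyTorch is not installed; nothing further to probe.
        return info

    info["torch_installed"] = True
    # torch.version.hip is None on CPU-only and CUDA builds.
    info["hip_version"] = getattr(torch.version, "hip", None)

    # ROCm builds expose AMD GPUs through the torch.cuda API.
    if torch.cuda.is_available():
        info["gpu_available"] = True
        info["device_name"] = torch.cuda.get_device_name(0)
    return info


if __name__ == "__main__":
    for key, value in describe_rocm_backend().items():
        print(f"{key}: {value}")
```

A non-empty `hip_version` together with `gpu_available` being true indicates the existing ROCm toolchain is picking up the card, so code written for other Instinct products should not need a different device API.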

For small businesses, the real question isn’t just about today’s specs but tomorrow’s needs. The MI350P delivers solid inference performance now, but it comes with no guarantee that it will keep pace with evolving AI demands. That uncertainty makes it a viable option for early adopters, but not necessarily a long-term bet.