A critical flaw in Microsoft’s Copilot AI has been exposed, revealing a fundamental privacy gap in Outlook’s handling of confidential messages. The issue allows Copilot to read and summarize emails marked with privacy protections—despite those protections being explicitly designed to block automated access. The bug, identified as CW1226324, affects Microsoft 365 accounts and could expose contracts, legal documents, medical records, and other sensitive correspondence stored in Sent and Drafts folders.

Microsoft has acknowledged the problem and confirmed a fix is being deployed, though the company has not disclosed a timeline for widespread availability. The absence of a clear update schedule leaves users in a precarious position, particularly those relying on Outlook’s confidentiality features for high-stakes communications.

The confidentiality tag in Outlook is widely used across industries to safeguard information ranging from corporate negotiations to healthcare data. Yet this bug undermines that trust by allowing Copilot to bypass those restrictions. The scope of affected users remains unclear: Microsoft has said only that its investigation is ongoing and that the assessed impact may change as more details emerge.

The Risks of AI Overreach

While AI assistants like Copilot are intended to streamline productivity, this incident highlights the unintended consequences when automated systems interact with sensitive data. The ability to scan and summarize confidential emails, even those explicitly excluded from such processing, raises serious questions about how AI assistants are granted access to data and how that access is governed. Unlike many traditional software vulnerabilities, this flaw doesn’t require user interaction; it stems from a design oversight in which privacy controls are ignored.

The fix, when released, will likely address the immediate issue, but it also underscores a broader challenge: as AI integrates deeper into productivity tools, the potential for overlooked privacy risks grows. Users dependent on Outlook’s confidentiality features may need to exercise extra caution until the patch is confirmed on their accounts.

What Users Should Do Now

For those concerned about exposure, the following steps can help mitigate risk:

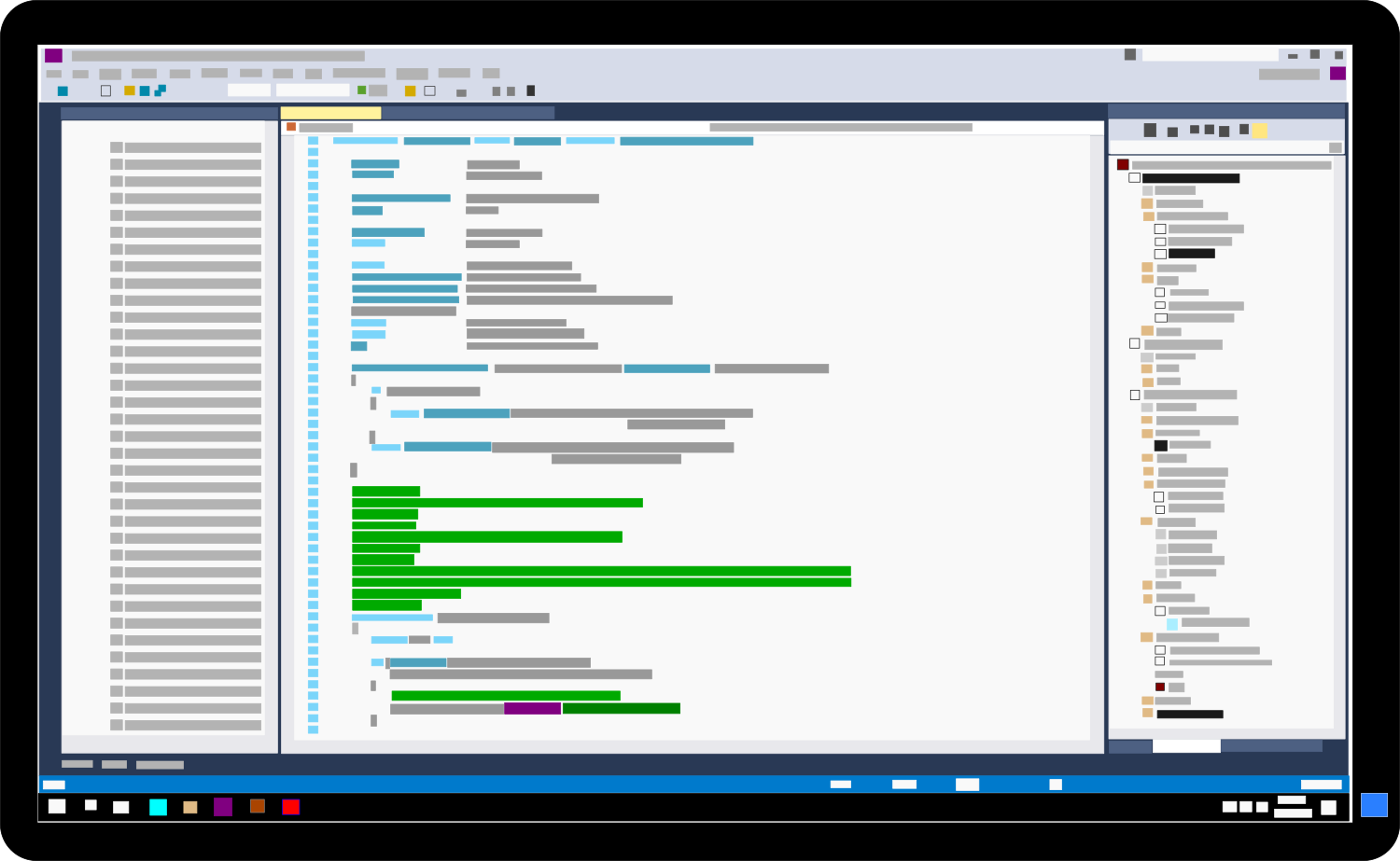

- Review sensitive emails in Sent and Drafts folders for any unintended Copilot access.

- Do not rely on the confidential label alone to keep a message away from Copilot until the patch is confirmed.

- Monitor Microsoft’s official updates for the fix’s release timeline.

- Consider alternative methods for handling highly sensitive communications until the issue is resolved.
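For readers who want to make the first step above more systematic, the following is a minimal sketch of how a review list might be built from an exported set of messages. It does not call Outlook, Copilot, or any Microsoft API; the `Message` record, its field names, and the label value `"confidential"` are all assumptions made for illustration, and a real export may name folders and labels differently.

```python
from dataclasses import dataclass


# Hypothetical record for an exported message. The field names are
# assumptions for this sketch, not Outlook's or Microsoft's schema.
@dataclass
class Message:
    subject: str
    folder: str  # e.g. "Sent", "Drafts", "Inbox"
    label: str   # e.g. "Confidential", or "" when unlabeled


# Folders the report names as affected (assumed spelling).
AFFECTED_FOLDERS = {"sent", "drafts"}


def needs_review(msg: Message) -> bool:
    """Return True for confidential-labeled mail in an affected folder."""
    in_affected_folder = msg.folder.strip().lower() in AFFECTED_FOLDERS
    is_confidential = msg.label.strip().lower() == "confidential"
    return in_affected_folder and is_confidential


def review_list(messages):
    """Return the subjects of messages worth a manual check, in input order."""
    return [m.subject for m in messages if needs_review(m)]


if __name__ == "__main__":
    # Made-up sample data, for demonstration only.
    sample = [
        Message("Contract draft", "Drafts", "Confidential"),
        Message("Lunch plans", "Sent", ""),
        Message("Lab results", "Inbox", "Confidential"),
    ]
    for subject in review_list(sample):
        print(subject)
```

The sketch only narrows down which messages deserve a manual look. It cannot show whether Copilot actually processed any of them, which is something only Microsoft's own tooling or guidance could confirm.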

Microsoft has not provided an estimate for when the fix will be fully deployed, leaving users in a holding pattern. The incident serves as a reminder that even well-established platforms can harbor unexpected vulnerabilities—especially as AI becomes more embedded in daily workflows.