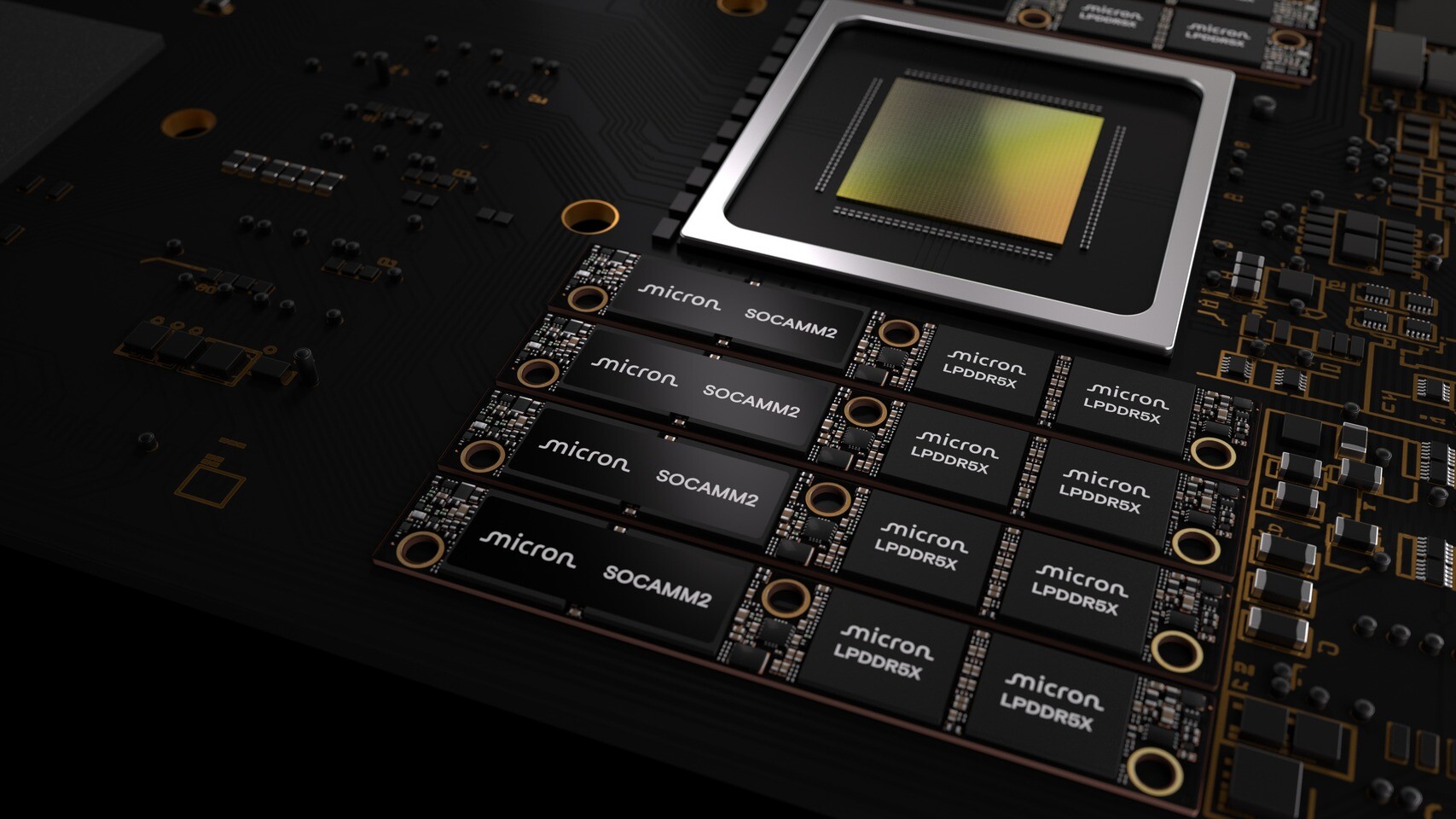

Micron has introduced the world's first high-capacity 256 GB LPDRAM SOCAMM2 module, marking a significant advancement in low-power server memory. This milestone is set to redefine the landscape of AI data centers by delivering unprecedented memory capacity and power efficiency.

The 256 GB SOCAMM2 module, built on Micron's first monolithic 32 Gb LPDDR5X design, addresses the growing demands of AI workloads. These include large model parameters, expansive context windows, and persistent key-value (KV) caches, all of which are reshaping data center system architectures. Memory capacity, bandwidth efficiency, latency, and power efficiency have become critical constraints in modern AI systems, directly influencing performance, scalability, and total cost of ownership.

LPDRAM's combination of these attributes positions it as a cornerstone solution for both AI and core compute servers in increasingly power- and thermally constrained environments. Micron's collaboration with NVIDIA underscores the importance of this development; the two companies aim to co-design memory solutions tailored for advanced AI infrastructure.

The 256 GB SOCAMM2 module offers one-third more capacity than its predecessor, providing 2 TB of LPDRAM per 8-channel CPU. This expansion supports larger context windows and complex inference workloads. Additionally, it consumes one-third less power compared to equivalent RDIMMs while using only one-third of the footprint, thereby enhancing rack density and reducing total cost of ownership.
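The capacity figures above can be checked with a few lines of arithmetic. The sketch below is only a sanity check under stated assumptions: it assumes one module per memory channel and the binary convention of 1,024 GB per TB, neither of which the announcement spells out. The predecessor capacity is derived from the "one-third more" ratio rather than quoted from the source.

```python
from fractions import Fraction

MODULE_GB = 256      # module capacity stated in the article
TOTAL_TB = 2         # per-CPU capacity stated in the article
GB_PER_TB = 1024     # binary convention (assumption)

# Modules needed to reach 2 TB. This matches the "8-channel" figure
# if one module sits on each channel (assumption).
modules_per_cpu = TOTAL_TB * GB_PER_TB // MODULE_GB

# "One-third more capacity than its predecessor" implies
# predecessor * (1 + 1/3) == 256 GB.
implied_predecessor_gb = MODULE_GB / (1 + Fraction(1, 3))

print(modules_per_cpu)         # 8
print(implied_predecessor_gb)  # 192
```

Under these assumptions, eight 256 GB modules account for the 2 TB per CPU, and the stated ratio implies a 192 GB predecessor.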

In terms of performance, the module improves time to first token (TTFT) by more than 2.3 times for long-context, real-time LLM inference when used for KV cache offload, compared with currently available solutions. In standalone CPU applications, it delivers more than three times better performance per watt than mainstream memory modules for high-performance computing workloads.
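To see why KV cache offload puts pressure on memory capacity, it helps to estimate how large the cache gets for a single long-context request. The sketch below uses the standard per-token estimate (one key and one value vector per layer and per KV head). The model shape, context length, and 16-bit precision are hypothetical values chosen for illustration, not figures from Micron's announcement.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bytes_per_value=2):
    """Bytes of KV cache for one sequence: a key and a value vector
    per layer, per KV head, per token."""
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_value

# Hypothetical model shape, not taken from the article.
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128
CONTEXT_TOKENS = 128 * 1024   # one long-context request
GIB = 1024 ** 3

per_sequence = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, CONTEXT_TOKENS)
per_sequence_gib = per_sequence / GIB

# Whole sequences that fit in one 256 GB module, treating GB as GiB
# and ignoring weights, activations, and OS overhead (assumptions).
MODULE_GIB = 256
sequences_per_module = int(MODULE_GIB // per_sequence_gib)

print(f"{per_sequence_gib:.0f} GiB per sequence")       # 40 GiB per sequence
print(f"{sequences_per_module} sequences per module")   # 6 sequences per module
```

With these assumed numbers, each 128K-token sequence needs about 40 GiB of cache, so a single module would hold six such sequences. Real deployments will differ with model architecture, precision, and batching.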

SOCAMM2's modular design also improves serviceability and supports liquid-cooled server architectures. It enables future capacity expansion as AI and core compute memory requirements continue to grow. This adaptability matters for advanced AI infrastructure, which demands optimization at every layer to maximize performance and efficiency.

Micron's leadership in low-power memory solutions for data center applications is further highlighted by its role in defining the JEDEC SOCAMM2 specification. The company maintains deep technical collaborations with system designers, driving industry-wide improvements in power efficiency and performance for next-generation data center platforms.

Micron is now shipping customer samples of the 256 GB SOCAMM2 module. The company describes its data center LPDRAM portfolio as the industry's broadest, spanning 8 GB to 64 GB components and 48 GB to 256 GB SOCAMM2 modules for a wide range of AI and core compute applications.

For IT teams, this development represents a significant step forward in addressing the power and thermal constraints that have become increasingly challenging in modern data centers. The 256 GB LPDRAM module not only enhances performance but also reduces energy consumption, making it an attractive solution for those looking to optimize their AI infrastructure without compromising on efficiency.

In summary, Micron's 256 GB SOCAMM2 module stands as a testament to the company's commitment to innovation and collaboration in the field of low-power memory solutions. It promises to unlock new system architectures and set new benchmarks for power efficiency and performance in AI data centers.