Dance choreography has always been a visual art, but for Kyle Hanagami, the tools behind the scenes have become just as critical as the movement itself. Since moving from pre-med studies at UC Berkeley to a dance crew that rehearsed outside with boom boxes and window mirrors, Hanagami has seen his work grow into a global phenomenon, with credits that include eight years of choreography for BLACKPINK, the 2024 film Mean Girls, and Disney’s upcoming Zootopia stage adaptation.

Yet for all his influence, Hanagami remains a behind-the-scenes figure. His 2017 YouTube video, set to Adele’s Love in the Dark, features dancers manipulating glowing orbs of light on a dimly lit stage, a piece he describes as “a fragment of my heart left on the internet.” He rarely appears onscreen himself; his presence is felt through the precision of his collaborators’ movements, a testament to his meticulous process.

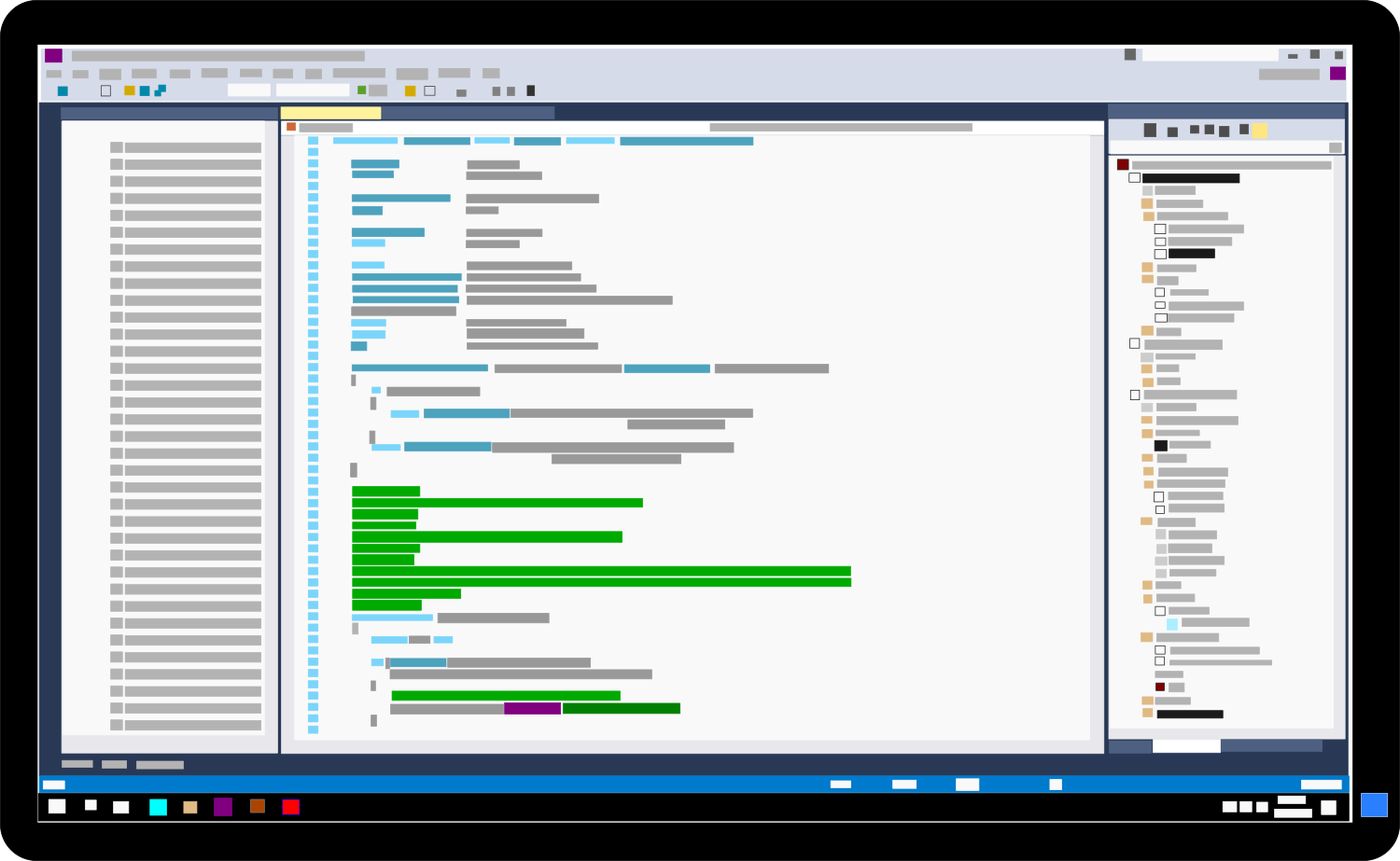

At the heart of that process is Final Cut Pro, the editing software he adopted in 2009 after teaching himself on iMovie. The shift wasn’t just about technical capability; it was about unlocking creative possibilities. “It allowed me to think beyond what the camera captured,” he notes. For Hanagami, editing isn’t a post-production step. It’s an extension of choreography, a way to refine angles, pacing, and storytelling before a single frame is locked.

The Evolution of a Workflow

Hanagami’s early days in dance were decidedly low-tech: rehearsals with a boom box, windows as makeshift mirrors, and a digital point-and-shoot camera to document progress. Today, his workflow is a seamless blend of Apple’s hardware and software. An iPhone is always within reach, ready to capture spontaneous ideas or test choreography on location. The iPad becomes a sketchpad for refining movements, while Final Cut Pro, now with AI-driven enhancements, transforms raw footage into polished visual narratives.

Key to this evolution are features designed to eliminate the grunt work of editing. Magnetic Mask, for instance, automates complex masking tasks that once required frame-by-frame rotoscoping. Smart Conform streamlines the process of adapting landscape footage for social media cuts, saving hours of manual adjustments. But perhaps the most transformative addition is Beat Detection, an AI tool that analyzes music tracks and generates a beat grid. For a choreographer who edits to the rhythm of the music, this means aligning cuts to the beat instantly—no more manually inching waveforms into place.
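To make the idea of a beat grid concrete, here is a minimal Python sketch of snapping rough cut points to the nearest beat. It is an illustration only, not how Final Cut Pro works internally: it assumes a constant tempo that is already known, and the names `beat_grid` and `snap_to_beat` are hypothetical helpers invented for this example.

```python
def beat_grid(bpm, duration_s, offset_s=0.0):
    """Return beat timestamps in seconds for a constant-tempo track.

    Assumption: the tempo (bpm) and the time of the first beat (offset_s)
    are already known. Detecting them from audio is the hard part and is
    not attempted here.
    """
    interval = 60.0 / bpm  # seconds between consecutive beats
    beats = []
    i = 0
    while offset_s + i * interval <= duration_s:
        beats.append(offset_s + i * interval)
        i += 1
    return beats


def snap_to_beat(cut_s, beats):
    """Move a rough cut time to the closest beat on the grid."""
    return min(beats, key=lambda b: abs(b - cut_s))


# A 10-second clip at 120 BPM has a beat every 0.5 seconds.
beats = beat_grid(bpm=120, duration_s=10.0)

# Cut points placed by eye, slightly off the beat.
rough_cuts = [1.9, 4.3, 7.76]
snapped = [snap_to_beat(c, beats) for c in rough_cuts]
print(snapped)  # [2.0, 4.5, 8.0]
```

Once a grid exists, aligning a cut becomes a nearest-neighbor lookup, which is why a generated grid can replace nudging clips against a waveform by hand.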

Key Features in Final Cut Pro and Apple Creator Studio

- Beat Detection: AI-generated beat grids for precise music synchronization in edits.

- Magnetic Mask: Automates complex masking for effects and compositing.

- Smart Conform: Adjusts aspect ratios for social media without manual cropping.

- Live Multicam: Syncs multiple angles in real time for dynamic editing (available in Final Cut Camera and Final Cut Pro for iPad).

- Logic Pro Integration: Stem Splitter and Mastering Assistant for music production workflows.

- Pixelmator Pro: Vector and typography tools for graphic customization across Mac and iPad.
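The aspect-ratio arithmetic behind reframing landscape footage for vertical formats can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not Apple's method: it always crops around the center of the frame, and `center_crop` is a hypothetical helper named for this example. A real reframing tool would also have to decide where to place the crop window.

```python
def center_crop(src_w, src_h, target_w, target_h):
    """Largest centered crop of the source frame with the target aspect ratio.

    Returns (x, y, width, height) in pixels. Assumption: the crop is always
    centered; no subject tracking is attempted.
    """
    # Compare aspect ratios with integer cross-multiplication to avoid
    # floating-point rounding.
    if src_w * target_h > src_h * target_w:
        # Source is wider than the target: keep full height, trim the sides.
        h = src_h
        w = src_h * target_w // target_h
    else:
        # Source is taller than (or equal to) the target: keep full width.
        w = src_w
        h = src_w * target_h // target_w
    x = (src_w - w) // 2
    y = (src_h - h) // 2
    return x, y, w, h


# 1920x1080 landscape footage cropped to a 9:16 vertical frame.
crop = center_crop(1920, 1080, 9, 16)
print(crop)  # (656, 0, 607, 1080)
```

The example shows how much of a landscape frame is lost in a vertical cut: only 607 of 1,920 pixels of width survive, so the placement of that window matters a great deal.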

These tools aren’t just time-savers; they’re enablers of creativity. For Hanagami, the ability to shoot a sequence on iPhone ahead of Mean Girls production allowed him to test angles and eyelines without bulky equipment. The result? A clearer vision for the studio, delivered through a single example from the set. “Editing is as much a part of choreography as the movement itself,” he observes. “Limiting yourself to a static perspective restricts what’s possible.”

A Tightly Integrated Ecosystem

Hanagami’s reliance on Apple’s ecosystem reflects a broader trend: the fusion of hardware and software designed to work in tandem. Sketching on iPad, filming on iPhone, editing on Mac or iPad, and sharing across platforms creates a loop where inspiration can be captured, refined, and distributed without friction. The iPhone, in particular, has been a game-changer. “It’s always with me,” he says. “Inspiration hits, and I’m ready to film.”

This integration extends beyond tools to mindset. For Hanagami, the ecosystem provides the reliability he needs in a fast-paced career. Whether it’s the instant feedback of Live Multicam in the studio or the precision of Beat Detection for class videos, these features allow him to focus on what matters: the artistry of movement.

For creators like Hanagami, the latest updates to Final Cut Pro and Apple Creator Studio aren’t just incremental improvements—they’re a redefinition of how choreography, filmmaking, and music production intersect. The result? A workflow that feels less like a series of tasks and more like an extension of the creative process itself.