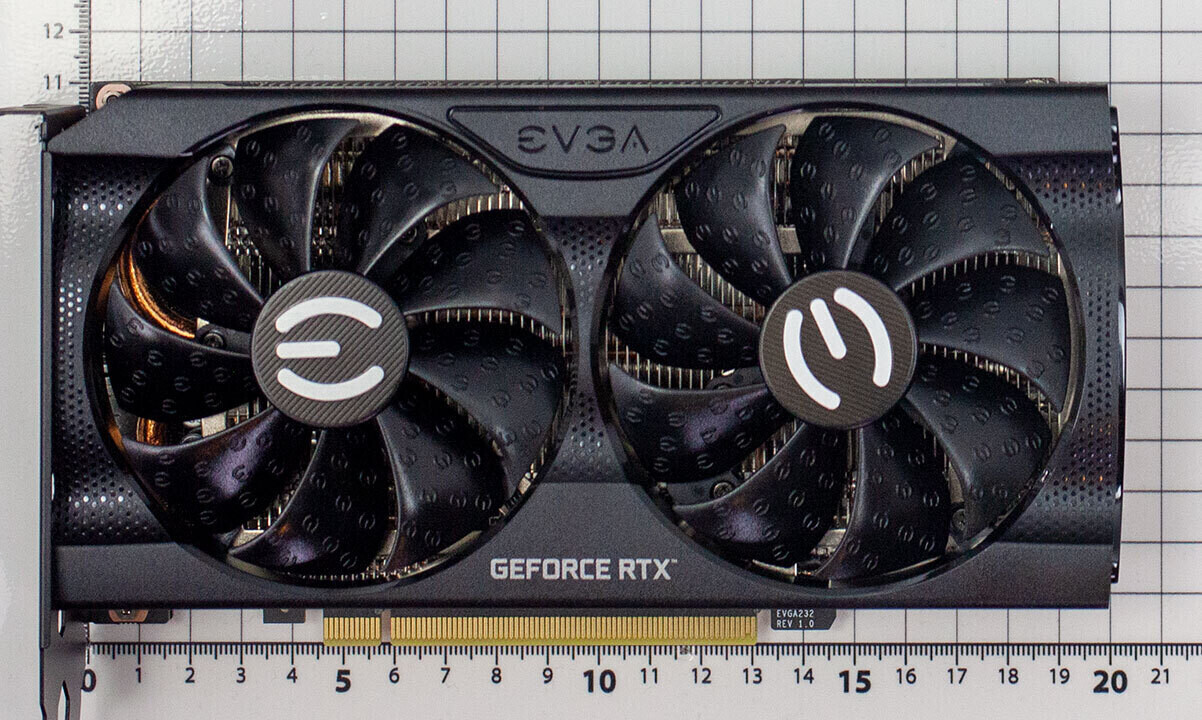

DLSS 5 is set to push the boundaries of real-time rendering, but whether it lives up to its potential will depend on how deeply it integrates with hardware pipelines. Unlike previous versions that relied on Tensor cores for upscaling, DLSS 5 is designed to rework GPU processing at a fundamental level, promising significant performance gains without sacrificing image quality.

At a glance:

- DLSS 5 could double rendering performance while maintaining near-lossless quality.

- Hardware integration is key—current implementations are still limited, raising questions about scalability.

- Enterprise users will demand stability and power efficiency over raw speed.

- Full adoption requires support across NVIDIA’s next-gen architectures, including Blackwell.

The critical factor is how much of the work moves deeper into hardware. DLSS 3 already used Tensor cores for upscaling, so the change is not a simple move from software to hardware acceleration; DLSS 5 takes a more aggressive approach by integrating directly into the rendering pipeline. This isn’t just about faster computation. It’s about redefining how GPUs handle data, from ray tracing to path tracing.

For enterprise buyers, the implications are substantial. A GPU that renders complex scenes twice as fast while consuming less power could revolutionize data centers and workstations. Early benchmarks suggest DLSS 5 may deliver on its promises, but real-world adoption remains uncertain. The challenge isn’t just technical: developers also have to be convinced to adopt a fundamentally different approach to rendering.
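To make the "twice as fast while consuming less power" scenario concrete, here is a minimal sketch of the energy-per-frame arithmetic an enterprise buyer might run. The function name `energy_per_frame_joules` and all wattage and frame-rate figures are hypothetical placeholders chosen for illustration; they are not measurements of DLSS 5 or of any real GPU.

```python
def energy_per_frame_joules(power_watts: float, frames_per_second: float) -> float:
    """Energy spent per rendered frame (a watt is one joule per second)."""
    if frames_per_second <= 0:
        raise ValueError("frames_per_second must be positive")
    return power_watts / frames_per_second


# Hypothetical figures for illustration only, not benchmark results.
baseline = energy_per_frame_joules(power_watts=300.0, frames_per_second=60.0)
# Assumed scenario: double the frame rate at 10% lower board power.
candidate = energy_per_frame_joules(power_watts=270.0, frames_per_second=120.0)

# Fraction of per-frame energy saved relative to the baseline.
savings = 1.0 - candidate / baseline

print(f"baseline:  {baseline:.2f} J/frame")
print(f"candidate: {candidate:.2f} J/frame")
print(f"energy saved per frame: {savings:.0%}")
```

Under these assumed numbers, per-frame energy falls from 5.00 J to 2.25 J, a 55% reduction. Most of that comes from the doubled frame rate rather than the lower power draw, which is why a speed claim and an efficiency claim should be evaluated together.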

Integration isn’t as simple as adding a new feature to existing chips. It requires a complete overhaul of how GPUs process workloads, which means DLSS 5 won’t be a quick fix. NVIDIA will need broad support across its ecosystem, including next-gen architectures like Blackwell, to ensure seamless compatibility and performance gains.

The real question is whether the benefits outweigh the complexity. For enterprise users, this could be a breakthrough or just another step in the evolution of upscaling technology. If DLSS 5 succeeds, it could redefine what’s possible in real-time rendering, but only if it works across NVIDIA’s supported architectures and delivers the promised performance without sacrificing image quality.