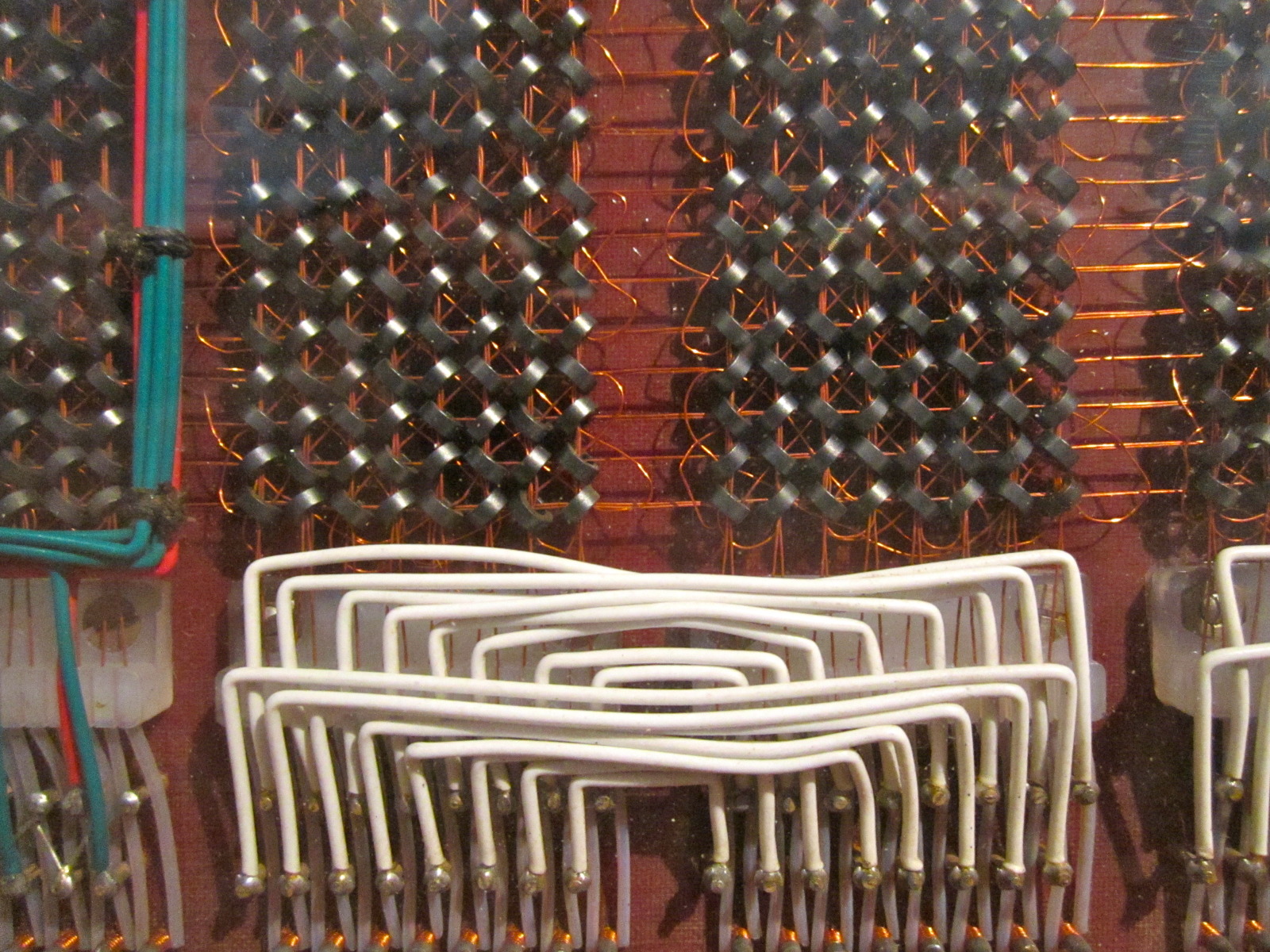

Enterprises deploying large-scale AI workloads now have a new option: a fully liquid-cooled infrastructure that promises high efficiency and scalability. ASUS, in collaboration with NVIDIA, has unveiled a range of solutions built around the NVIDIA Vera Rubin platform, aiming to meet the growing demand for high-performance AI clusters while reducing power consumption and total cost of ownership (TCO).

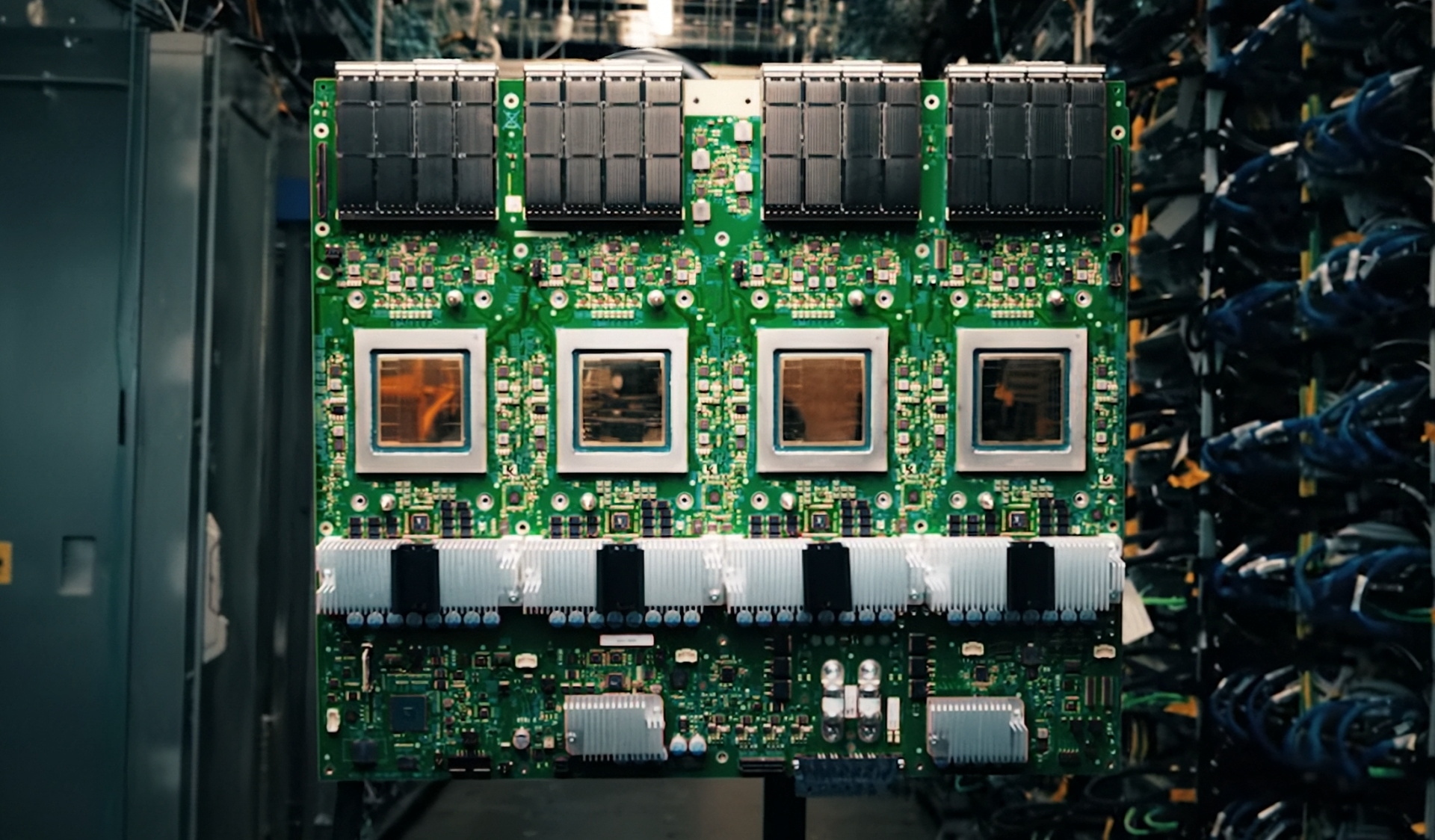

The partnership introduces several key products, including the ASUS AI POD, a rack-scale system designed for massive AI workloads. Built on the NVIDIA Vera Rubin NVL72 platform, it has a TDP of up to 227 kW (MaxP) or 187 kW (MaxQ), and the platform supports up to 10X higher performance per watt.
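To put the two power profiles in perspective, the sketch below converts the quoted TDP figures into annual energy. It assumes the rack runs continuously at its full TDP, which real deployments will not do. The function name and the `utilization` parameter are illustrative and not part of any ASUS or NVIDIA tool.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years

# TDP figures quoted for the ASUS AI POD, in kilowatts.
MAXP_KW = 227.0
MAXQ_KW = 187.0


def annual_energy_mwh(tdp_kw: float, utilization: float = 1.0) -> float:
    """Energy drawn over one year, in MWh, at a constant fraction of TDP.

    `utilization` is a hypothetical knob (1.0 = always at full TDP).
    """
    return tdp_kw * utilization * HOURS_PER_YEAR / 1000.0


maxp_mwh = annual_energy_mwh(MAXP_KW)
maxq_mwh = annual_energy_mwh(MAXQ_KW)
difference_mwh = maxp_mwh - maxq_mwh
reduction_pct = 100.0 * (MAXP_KW - MAXQ_KW) / MAXP_KW

print(f"MaxP: {maxp_mwh:.2f} MWh/year")
print(f"MaxQ: {maxq_mwh:.2f} MWh/year")
print(f"Difference: {difference_mwh:.2f} MWh/year ({reduction_pct:.1f}% lower)")
```

Under these assumptions, a rack in the MaxQ profile draws about 350 MWh less per year than one in MaxP, roughly 17.6% lower. This is an upper-bound comparison, since TDP is a design ceiling rather than a measured draw.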

Key Features and Specifications

- ASUS AI POD: A liquid-cooled, rack-scale system with a TDP of up to 227 kW (MaxP) or 187 kW (MaxQ).

- NVIDIA Vera Rubin NVL72 platform: Supports up to 10X higher performance per watt.

- Hybrid-cooled XA NR1I-E12L and fully liquid-cooled XA NR1I-E12LR solutions for a seamless transition to advanced cooling.

- ASUS ExpertCenter Pro ET900N G3: A deskside supercomputer powered by the NVIDIA Grace Blackwell Ultra platform, featuring 748 GB of coherent unified memory and NVLink-C2C interconnects.

The solution is also backed by a data ecosystem built on partnerships with NVIDIA-Certified storage providers such as IBM, DDN, WEKA, and VAST Data. These partnerships provide scalable, resilient storage for memory-intensive AI workloads, from edge to cloud.

Impact on AI Development and Deployment

The new infrastructure is designed to support the complete AI workflow, from initial model training to real-time inference. The ASUS Ascent GX10, powered by the NVIDIA Grace Blackwell Superchip, offers petaflop-scale performance suited to rapid model iteration. Meanwhile, the PE3000N, a ruggedized inference system powered by NVIDIA Jetson Thor, delivers 2,070 TFLOPS for real-time workloads such as sensor fusion and autonomous navigation.

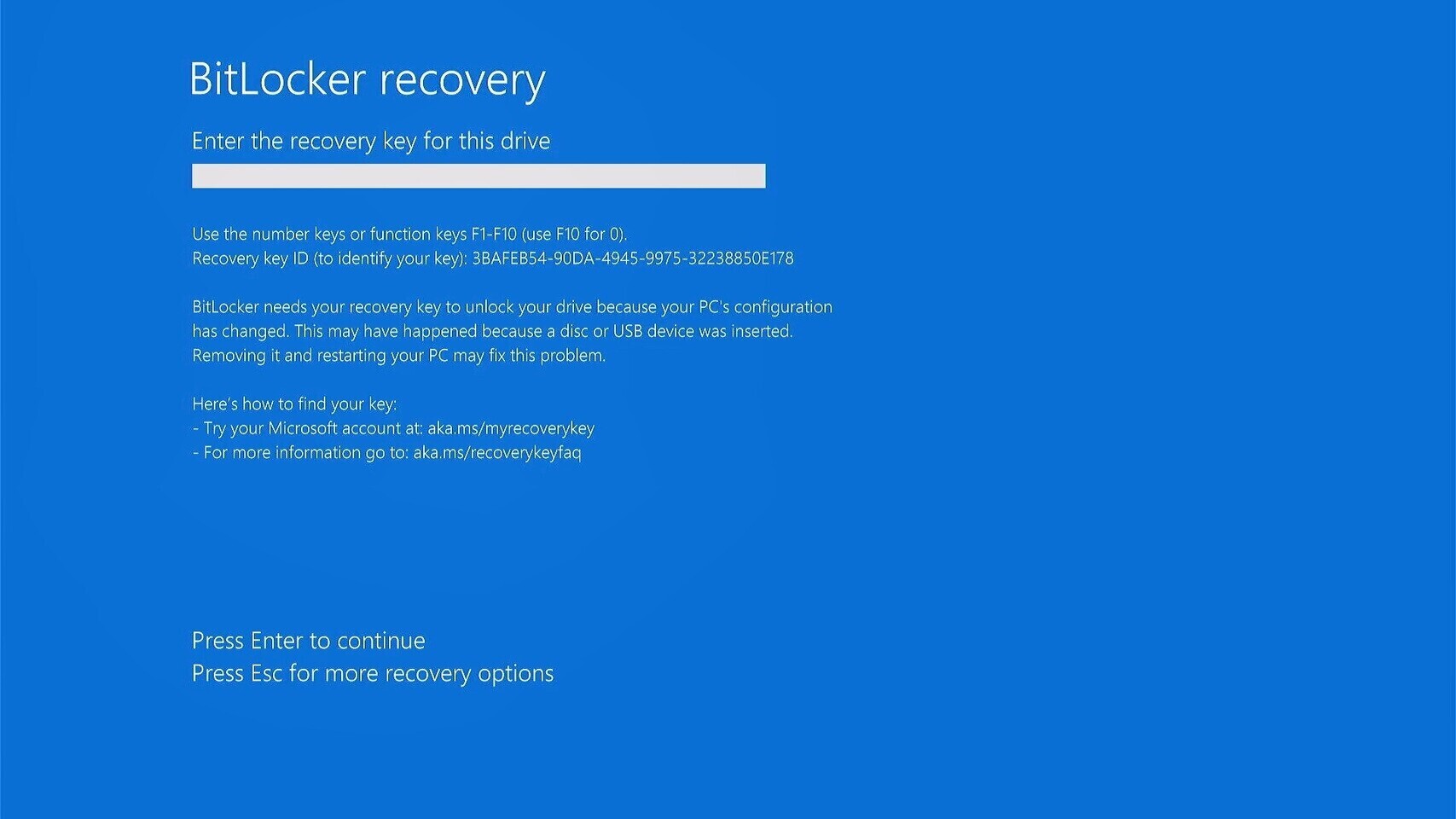

For enterprise AI applications, ASUS introduces the ASUS AI Hub, a turnkey on-premises platform optimized with ESC8000-series servers. The platform uses open LLMs such as NVIDIA Nemotron and Gemma, letting enterprises build custom AI assistants and implement retrieval-augmented generation (RAG) for document intelligence while maintaining data sovereignty.

Sustainability is a key focus of the partnership. ASUS servers feature Thermal Radar 2.0, which uses up to 56 sensors to optimize fan performance, cutting power consumption by up to 36% and saving approximately $29,000 annually in a 1,000-node cluster. In addition, the ASUS Control Center (ACC) Data Center Edition includes automated carbon emissions tracking to help enterprises meet their ESG goals.
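For a rough per-node view of the quoted savings, the sketch below divides the reported figure by the cluster size and scales it to other cluster sizes. Linear scaling is an assumption made here for illustration. Actual savings depend on electricity prices, workload, and facility design, none of which the announcement specifies, and the function name is hypothetical.

```python
# Figures quoted for Thermal Radar 2.0.
REPORTED_SAVINGS_USD = 29_000  # approximate annual savings
REPORTED_NODES = 1_000         # cluster size the figure refers to


def estimated_annual_savings(nodes: int) -> float:
    """Scale the reported savings linearly to a cluster of `nodes` servers.

    Assumption: savings are proportional to node count.
    """
    per_node = REPORTED_SAVINGS_USD / REPORTED_NODES
    return per_node * nodes


for size in (250, 1_000, 4_000):
    print(f"{size:>5} nodes: ~${estimated_annual_savings(size):,.0f} per year")
```

The quoted figure works out to about $29 per node per year, so the savings become material mainly at the scale of hundreds or thousands of nodes.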

The collaboration between ASUS and NVIDIA marks a significant step toward high-performance, energy-efficient AI infrastructure. By combining advanced liquid cooling with scalable, resilient storage, the partnership aims to give enterprises and cloud providers a practical path to meeting the growing demands of large-scale AI workloads.