Enterprises are no longer just chasing raw performance in AI inference—they’re also demanding systems that stay cool, consume power wisely, and adapt to the realities of today’s hardware shortages. ASUS’ latest innovation, the AI Inference Platform, delivers on all three by rethinking how enterprise-grade AI deployments should work from the ground up.

At its core, this platform is built around modularity—a feature that takes on heightened importance in an era where component availability is unpredictable. Instead of locking buyers into rigid configurations, it allows organizations to mix and match GPUs, storage, and cooling solutions based on what’s available at any given time. That flexibility isn’t just a convenience; it’s a necessity for teams trying to deploy AI models without being held hostage by supply chain bottlenecks.

Performance Without the Trade-Offs

- GPU: NVIDIA L40S with 48GB GDDR6 memory, optimized for sustained inference tasks

- Storage: PCIe 5.0 SSDs scaling up to 8TB, designed for high-throughput AI workloads

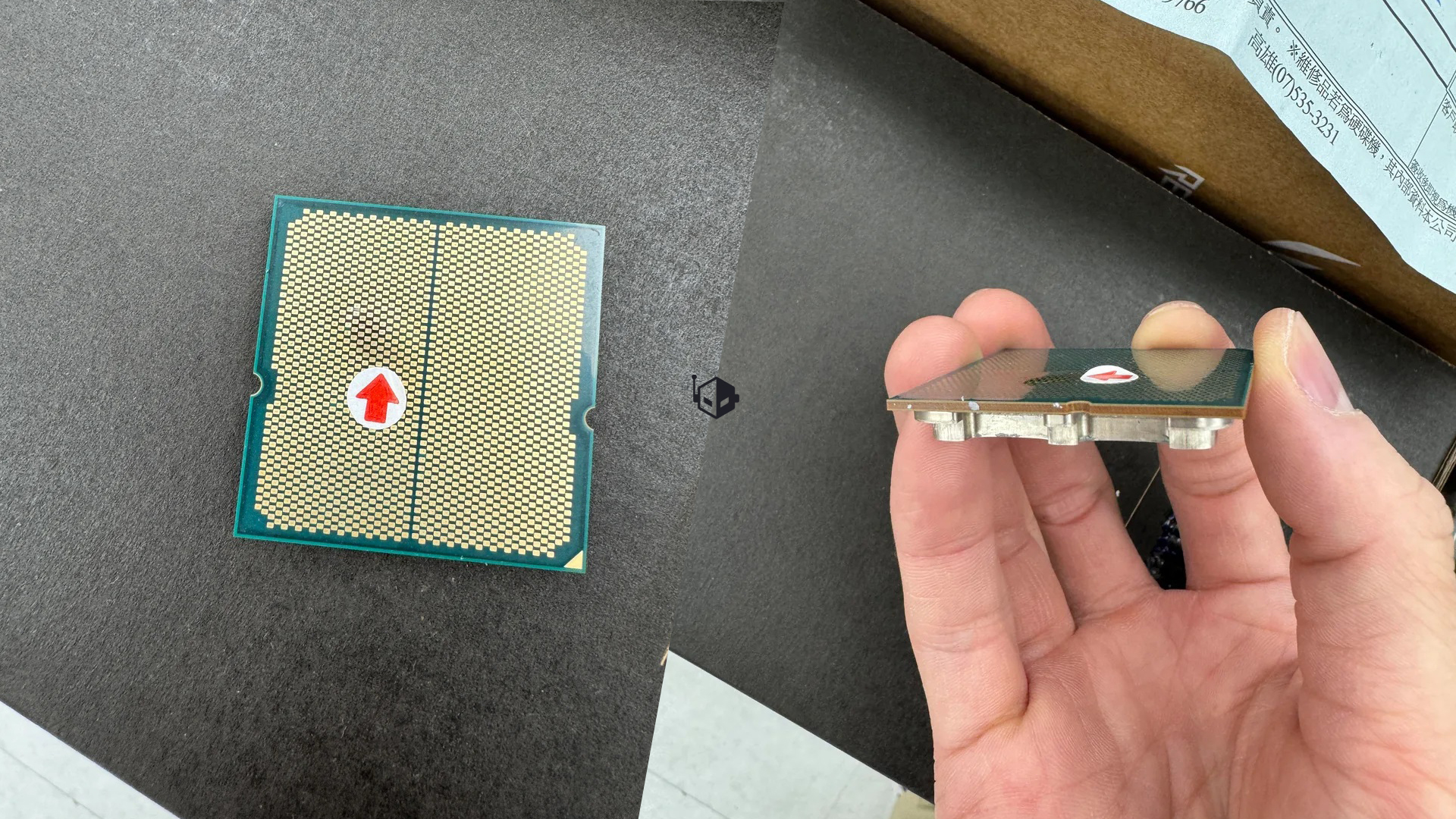

- Thermal Management: Dynamic heat-sinking paired with adaptive fan control, keeping temperatures under tight control even during prolonged use

- Power Efficiency: Targeted sub-200W TDP, ensuring stable operation without thermal throttling

The platform’s approach to thermal management is where it truly stands out. Traditional AI servers often struggle with heat buildup as workloads increase, leading to performance degradation or system instability. ASUS has addressed this by integrating dynamic heat-sinking and adaptive fan control, which adjust in real time based on workload intensity. Benchmark results show the platform maintains consistent performance even during extended inference sessions—something enterprise customers can’t afford to compromise on.
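ASUS has not published how its adaptive fan control works, so the following is only a generic illustration of the idea described above: fan speed that rises with both workload intensity and temperature. It is a minimal sketch assuming a simple proportional controller with a load-based feed-forward term. The class name `FanController`, the 70 °C setpoint, the duty-cycle limits, and the gain are all hypothetical values chosen for illustration, not ASUS specifications.

```python
from dataclasses import dataclass


@dataclass
class FanController:
    """Hypothetical proportional fan controller; NOT ASUS's actual logic."""
    target_c: float = 70.0   # assumed GPU temperature setpoint (deg C)
    min_duty: float = 0.30   # assumed floor so fans never stop entirely
    max_duty: float = 1.00   # full fan speed
    gain: float = 0.05       # assumed duty increase per deg C above setpoint

    def duty(self, temp_c: float, load: float) -> float:
        """Return a fan duty cycle clamped to [min_duty, max_duty].

        temp_c: current GPU temperature in deg C.
        load:   workload intensity in [0, 1], e.g. GPU utilization.
        """
        # Feed-forward term: raise the baseline in proportion to load
        # (up to half of the available range) before heat builds up.
        base = self.min_duty + (self.max_duty - self.min_duty) * 0.5 * load
        # Feedback term: add speed in proportion to how far the
        # temperature exceeds the setpoint; no correction below it.
        error = temp_c - self.target_c
        correction = self.gain * error if error > 0 else 0.0
        # Clamp the result to the allowed duty-cycle range.
        return max(self.min_duty, min(self.max_duty, base + correction))
```

For example, `FanController().duty(75.0, 0.5)` returns a higher duty cycle than `FanController().duty(70.0, 0.5)`, because the 5 °C excess over the setpoint adds a feedback correction on top of the load-based baseline. A real controller would also need periodic sensor polling and some smoothing or hysteresis, which are omitted here.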

Why This Matters for Enterprises Today

The AI Inference Platform arrives at a pivotal moment. Organizations are increasingly looking for ways to scale AI deployments without breaking the bank or getting stuck in hardware limbo. By decoupling performance requirements from immediate supply constraints, ASUS has created a system that can adapt to changing market conditions rather than be held back by them.

That said, the platform isn’t a magic bullet for current shortages. The NVIDIA L40S GPU it supports remains one of the most sought-after components in the market, and its availability is still unpredictable. Enterprises adopting this solution will need to balance patience with planning—treating it as an investment in adaptability rather than an immediate fix for supply chain pain points.

Beyond its practical benefits, the platform signals a broader industry shift toward systems designed for longevity over short-term performance spikes. As AI models grow more complex and power-hungry, thermal efficiency will become just as critical as computational horsepower. If this trend catches on, we’ll likely see more platforms prioritize balanced design over raw metrics—a change that could redefine what enterprise buyers expect from their hardware.

The ASUS AI Inference Platform doesn’t just offer a new way to run AI workloads; it offers a blueprint for how enterprises can navigate an uncertain hardware landscape while keeping performance and efficiency in lockstep. For forward-thinking IT teams, it’s less about solving today’s problems and more about preparing for tomorrow’s challenges.