Google’s Cloud Next event this year has been a quiet turning point for Apple’s Siri. While the tech world often focuses on new hardware launches or software updates, the real story lies beneath the surface: the slow but steady migration of Siri toward Google’s Gemini AI models.

The shift is subtle, but its implications are far-reaching. For years, Siri has been Apple’s flagship AI assistant, deeply integrated into iPhones, iPads, and HomePods. Yet behind the scenes, Apple has been working to align Siri with Google’s Gemini, a move that could reshape how AI assistants function across platforms.

One of the most noticeable changes for users is the way Siri processes queries. Previously, Siri relied on its own neural networks and data pipelines. Now, it increasingly leverages Google’s infrastructure, particularly for natural language understanding and response generation. This could mean faster, more accurate responses, but it also creates a closer dependency on Google’s backend systems.

For buyers, the transition raises practical questions. Will Siri remain as responsive as before? How will this shift affect device performance, especially in regions where Google’s cloud infrastructure is less robust? And what does it mean for Apple’s long-term strategy in AI?

Supply Chain Ripples

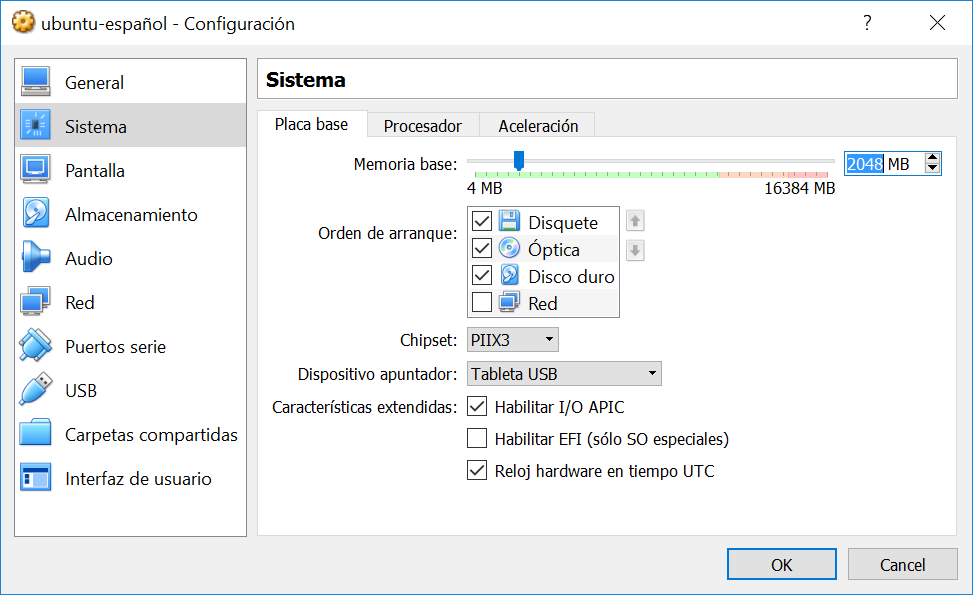

The move also sends ripples through the supply chain. Apple has historically been a vertically integrated company, controlling much of its own software and hardware development. By outsourcing parts of Siri’s AI processing to Google, Apple is effectively handing part of one of its core technologies to an outside partner.

This isn’t just about performance, though. It’s also about scalability. Google’s Gemini is designed to handle massive volumes of data, which could allow Siri to improve more quickly and adapt to new languages or dialects without Apple bearing the full development burden. However, this shift introduces a new layer of complexity: users may notice slight variations in how Siri behaves depending on their location or network conditions.

Comparing the Ecosystems

Siri has always been a unique player among AI assistants. Google Assistant and Amazon’s Alexa are each built around their parent company’s core business (search and smart home devices, respectively), and Siri was likewise built from the ground up as Apple’s own creation. Now, that independence is fading.

- Google Assistant: Deeply tied to search, with real-time data updates and a vast knowledge graph.

- Amazon Alexa: Focused on smart home automation, with strong third-party skill integration.

- Apple Siri (now Gemini-backed): Balancing Apple’s ecosystem with Google’s AI capabilities, aiming for precision in voice commands and device control.

The question for buyers is whether this transition will make Siri more powerful or more vulnerable. On one hand, leveraging Google’s infrastructure could accelerate innovation and improve accuracy. On the other, it introduces a dependency that Apple has never had before, one that could affect both performance and data privacy.

What Buyers Need to Know

The shift to Gemini is still in its early stages, but users should expect some changes in how Siri operates. For example, responses may feel slightly more dynamic, with better context-aware follow-ups. Those who rely on Siri’s deep integration with Apple devices, such as HomeKit or iCloud reminders, shouldn’t see major disruptions.

For now, the biggest impact is likely to be under the hood. Apple remains committed to its ecosystem, but the collaboration with Google signals a new era where AI assistants are no longer siloed within single-company frameworks. This could mean faster updates, broader compatibility, and even cross-platform features in the future.

As the transition continues, buyers should watch how it affects device availability and performance. Siri’s core functionality will likely remain intact, but the shift to Gemini adds a layer of uncertainty that Apple has not faced before. Those who value control over their AI experience may want to follow these changes closely.