Energy consumption in AI-driven infrastructure is set to more than double, reaching 120 terawatt-hours annually by 2027. This projection, based on current trends in model training and deployment, underscores the scale of the challenge ahead. Unlike previous technological shifts, this increase isn't just about raw power—it's about redefining how energy is generated, distributed, and utilized to support AI workloads.
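
To make the arithmetic behind "more than double" concrete, here is a minimal sketch. The 120 TWh target comes from the projection above. The three-year horizon is an assumption for illustration only, since the base year is not stated, and the function names are hypothetical.

```python
def implied_baseline_twh(target_twh: float, growth_factor: float) -> float:
    """Starting consumption implied by a target and an overall growth factor."""
    return target_twh / growth_factor


def annual_growth_rate(growth_factor: float, years: int) -> float:
    """Compound annual growth rate that yields growth_factor over `years` years."""
    return growth_factor ** (1 / years) - 1


TARGET_TWH = 120.0     # projection quoted in the text
GROWTH_FACTOR = 2.0    # "more than double" means at least this factor
ASSUMED_YEARS = 3      # assumption: the text does not give the base year

# Because growth is *more than* double, this is an upper bound on the baseline.
baseline_ceiling = implied_baseline_twh(TARGET_TWH, GROWTH_FACTOR)

# Minimum yearly growth needed to double over the assumed horizon.
min_rate = annual_growth_rate(GROWTH_FACTOR, ASSUMED_YEARS)

print(f"Baseline is below {baseline_ceiling:.0f} TWh")
print(f"Minimum annual growth over {ASSUMED_YEARS} years: {min_rate:.1%}")
```

Under these assumptions, the starting point is below 60 TWh, and consumption would need to grow by roughly a quarter each year. A different horizon changes the rate but not the baseline bound.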

The five-layered architecture serves as the blueprint for this transformation. The first layer, energy infrastructure, must evolve to accommodate the exponential growth in demand. This involves not only expanding renewable energy sources but also integrating smart grids capable of dynamically adjusting supply based on real-time computational needs. The second layer, computing infrastructure, is already undergoing a radical redesign, with data centers shifting from traditional server-based models to more modular, liquid-cooled systems optimized for AI workloads.

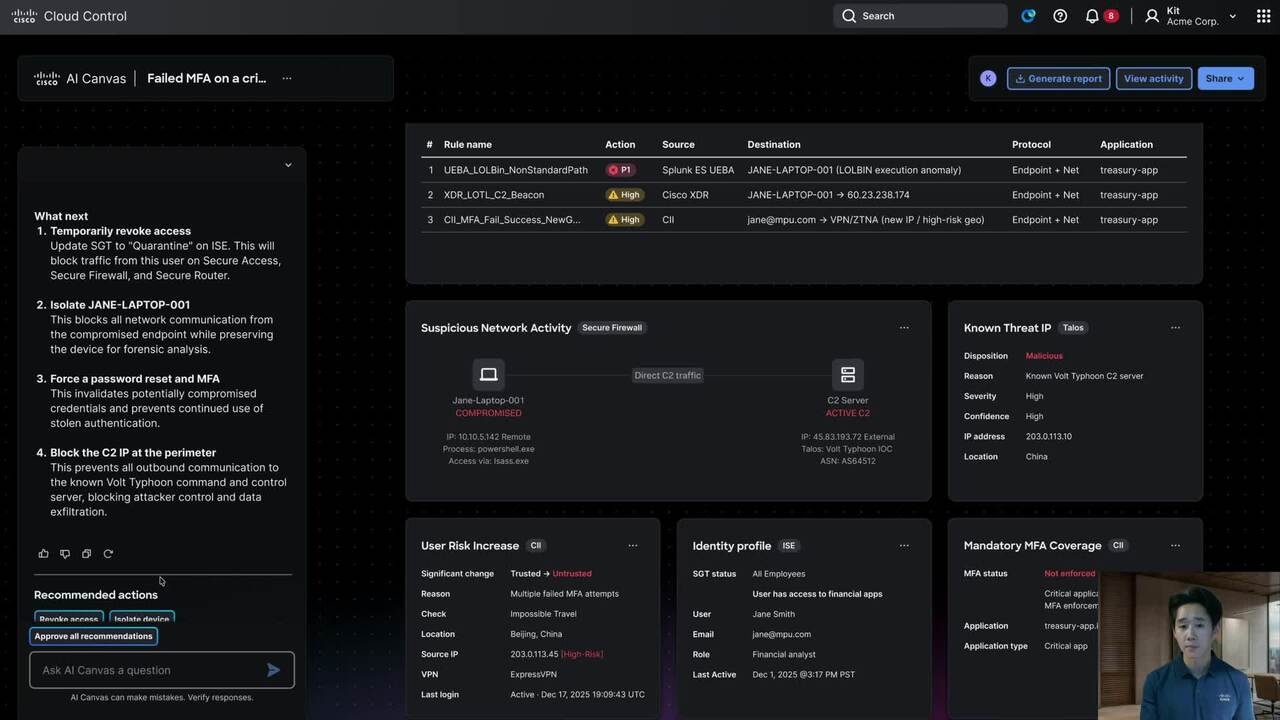

Parallel to these developments, the third and fourth layers—software stacks and network topologies—are being re-engineered to support the distributed nature of modern AI applications. This includes advancements in software-defined networking, which allows for more efficient data flow between compute nodes, and the adoption of specialized algorithms that reduce latency while maintaining performance. The final layer, application innovation, is where the true potential of this infrastructure begins to manifest.

AI models are becoming increasingly complex, with some exceeding 100 trillion parameters. These models require not only more powerful hardware but also innovative cooling solutions to prevent thermal throttling. Liquid-cooled data centers, for example, are now standard in high-performance computing environments, reducing energy waste by up to 30% compared to traditional air-cooled setups. However, the environmental impact remains a critical concern, pushing the industry toward more sustainable practices such as using immersion cooling with biodegradable fluids and leveraging AI itself to optimize energy usage.
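
As a rough illustration of the "up to 30%" figure, the sketch below applies that reduction to a facility's wasted energy. The 1,000 MWh starting value is a made-up number chosen only to keep the arithmetic readable, and the function name is hypothetical. The 30% is treated as a best case, not a typical result.

```python
def wasted_energy_after_liquid_cooling(
    air_cooled_waste_mwh: float, reduction: float = 0.30
) -> float:
    """Wasted energy remaining after a fractional reduction from liquid cooling."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be a fraction between 0 and 1")
    return air_cooled_waste_mwh * (1.0 - reduction)


AIR_COOLED_WASTE_MWH = 1000.0  # hypothetical annual waste for an air-cooled site

best_case = wasted_energy_after_liquid_cooling(AIR_COOLED_WASTE_MWH)          # full 30%
modest_case = wasted_energy_after_liquid_cooling(AIR_COOLED_WASTE_MWH, 0.10)  # assumed 10%

print(f"Best case:   {best_case:.0f} MWh wasted")
print(f"Modest case: {modest_case:.0f} MWh wasted")
```

Because the figure is an upper bound, the actual saving at a given site could fall anywhere between zero and the best case shown here.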

The implications of this infrastructure buildout extend far beyond technical specifications. It promises to unlock new capabilities in fields like healthcare, where AI-driven diagnostics could revolutionize patient care, or autonomous systems, where real-time processing powers next-generation robotics. Yet, the path forward is not without obstacles. Balancing the need for scalability with sustainability will require unprecedented collaboration between technology companies, energy providers, and policymakers.

NVIDIA stands at the center of this transformation, driving innovations in GPUs, data center design, and AI software that are critical to the success of this framework. Its latest GPU architectures, such as the Blackwell series, are designed to deliver up to 100 teraflops of performance while significantly reducing power consumption per operation. These advancements are a testament to the company's role in shaping the future of AI infrastructure.

The journey ahead is one of both opportunity and responsibility. The potential rewards—faster, more capable AI systems that can tackle complex global challenges—are immense. However, ensuring that this progress does not come at the cost of environmental sustainability will define the trajectory of this revolution. If executed thoughtfully, this infrastructure buildout could mark a turning point in how technology intersects with society, setting the stage for an era where AI is both a tool and a force for positive change.