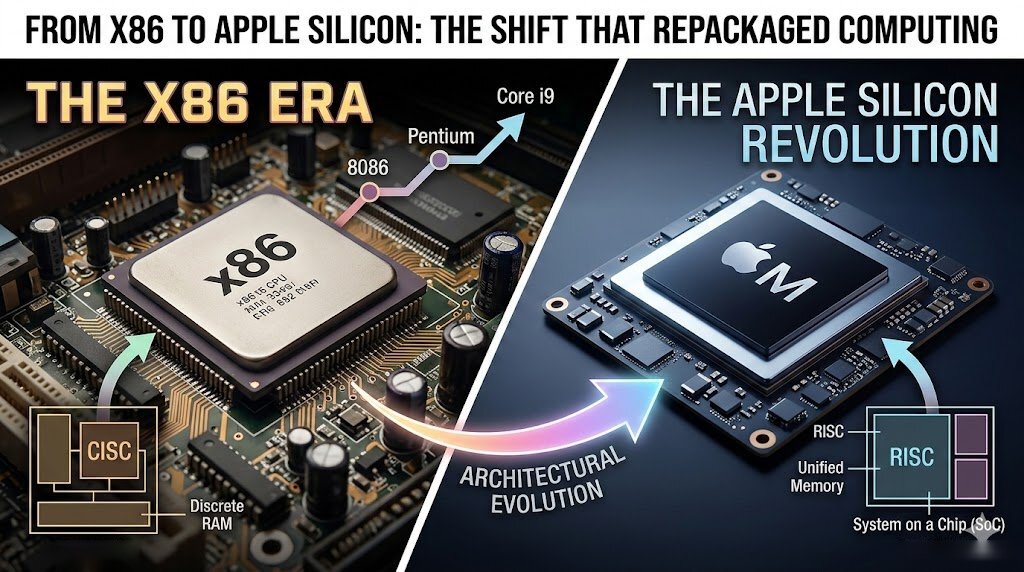

design is at a turning point. The days of chasing ever-higher gigahertz ratings have given way to a focus on efficiency, parallelism, and specialized execution units that can handle everything from integer math to vectorized AI operations.

Today’s processors are no longer just one thing that does everything. They’re heterogeneous systems with distinct front-end, execution, and memory-handling components working in lockstep. The goal isn’t just more instructions per second—it’s smarter execution, better power management, and the ability to scale from mobile devices to data centers.

At a glance

- Architecture: Modern CPUs use superscalar, out-of-order designs with dynamic branch prediction and register renaming.

- Execution units: Multiple ALUs, FPUs, vector/SIMD units for parallel workloads.

- Memory: 24-bit address buses (in some legacy designs), 64-bit addressing in modern chips, with deep cache hierarchies (L1/L2/L3).

- Performance: Focus on instructions per cycle (IPC) over raw clock speed; 10 GHz+ clocks possible but efficiency is prioritized.

The shift from clock-speed obsession to architectural innovation is reshaping how CPUs are built and what they can do. Instead of longer pipelines, today’s designs emphasize wider execution units, better branch prediction, and specialized hardware for AI, encryption, and multimedia.

What changed?

Modern CPU cores no longer rely on a single, rigid fetch-decode-execute cycle. They’ve evolved into dynamic systems where

- Instruction prefetch queues and speculative execution keep pipelines full by guessing branch outcomes ahead of time.

- Register renaming eliminates false dependencies, allowing more instructions to execute in parallel.

- Vector/SIMD units (like Intel’s AVX or ARM’s NEON) process multiple data elements at once, crucial for AI and multimedia workloads.

- Cache hierarchies (L1/L2/L3) reduce memory latency by holding frequently used data close to execution units.

This isn’t just about raw performance—it’s about efficiency. A 10 GHz Pentium 4 might have sounded impressive, but its long pipeline and high power draw made it impractical for most use cases. Today’s designs prioritize lower voltage, better thermals, and sustained throughput over peak clock speeds.

Who benefits?

Developers building performance-critical applications—whether for AI inference, encryption, or multimedia processing—stand to gain the most. The move toward specialized execution units means that certain workloads can be offloaded to dedicated hardware, reducing CPU load and improving power efficiency.

For example, a developer working on an AI model will notice faster training times not because of higher clock speeds, but because the CPU’s vector units can process large arrays of data in parallel. Similarly, encryption tasks benefit from SIMD acceleration, while general-purpose workloads rely on the core’s out-of-order execution and branch prediction.

What remains unclear

The biggest question isn’t whether these architectures will deliver performance gains—it’s how quickly software can adapt. Legacy x86 code still runs on modern CPUs, but the full potential of new instruction sets (like AVX-512 or ARM’s SVE) depends on compiler optimizations and developer adoption.

Additionally, while 10 GHz+ clocks are theoretically possible with advanced manufacturing, real-world power constraints mean that most high-end CPUs now balance clock speed with efficiency. The future will likely see a mix of ultra-high-frequency cores for specific tasks alongside efficiency-focused designs for general workloads.

What to watch

The pricing and availability of CPUs with advanced vector/SIMD units, as well as compiler support for newer instruction sets, will be key indicators of the industry’s direction. Developers and hardware manufacturers alike must navigate this transition carefully, ensuring that the promise of efficiency-driven performance is realized without sacrificing compatibility or flexibility.