A multi-layered model of AI computing has emerged that could reshape how systems are designed and deployed. The framework breaks the AI stack into distinct layers, each with its own performance characteristics and optimization strategies.

The model introduces five key layers: Data Ingestion, Preprocessing, Feature Extraction, Model Execution, and Output Optimization. Each layer presents unique challenges for hardware manufacturers, software developers, and system integrators alike.

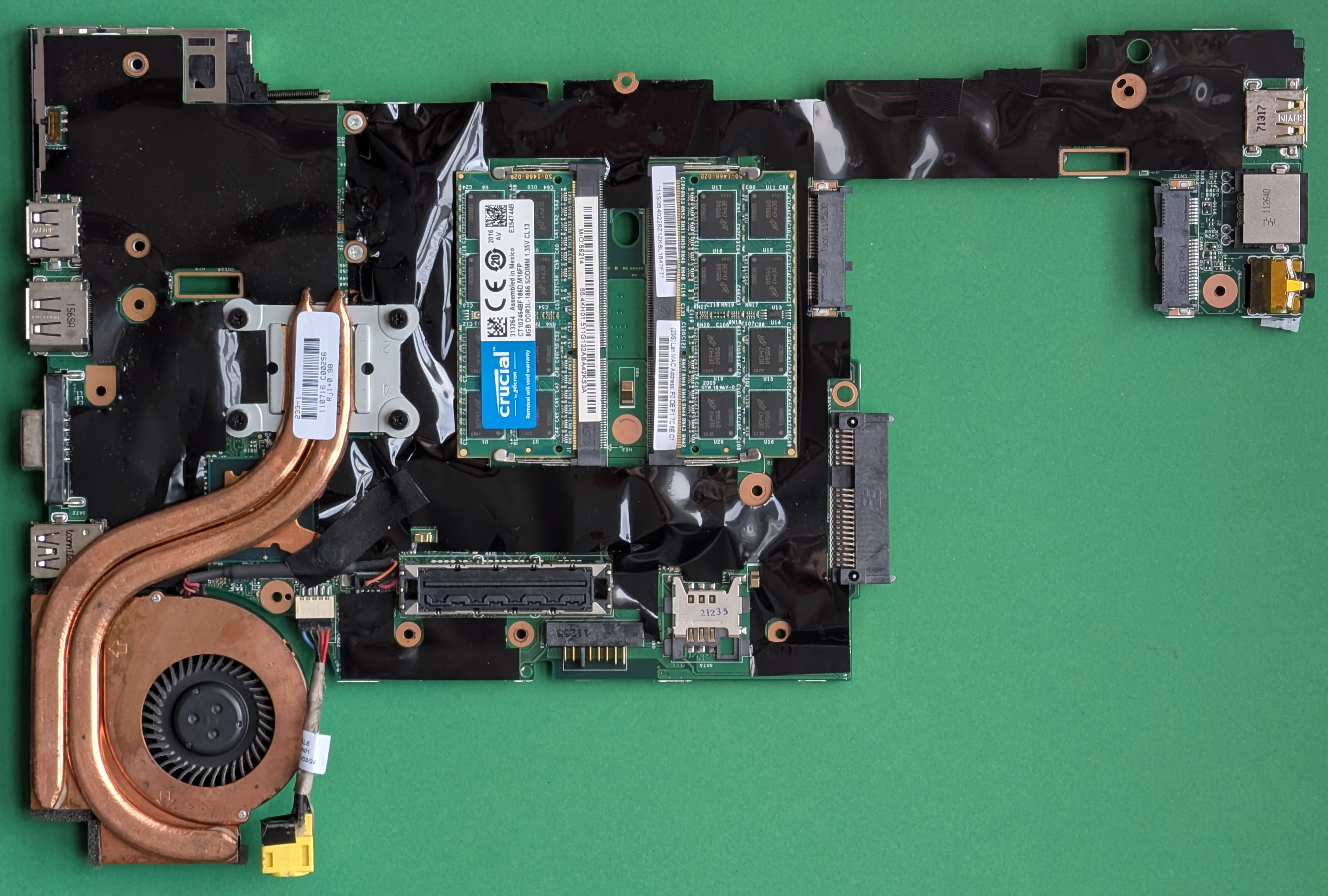

At the foundation lies Data Ingestion, where raw data is collected and brought into the system. This layer demands high-bandwidth interfaces and efficient memory management to handle diverse input formats. The next layer, Preprocessing, cleans and normalizes the data, a step that can significantly degrade overall system performance if it is not optimized.

Feature Extraction follows, where the most relevant information is distilled from the dataset. This is often the most computationally intensive part of the pipeline, requiring powerful processing units capable of handling complex mathematical operations at scale. Model Execution then takes those features and runs them through trained neural networks, a process that can be both memory-intensive and latency-sensitive.

The final layer, Output Optimization, focuses on refining the results for end-users. This could involve post-processing techniques to enhance accuracy or compress outputs for faster transmission. Together, these layers form a cohesive framework that system builders must navigate when designing AI-ready infrastructure.
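To make the hand-off between layers concrete, here is a minimal Python sketch of the five layers as a chain of functions. The layer names come from the model itself. Everything inside each function is a hypothetical stand-in chosen for illustration and is not part of the framework: comma-separated parsing, z-score normalization, a mean/max summary, a two-weight linear scorer, and rounding.

```python
from statistics import mean, pstdev


def ingest(raw_lines):
    """Data Ingestion: parse raw comma-separated text into rows of floats.
    (Assumed input format, for illustration only.)"""
    return [[float(x) for x in line.split(",")] for line in raw_lines if line.strip()]


def preprocess(rows):
    """Preprocessing: z-score normalize each column (assumed cleaning step)."""
    columns = list(zip(*rows))
    # Fall back to 1.0 when a column has zero spread, to avoid dividing by zero.
    stats = [(mean(col), pstdev(col) or 1.0) for col in columns]
    return [[(v - m) / s for v, (m, s) in zip(row, stats)] for row in rows]


def extract_features(rows):
    """Feature Extraction: reduce each row to a (mean, max) pair (assumed features)."""
    return [(mean(row), max(row)) for row in rows]


def execute_model(features, weights=(0.5, 0.5), bias=0.0):
    """Model Execution: a stand-in linear scorer, not a trained neural network."""
    return [weights[0] * f[0] + weights[1] * f[1] + bias for f in features]


def optimize_output(scores, digits=3):
    """Output Optimization: round scores so the output is more compact."""
    return [round(score, digits) for score in scores]


# The five layers, in the order the model describes them.
LAYERS = [ingest, preprocess, extract_features, execute_model, optimize_output]


def run_pipeline(raw_lines):
    """Pass data through every layer in order; each output feeds the next layer."""
    data = raw_lines
    for layer in LAYERS:
        data = layer(data)
    return data


if __name__ == "__main__":
    print(run_pipeline(["1,2", "3,4"]))
```

Because each layer only consumes the previous layer's output, any one stage could in principle be swapped for a hardware-accelerated implementation without touching the others.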

For PC builders and system integrators, the model offers a roadmap for future-proofing their designs. By focusing on each layer's specific requirements, they can make informed hardware choices, such as picking the right GPU for Feature Extraction or optimizing memory bandwidth for Data Ingestion. The framework also serves as a guide for software developers, helping them align their algorithms with the underlying hardware capabilities.
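One way a builder might decide which layer deserves the hardware budget is to time each stage on a representative workload. The sketch below is only an illustration of that idea: `profile_layers` and `slowest_layer` are hypothetical helper names, and the two toy stages stand in for real layer implementations.

```python
import time


def profile_layers(layers, data):
    """Run (name, function) stages in order and record wall-clock seconds per stage."""
    timings = {}
    for name, fn in layers:
        start = time.perf_counter()
        data = fn(data)
        timings[name] = time.perf_counter() - start
    return data, timings


def slowest_layer(timings):
    """Return the name of the stage that took the longest."""
    return max(timings, key=timings.get)


def slow_feature_stage(values):
    """Toy stage that simulates heavy computation with a short sleep."""
    time.sleep(0.05)
    return [v * v for v in values]


if __name__ == "__main__":
    # Hypothetical two-stage workload; real layers would replace these.
    stages = [
        ("Preprocessing", lambda values: [v / 10 for v in values]),
        ("Feature Extraction", slow_feature_stage),
    ]
    result, timings = profile_layers(stages, [10, 20, 30])
    print(result, slowest_layer(timings))
```

Wall-clock timing on a single run is noisy, so in practice the measurement would be repeated over several runs and over input sizes that match the intended deployment.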

Early adopters are already seeing benefits from this structured approach. For instance, certain GPUs have been optimized specifically for Feature Extraction, leading to measurable improvements in training times and inference speeds. Meanwhile, advancements in memory technologies are addressing the bottlenecks at the Data Ingestion layer, enabling smoother data pipelines.

As the AI landscape continues to evolve, this five-layer model may become a standard reference point for industry discussions. It provides a common language for developers, hardware engineers, and end-users to discuss performance trade-offs and optimization strategies. For those looking to build or upgrade systems with AI in mind, understanding these layers will be crucial in making decisions that balance cost, performance, and scalability.

The most immediate beneficiaries of this framework are likely to be enterprise-grade AI deployments, where the need for high-performance, scalable infrastructure is paramount. However, as the model gains wider adoption, it could also influence consumer-facing AI applications, leading to more efficient and responsive systems across the board.