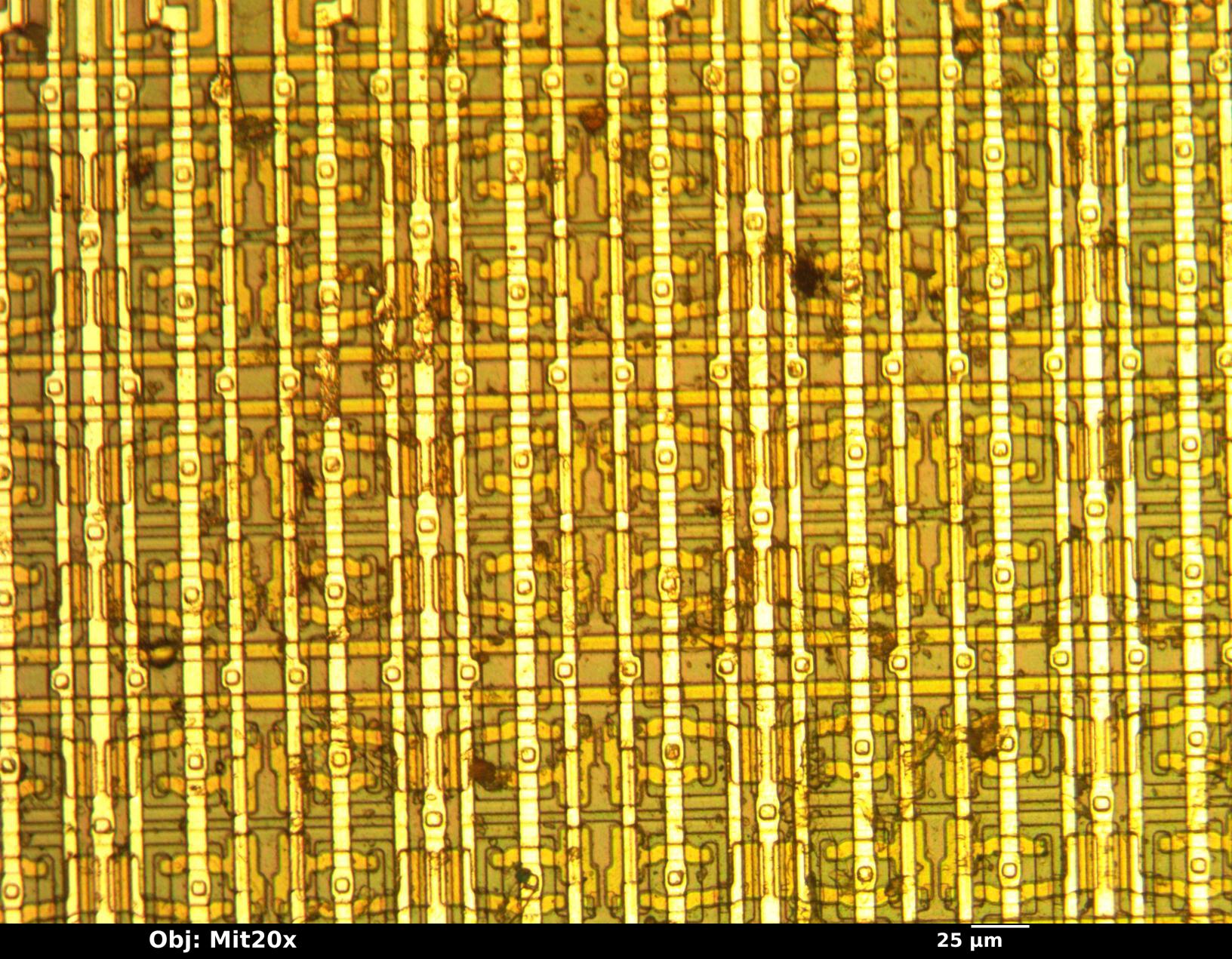

Samsung’s latest **HBM4** memory modules have passed NVIDIA’s rigorous quality checks, clearing the way for integration into the company’s **Vera Rubin** AI accelerator platform. The verification, confirmed by South Korean industry sources, marks a critical milestone in Samsung’s push to reclaim leadership in high-performance memory as demand from AI workloads surges.

This development follows months of speculation about Samsung’s ability to compete in the **HBM** market, where the company trailed rivals during the **HBM3** and **HBM3E** eras. The new **HBM4** modules, which stack DRAM layers on a logic base die built on a **4 nm** process, now meet NVIDIA’s exacting specifications, including the platform’s **22 TB/s** memory-bandwidth target announced at **CES 2026**. Samsung has not officially disclosed how much its **HBM4** contributes to that figure, but industry observers suggest its **11.7 Gb/s** per-pin speed, well above the **8 Gb/s** specified in the JEDEC **HBM4** standard, could be a key factor.
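To see why per-pin speed matters for a **22 TB/s** target, here is a rough Python sketch of peak bandwidth. It assumes the 2,048-bit per-stack interface defined for **HBM4** and, purely as a hypothetical for illustration, eight **HBM4** stacks per Rubin GPU. NVIDIA has not explained how its figure is reached, and real sustained bandwidth is lower than these peak numbers.

```python
# Back-of-the-envelope HBM4 bandwidth model (peak, not sustained).
# HBM4_INTERFACE_BITS follows the 2,048-bit per-stack HBM4 interface;
# STACKS_PER_GPU = 8 is an ASSUMPTION for illustration, not an NVIDIA figure.

HBM4_INTERFACE_BITS = 2048  # data width of one HBM4 stack, in bits
STACKS_PER_GPU = 8          # hypothetical stack count per Rubin GPU


def stack_bandwidth_tbps(pin_rate_gbps: float, width_bits: int = HBM4_INTERFACE_BITS) -> float:
    """Peak bandwidth of one stack in TB/s: pin rate x width, bits -> bytes, GB -> TB."""
    return pin_rate_gbps * width_bits / 8 / 1000


def gpu_bandwidth_tbps(pin_rate_gbps: float, stacks: int = STACKS_PER_GPU) -> float:
    """Peak aggregate bandwidth across all stacks attached to one GPU, in TB/s."""
    return stacks * stack_bandwidth_tbps(pin_rate_gbps)


def required_pin_rate_gbps(target_tbps: float, stacks: int = STACKS_PER_GPU,
                           width_bits: int = HBM4_INTERFACE_BITS) -> float:
    """Per-pin rate in Gb/s needed to reach a target aggregate bandwidth in TB/s."""
    return target_tbps * 1000 * 8 / (stacks * width_bits)


if __name__ == "__main__":
    # Compare a few illustrative per-pin rates against the 22 TB/s target.
    for rate in (8.0, 10.0, 11.7):
        print(f"{rate:>5.1f} Gb/s per pin -> {gpu_bandwidth_tbps(rate):.2f} TB/s per GPU")
    print(f"22 TB/s needs ~{required_pin_rate_gbps(22.0):.2f} Gb/s per pin")
```

Under these assumptions, 11.7 Gb/s per pin gives roughly 23.96 TB/s, clearing the target with some headroom. At 10 Gb/s the total is about 20.48 TB/s, which falls short, and the break-even rate is about 10.74 Gb/s. A different stack count or interface width changes these results, so treat them as illustrative only.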

What does this mean for NVIDIA’s roadmap?

Mass production of Samsung’s **HBM4** modules is expected to begin next month, with full-scale supply ramping up by mid-2026. The timing aligns closely with NVIDIA’s plans for **Vera Rubin**, a platform designed to succeed its current **Blackwell** architecture, which relies on **HBM3E**. Early batches may debut at **GTC 2026** (March 16–19), where NVIDIA typically showcases its most advanced hardware. Demonstrations could highlight the platform’s improved bandwidth and efficiency, particularly for AI training and inference tasks.

Who stands to benefit?

The partnership directly supports NVIDIA’s hyperscale customers, including cloud providers and research labs, which require the highest-performance memory available. Samsung’s ability to deliver **HBM4** that meets NVIDIA’s raised performance requirements without redesigning the modules underscores its engineering strength. Meanwhile, competitors like SK Hynix and Micron may face increased pressure to match or exceed these specifications as AI workloads continue to demand faster, denser memory.

What’s next for Samsung in memory?

Beyond **HBM4**, Samsung is also advancing its **2 nm** process technology, which could further reduce power consumption and increase transistor density. The company’s Texas fabrication plant, which is now attracting major AI customers, may play a pivotal role in scaling production of next-generation chips. If these efforts succeed, Samsung could become a dominant supplier not only to NVIDIA but also to AMD, which likewise relies on **HBM4** for its upcoming AI accelerators.

One lingering question: Will Samsung’s **HBM4** modules make their way into consumer hardware?

While the immediate focus is on AI infrastructure, the technology could eventually trickle down to high-end gaming GPUs in the class of NVIDIA’s **RTX 5090** (whose price is rumored to reach **$5000**) or the rumored **RTX 5080 SUPER** variants. However, the current GeForce RTX 50 series uses GDDR7 rather than HBM, and consumer adoption will depend on cost, availability, and whether NVIDIA prioritizes enterprise-grade memory for its next-generation GPUs.

The race for **HBM4** dominance is far from over. With Samsung preparing to enter mass production, the next few months will reveal whether the company can sustain its momentum or whether rivals will close the gap in this critical segment of the AI hardware ecosystem.