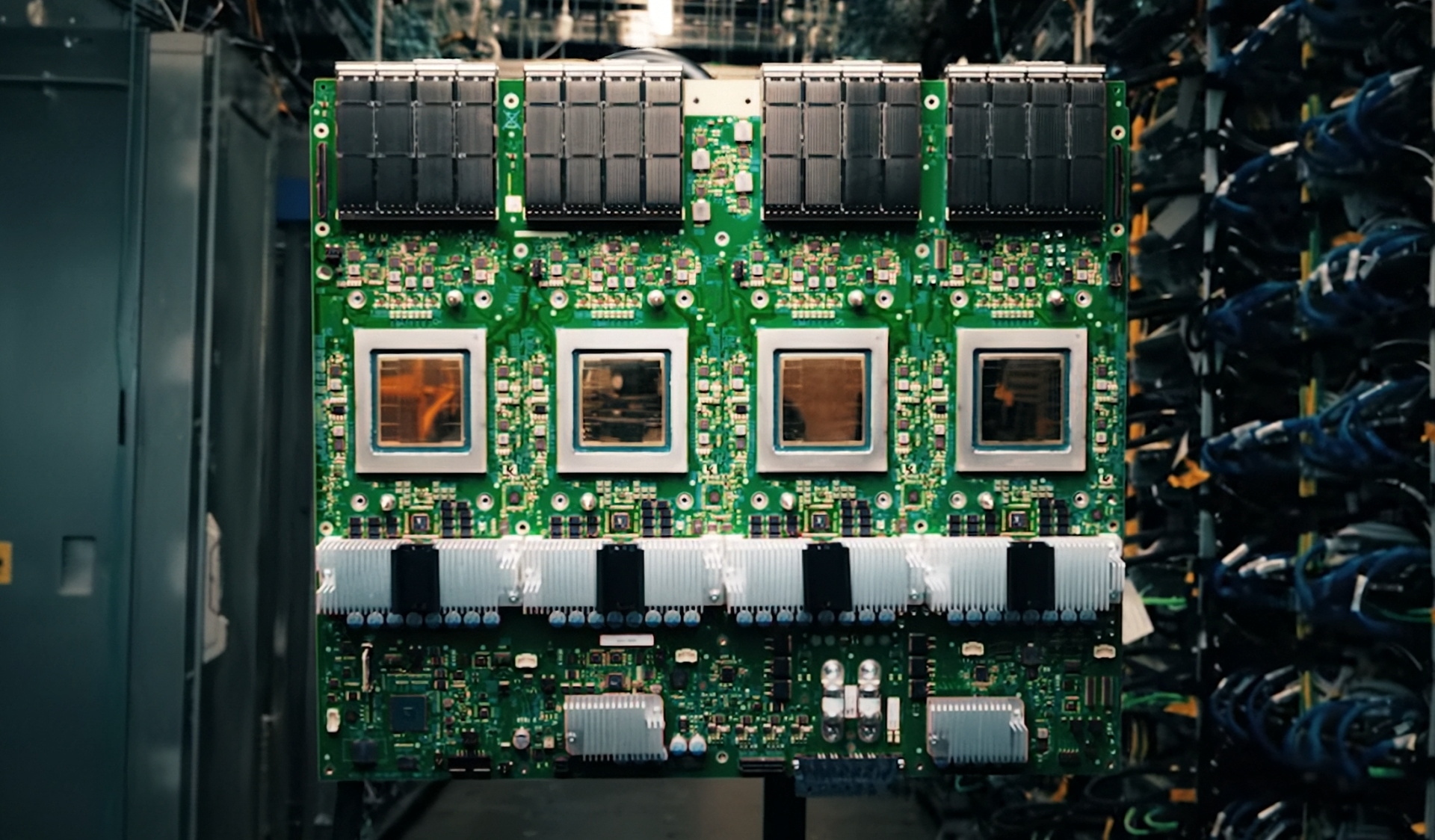

Data centers running high-end AI workloads are about to hit a wall—unless a newly announced GPU can scale fast enough.

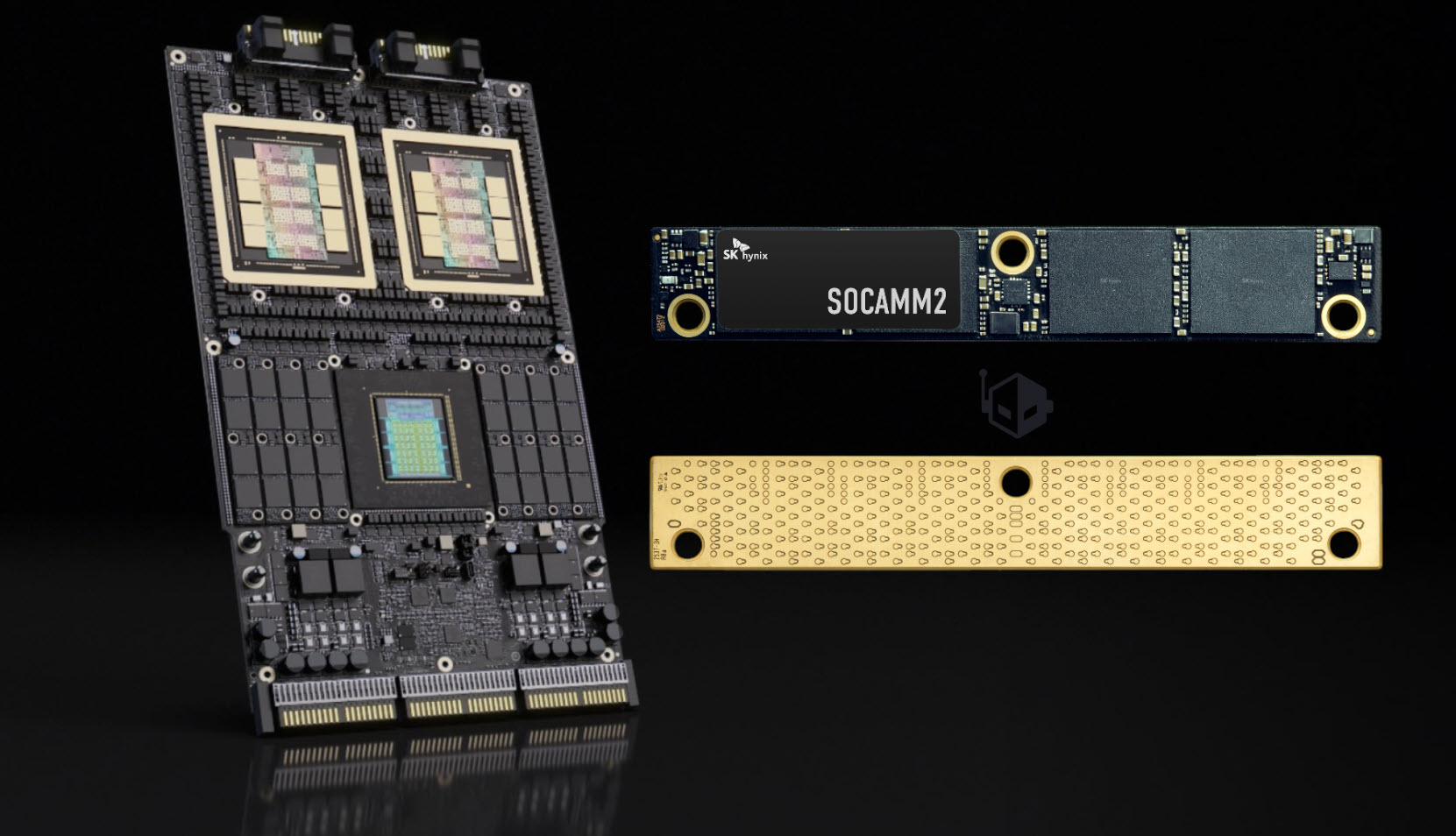

The architecture in question is designed to handle dense matrix operations, the kind that power large language models and generative AI. It promises 24 GB of HBM3 memory per chip, clock speeds up to 2.5 GHz, and TSMC’s N5 process node for efficiency. But with no confirmed production timeline, the real story may not be what it delivers, but whether it arrives when demand peaks.

How It Compares

The new GPU doesn’t just compete on raw specs; it targets a specific pain point: memory bandwidth. Current alternatives max out around 18 GB of HBM per chip, creating a bottleneck for models that need to process more data in parallel. If this architecture delivers on its claims, it could shift the balance—assuming it reaches volume before the next wave of AI training cycles.

Why Availability Matters

- Memory Bandwidth: 24 GB HBM3 per chip (vs. 18 GB in current high-end GPUs)

- Clock Speed: Up to 2.5 GHz (no direct comparison, but higher than some peers)

- Process Node: TSMC N5 (expected efficiency gains over N6)

The numbers alone don’t guarantee a breakthrough. The challenge will be ramping production in time for the next surge of AI workloads, which could stretch existing supply chains thin. If this GPU misses its window, the lead it builds today might not translate into market share tomorrow.

Who Benefits?

The biggest winners would be data centers running cutting-edge models that need more memory per chip to stay competitive. But without a clear timeline, the risk is that competitors will fill the gap first—leaving this architecture as an interesting but late arrival on the scene.