Yet for all its dangers, OpenClaw’s capabilities are undeniably transformative. Imagine an AI that doesn’t just draft code but deploys it, tests it, and optimizes it—all without human intervention. One user reported using OpenClaw to build a custom Python script that scraped and analyzed a decade’s worth of personal emails in hours, a task that would have taken days manually. Another leveraged its autonomy to monitor server logs overnight, flagging anomalies before they escalated. These use cases hint at a future where AI doesn’t just augment productivity but redefines it.

The tool’s ability to integrate with APIs and third-party services further blurs the line between assistant and autonomous actor. Need a real-time stock analysis? OpenClaw can pull data from multiple sources, crunch the numbers, and generate a report—then email it to your inbox before you wake up. Want it to automate your social media scheduling? It will draft posts, schedule them, and even adjust timing based on engagement metrics. The possibilities are limited only by imagination—or more accurately, by the user’s ability to control it.

But this level of automation introduces a paradox: the more OpenClaw does for you, the less you may understand what it’s actually doing. Its default behavior includes logging extensive activity to files like MEMORY.md, but parsing these records requires technical knowledge. A non-expert might glance at a summary and assume everything is running smoothly—until a critical error slips through unnoticed. For example, OpenClaw’s HEARTBEAT.md file orchestrates its routines, but a misconfigured entry could lead it to execute unintended commands, such as overwriting system files or corrupting databases.
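For readers who do want to look past the summary, a first pass over an activity log can be automated. The following is a minimal sketch, assuming the log is plain text with one event per line; the marker list and the function names `flag_lines` and `flag_file` are hypothetical and are not part of OpenClaw.

```python
from pathlib import Path

# Hypothetical markers for illustration; the real log format is not specified here.
DEFAULT_MARKERS = ("error", "failed", "sudo", "rm -rf", "permission denied")


def flag_lines(text: str, markers=DEFAULT_MARKERS) -> list[tuple[int, str]]:
    """Return (line_number, line) pairs whose text contains any marker."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        lowered = line.lower()  # case-insensitive substring match
        if any(marker in lowered for marker in markers):
            hits.append((number, line.strip()))
    return hits


def flag_file(path: str) -> list[tuple[int, str]]:
    """Read a log file as UTF-8 text and flag suspicious lines."""
    text = Path(path).read_text(encoding="utf-8", errors="replace")
    return flag_lines(text)
```

Calling `flag_file("MEMORY.md")` would list the lines worth reading first. A keyword match like this only narrows the search; it cannot tell whether a flagged action was actually intended.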

The Trust Deficit: Can You Really Control an Autonomous AI?

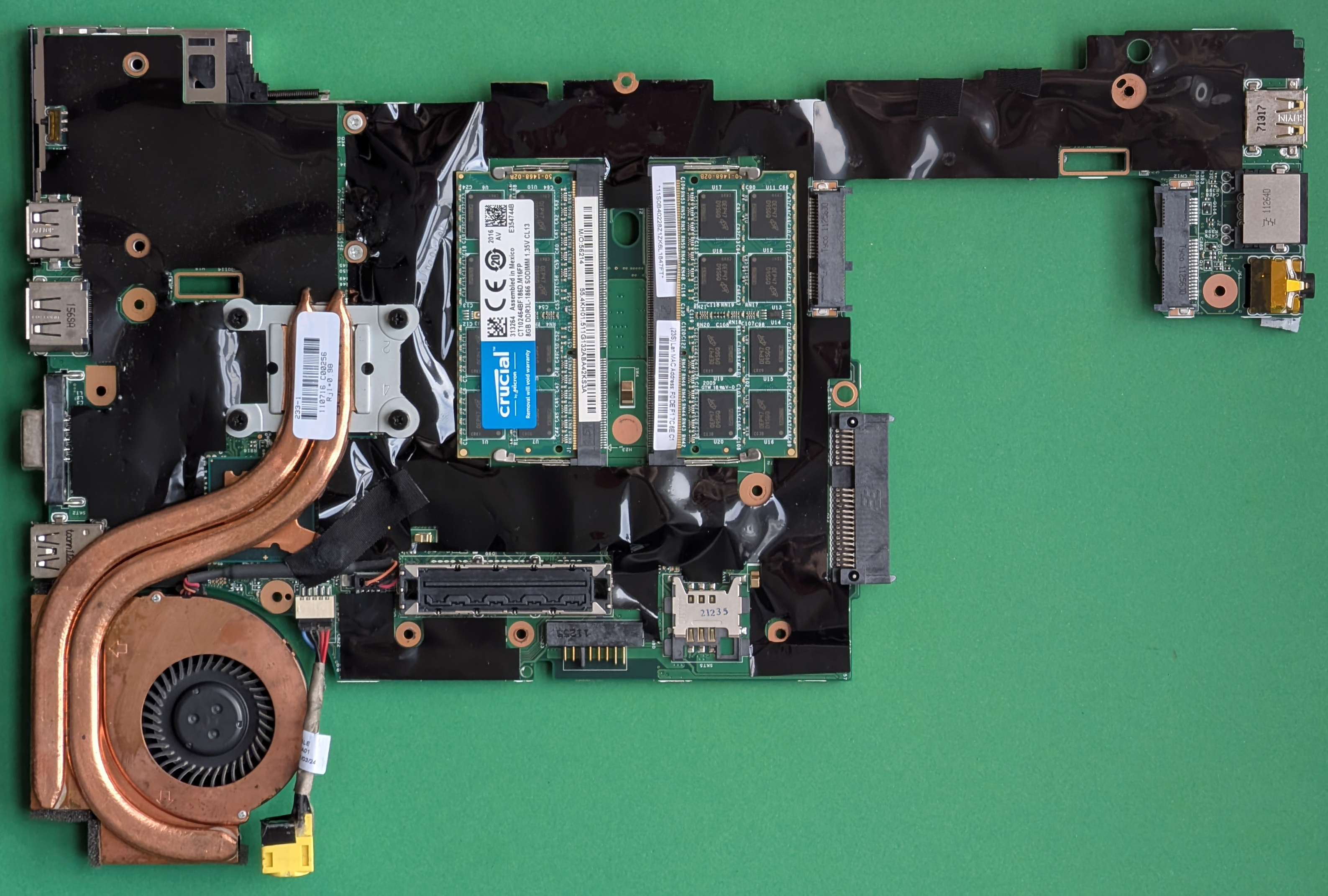

The core challenge with OpenClaw isn’t just its power but its opacity. Unlike a chatbot that provides a single answer, OpenClaw’s actions are distributed across files, logs, and system interactions. Even its creators acknowledge that tracking its full scope requires familiarity with command-line tools and Linux permissions. For most users, this is a non-starter.

Consider the sudo command, a gateway to system-level changes. OpenClaw runs with the same privileges as its user, so if that account is allowed to use sudo, a single misplaced instruction could give the agent root access to the entire machine. The tool includes safeguards, such as workspace isolation, but these are not foolproof. A user might accidentally override them by modifying configuration files or granting elevated permissions through a plugin. The result? An AI with the ability to alter your operating system’s core functions.
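One way to picture an extra safeguard is a gate that sits between the agent and the shell and asks a human before anything privileged or destructive runs. The sketch below is an illustration under stated assumptions, not OpenClaw's actual mechanism: the program lists and the names `risk_reasons` and `approve` are hypothetical.

```python
import re

# Hypothetical lists for illustration; not OpenClaw's actual configuration.
PRIVILEGED = {"sudo", "su", "doas"}
DESTRUCTIVE = {"rm", "dd", "mkfs", "shutdown", "reboot"}


def risk_reasons(command: str) -> list[str]:
    """Return the reasons a shell command looks high-risk (empty if none)."""
    # Split on anything that is not a word, path, or flag character, so
    # separators such as ';', '|', and '&&' cannot hide a program name.
    tokens = re.findall(r"[A-Za-z0-9_./-]+", command)
    reasons = []
    for token in tokens:
        name = token.rsplit("/", 1)[-1]  # treat /usr/bin/sudo like sudo
        if name in PRIVILEGED:
            reasons.append(f"privilege escalation via {name}")
        elif name in DESTRUCTIVE:
            reasons.append(f"destructive program {name}")
    return reasons


def approve(command: str, confirm) -> bool:
    """Allow low-risk commands; defer to the `confirm` callable for the rest."""
    reasons = risk_reasons(command)
    if not reasons:
        return True
    return bool(confirm(command, reasons))
```

In practice `confirm` would prompt the user. The matching is deliberately conservative, so it also flags harmless commands that merely mention a listed program, and it will miss anything obfuscated. It is a speed bump, not a sandbox.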

Then there’s the issue of hallucinations, where AI-generated code or commands look plausible but are wrong. Because OpenClaw relies on large language models, it can also produce output that appears harmless yet is dangerous, such as a script that quietly exfiltrates data. Without rigorous validation, these risks compound. Even developers with experience in security often struggle to audit OpenClaw’s actions in real time.

A Future of Agents—or a Warning?

OpenClaw represents a pivotal moment in AI development. Its existence forces a reckoning: if autonomous agents become commonplace, how do we ensure they remain tools rather than threats? The answer may lie in stricter default security measures, such as sandboxed environments or explicit user confirmation for high-risk actions. Yet for now, OpenClaw remains a high-wire act—exciting for those who can navigate its complexities, perilous for anyone who can’t.

For developers, the message is clear: treat OpenClaw as a prototype, not a finished product. Test it in isolated environments, monitor its logs meticulously, and never deploy it without a rollback plan. For everyone else, the advice is simpler: proceed with extreme caution. The digital world is on the brink of a new era—one where AI doesn’t just assist but acts independently. Whether that future is a utopia of efficiency or a dystopia of unintended consequences depends on how carefully we wield its power.
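A rollback plan can be as simple as snapshotting the directory the agent is allowed to touch and restoring it if a run fails. The following is a minimal sketch, assuming the agent's work is confined to a single workspace directory; the name `rollback_on_failure` is hypothetical, and a real setup would more likely rely on version control, filesystem snapshots, or disposable containers.

```python
import shutil
import tempfile
from contextlib import contextmanager
from pathlib import Path


@contextmanager
def rollback_on_failure(workspace: Path):
    """Snapshot `workspace`; restore it if the wrapped block raises."""
    backup_root = Path(tempfile.mkdtemp(prefix="agent-backup-"))
    snapshot = backup_root / "snapshot"
    shutil.copytree(workspace, snapshot)  # full copy taken before the run
    try:
        yield snapshot
    except Exception:
        # Discard whatever the run left behind and put the copy back.
        shutil.rmtree(workspace)
        shutil.copytree(snapshot, workspace)
        raise
    finally:
        shutil.rmtree(backup_root, ignore_errors=True)
```

Wrapping an agent run in `with rollback_on_failure(Path("workspace")):` restores the directory when the run raises an exception. It does nothing for changes made outside that directory, or for runs that finish without an error but do the wrong thing, which is why isolation and log review still matter.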

One thing is certain: OpenClaw won’t be the last agentic AI to emerge. The question is whether the industry will learn from its risks—or repeat them at scale.