NVIDIA’s new AI superchip is more than another performance bump: it aims to redefine what is possible in machine learning workloads.

At its core, the new chip packs 270 billion transistors, runs at clock speeds of up to 1.4 GHz, and delivers up to 500 teraflops of AI performance. That is more than an incremental speedup; it could reshape how developers train models, simulate complex systems, and analyze vast datasets.

But the question isn’t whether this chip is powerful—it’s whether that power translates into tangible benefits for everyday buyers. For researchers pushing the boundaries of AI, the answer may be clear-cut. For businesses or individuals looking to future-proof their setups without overhauling budgets, the calculus gets trickier.

Here’s what you need to know about NVIDIA’s latest offering: where it excels, where it stretches expectations, and whether it’s worth the investment today—or if waiting for the next iteration might be smarter.

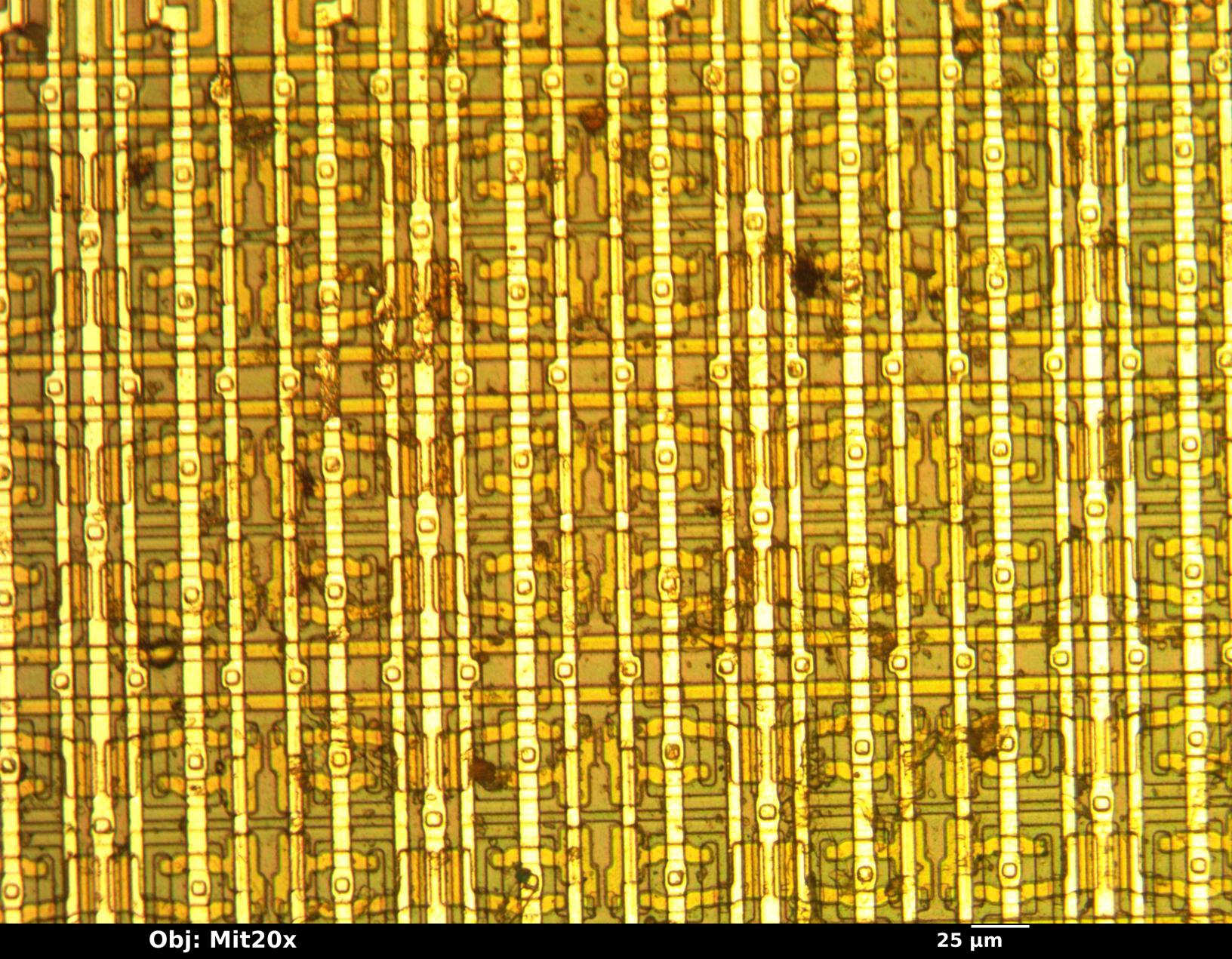

The chip itself is a serious piece of engineering. It combines 5-nanometer process technology with NVIDIA’s proprietary Tensor Cores, units designed specifically to accelerate AI workloads. That means operations like matrix multiplication, which dominate deep learning, run on hardware built for them rather than on general-purpose cores. The result? A system that can process AI training jobs up to 10 times faster than its predecessor.
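To put the matrix multiplication point in numbers, here is a rough sketch that counts the floating-point operations in a single matrix product and estimates how long it would take at the quoted 500-teraflop peak. The `utilization` parameter and the matrix sizes are assumptions for illustration; the article does not state which numeric precision the peak figure applies to, so treat the result as a best case rather than a benchmark.

```python
# Back-of-the-envelope estimate, not a benchmark.
# PEAK_FLOPS is the "up to 500 teraflops" figure quoted in the article.
PEAK_FLOPS = 500e12


def matmul_flops(m: int, k: int, n: int) -> int:
    """Operation count for an (m x k) @ (k x n) product.

    Each of the m*n outputs needs about k multiply-add pairs,
    conventionally counted as 2*k floating-point operations.
    """
    return 2 * m * k * n


def estimated_seconds(flops: float,
                      peak_flops: float = PEAK_FLOPS,
                      utilization: float = 0.5) -> float:
    """Time to run `flops` operations at a given fraction of peak.

    `utilization` is a hypothetical value: real kernels rarely
    sustain the full peak throughput.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return flops / (peak_flops * utilization)


if __name__ == "__main__":
    ops = matmul_flops(8192, 8192, 8192)
    print(f"operations: {ops:.3e}")
    print(f"estimated time: {estimated_seconds(ops) * 1e3:.2f} ms")
```

The takeaway is that the headline teraflops number only sets a ceiling; how close a given workload gets to it depends on memory bandwidth, precision, and kernel quality.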

What does that mean for buyers? If your workflow involves heavy AI training, real-time data processing, or large-scale simulations, this chip could change how you work. It’s not just about raw speed; it’s about reducing the time from idea to execution. For example, a task that once took days might now complete in hours.
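The "days to hours" claim depends on how much of a job the chip actually accelerates. A minimal sketch using Amdahl's law makes that visible: only the accelerated fraction of the runtime shrinks by the quoted 10x factor, while the rest (data loading, preprocessing, I/O) stays the same. The 72-hour baseline and the fractions below are made-up illustrative values, not measurements.

```python
def estimated_hours(baseline_hours: float,
                    speedup: float = 10.0,
                    accelerated_fraction: float = 1.0) -> float:
    """Estimate new runtime with Amdahl's law.

    baseline_hours:       runtime on the previous hardware
    speedup:              speedup of the accelerated part (article: up to 10x)
    accelerated_fraction: share of the runtime that benefits (assumed)
    """
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be in [0, 1]")
    unaffected = baseline_hours * (1.0 - accelerated_fraction)
    accelerated = baseline_hours * accelerated_fraction / speedup
    return unaffected + accelerated


if __name__ == "__main__":
    # Hypothetical three-day (72-hour) training job.
    for fraction in (1.0, 0.9, 0.8):
        hours = estimated_hours(72, accelerated_fraction=fraction)
        print(f"{fraction:.0%} accelerated -> {hours:.1f} hours")
```

If even a fifth of the job sits outside the accelerated path, the overall gain drops well below 10x, which is worth checking against your own pipeline before budgeting around the headline figure.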

But performance isn’t the only factor. Power consumption is another story. The chip draws up to 450 watts under full load—a significant jump from previous generations. That’s a critical consideration for data centers or workstations with limited power budgets. Cooling requirements also become more demanding, potentially adding complexity (and cost) to system design.
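For a sense of what 450 watts means on a power bill, the sketch below converts the full-load draw into annual energy use and cost. The electricity price and the `cooling_overhead` multiplier (a stand-in for the extra power spent on cooling) are assumptions, not figures from NVIDIA; substitute your own rates.

```python
def annual_energy_kwh(watts: float,
                      hours_per_day: float,
                      days_per_year: int = 365) -> float:
    """Energy drawn per year, in kilowatt-hours."""
    return watts / 1000.0 * hours_per_day * days_per_year


def annual_energy_cost(watts: float,
                       hours_per_day: float,
                       price_per_kwh: float,
                       cooling_overhead: float = 1.0) -> float:
    """Yearly electricity cost.

    cooling_overhead is a hypothetical multiplier (>= 1.0) covering
    the extra power needed to cool the system.
    """
    if cooling_overhead < 1.0:
        raise ValueError("cooling_overhead must be >= 1.0")
    kwh = annual_energy_kwh(watts, hours_per_day)
    return kwh * cooling_overhead * price_per_kwh


if __name__ == "__main__":
    # 450 W is the full-load figure from the article; running 24/7,
    # $0.15/kWh, and a 1.5x cooling overhead are assumed values.
    kwh = annual_energy_kwh(450, 24)
    cost = annual_energy_cost(450, 24, price_per_kwh=0.15,
                              cooling_overhead=1.5)
    print(f"{kwh:.0f} kWh per year, about ${cost:.2f} per chip")
```

Per chip the number is modest, but it scales linearly with the number of systems, so it matters most for data centers deploying many of them.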

Then there’s the question of software maturity. AI frameworks and libraries are still catching up to the full potential of this hardware. While NVIDIA has made strides in optimizing its CUDA toolkit for the chip, some developers report that certain advanced features remain experimental or require deep integration efforts. For buyers who prioritize stability over cutting-edge performance, this could be a dealbreaker.

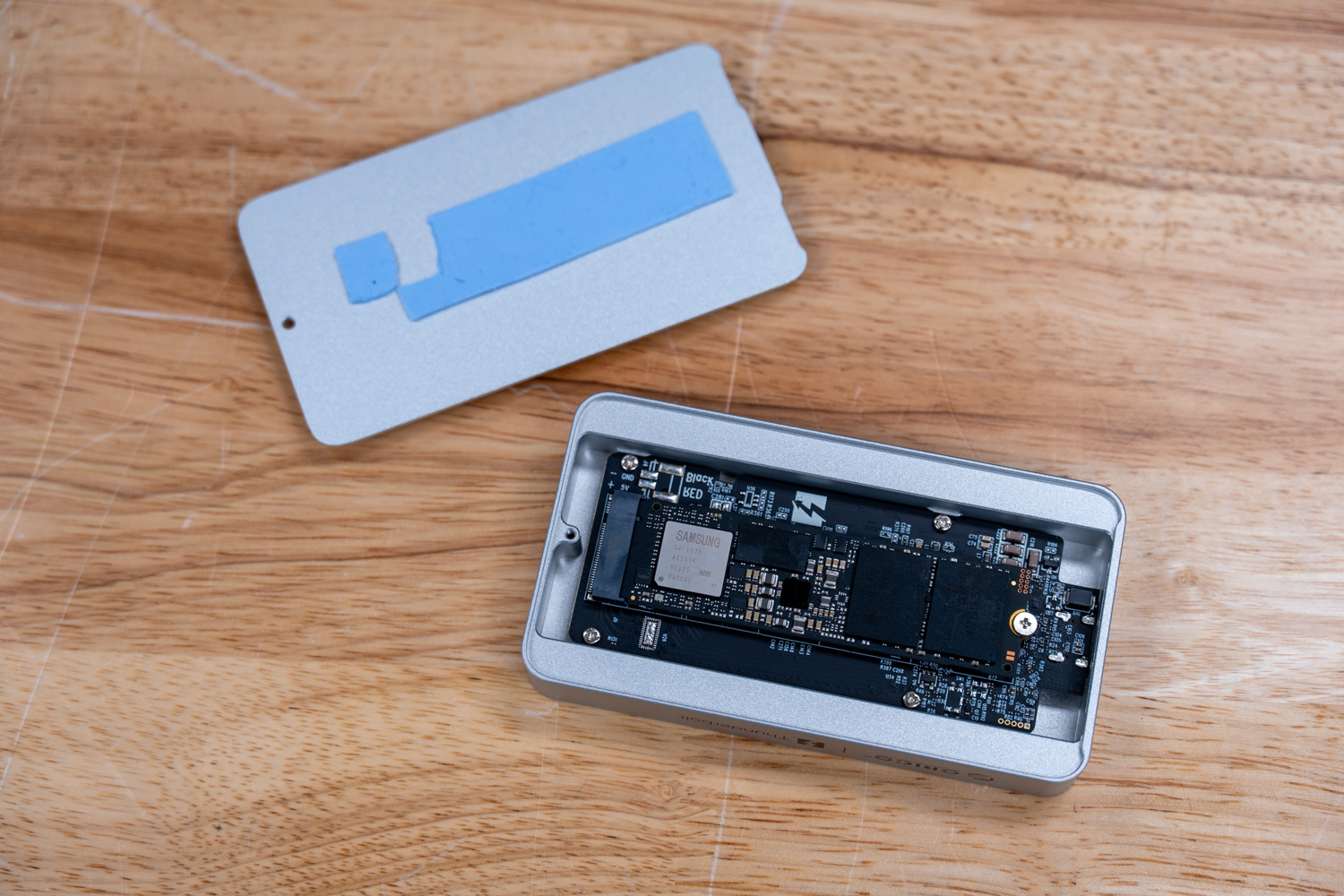

Price is another layer to consider. The chip isn’t available as a standalone component yet—it’s part of NVIDIA’s upcoming AI workstation lineup, with expected price points starting around $10,000 for a fully configured system. That puts it out of reach for most individual users or small teams but positions it as a serious contender in enterprise and research environments.

So, is this the future of AI computing? The evidence suggests yes, but with caveats. For teams already deep in AI development and comfortable with NVIDIA’s CUDA stack, the move to this chip could be relatively smooth, although features that are still experimental may take extra integration work. For others, the decision hinges on balancing immediate needs against long-term scalability. If your workloads are pushing current hardware to its limits, upgrading now might make sense. If you’re still waiting for AI tools to mature or your power budget is tight, holding off could save both money and frustration.

What’s next? NVIDIA has hinted at further optimizations in the coming months, including improved power efficiency and broader software support. Pricing details will likely evolve as more systems hit the market. For now, buyers should weigh the performance gains against their specific use cases—and decide whether they’re ready to bet on this chip’s potential or wait for the next generation.