An IT team testing inference models on the NVIDIA V100 finds itself staring at a paradox: this GPU, launched eight years ago, now sells for around $100, a price no modern consumer card can match, yet it can still outperform consumer cards in some AI inference tasks. The shift isn’t just about cost; it’s a window into platform longevity and the practical limits of today’s hardware ecosystem.

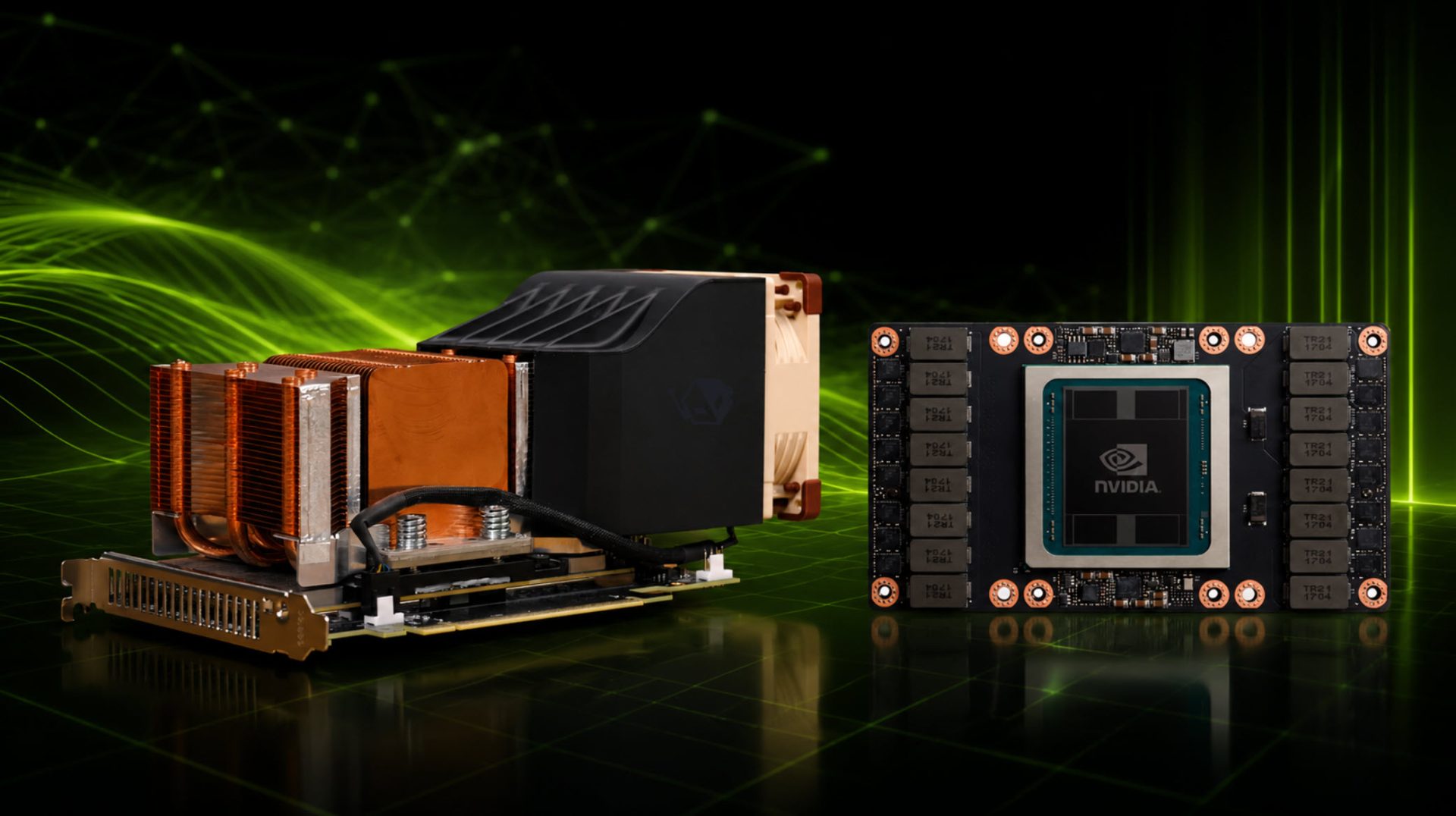

The V100, originally designed for data centers with a starting price around $3,500, has been quietly re-emerging on secondary markets. Its Tensor Cores, once cutting-edge accelerators for deep learning, are now being repurposed in smaller form factors. That’s the upside—here’s the catch: compatibility isn’t guaranteed.

Modern AI frameworks assume newer architectures like Ampere or Ada Lovelace as baselines. The V100 (compute capability 7.0) lacks hardware support for features introduced after 2017, such as BF16 and TF32 Tensor Core math, forcing IT teams to patch drivers or rewrite workloads. Yet some benchmarks reportedly show it still beating consumer cards in LLM inference, sometimes by a factor of three or more on single-stream tasks. The tradeoff is clear: legacy hardware can be a performance bargain if the software stack plays along.
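To make the compatibility question concrete, here is a minimal sketch of how a team might decide which numeric format to run on a given GPU. It is an illustration, not any framework's actual logic: the `MIN_CAPABILITY` table and the helper names `supported_dtypes` and `pick_dtype` are hypothetical, and the thresholds are assumptions based on NVIDIA's published architecture generations that should be checked against the CUDA documentation.

```python
# Minimum compute capability (major, minor) assumed for each Tensor Core
# data type. Hypothetical lookup table; verify against NVIDIA's CUDA docs.
MIN_CAPABILITY = {
    "fp16": (7, 0),  # Volta (the V100's generation)
    "int8": (7, 2),  # Xavier / Turing
    "bf16": (8, 0),  # Ampere
    "tf32": (8, 0),  # Ampere
    "fp8": (8, 9),   # Ada Lovelace / Hopper
}

V100_CAPABILITY = (7, 0)


def supported_dtypes(capability):
    """Return the sorted data types whose minimum capability is met."""
    return sorted(
        name for name, needed in MIN_CAPABILITY.items() if capability >= needed
    )


def pick_dtype(capability, preferred=("bf16", "fp16")):
    """Pick the first preferred dtype the GPU supports, else fall back to fp32."""
    available = supported_dtypes(capability)
    for name in preferred:
        if name in available:
            return name
    return "fp32"


if __name__ == "__main__":
    print("V100 Tensor Core dtypes:", supported_dtypes(V100_CAPABILITY))
    print("Chosen dtype on V100:", pick_dtype(V100_CAPABILITY))
```

On a machine with PyTorch installed, `torch.cuda.get_device_capability()` returns the same kind of `(major, minor)` tuple, so it could feed `pick_dtype` directly. A workload written for BF16 would fall back to FP16 on a V100 under this scheme, which is the kind of rewrite the paragraph above describes.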

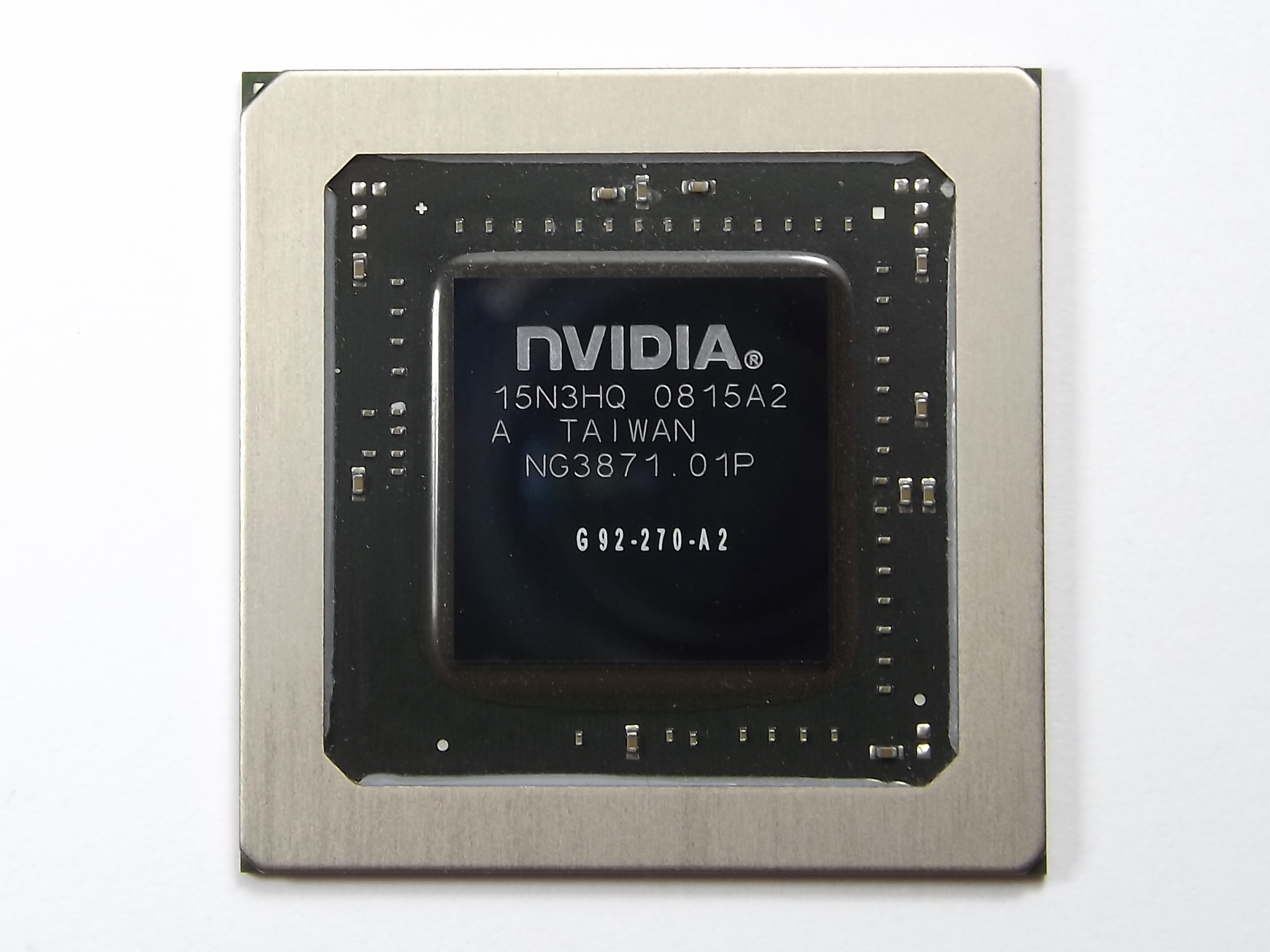

- Chip: Volta GV100

- Cores: 5,120 CUDA cores (arranged in 80 SMs)

- Tensor Cores: 640, 1st-gen (FP16 with FP32 accumulation; no TF32 or BF16)

- Memory: 16 GB HBM2 (900 GB/s bandwidth)

- Power: 250 W TDP

- Bus Interface: PCIe 3.0 x16

- Price (secondary market): ~$90–$120 per unit

The HBM2 memory is a standout: its bandwidth remains in the same range as high-end consumer cards, even in 2025, although current data center GPUs with newer HBM generations are well ahead. But pairing it with today’s software requires careful configuration. Frameworks like PyTorch or TensorFlow may need older releases that still ship Volta support, and some libraries assume newer GPU features that the V100 can’t provide. That said, for IT teams running older models or constrained by budget, the V100 offers a rare balance: high performance without the power draw of modern flagship GPUs.
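Why memory bandwidth matters so much for LLM inference can be shown with a back-of-the-envelope estimate. When generating one token at a time, every model weight has to be read from memory once per token, so bandwidth divided by model size gives a rough upper bound on tokens per second. The sketch below assumes that simplified model; the function names, the 1.5 GB reserve for cache and activations, and the 7B-parameter FP16 example are illustrative assumptions, and real throughput will be lower.

```python
def model_size_gb(params_billions, bytes_per_param):
    """Approximate weight footprint in GB (1e9 params * bytes / 1e9)."""
    return params_billions * bytes_per_param


def max_decode_tokens_per_s(bandwidth_gb_s, weights_gb):
    """Rough upper bound: each generated token reads all weights once."""
    return bandwidth_gb_s / weights_gb


def fits_in_memory(weights_gb, vram_gb, reserve_gb=1.5):
    """Check that weights plus an assumed reserve fit in GPU memory."""
    return weights_gb + reserve_gb <= vram_gb


if __name__ == "__main__":
    # Assumed inputs: roughly 900 GB/s is the bandwidth NVIDIA publishes
    # for the V100; 7B parameters at 2 bytes each is a common FP16 setup.
    weights = model_size_gb(params_billions=7, bytes_per_param=2)
    ceiling = max_decode_tokens_per_s(bandwidth_gb_s=900, weights_gb=weights)
    print(f"Weights: {weights:.1f} GB, fits in 16 GB: {fits_in_memory(weights, 16)}")
    print(f"Bandwidth-bound ceiling: about {ceiling:.0f} tokens/s")
```

The estimate ignores compute limits, KV-cache traffic, and kernel overheads, so it is a ceiling rather than a prediction. It does suggest why an old card with fast memory can stay competitive on single-stream inference, and why the 16 GB capacity, not raw speed, is often the first limit a team hits.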

The real question isn’t whether it’s fast—it’s whether supply will last. NVIDIA has no official roadmap for continued production, and secondary sources are limited to bulk orders from data center liquidation channels. If demand surges, availability could vanish as quickly as it appeared. For now, the V100 remains a reminder that hardware lifecycles aren’t just about new releases; sometimes, the best tools are the ones that outlast their time.